Drone-based RGB-Infrared Cross-Modality Vehicle Detection via Uncertainty-Aware Learning

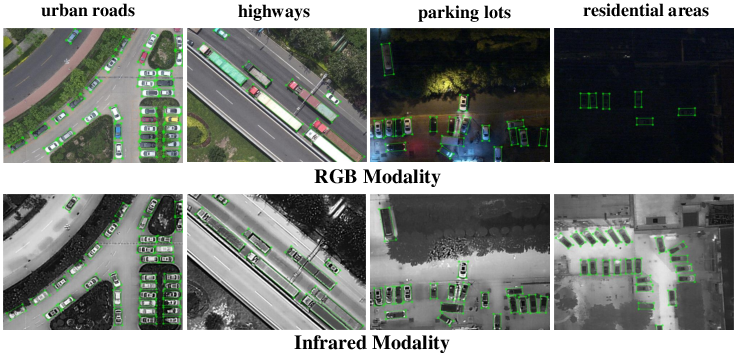

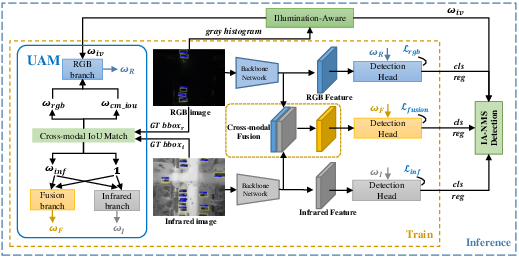

Abstract: Drone-based vehicle detection aims to find the locations and categories of vehicles in an aerial image. It empowers smart city traffic management and disaster rescue. Researchers have devoted considerable effort to this area and achieved substantial progress. Nevertheless, detection remains challenging when objects are hard to distinguish, especially in low light conditions. To tackle this problem, we construct a large-scale drone-based RGB-Infrared vehicle detection dataset, termed DroneVehicle. Our DroneVehicle collects 28,439 RGB-Infrared image pairs, covering urban roads, residential areas, parking lots, and other scenarios from day to night. Due to the large gap between RGB and infrared images, cross-modal images provide both effective information and redundant information. To address this dilemma, we further propose an uncertainty-aware cross-modality vehicle detection (UA-CMDet) framework to extract complementary information from cross-modal images, which can significantly improve the detection performance in low light conditions. An uncertainty-aware module (UAM) is designed to quantify the uncertainty weights of each modality, which are calculated from the cross-modal Intersection over Union (IoU) and the RGB illumination value. Furthermore, we design an illumination-aware cross-modal non-maximum suppression algorithm to better integrate the modal-specific information in the inference phase. Extensive experiments on the DroneVehicle dataset demonstrate the flexibility and effectiveness of the proposed method for cross-modality vehicle detection. The dataset can be downloaded from https://github.com/VisDrone/DroneVehicle.
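To make the UAM description more concrete, here is a minimal Python sketch under stated assumptions. It computes the cross-modal IoU of two oriented boxes given as `(cx, cy, w, h, theta)` by clipping one rectangle against the other (Sutherland-Hodgman clipping), then turns that IoU and an illumination value in [0, 1] into per-object weights that scale a training loss. The weighting rule in `uncertainty_weights` (infrared weight fixed at 1, RGB weight equal to IoU times illumination, RGB weight 0 when the object has no RGB box) and every function name here are hypothetical; the abstract does not give the exact formula.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]
OBB = Tuple[float, float, float, float, float]  # (cx, cy, w, h, theta in radians)


def obb_corners(box: OBB) -> List[Point]:
    """Return the four corners of an oriented box in counterclockwise order."""
    cx, cy, w, h, theta = box
    c, s = math.cos(theta), math.sin(theta)
    local = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    # Rotate each local offset by theta, then translate it to the box center.
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in local]


def polygon_area(poly: List[Point]) -> float:
    """Absolute polygon area via the shoelace formula."""
    total = 0.0
    for i, (x1, y1) in enumerate(poly):
        x2, y2 = poly[(i + 1) % len(poly)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def _side(a: Point, b: Point, p: Point) -> float:
    """Cross product (b - a) x (p - a); >= 0 means p lies left of or on edge a->b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def _crossing(p: Point, q: Point, a: Point, b: Point) -> Point:
    """Point where segment p->q crosses the line through a and b."""
    sp, sq = _side(a, b, p), _side(a, b, q)
    t = sp / (sp - sq)
    return (p[0] + t * (q[0] - p[0]), p[1] + t * (q[1] - p[1]))


def clip_convex(subject: List[Point], clipper: List[Point]) -> List[Point]:
    """Sutherland-Hodgman clipping of `subject` by a convex, counterclockwise `clipper`."""
    output = subject
    for i in range(len(clipper)):
        a, b = clipper[i], clipper[(i + 1) % len(clipper)]
        inp, output = output, []
        for j in range(len(inp)):
            p, q = inp[j - 1], inp[j]  # edge from the previous vertex to the current one
            p_in, q_in = _side(a, b, p) >= 0, _side(a, b, q) >= 0
            if q_in:
                if not p_in:
                    output.append(_crossing(p, q, a, b))
                output.append(q)
            elif p_in:
                output.append(_crossing(p, q, a, b))
    return output


def rotated_iou(box_a: OBB, box_b: OBB) -> float:
    """Intersection over Union of two oriented boxes."""
    pa, pb = obb_corners(box_a), obb_corners(box_b)
    overlap = clip_convex(pa, pb)
    inter = polygon_area(overlap) if len(overlap) >= 3 else 0.0
    union = polygon_area(pa) + polygon_area(pb) - inter
    return inter / union if union > 0 else 0.0


def uncertainty_weights(rgb_box: Optional[OBB], ir_box: OBB,
                        illumination: float) -> Tuple[float, float]:
    """HYPOTHETICAL weighting rule returning (w_rgb, w_ir), each in [0, 1].

    Assumes the infrared label is the reference (w_ir = 1) and trusts the RGB
    label in proportion to cross-modal IoU times illumination.
    """
    if rgb_box is None:  # object annotated only in infrared, e.g. too dark for RGB
        return 0.0, 1.0
    illumination = min(max(illumination, 0.0), 1.0)
    return rotated_iou(rgb_box, ir_box) * illumination, 1.0


def weighted_loss(losses: List[float], weights: List[float]) -> float:
    """Weighted mean of per-object losses: low-weight (uncertain) boxes count less."""
    total_w = sum(weights)
    return sum(l * w for l, w in zip(losses, weights)) / total_w if total_w > 0 else 0.0


if __name__ == "__main__":
    car_ir = (50.0, 40.0, 20.0, 10.0, math.radians(30))
    car_rgb = (52.0, 41.0, 20.0, 10.0, math.radians(32))  # slightly shifted, as if misaligned
    print(uncertainty_weights(car_rgb, car_ir, illumination=0.2))
```

Multiplying IoU by illumination is one simple way to let both signals matter: a well-aligned RGB box in daylight keeps nearly full weight, while the same box at night, or a box that disagrees with its infrared counterpart, is down-weighted.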

Explain it Like I'm 14

Overview

This paper is about teaching drones to spot and label vehicles in pictures taken from the sky, both during the day and at night. The key idea is to use two kinds of images together:

- RGB images (like normal color photos)

- Infrared images (which show heat and work well in the dark)

The authors build a big new dataset and a smart method that knows when to trust one image type more than the other. This helps the drone find cars more accurately in tough situations, like low light or busy backgrounds.

Objectives and Questions

The researchers set out to answer a few simple questions:

- Can combining color photos (RGB) with infrared images help find vehicles better, especially at night?

- How do we decide which image type to trust more for each scene or each detected object?

- How can we handle the fact that the RGB and infrared cameras don’t perfectly line up, causing small position differences?

- Can we build a large, real-world dataset to train and test these ideas?

Methods and Approach

Think of the approach like a team effort with a smart coach:

- The “day teammate” is the RGB camera: great in good light, but struggles in the dark.

- The “night teammate” is the infrared camera: sees heat, so it’s strong at night, but sometimes mistakes warm objects or patterns for vehicles during the day.

- The “coach” is an uncertainty-aware system that decides, for each object and each scene, which teammate to trust more.

Here’s how it works in everyday terms:

- Building the dataset (DroneVehicle):

  - 28,439 pairs of matching RGB and infrared images (56,878 images total).

  - 953,087 labeled vehicles across five types: car, truck, bus, van, and freight car.

  - Collected over many places (roads, residential areas, parking lots) and times (day, night, very dark night).

  - Uses rotated rectangles (oriented bounding boxes) so each box fits a vehicle parked or driving at an angle, instead of only upright boxes.

- Measuring “uncertainty” with simple signals:

  - Overlap check (IoU, Intersection over Union): Imagine drawing a box around a car in the RGB image and another box around the same car in the infrared image. IoU is the area the two boxes share divided by the total area they cover together, so it ranges from 0 (no overlap) to 1 (identical boxes). A high score means the two images agree on where the car is; a low score means they disagree, for example because the cameras are slightly misaligned.

  - Brightness check: The average brightness of the RGB image tells whether it’s daytime, night, or very dark. If it’s dark, the system gives less weight to RGB and more to infrared.

- Three-branch detector (UA-CMDet):

  - RGB branch: learns to detect vehicles from RGB images.

  - Infrared branch: learns from infrared images.

  - Fusion branch: combines features from both to make a joint prediction.

  - A small “uncertainty-aware module” gives each branch a weight (how much to trust it) based on overlap and brightness. During training, boxes that are uncertain contribute less to the learning, so the model doesn’t get confused.

- Smarter final decision (Illumination-Aware NMS):

  - Detectors often produce multiple overlapping boxes for the same car. Non-Maximum Suppression (NMS) keeps the highest-scoring box and removes the duplicates that overlap it too much.

  - The paper’s version, IA-NMS, lowers the influence of the RGB branch when it’s dark, so nighttime mistakes from the RGB camera don’t mess up the final result.
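The idea behind IA-NMS can be sketched in a few lines of Python. This is a hypothetical illustration, not the paper’s algorithm: it assumes the illumination value is the mean BT.601 luma (0.299 R + 0.587 G + 0.114 B) of the RGB frame scaled to [0, 1], multiplies RGB-branch scores by that value, and then runs ordinary greedy NMS over the pooled RGB, infrared, and fusion detections. For brevity it uses axis-aligned boxes `(x1, y1, x2, y2)`, whereas DroneVehicle annotates oriented boxes; the names `mean_brightness`, `box_iou`, and `illumination_aware_nms` are made up for this example.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # axis-aligned (x1, y1, x2, y2): a simplification
Detection = Tuple[Box, float, str]       # (box, score, branch), branch in {"rgb", "ir", "fusion"}


def mean_brightness(rgb_pixels: Sequence[Sequence[Tuple[int, int, int]]]) -> float:
    """Mean BT.601 luma of an 8-bit RGB frame, scaled to [0, 1] (assumed illumination measure)."""
    lumas = [0.299 * r + 0.587 * g + 0.114 * b for row in rgb_pixels for r, g, b in row]
    return sum(lumas) / (255.0 * len(lumas)) if lumas else 0.0


def box_iou(a: Box, b: Box) -> float:
    """IoU of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def illumination_aware_nms(detections: List[Detection], illumination: float,
                           iou_thresh: float = 0.5) -> List[Detection]:
    """HYPOTHETICAL IA-NMS: damp RGB scores in the dark, then greedy NMS over all branches."""
    illumination = min(max(illumination, 0.0), 1.0)
    rescored = [(box, score * illumination if branch == "rgb" else score, branch)
                for box, score, branch in detections]
    rescored.sort(key=lambda d: d[1], reverse=True)  # highest score first
    kept: List[Detection] = []
    for det in rescored:
        # Keep a box only if its IoU with every already-kept box is below the threshold.
        if all(box_iou(det[0], k[0]) < iou_thresh for k in kept):
            kept.append(det)
    return kept


if __name__ == "__main__":
    night_frame = [[(20, 20, 30)] * 4] * 3  # a tiny, very dark RGB frame
    dets = [((0, 0, 10, 10), 0.9, "rgb"), ((1, 0, 11, 10), 0.6, "ir")]
    print(illumination_aware_nms(dets, mean_brightness(night_frame)))
```

Rescaling RGB scores instead of discarding RGB detections keeps them available at dusk, when the RGB image is still partly useful; this particular multiplicative rule is an assumption chosen for illustration.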

Main Findings and Why They Matter

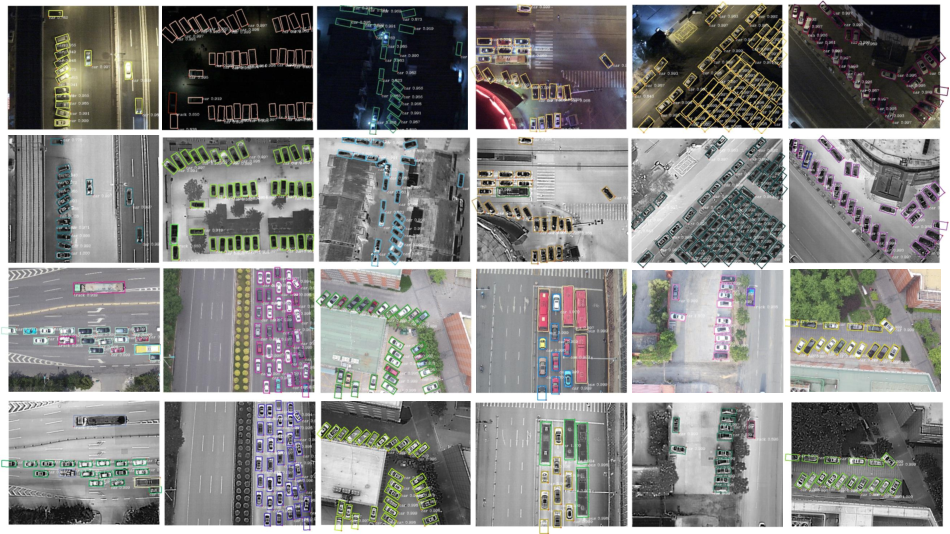

Here are the most important takeaways from the experiments on the new dataset:

- Combining RGB and infrared beats using either one alone, especially in low-light scenes.

- The uncertainty-aware “coach” helps the system know when to trust which camera more, reducing mistakes like:

  - Missing vehicles at night in RGB images.

  - False “ghost” vehicles in infrared images caused by heat reflections or lookalike shapes.

- The new dataset is large and diverse, covering day, night, and dark night, multiple flight heights and viewing angles, and five vehicle types, which helps models trained on it hold up better in real-world drone scenarios.

- The full system (UA-CMDet) improves accuracy over standard baselines and over simple fusion methods without uncertainty handling. In short: smarter fusion and trust weighting lead to better results.

Implications and Impact

This research can help cities and emergency teams:

- Monitor traffic more reliably, day and night.

- Improve safety by accurately detecting vehicles in low visibility (e.g., dark roads, power outages).

- Support disaster response by finding vehicles quickly in difficult conditions.

Beyond vehicles, the idea of “uncertainty-aware” fusion is useful anywhere you combine different kinds of sensors (like cameras, thermal cameras, radar, etc.). The dataset is publicly available, so other researchers can build on this work to make aerial detection smarter and more dependable.
