- The paper shows that beam search implicitly enforces uniform information density (UID), leading to high-quality text output.

- It details how imposing UID-inspired regularizers improves BLEU scores across differing beam widths in neural translation.

- The study bridges cognitive theories and NLP, suggesting that aligning decoding with UID principles enhances text generation.

Decoding Neural Language Generators: The Role of Beam Search

Introduction

The paper "If Beam Search is the Answer, What was the Question?" (2010.02650) examines why beam search is so effective in neural language generation. Despite its high rate of search errors, beam search tends to produce higher-quality output than exact search, which finds the sequence the model actually scores highest. This discrepancy points to a gap between the maximum a posteriori (MAP) objective and the properties that make text desirable. The authors propose that beam search implicitly enforces uniform information density (UID), a concept rooted in cognitive science, and therefore produces text that is better aligned with human language processing.

Beam Search and Maximum a Posteriori Objectives

Beam search has been a fundamental technique in NLP since the 1970s. It is computationally efficient because, at each step, it keeps only a fixed-size set (the beam) of the most promising partial hypotheses. Neural probabilistic text generators are typically decoded under a MAP objective, which seeks the hypothesis with the highest log-probability under the model. However, empirical results suggest that beam search, despite its search errors, often yields better outputs than exact MAP decoding, which can return inadequate outputs such as the empty string.
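The procedure described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `next_log_probs` callback, the `toy_model` distribution, and the `EOS` marker are all hypothetical names introduced here.

```python
import math

EOS = "</s>"  # hypothetical end-of-sequence marker


def beam_search(next_log_probs, beam_width, max_len):
    """Return the best (tokens, log_prob) pair found with a fixed-size beam."""
    beam = [((), 0.0)]  # each entry: (token tuple, cumulative log-probability)
    for _ in range(max_len):
        candidates = []
        for prefix, score in beam:
            if prefix and prefix[-1] == EOS:
                candidates.append((prefix, score))  # finished hypotheses carry over
                continue
            for token, lp in next_log_probs(prefix).items():
                candidates.append((prefix + (token,), score + lp))
        # Keep only the beam_width most probable hypotheses.
        beam = sorted(candidates, key=lambda c: c[1], reverse=True)[:beam_width]
        if all(p and p[-1] == EOS for p, _ in beam):
            break
    return beam[0]


def toy_model(prefix):
    """Hypothetical two-step model, used only for illustration."""
    if not prefix:
        return {"a": math.log(0.6), "b": math.log(0.4)}
    if prefix == ("a",):
        return {"x": math.log(0.5), "y": math.log(0.5)}
    if prefix == ("b",):
        return {"x": math.log(0.9), "y": math.log(0.1)}
    return {EOS: 0.0}


greedy = beam_search(toy_model, beam_width=1, max_len=4)
wider = beam_search(toy_model, beam_width=2, max_len=4)
```

In this toy setting, a beam of width 1 commits to `a` and ends with a hypothesis of probability 0.30, while a beam of width 2 recovers the more probable hypothesis starting with `b` (probability 0.36). The first case is an instance of the search errors discussed above.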

To explain this discrepancy, the paper revisits the decoding objective of beam search, treating it not as a mere approximation of MAP decoding but as a decoding strategy intrinsically aligned with the UID hypothesis. In essence, beam search corresponds to exact search under a regularized objective whose regularizer promotes UID.
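In symbols, this reframing can be sketched as below. The notation is an assumption chosen for illustration: $u_t(y) = -\log p_\theta(y \mid x, y_{<t})$ denotes the surprisal of token $y$ at step $t$, $\lambda \ge 0$ is a regularization strength, $\mathcal{V}$ is the vocabulary, and $\mathcal{R}$ is a regularizer over whole hypotheses.

```latex
% Regularized decoding objective (sketch)
y^\star = \operatorname*{argmax}_{y \in \mathcal{Y}}
          \; \log p_\theta(y \mid x) - \lambda \, \mathcal{R}(y),
\qquad
\log p_\theta(y \mid x) = -\sum_{t=1}^{|y|} u_t(y_t)

% One regularizer of this form penalizes choosing anything other than
% the locally least surprising token at each step:
\mathcal{R}_{\mathrm{greedy}}(y) =
  \sum_{t=1}^{|y|} \Bigl( u_t(y_t) - \min_{y' \in \mathcal{V}} u_t(y') \Bigr)^{2}
```

With $\lambda = 0$ this is plain MAP decoding. As $\lambda$ grows, any deviation from the locally best token becomes prohibitively costly, so the maximizer approaches the greedy (beam width 1) solution.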

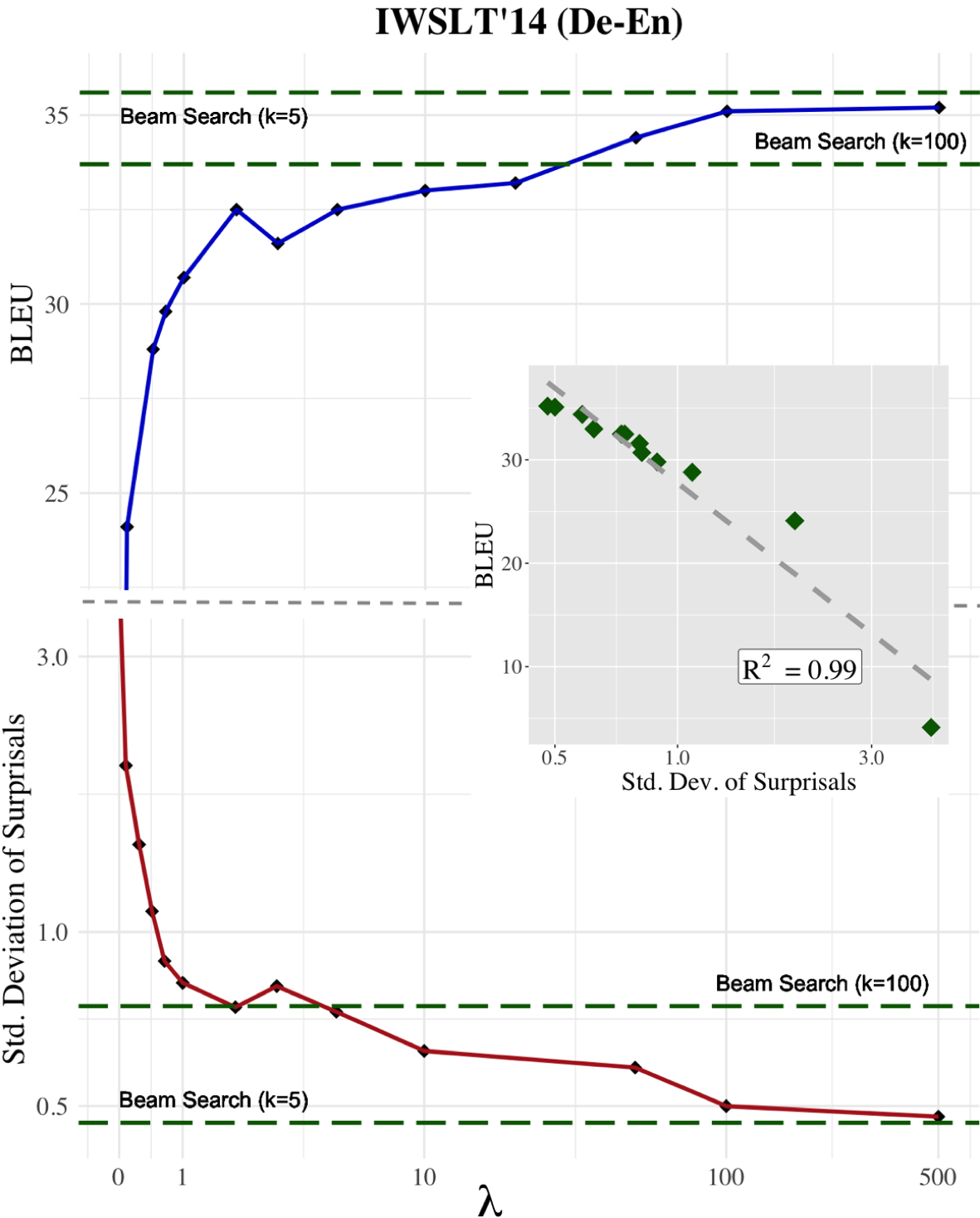

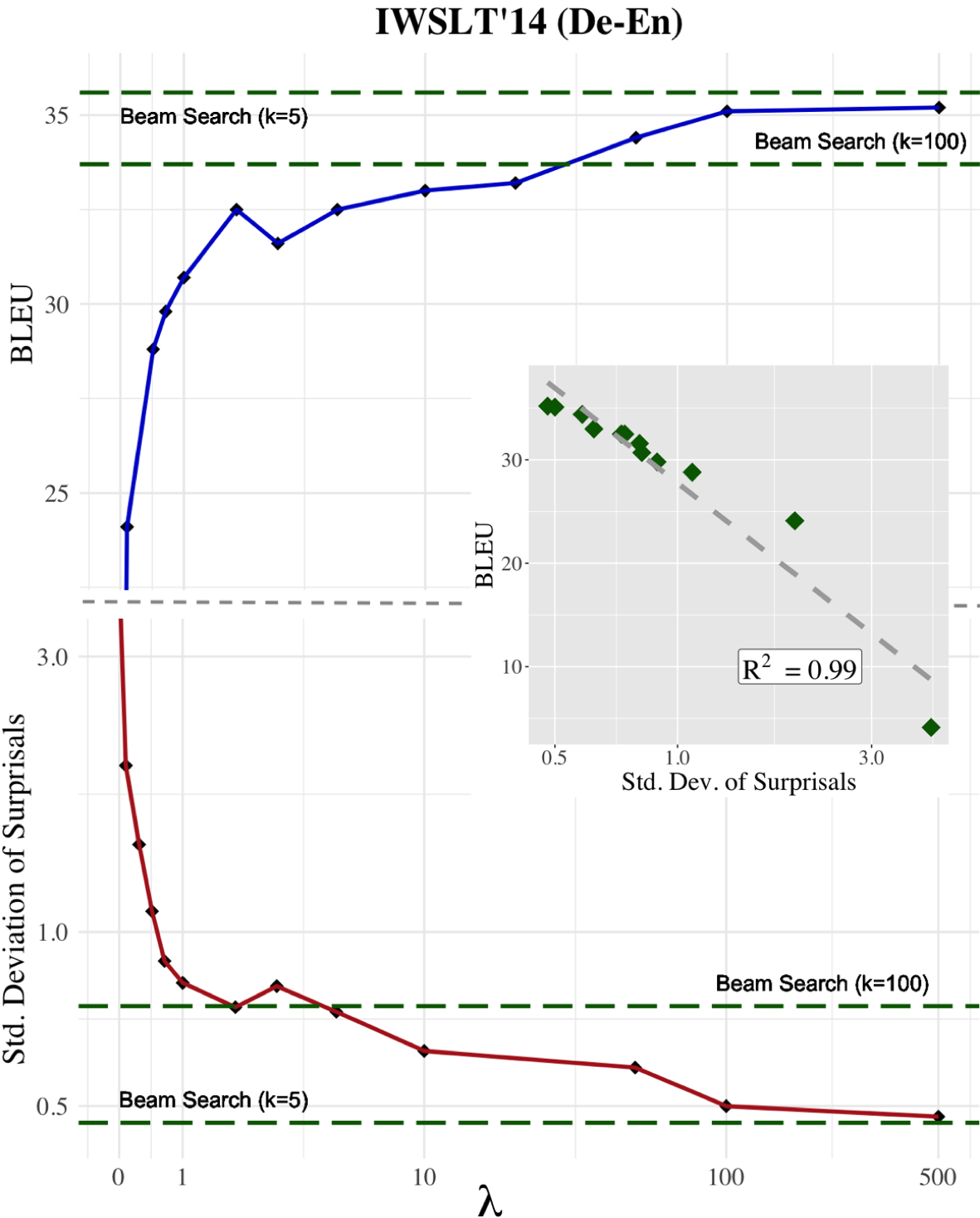

Figure 1: Distribution of surprisals and corpus BLEU for MAP objective translations with a UID-enforcing regularizer.

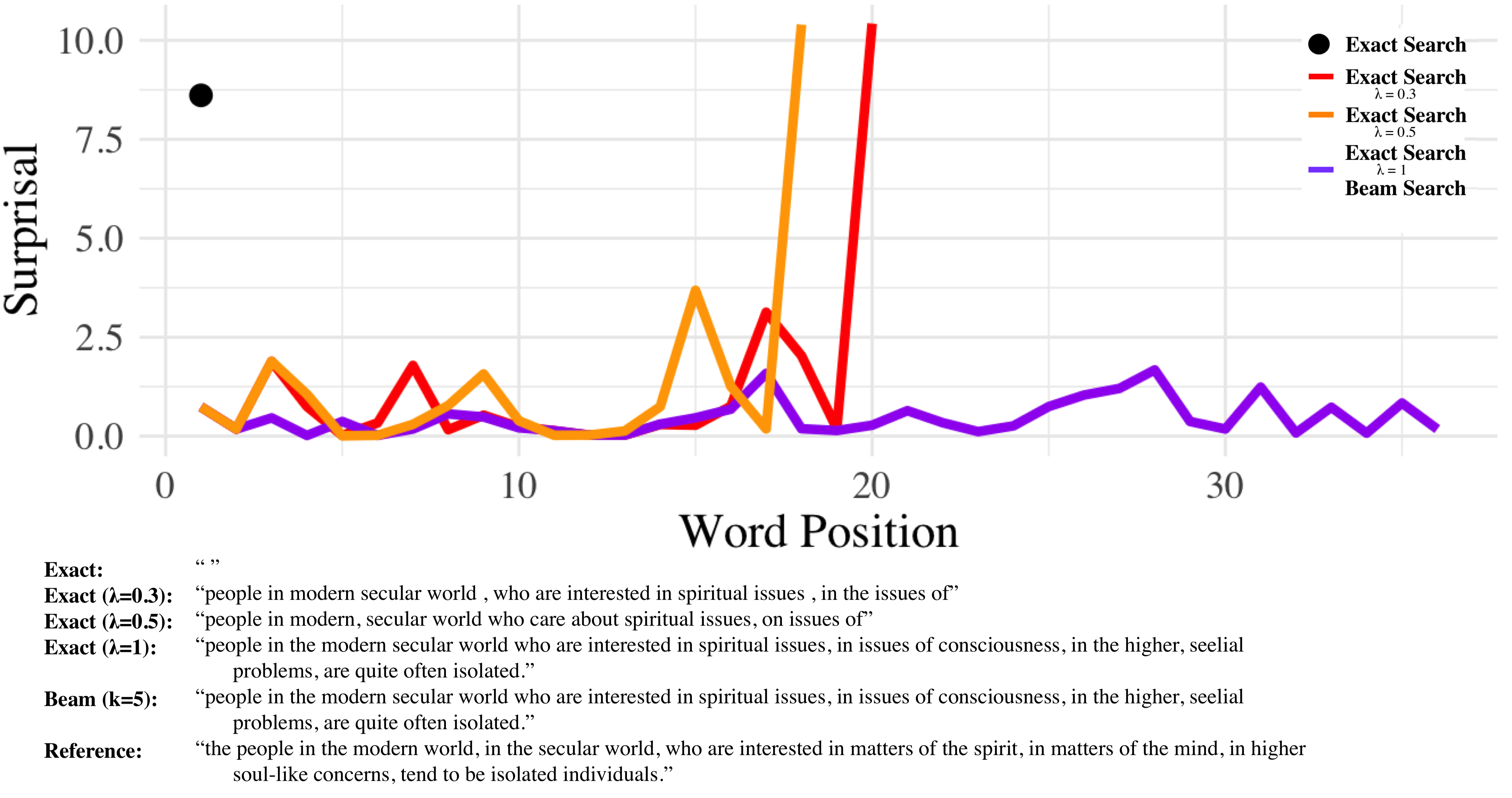

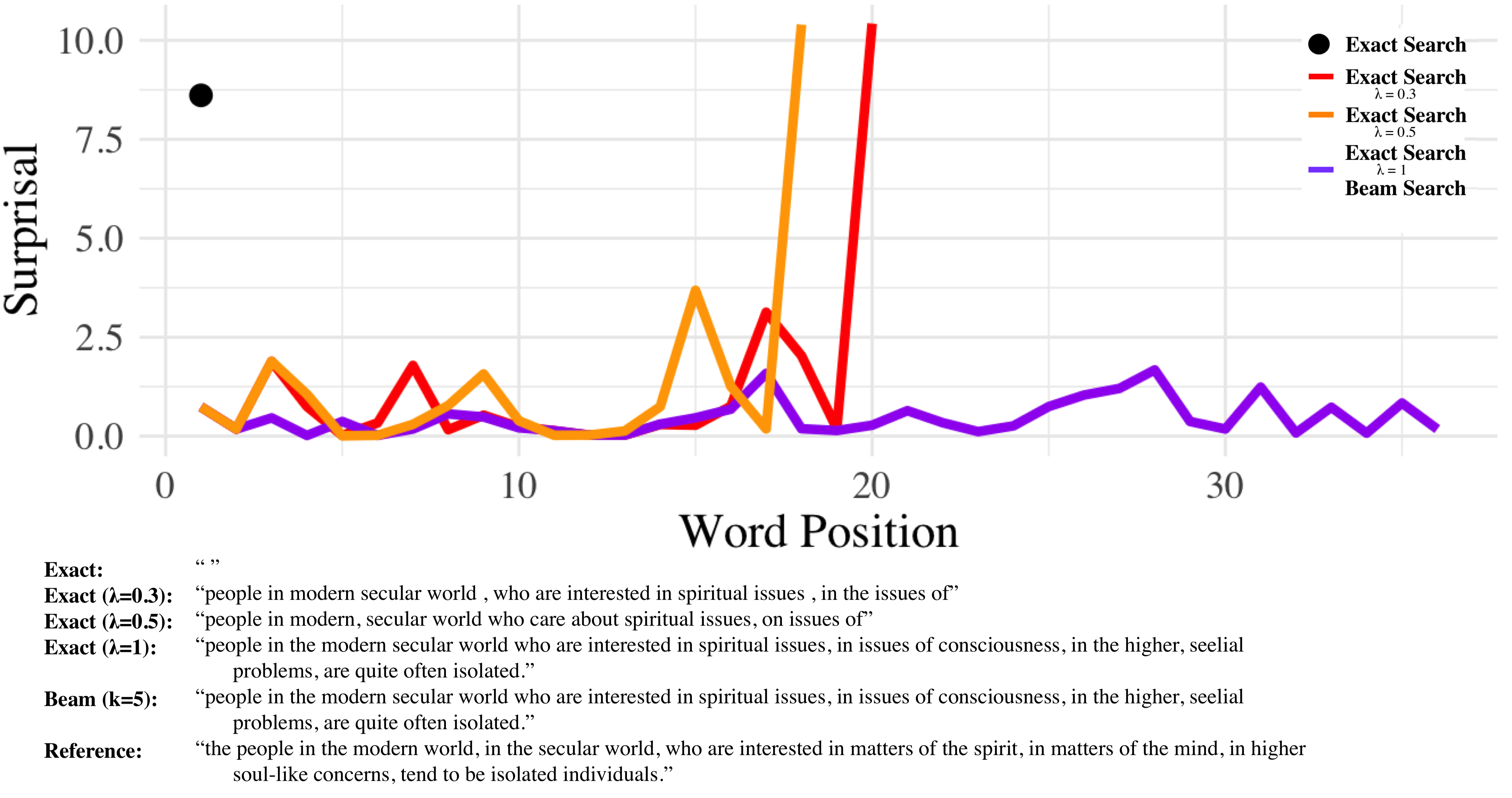

UID posits a preference for text in which information is spread evenly across the linguistic signal, without sharp spikes. Information is measured here as surprisal, the negative log-probability of a token given its context. This agrees with psycholinguistic theories holding that human language processing favors an even distribution of information because it eases comprehension. Beam search achieves this indirectly: by keeping only low-surprisal continuations at each step, it avoids sequences with disproportionate peaks in surprisal.
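To make the notion of uniformity concrete, the sketch below computes per-token surprisals and their standard deviation. The function names and the two probability sequences are hypothetical and chosen only for illustration.

```python
import math
import statistics


def surprisals(token_probs):
    """Surprisal (in nats) of each token, given its conditional probability."""
    return [-math.log(p) for p in token_probs]


def surprisal_std(token_probs):
    """Population standard deviation of surprisals; lower means more uniform."""
    return statistics.pstdev(surprisals(token_probs))


# Two hypothetical sequences with the same total probability (0.25 ** 4).
uniform = [0.25, 0.25, 0.25, 0.25]
peaked = [1.0, 1.0, 1.0, 0.25 ** 4]

print(surprisal_std(uniform), surprisal_std(peaked))
```

Both sequences are equally probable, so a pure MAP objective cannot tell them apart. Only the first spreads its information evenly, which is the property UID favors.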

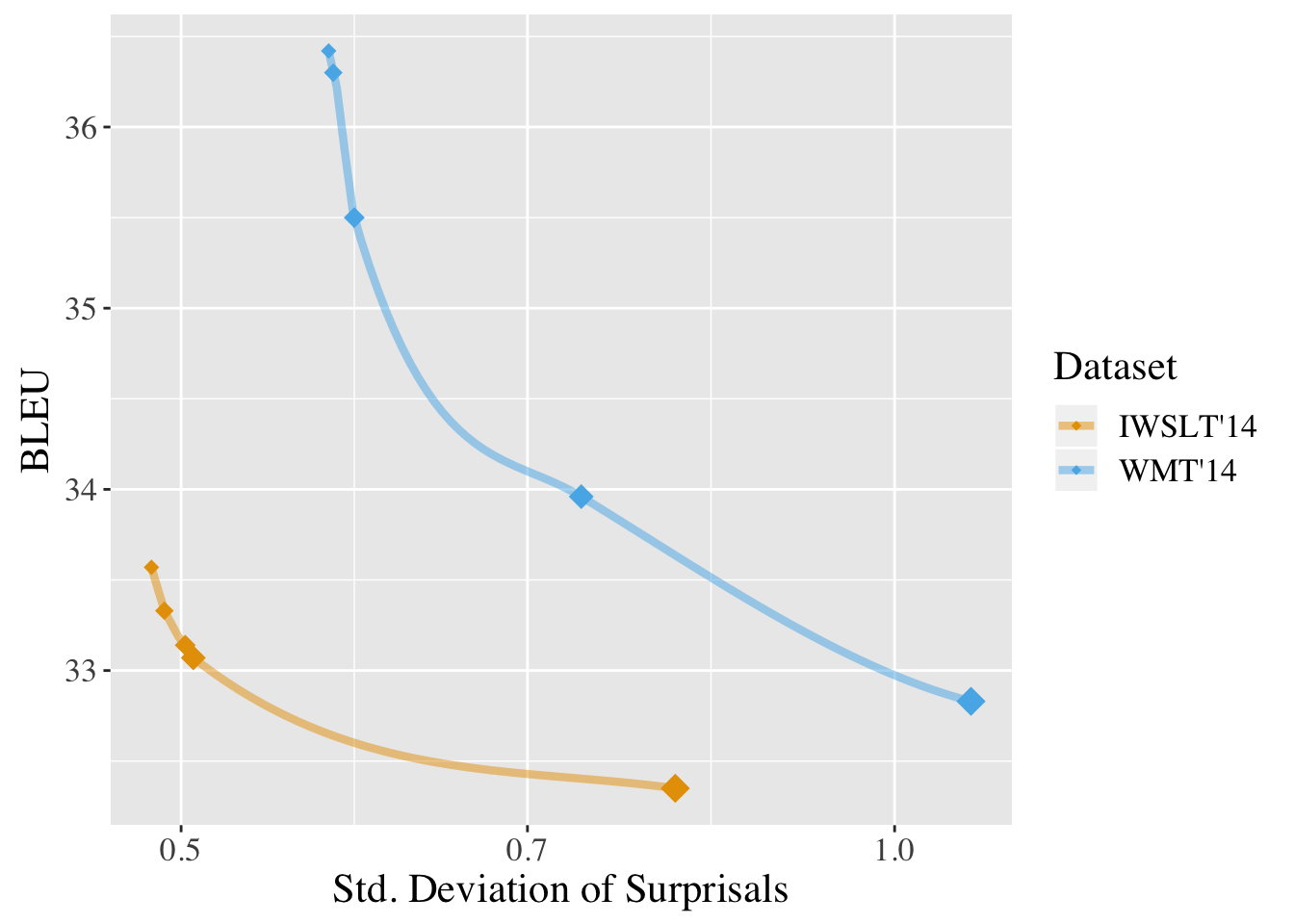

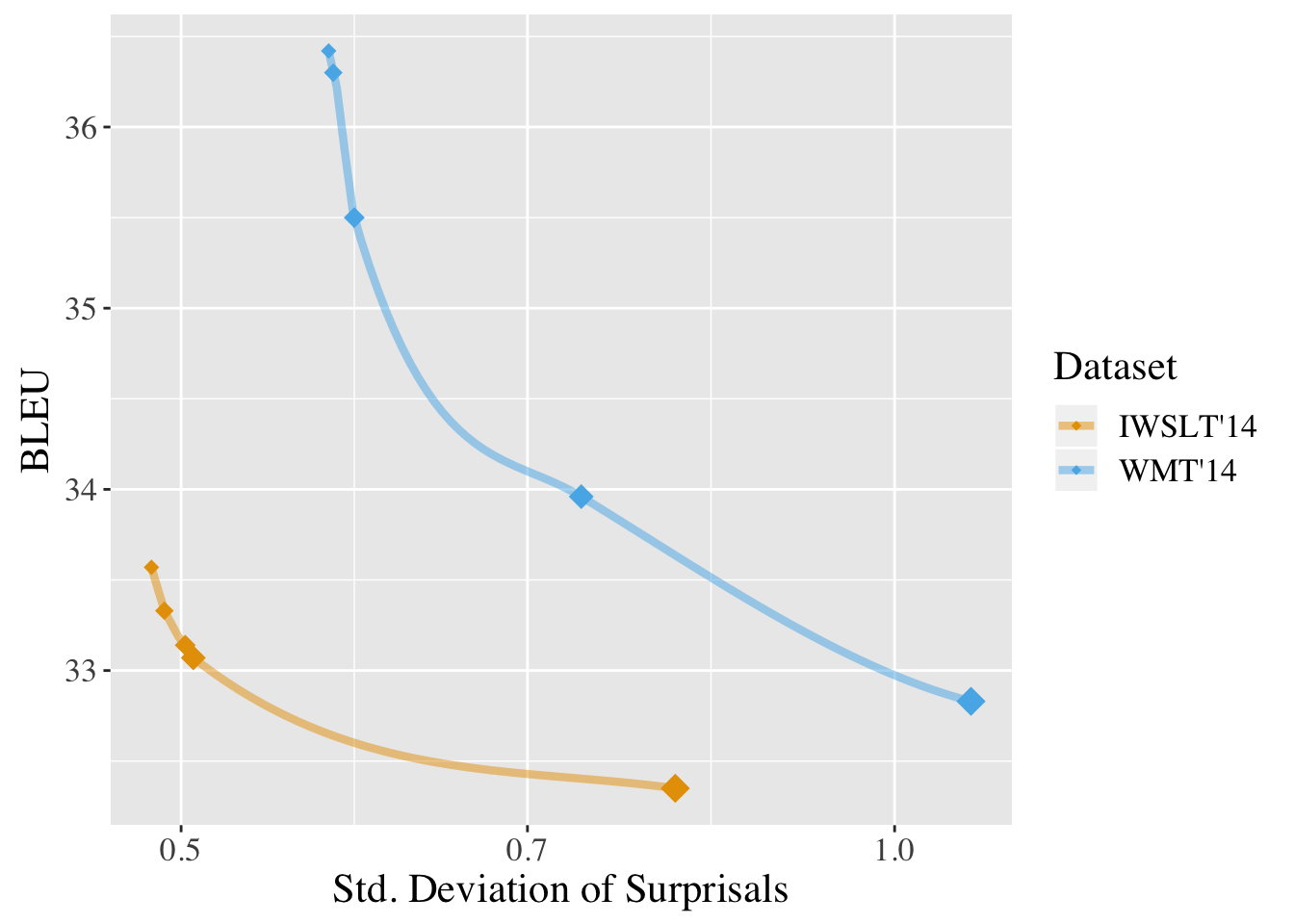

Empirical evidence supports this hypothesis: the standard deviation of surprisals correlates strongly with BLEU, a standard metric of translation quality. Translations that adhere more closely to UID, meaning those with less variable surprisals, consistently receive higher BLEU scores.

Figure 2: Surprisals by time step, showing that beam search and regularized decoding adhere to the UID principle.

Experimentation: Novel Regularizers

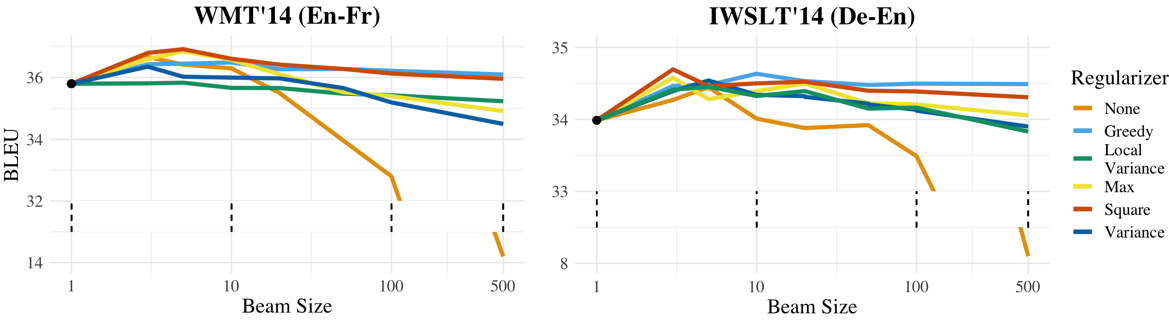

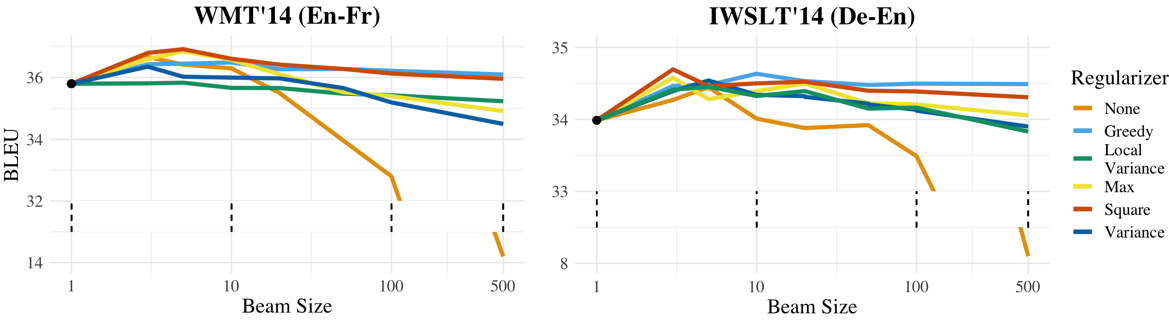

The authors propose and evaluate several UID-inspired regularizers, testing their impact on translation quality across different configurations:

- Variance Regularizer: Directly penalizes variance in surprisals, encouraging uniform distribution.

- Local Consistency Regularizer: Encourages surprisals at adjacent time steps to remain similar.

- Max Regularizer: Penalizes maximum surprisal peaks within a sequence, promoting smoother distributions.

- Squared Regularizer: Applies a quadratic penalty to each surprisal value, so high-surprisal tokens are penalized disproportionately.
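The four regularizers listed above can be sketched as functions of a hypothesis's per-token surprisals. This is an illustrative reading of the descriptions, not the authors' code: the function names, the normalization constants, and the `lam` parameter are assumptions.

```python
def variance_regularizer(u):
    """Mean squared deviation of the surprisals from their own mean."""
    mean = sum(u) / len(u)
    return sum((x - mean) ** 2 for x in u) / len(u)


def local_consistency_regularizer(u):
    """Mean squared difference between surprisals at adjacent time steps."""
    if len(u) < 2:
        return 0.0
    return sum((b - a) ** 2 for a, b in zip(u, u[1:])) / len(u)


def max_regularizer(u):
    """Largest single surprisal in the sequence."""
    return max(u)


def squared_regularizer(u):
    """Sum of squared surprisals (a quadratic penalty on each token)."""
    return sum(x ** 2 for x in u)


def regularized_score(u, regularizer, lam):
    """Log-probability of the hypothesis minus lam times the penalty."""
    log_prob = -sum(u)  # surprisal is negative log-probability
    return log_prob - lam * regularizer(u)


flat = [2.0, 2.0]    # hypothetical surprisals, evenly spread
spiky = [1.0, 3.0]   # same total surprisal, unevenly spread
```

Both hypothetical sequences have the same log-probability, yet every regularizer assigns the larger penalty to `spiky`. Under a regularized score with `lam > 0`, `flat` is therefore preferred.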

Experiments show that decoding with UID regularizers yields higher BLEU scores than decoding without regularization, and that the improvement holds even at larger beam widths. This further indicates that enforcing UID during decoding complements neural models' text generation capabilities.

Figure 3: BLEU as a function of beam width across UID regularizers, illustrating improved performance.

Implications for Future Work

The findings suggest that integrating cognitive principles such as UID into decoding strategies may help close the gap between model outputs and human language production. Future research may refine these regularizers or draw on other cognitive theories for further insight into language generation.

Conclusion

This paper presents a compelling argument for viewing beam search as more than an approximate search procedure: its implicit enforcement of UID is an intrinsic reason it produces high-quality text from neural language models. These insights open avenues for decoding strategies that align more closely with human cognitive processing, with potential benefits for machine translation and other areas of NLP.

Figure 4: BLEU vs. std. deviation of surprisals for beam search across test sets, illustrating the correlation between UID adherence and text quality.