- The paper demonstrates a comprehensive design of camera-based automated parking systems that leverages sensor fusion and hardware acceleration for real-time performance.

- The paper employs advanced image processing methods and machine learning for slot marking recognition and obstacle classification, enhancing detection precision.

- The paper addresses challenges in sensor calibration, integration with traditional sensors, and system reliability under automotive constraints.

Computer Vision in Automated Parking Systems: Design, Implementation, and Challenges

Introduction

Camera-based technologies have become integral to modern Advanced Driver Assistance Systems (ADAS), particularly within automated parking applications. This paper (2104.12537) presents a comprehensive exploration of vision-centric automated parking systems, detailing hardware architecture, algorithmic design, and the multifaceted use cases such systems must address. The discussion encompasses the full stack—from sensor fusion with traditional modalities to the challenges of robust, safety-critical perception on embedded platforms under automotive constraints.

Hardware Architecture for Automated Parking

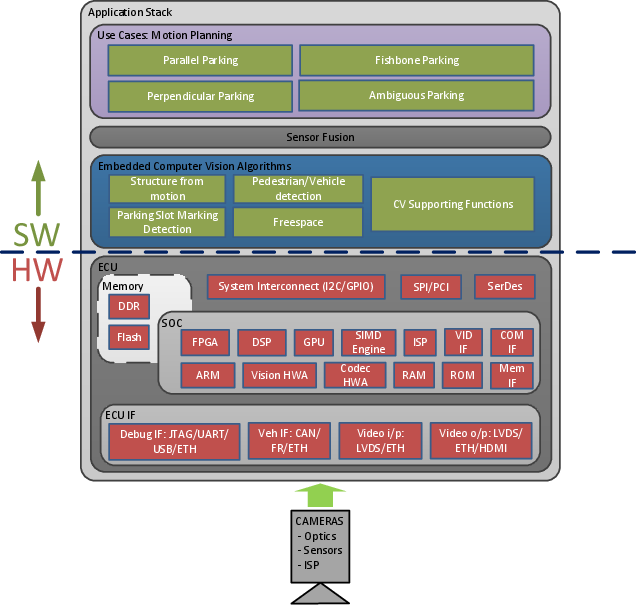

Automated parking systems consist of tightly integrated hardware components designed around safety and real-time constraints. A centralized ECU connects multiple wide-angle camera sensors via high-bandwidth, automotive-grade interfaces such as SerDes and Ethernet, balancing cost against throughput and safety. Memory architecture and on-chip bandwidth, though often overlooked, directly determine which algorithms are feasible, because multi-camera systems generate very high data rates. SOC selection depends on the interplay among pixel resolution, frame rate, algorithmic parallelism, and system-level latency bounds.
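To make the throughput point concrete, the raw data rate of a multi-camera setup can be estimated with simple arithmetic. The sketch below is only illustrative: the camera count, resolution, frame rate, and bit depth are assumed values, not figures taken from the paper.

```python
def raw_bandwidth_mbps(num_cameras: int, width: int, height: int,
                       fps: int, bits_per_pixel: int) -> float:
    """Raw (uncompressed) video data rate in megabits per second."""
    bits_per_second = num_cameras * width * height * fps * bits_per_pixel
    return bits_per_second / 1e6


# Hypothetical surround-view setup: four 1280x800 cameras at 30 fps, 12-bit raw.
rate = raw_bandwidth_mbps(num_cameras=4, width=1280, height=800,
                          fps=30, bits_per_pixel=12)
print(f"{rate:.1f} Mbit/s")  # prints "1474.6 Mbit/s"
```

Even under these modest assumptions, the raw stream is well above 1 Gbit/s before any intermediate buffers are counted, which is why memory bandwidth constrains algorithm choice.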

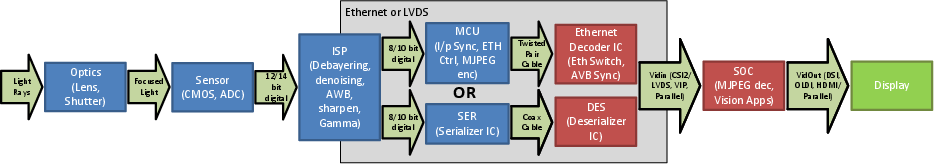

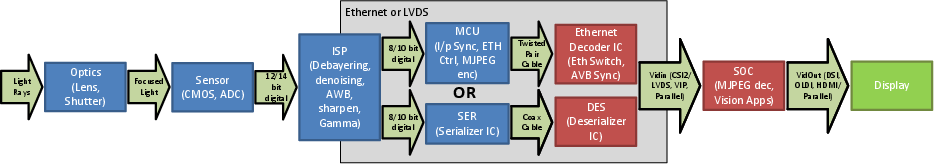

Figure 1: Block diagram of a Vision System, illustrating the integration of camera sensors, ISPs, and processing SOC for distributed automotive vision applications.

Wide-FOV fisheye optics are prevalent because they provide the coverage needed for near-field sensing around the host vehicle, but they introduce substantial projection distortion that requires nontrivial algorithmic compensation. The Image Signal Processor (ISP), often implemented in dedicated hardware, converts raw sensor data into formats suitable for vision algorithms through steps such as debayering, denoising, and HDR correction. The trend toward custom computer vision accelerators within SOCs (e.g., dense optical flow and stereo disparity modules) increases throughput and supports multi-threaded algorithm stacks.
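The distortion mentioned above can be illustrated by comparing two idealized projection models: the rectilinear (pinhole) model, r = f·tan(θ), and the equidistant fisheye model, r = f·θ. The equidistant model is an assumption chosen for simplicity; the paper summary does not state which lens model is used, and the focal length below is hypothetical.

```python
import math


def pinhole_radius(theta_rad: float, focal_px: float) -> float:
    """Radial image distance under the pinhole model: r = f * tan(theta)."""
    return focal_px * math.tan(theta_rad)


def equidistant_radius(theta_rad: float, focal_px: float) -> float:
    """Radial image distance under the equidistant fisheye model: r = f * theta."""
    return focal_px * theta_rad


def fisheye_to_pinhole_radius(r_fisheye: float, focal_px: float) -> float:
    """Map a fisheye radius to the radius the same ray has in a pinhole image."""
    theta = r_fisheye / focal_px  # invert r = f * theta
    if theta >= math.pi / 2:
        raise ValueError("rays at or beyond 90 degrees have no pinhole projection")
    return focal_px * math.tan(theta)


FOCAL_PX = 300.0  # hypothetical focal length in pixels
for deg in (10, 45, 80):
    theta = math.radians(deg)
    print(deg,
          round(equidistant_radius(theta, FOCAL_PX), 1),
          round(pinhole_radius(theta, FOCAL_PX), 1))
```

The two radii nearly agree close to the optical axis and diverge sharply toward the edge of the field of view, which is why rectifying a full fisheye image into a single pinhole view is impractical at very wide angles.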

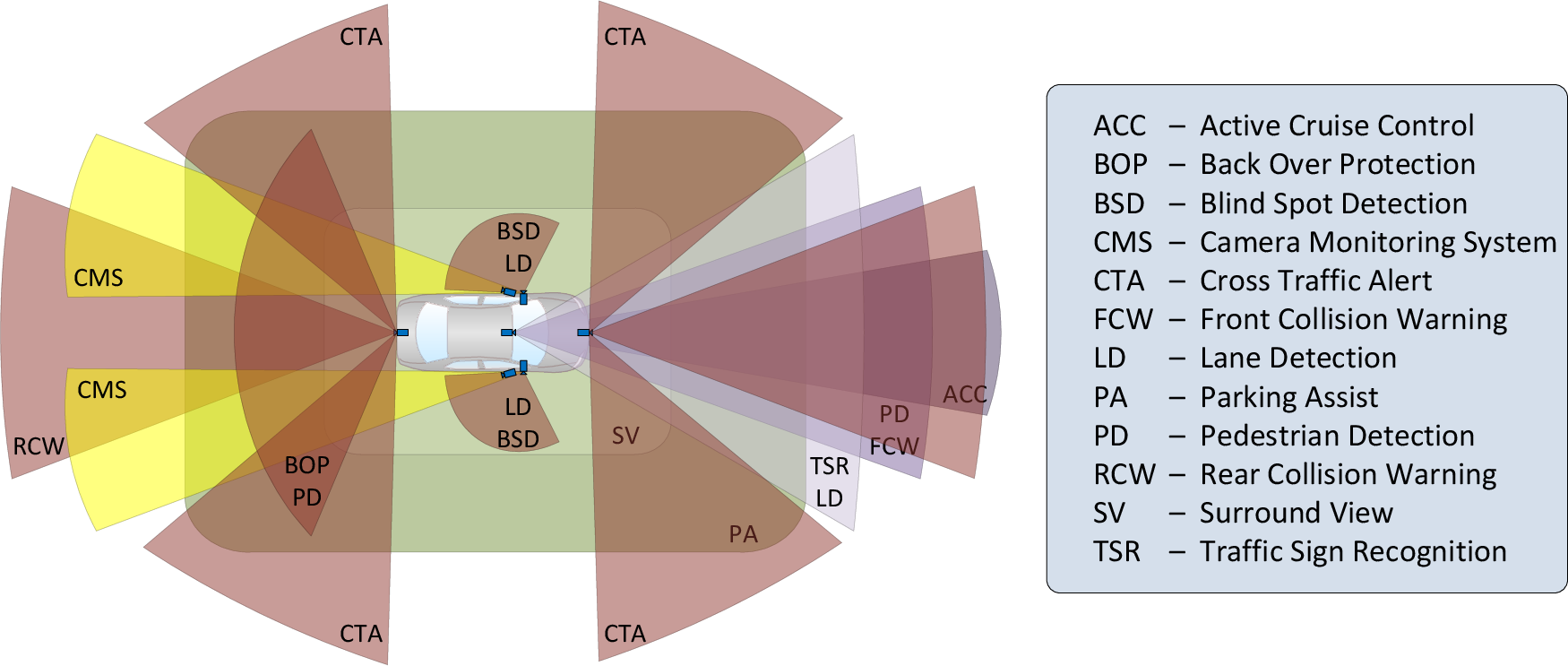

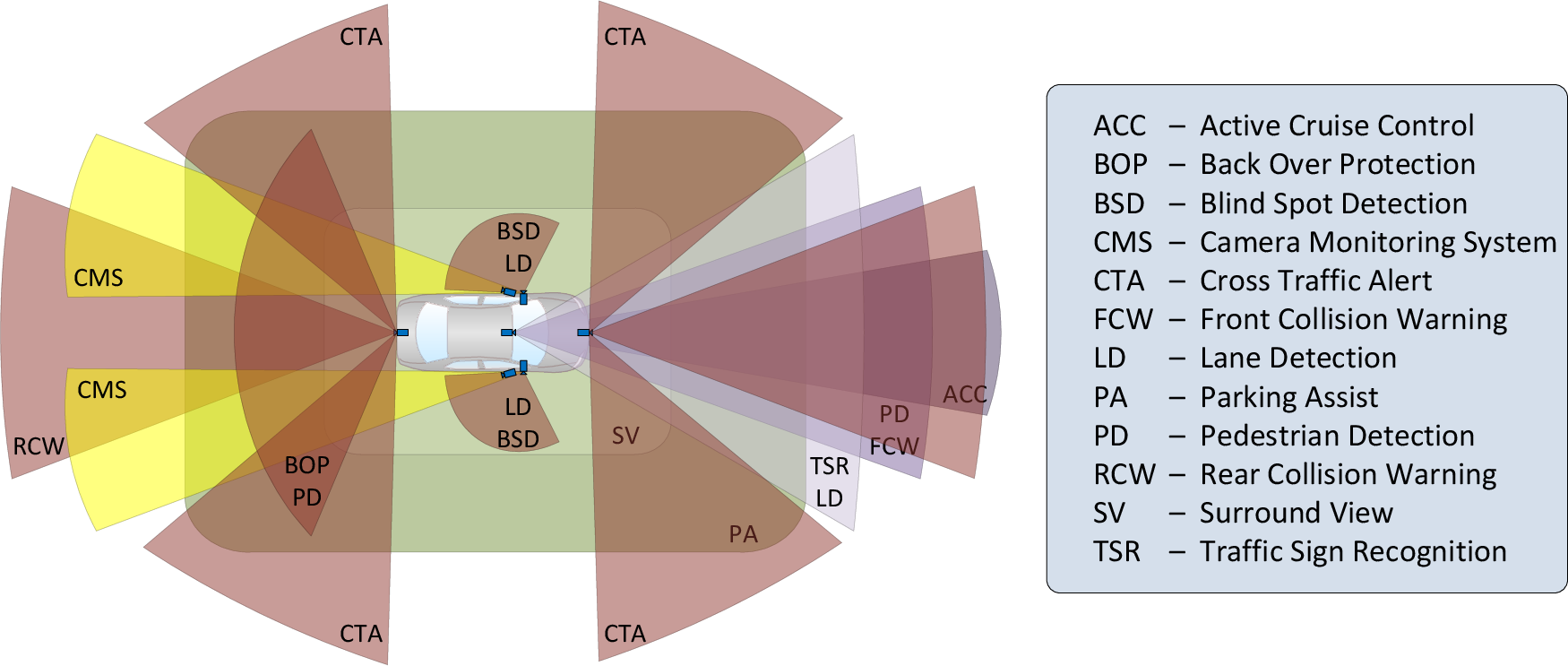

Figure 2: Camera-based ADAS applications and their respective field of view, highlighting the tailored camera layout for parking scenarios.

Automated Parking Use Cases and Vision-Enabled Benefits

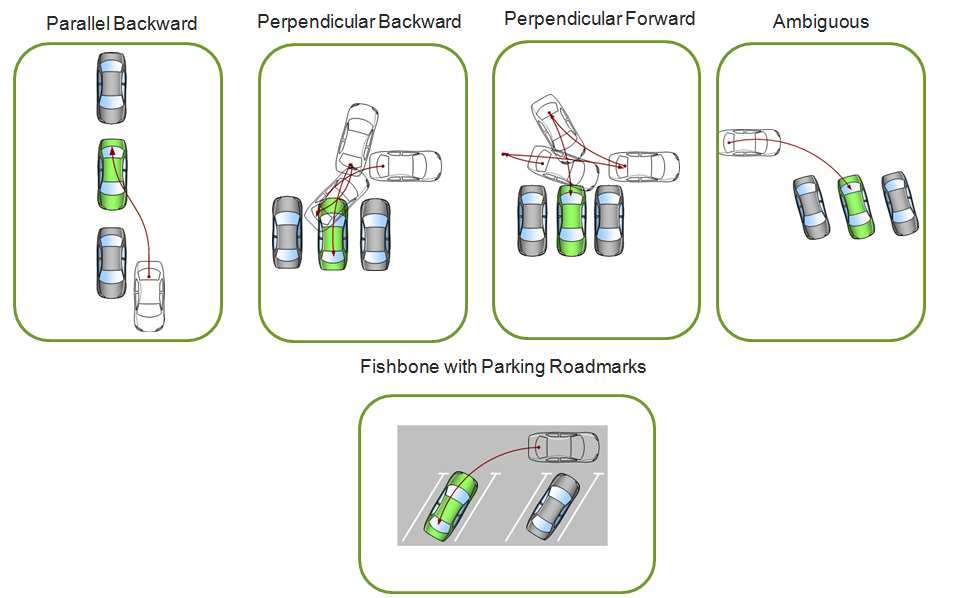

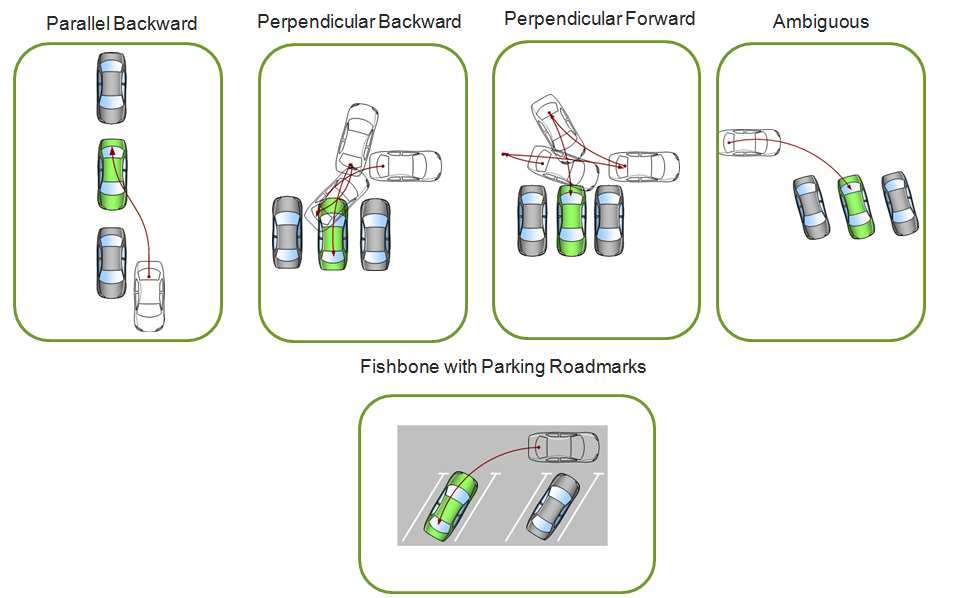

Automated parking maneuvers vary widely, covering parallel, perpendicular (forward and backward), and fishbone parking, as well as ambiguous environments. Slot detection is a critical function, and it is limited when relying solely on ultrasonic sensors, which cannot resolve fine features such as slot markings or kerb positions.

The introduction of multi-camera, computer vision-based systems provides several fundamental advantages:

- Slot Localization Precision: Cameras enable detection and localization of parking slot markings and boundaries directly, obviating the need for proximate obstacles required by ultrasonics. This results in more accurate alignment relative to the slot, rather than neighboring parked vehicles.

- Enhanced Range and Coverage: The detection range of cameras exceeds that of ultrasonics, allowing earlier slot selection and planning, and facilitating detection of subtle features (e.g., chain-link fences) that range-based sensors can miss.

- Object Classification: Machine learning applied to camera data allows for nuanced object classification (e.g., pedestrian versus static obstacle), which is crucial for trajectory planning and situational awareness.

- Redundancy and Sensor Fusion: Camera data, fused with traditional sensors, increases robustness in braking scenarios (e.g., emergency and comfort braking).

Figure 3: Classification of Parking scenarios—(a) Parallel Backward Parking, (b) Perpendicular Backward Parking, (c) Perpendicular Forward Parking, (d) Ambiguous Parking, (e) Fishbone Parking with roadmarkings—enabling algorithmic mapping between sensor configuration and maneuver type.

Vision-based systems also support overlaying object distance information on display streams, contributing to user comfort and fine-grained manual control.

Vision Algorithms: Feasibility and Integration

Four primary computer vision modules drive the automated parking stack:

- 3D Reconstruction: Depth estimation via stereo disparity or monocular structure from motion enables environmental mapping, slot boundary extraction, and trajectory planning. Dense, high-accuracy point cloud generation is essential for kerb detection and refined maneuver execution.

- Slot Marking Recognition: Image rectification, edge extraction, and model-based feature detection (HOG, LBP) facilitate recognition of ground markings, crucial for slot confirmation and legal compliance in structured lots.

- Object Detection and Pedestrian Tracking: Machine learning (classical and deep learning approaches) is employed for real-time detection and tracking, underpinning safety-critical functionalities such as emergency stop logic and dynamic trajectory adaptation.

- Freespace Estimation: Occupancy grid-based road segmentation identifies drivable areas and eliminates stale or erroneous obstacle data in local maps.
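As one illustration of how freespace observations can clear stale obstacle data, the sketch below uses a log-odds occupancy grid, a standard mapping technique. The summary does not specify the paper's actual update rule, so the class, the increments, and the clamping limits here are all assumptions.

```python
import math

L_OCC = 0.85              # hypothetical log-odds increment for an "occupied" observation
L_FREE = -0.4             # hypothetical log-odds increment for a "free" observation
L_MIN, L_MAX = -4.0, 4.0  # clamp so cells stay responsive to new evidence


class OccupancyGrid:
    """Minimal 2D occupancy grid storing one log-odds value per cell."""

    def __init__(self, rows: int, cols: int) -> None:
        # 0.0 log-odds corresponds to an unknown cell (p = 0.5).
        self.log_odds = [[0.0] * cols for _ in range(rows)]

    def update(self, row: int, col: int, observed_free: bool) -> None:
        """Add the evidence from one observation of a cell."""
        delta = L_FREE if observed_free else L_OCC
        value = self.log_odds[row][col] + delta
        self.log_odds[row][col] = max(L_MIN, min(L_MAX, value))

    def probability(self, row: int, col: int) -> float:
        """Convert the stored log-odds back to an occupancy probability."""
        return 1.0 - 1.0 / (1.0 + math.exp(self.log_odds[row][col]))


grid = OccupancyGrid(4, 4)
grid.update(1, 2, observed_free=False)     # an obstacle is reported once
for _ in range(5):
    grid.update(1, 2, observed_free=True)  # freespace repeatedly contradicts it
print(grid.probability(1, 2) < 0.5)        # the stale obstacle has been cleared
```

Clamping the log-odds keeps a long-observed cell from becoming so confident that later contradicting observations can no longer change it.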

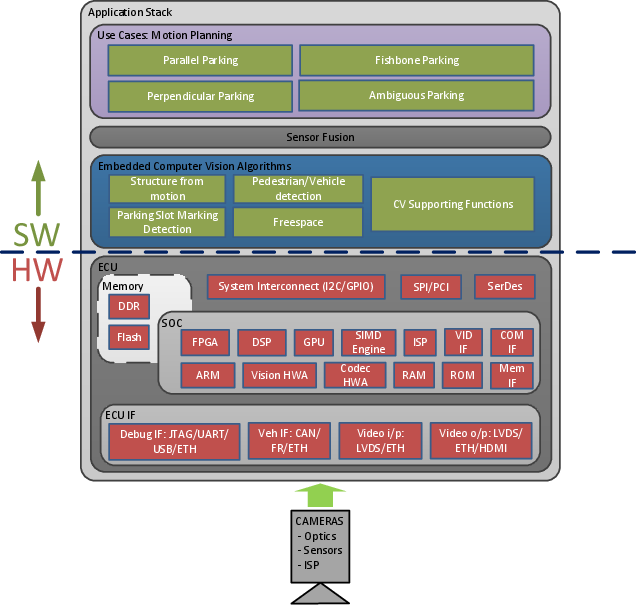

Algorithmic deployment is constrained by the real-time capability of the automotive compute stack; pixel-level operations are offloaded to hardware accelerators, while higher-level inference is orchestrated via DSPs or GPPs.

System Design and Application Stack

A full camera-based automated parking system is a tightly coupled stack, from physical sensors through computer vision modules to user interfaces and actuation. Design choices, from imager resolution to SOC type, have cascading effects on attainable use cases, algorithmic performance, and overall system robustness. State machine logic manages compute load dynamically by prioritizing the modules that matter for the current maneuver stage and environmental context.
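The state machine idea can be sketched as a mapping from maneuver stage to the set of vision modules that should run. The stage names, transitions, and module assignments below are hypothetical and chosen only to show the pattern; the summary does not describe the paper's actual scheduling.

```python
from enum import Enum, auto
from typing import List


class Stage(Enum):
    SLOT_SEARCH = auto()
    MANEUVER = auto()
    FINAL_ALIGNMENT = auto()


# Hypothetical assignment of vision modules to maneuver stages.
ACTIVE_MODULES = {
    Stage.SLOT_SEARCH: ["slot_marking_recognition", "freespace_estimation"],
    Stage.MANEUVER: ["object_detection", "3d_reconstruction", "freespace_estimation"],
    Stage.FINAL_ALIGNMENT: ["3d_reconstruction", "slot_marking_recognition"],
}


def next_stage(stage: Stage, slot_confirmed: bool, near_target: bool) -> Stage:
    """Advance the maneuver stage when its exit condition is met."""
    if stage is Stage.SLOT_SEARCH and slot_confirmed:
        return Stage.MANEUVER
    if stage is Stage.MANEUVER and near_target:
        return Stage.FINAL_ALIGNMENT
    return stage


def modules_to_run(stage: Stage, pedestrian_nearby: bool) -> List[str]:
    """Pick modules for this frame; safety-relevant detection overrides the stage default."""
    modules = list(ACTIVE_MODULES[stage])
    if pedestrian_nearby and "object_detection" not in modules:
        modules.insert(0, "object_detection")
    return modules


stage = next_stage(Stage.SLOT_SEARCH, slot_confirmed=True, near_target=False)
print(stage.name, modules_to_run(stage, pedestrian_nearby=False))
```

Keeping the schedule in a table rather than in branching code makes it easy to review which modules consume compute in each stage.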

Figure 4: Application Stack for full Automated Parking system using cameras, delineating vision algorithms mapped to embedded processing resources and user-facing functions.

Vision functions require continuous calibration and soiling detection to ensure reliability; sensor fusion (with ultrasonics/radar) mitigates limitations or failures in any individual modality.
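A minimal sketch of how fusion can tolerate the loss of one modality is shown below, assuming each sensor reports a distance together with a variance and that a failed or soiled sensor reports nothing. Inverse-variance weighting is a generic textbook choice, not necessarily the paper's method, and the function name and example values are hypothetical.

```python
from typing import Optional, Tuple

Measurement = Tuple[float, float]  # (distance in metres, variance in m^2)


def fuse_ranges(camera: Optional[Measurement],
                ultrasonic: Optional[Measurement]) -> Optional[Measurement]:
    """Inverse-variance fusion that falls back to whichever sensor is available."""
    available = [m for m in (camera, ultrasonic) if m is not None]
    if not available:
        return None  # no usable measurement; the caller must handle this case
    weights = [1.0 / variance for _, variance in available]
    total = sum(weights)
    distance = sum(w * d for w, (d, _) in zip(weights, available)) / total
    return distance, 1.0 / total


# Both sensors healthy: the estimate lies between them with reduced variance.
print(fuse_ranges((2.0, 0.04), (2.2, 0.04)))
# Camera unavailable (e.g., soiling detected): fall back to ultrasonics alone.
print(fuse_ranges(None, (1.5, 0.01)))
```

The fused variance is never larger than that of the best available sensor, which expresses the redundancy benefit in numerical form.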

Next-Generation Developments and Theoretical Implications

Recent advances in deep learning (especially CNNs) have yielded substantial gains in accuracy for object detection and semantic segmentation, and they facilitate the migration of geometric vision algorithms into data-driven frameworks. Hardware support for more than 10 TOPS of CNN inference is increasingly standard. Future systems will automate the full parking cycle, including slot search in unknown environments (requiring real-time SLAM, active exploration, and robust mapping under dynamic lighting and occlusion), pushing toward Level 5 autonomy.

Theoretical challenges remain in generalizing vision algorithms across highly variable and visually ambiguous parking environments. Trajectory replay for "Park4U Home" and automated search in unmapped lots highlight ongoing issues in landmark-based localization, dynamic obstacle adaptation, and long-horizon planning.

Conclusion

Camera-based computer vision is central to the evolution of automated parking systems, enabling robust slot detection, trajectory planning, obstacle avoidance, and situational awareness within the constraints of automotive hardware. Vision algorithms, fused with traditional range sensors, significantly expand use case coverage and practical reliability. The trend toward deep learning and hardware-accelerated perception is set to redefine the landscape, advancing practical autonomy and theoretical understanding in automotive robotics.