- The paper introduces APSD-OC, a fully automatic system that detects parking slots using YOLOv5 and DBSCAN without manual annotation.

- It applies perspective normalization to transform vehicle detections, ensuring robust slot localization even under occlusions and varying weather conditions.

- The ResNet34 classifier achieves over 99% accuracy on PKLot and CNRPark-EXT, demonstrating strong generalization across viewpoints and weather conditions.

Automatic Vision-Based Parking Slot Detection and Occupancy Classification

Introduction

The paper presents APSD-OC, a fully automatic vision-based algorithm for parking slot detection and occupancy classification. The approach eliminates the need for manual slot annotation, a major bottleneck in deploying scalable parking guidance information (PGI) systems. APSD-OC leverages vehicle detection across temporal image sequences, perspective normalization, density-based clustering, and a deep classifier for robust slot localization and occupancy inference. The method is evaluated on PKLot and CNRPark-EXT, two public datasets with diverse weather, occlusion, and viewpoint conditions, demonstrating high precision, recall, and generalization.

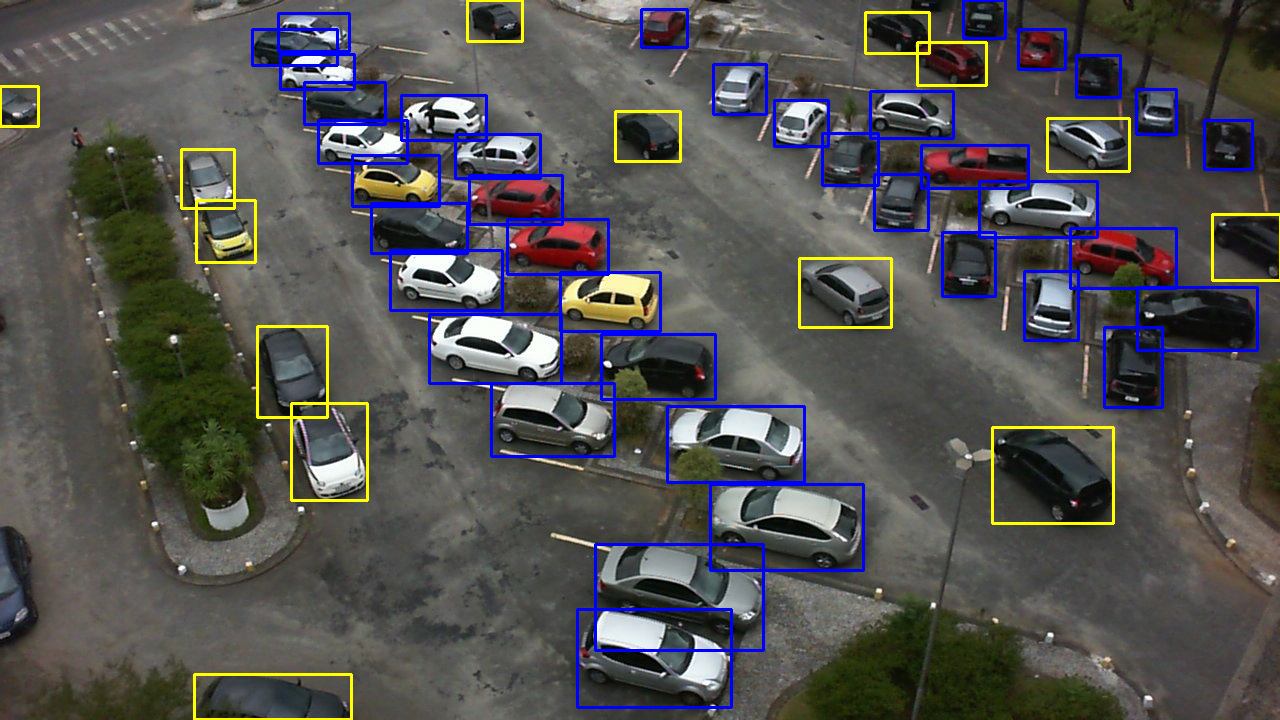

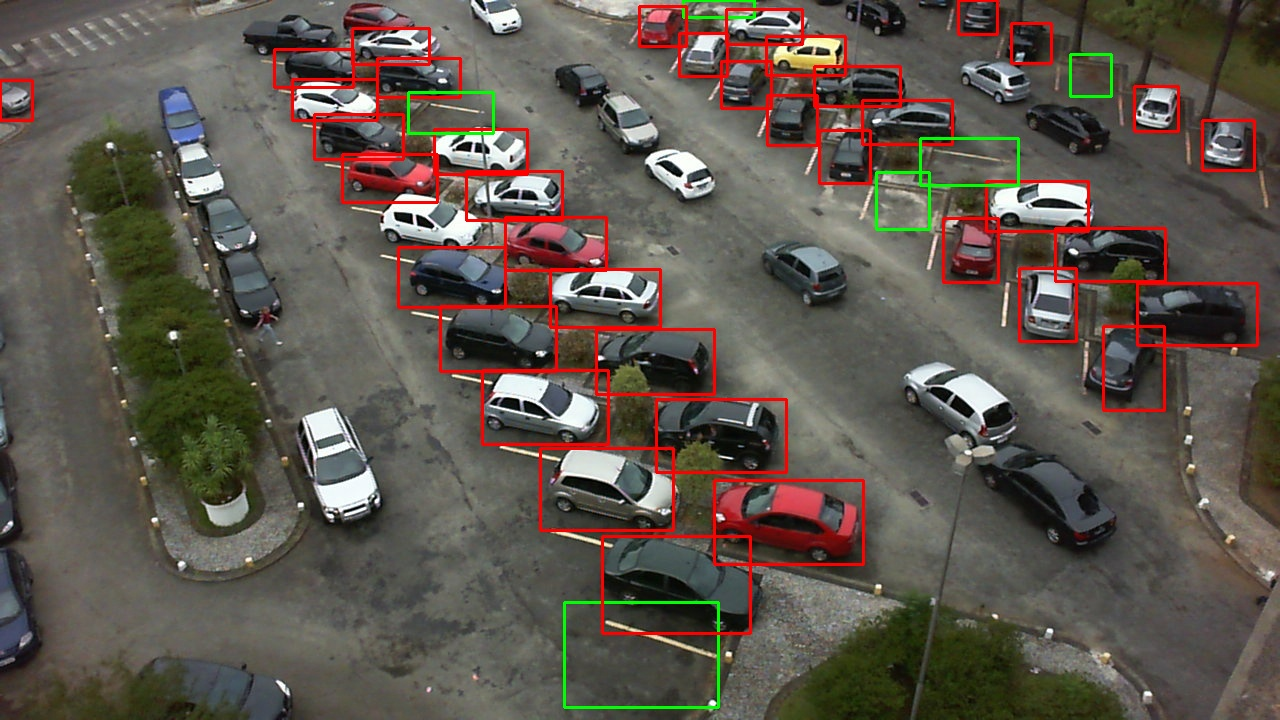

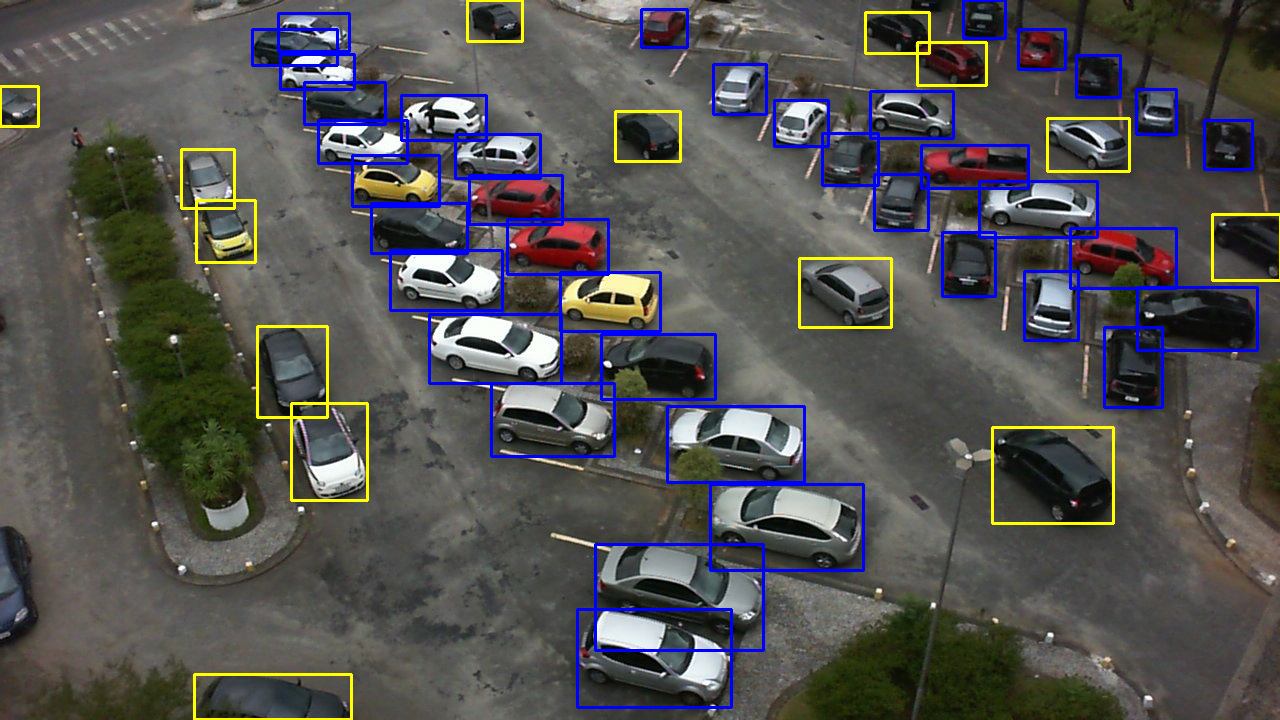

Figure 1: An example of a parking lot image from the PKLot dataset. Properly parked vehicles are marked with blue bounding boxes.

System Architecture

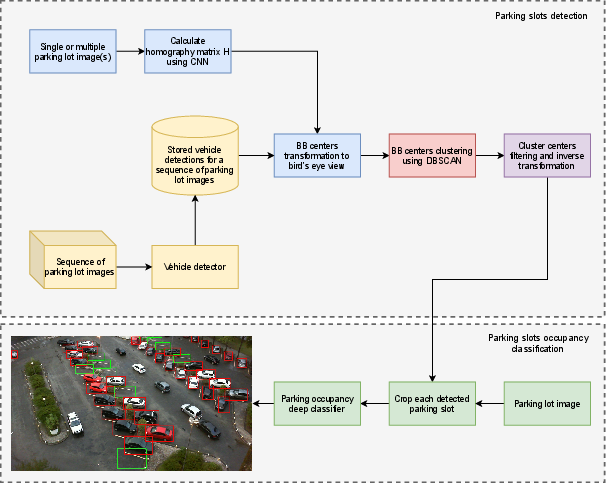

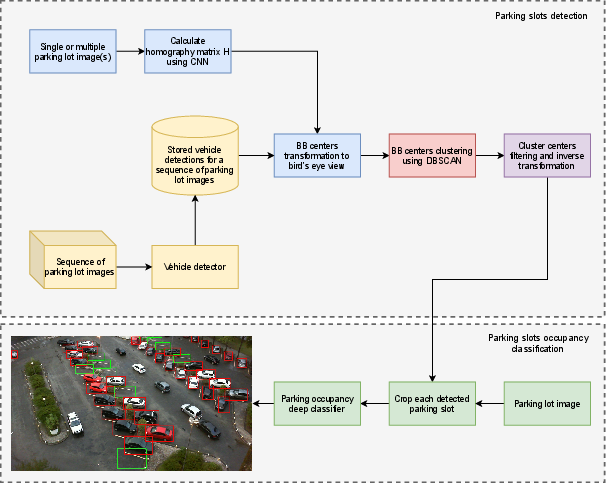

The APSD-OC pipeline consists of two main stages: (1) automatic parking slot detection and (2) slot occupancy classification. The detection stage processes a sequence of images from a fixed camera, applies vehicle detection (YOLOv5), transforms detections to a bird's eye view, and clusters detection centers using DBSCAN to infer slot locations. The occupancy classification stage crops detected slot regions and classifies them as occupied or vacant using a fine-tuned ResNet34.

Figure 2: The block diagram of the proposed APSD-OC algorithm.

Vehicle Detection

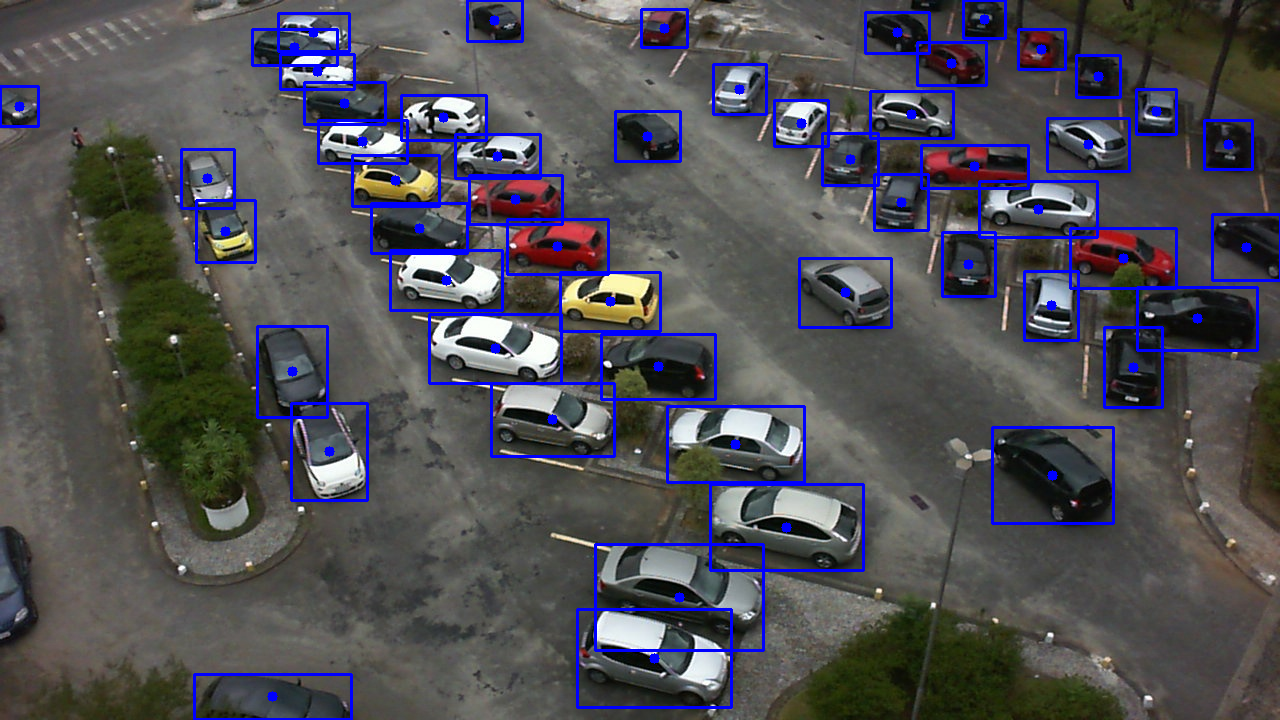

YOLOv5x, pretrained on COCO, is used for vehicle detection, focusing on "car" and "truck" classes with a confidence threshold of 0.5. Images are resized to 1280×1280 px. The detector outputs bounding boxes (BBs) for each vehicle, and their centers are extracted for further processing.
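The class and confidence filtering, followed by center extraction, can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' code. It assumes detections arrive as an (M, 6) array of `[x1, y1, x2, y2, confidence, class_id]` rows (the column layout of the `xyxy` results from YOLOv5's torch.hub interface, converted to NumPy) and that the detector uses the standard 0-based COCO indices, where car is 2 and truck is 7. The helper name `vehicle_centers` is hypothetical.

```python
import numpy as np

# 0-based COCO class indices used by COCO-pretrained YOLOv5 models.
COCO_CAR, COCO_TRUCK = 2, 7


def vehicle_centers(detections: np.ndarray, conf_thresh: float = 0.5) -> np.ndarray:
    """Return (N, 2) BB centers of car/truck detections at or above conf_thresh.

    Assumed input: (M, 6) rows of [x1, y1, x2, y2, confidence, class_id]
    in pixel coordinates of the resized input image.
    """
    if detections.size == 0:
        return np.empty((0, 2))
    keep = (detections[:, 4] >= conf_thresh) & np.isin(
        detections[:, 5], [COCO_CAR, COCO_TRUCK]
    )
    boxes = detections[keep, :4]
    # Each center is the midpoint of the box's two corners.
    return np.column_stack(
        ((boxes[:, 0] + boxes[:, 2]) / 2, (boxes[:, 1] + boxes[:, 3]) / 2)
    )


dets = np.array([
    [100, 200, 180, 260, 0.91, 2],  # car, kept
    [300, 210, 420, 300, 0.75, 7],  # truck, kept
    [500, 220, 560, 280, 0.40, 2],  # car below threshold, dropped
    [600, 100, 620, 160, 0.95, 0],  # person, dropped
])
print(vehicle_centers(dets))
```

Accumulating these centers over all N input images yields the point cloud that the later stages normalize and cluster.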

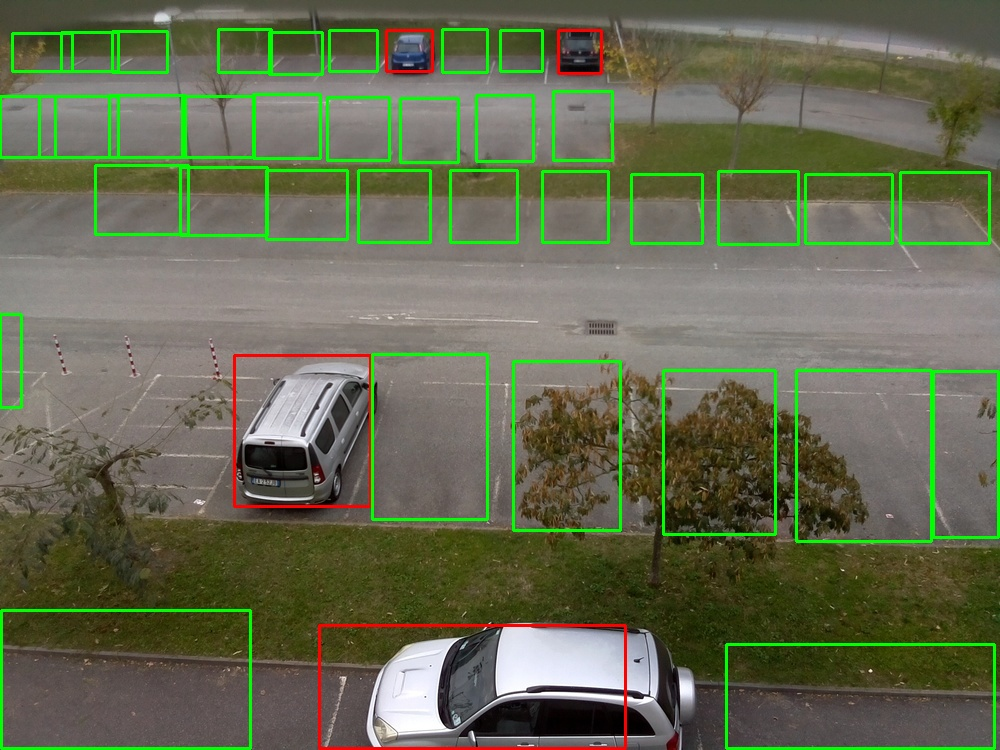

Figure 3: Vehicle detection in a single input image.

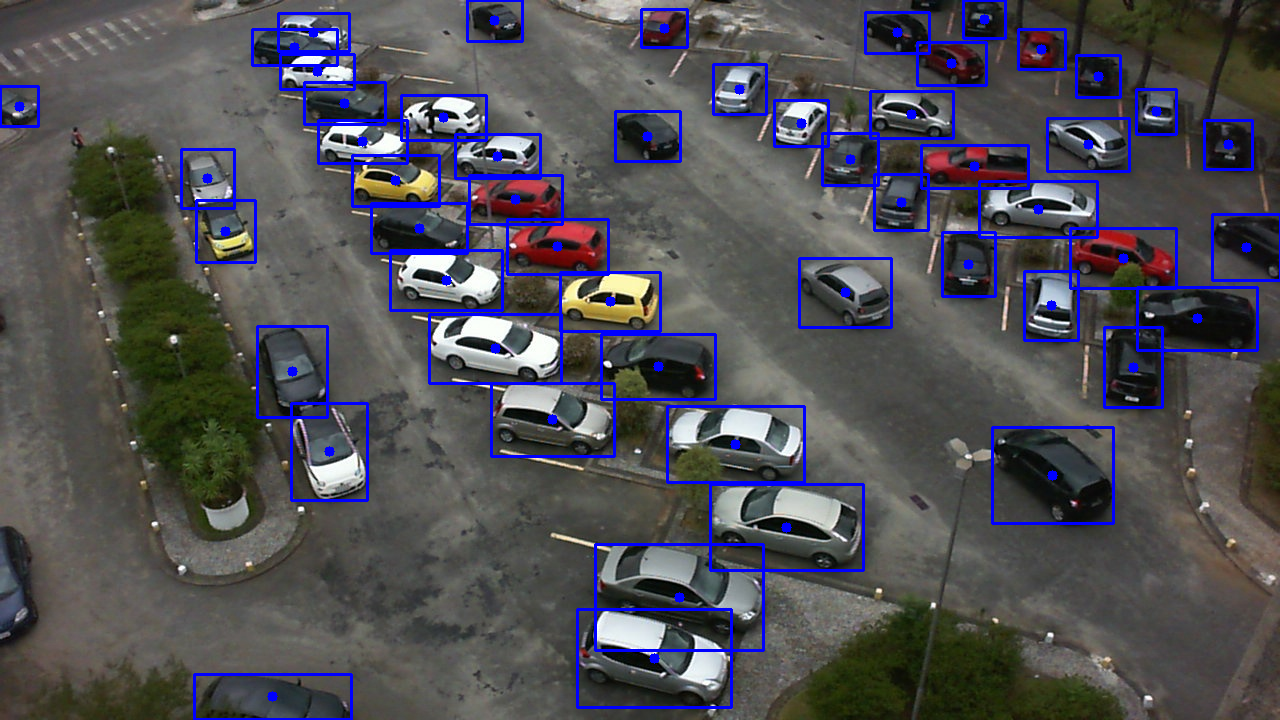

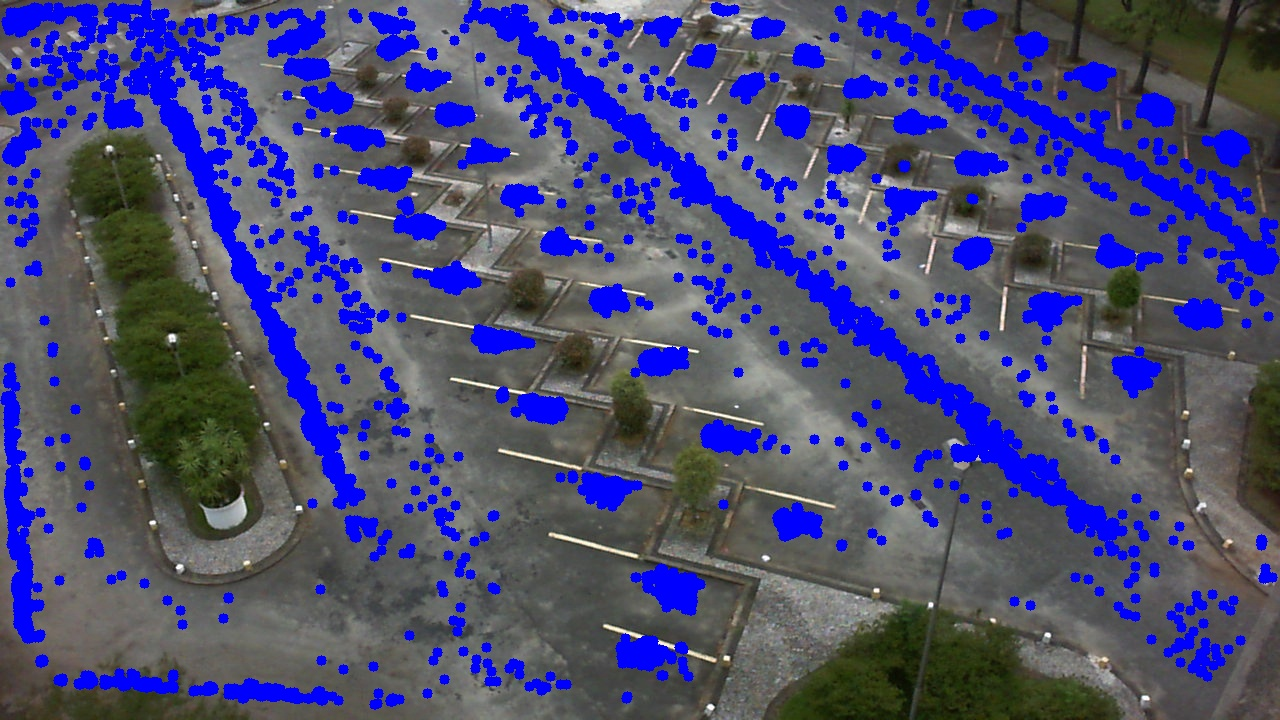

To address perspective distortion, BB centers are mapped to a bird's eye view using a homography matrix H, estimated by a CNN regressor trained on synthetic data (following Abbas et al., 2019). This normalization makes cluster density roughly uniform across the parking lot, so that distant slots, which appear compressed in the original view, can be clustered with the same parameters as nearby ones.
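Applying H to the detection centers is a standard projective mapping: lift each point to homogeneous coordinates, multiply by H, and divide by the third component, i.e. $(x', y', w)^\top = H\,(x, y, 1)^\top$ and the mapped point is $(x'/w,\, y'/w)$. The sketch below assumes H is already available as a 3×3 NumPy array; the matrix used here is a made-up stand-in for the CNN estimate, and `apply_homography` is a hypothetical helper name.

```python
import numpy as np


def apply_homography(H: np.ndarray, points: np.ndarray) -> np.ndarray:
    """Map (N, 2) points through a 3x3 homography H."""
    pts_h = np.hstack([points, np.ones((len(points), 1))])  # rows (x, y, 1)
    mapped = pts_h @ H.T                                      # each row is H @ (x, y, 1)
    return mapped[:, :2] / mapped[:, 2:3]                     # divide by w


# Hypothetical H standing in for the CNN-estimated matrix.
H = np.array([[1.0, 0.2, 0.0],
              [0.0, 1.5, 0.0],
              [0.0, 0.001, 1.0]])
centers = np.array([[140.0, 230.0], [360.0, 255.0]])
bev = apply_homography(H, centers)                # bird's eye view coordinates
back = apply_homography(np.linalg.inv(H), bev)    # the inverse recovers the originals
print(bev)
```

The same function with `np.linalg.inv(H)` handles the reverse mapping needed once slot centers have been found.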

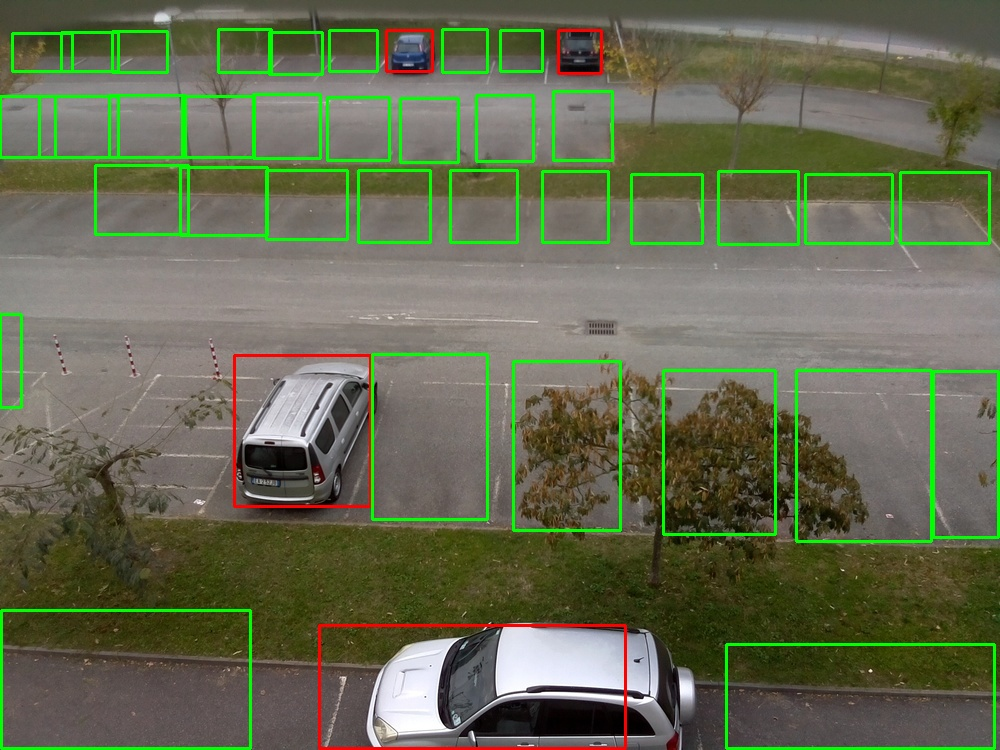

Figure 4: Transformed BB centers of vehicle detections for N input images.

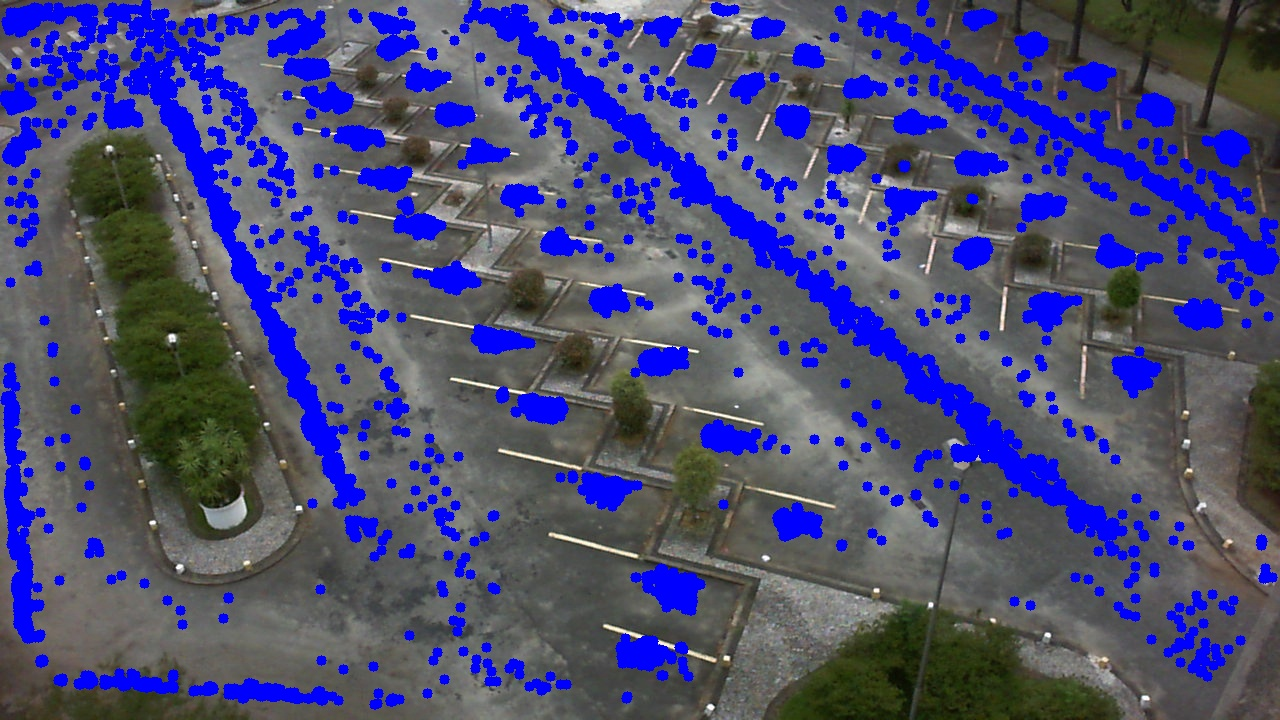

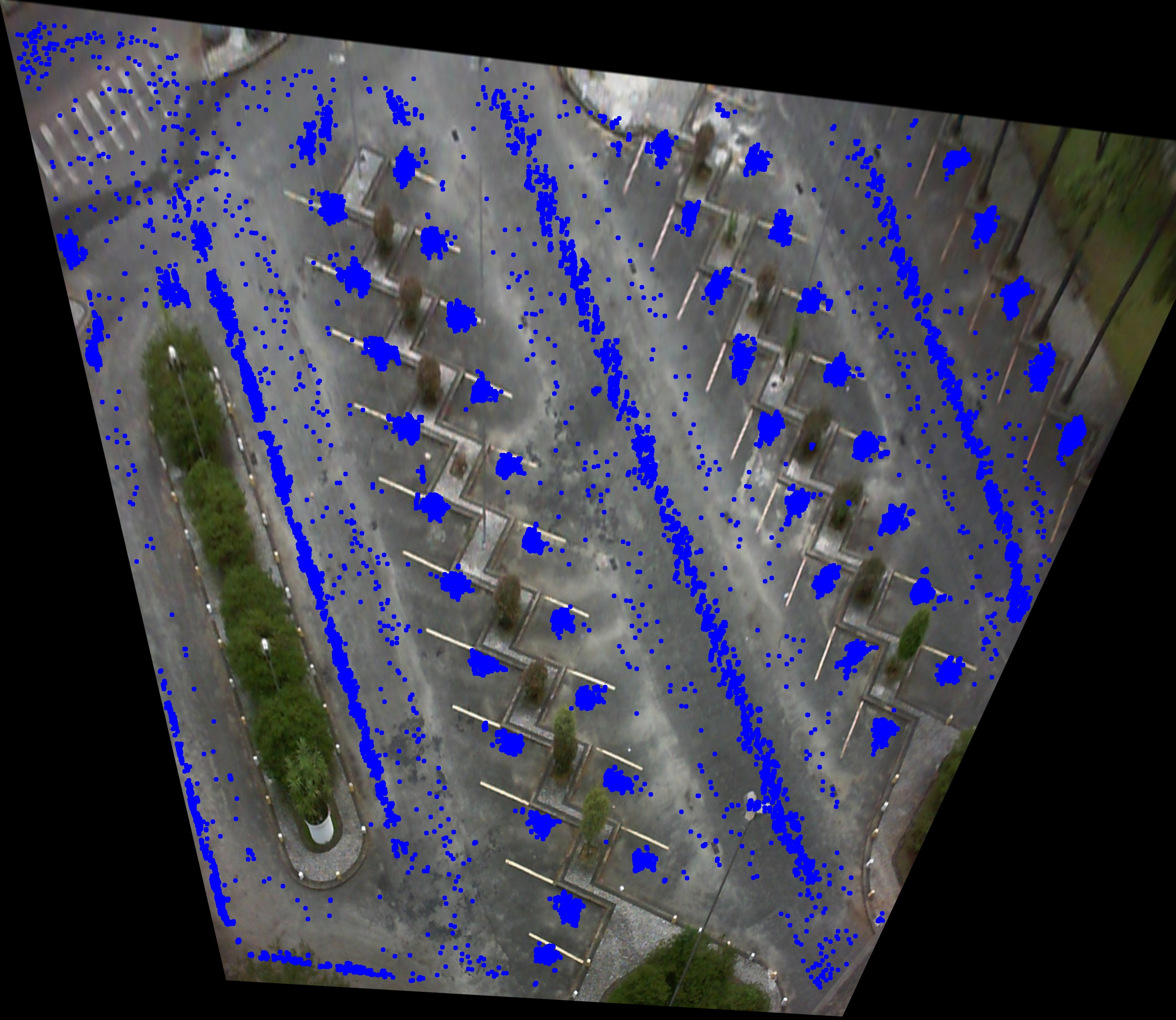

Clustering and Slot Localization

DBSCAN is applied to the transformed BB centers to identify high-density regions corresponding to parking slots. Clusters with high intra-cluster variance are filtered out, as they typically correspond to illegal parking or transient vehicle presence. The number of slots to retain is a user-supplied parameter, easily obtainable from the scene.
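A self-contained sketch of this step, under stated assumptions: DBSCAN is written out in NumPy to keep the example dependency-free (in practice `sklearn.cluster.DBSCAN` does the same job), and the variance filter is interpreted as keeping the `num_slots` clusters with the lowest mean squared distance to their center. The paper's exact filtering rule and its DBSCAN parameters are not given here, so the `eps` and `min_samples` values are illustrative and the helper names `dbscan` and `select_slots` are hypothetical.

```python
import numpy as np


def dbscan(points, eps, min_samples):
    """Minimal DBSCAN: returns one label per point, with -1 marking noise."""
    n = len(points)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    # Neighborhoods include the point itself, as in scikit-learn.
    neighbors = [np.flatnonzero(dist[i] <= eps) for i in range(n)]
    labels = np.full(n, -1)
    cluster = 0
    for i in range(n):
        if labels[i] != -1 or len(neighbors[i]) < min_samples:
            continue  # already assigned, or not a core point
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster
                if len(neighbors[j]) >= min_samples:
                    queue.extend(neighbors[j])  # only core points expand the cluster
        cluster += 1
    return labels


def select_slots(points, labels, num_slots):
    """Keep the num_slots clusters with the lowest intra-cluster variance."""
    stats = []
    for c in sorted(set(labels.tolist()) - {-1}):
        members = points[labels == c]
        center = members.mean(axis=0)
        variance = ((members - center) ** 2).sum(axis=1).mean()
        stats.append((variance, center))
    stats.sort(key=lambda s: s[0])  # tight clusters first; loose ones are likely transient
    return np.array([center for _, center in stats[:num_slots]])


# Synthetic bird's eye view centers: two tight "slot" clusters, one loose
# cluster (e.g. vehicles pausing in an aisle) and one isolated outlier.
grid = np.array([(dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1)], dtype=float)
points = np.vstack([
    grid * 0.2 + [0.0, 0.0],
    grid * 0.2 + [10.0, 0.0],
    grid * 1.0 + [5.0, 10.0],
    [[50.0, 50.0]],
])
labels = dbscan(points, eps=1.5, min_samples=3)
slot_centers = select_slots(points, labels, num_slots=2)
print(slot_centers)
```

Here the loose cluster and the outlier are both discarded, and the two tight clusters survive as slot centers.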

Figure 5: Filtered cluster centers $m_i$ corresponding to detected parking slots.

Cluster centers are then mapped back to the original image coordinates, and a bounding box is defined for each slot based on the mean of the BBs in its cluster.
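One plausible reading of this step is sketched below: back-project the bird's eye view cluster center with the inverse homography, then size the slot box by the mean width and height of the vehicle BBs assigned to that cluster. This construction, the `slot_box` helper, and the pure-scaling homography in the example are assumptions for illustration, not details confirmed by the paper.

```python
import numpy as np


def slot_box(bev_center, member_boxes, H_inv):
    """Return a slot box (x1, y1, x2, y2) in original image coordinates.

    Assumed construction: center the box on the back-projected cluster
    center and give it the mean width/height of the member vehicle BBs.
    """
    x, y, w = H_inv @ np.array([bev_center[0], bev_center[1], 1.0])
    cx, cy = x / w, y / w  # homogeneous -> 2-D image coordinates
    half_w = (member_boxes[:, 2] - member_boxes[:, 0]).mean() / 2
    half_h = (member_boxes[:, 3] - member_boxes[:, 1]).mean() / 2
    return np.array([cx - half_w, cy - half_h, cx + half_w, cy + half_h])


# Example with H = diag(2, 2, 1), so its inverse halves coordinates.
H = np.diag([2.0, 2.0, 1.0])
members = np.array([[80.0, 30.0, 120.0, 70.0],
                    [78.0, 32.0, 118.0, 68.0]])
box = slot_box(np.array([200.0, 100.0]), members, np.linalg.inv(H))
print(box)
```

Averaging box sizes rather than corners keeps the slot centered on the cluster center even when individual vehicles are parked slightly off-center.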

Occupancy Classification

Each detected slot region is cropped and classified as occupied or vacant using a ResNet34 model pretrained on ImageNet and fine-tuned on PKLot and CNRPark-EXT. The classifier head is replaced, and training uses the Adam optimizer with a 1cycle learning rate policy (linear warmup followed by cosine annealing) and cyclical momentum. Training and testing follow the Amato split to ensure comparability with prior work.
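The training schedule can be written as a per-step function. This is a hedged sketch of a generic 1cycle policy with linear warmup and cosine annealing, in which the momentum term (Adam's β1) moves inversely to the learning rate. The defaults `div_factor=25`, `final_div_factor=1e4` and the 0.85 to 0.95 momentum range are borrowed from PyTorch's `torch.optim.lr_scheduler.OneCycleLR`; `pct_start` is arbitrary here, and none of these are the values used in the paper. The function name `one_cycle` is hypothetical.

```python
import math


def one_cycle(step, total_steps, max_lr, pct_start=0.25, div_factor=25.0,
              final_div_factor=1e4, max_mom=0.95, min_mom=0.85):
    """Return (learning_rate, momentum) at `step` of a 1cycle schedule.

    Warmup: lr rises linearly from max_lr/div_factor to max_lr while
    momentum falls from max_mom to min_mom. Annealing: lr follows a cosine
    down to initial_lr/final_div_factor while momentum rises back to max_mom.
    """
    initial_lr = max_lr / div_factor
    final_lr = initial_lr / final_div_factor
    warmup_steps = max(1, int(pct_start * total_steps))
    if step < warmup_steps:
        t = step / warmup_steps
        lr = initial_lr + t * (max_lr - initial_lr)
        mom = max_mom - t * (max_mom - min_mom)
    else:
        t = (step - warmup_steps) / max(1, total_steps - 1 - warmup_steps)
        cos = (1 + math.cos(math.pi * t)) / 2  # 1 -> 0 as t goes 0 -> 1
        lr = final_lr + cos * (max_lr - final_lr)
        mom = max_mom - cos * (max_mom - min_mom)
    return lr, mom


schedule = [one_cycle(s, 100, max_lr=1e-3) for s in range(100)]
print(schedule[0], schedule[25], schedule[99])
```

In a PyTorch training loop, the returned pair would be written into each parameter group's `lr` and the first entry of its `betas` tuple before every optimizer step.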

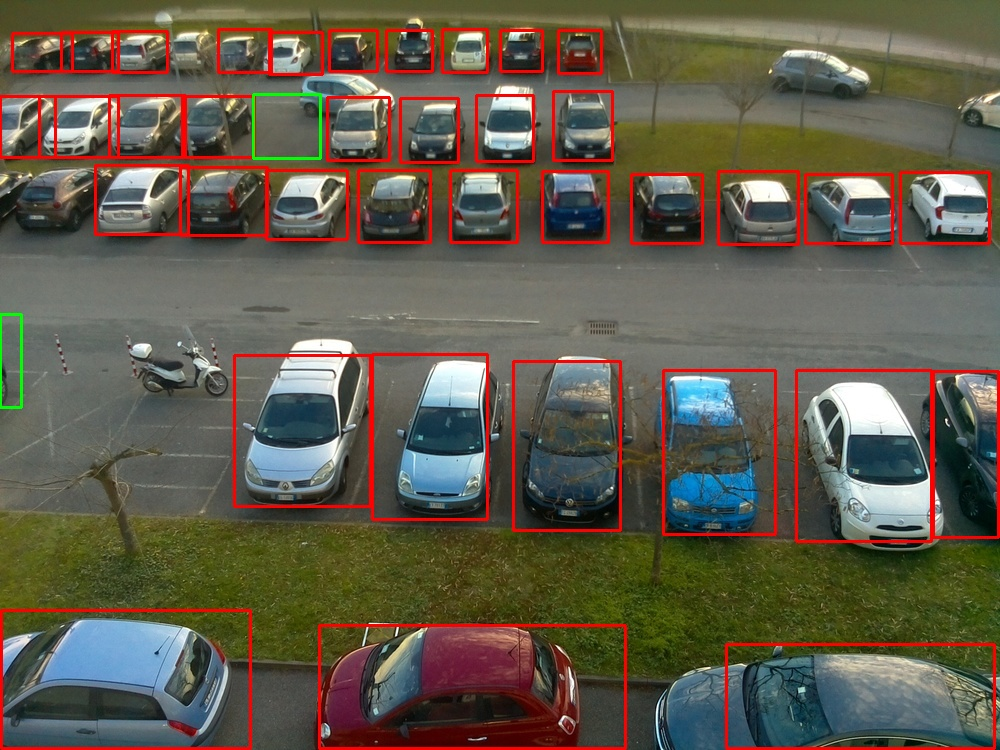

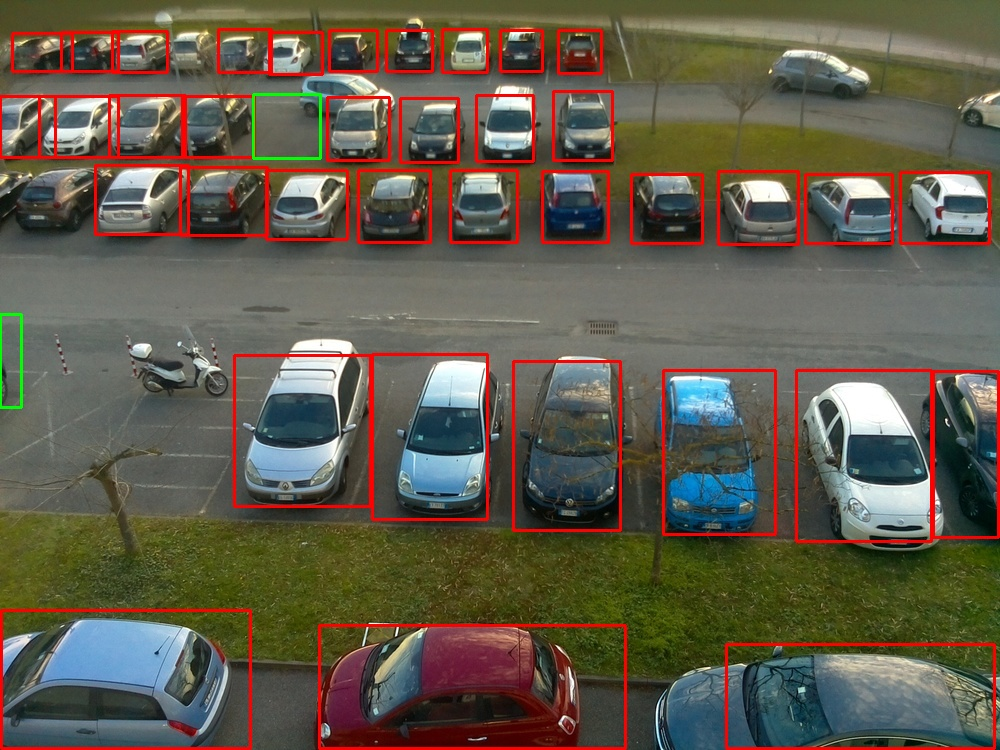

Figure 6: Parking lot with all vehicles properly parked.

Figure 7: Parking lot with several properly parked vehicles.

Experimental Evaluation

Datasets

- PKLot: 12,417 images, 695,900 slot annotations, three camera views, diverse weather.

- CNRPark-EXT: 4,278 images, 144,965 annotations, nine cameras, challenging occlusions.

Figure 8: PKLot PUCPR sunny

Parking Slot Detection

Detection is evaluated using precision and recall, with ground truth slot counts established via manual annotation due to incomplete dataset labels. As the number of input images increases, recall improves significantly, especially for large lots (e.g., PUCPR). For UFPR05, using all images yields 97.73% precision and recall. CNRPark-EXT results show 100% precision for several cameras, with recall limited primarily by occlusions.
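For reference, slot-detection precision is the fraction of detected slots that correspond to a real slot, and recall is the fraction of real slots that were detected. The sketch below counts a detection as correct when it overlaps an unmatched ground-truth slot with IoU of at least 0.5; this greedy matching rule, the threshold, and the helper names are assumptions for illustration, since the exact matching criterion is not stated here.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(detected, ground_truth, iou_thresh=0.5):
    """Greedy one-to-one matching of detected slots to ground-truth slots."""
    matched = set()
    tp = 0
    for d in detected:
        best_score, best_j = 0.0, None
        for j, g in enumerate(ground_truth):
            score = iou(d, g)
            if j not in matched and score > best_score:
                best_score, best_j = score, j
        if best_j is not None and best_score >= iou_thresh:
            matched.add(best_j)  # each ground-truth slot is matched at most once
            tp += 1
    precision = tp / len(detected) if detected else 0.0
    recall = tp / len(ground_truth) if ground_truth else 0.0
    return precision, recall


gt = [(0, 0, 10, 10), (20, 0, 30, 10), (40, 0, 50, 10)]
det = [(1, 0, 11, 10), (20, 1, 30, 11), (100, 100, 110, 110)]
print(precision_recall(det, gt))
```

In this toy case one detection is spurious and one real slot is missed, so both precision and recall are 2/3.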

Key findings:

- Precision and recall exceed 90% with sufficient temporal coverage.

- Robustness to illegal parking and passing vehicles is demonstrated.

- Only the number of visible slots is required as a user input.

Occupancy Classification

Classification is benchmarked against CarNet and mAlexNet. The ResNet34 classifier achieves the highest accuracy in 7/9 PKLot train/test splits, with AUC > 0.99 in all cases. On CNRPark-EXT, the method outperforms mAlexNet and AlexNet by a large margin, achieving >99% accuracy when trained on diverse viewpoints and weather.

Notable results:

- PKLot: 99.98% accuracy (UFPR04), 99.92% (UFPR05), 99.93% (PUCPR) in intra-lot splits.

- CNRPark-EXT: 99.67% accuracy, 0.9981 AUC with full training set.

- Generalization: High robustness to viewpoint and weather changes; >98% accuracy in cross-condition tests.
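The two metrics reported above can be computed as follows. This minimal sketch assumes label 1 means occupied and that the classifier outputs an occupancy score in [0, 1]; AUC is computed through its rank interpretation, the probability that a randomly chosen occupied slot scores higher than a randomly chosen vacant one. The quadratic loop is only meant for small examples; `sklearn.metrics.roc_auc_score` computes the same quantity efficiently.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of slots whose thresholded score matches the label."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def roc_auc(labels, scores):
    """AUC as P(score of occupied > score of vacant), ties counted as 1/2."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6]
print(accuracy(labels, scores), roc_auc(labels, scores))
```

Because AUC is threshold-free, it can stay high (here 8/9) even when a fixed 0.5 threshold misclassifies some slots (here accuracy is 4/6).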

Implementation Considerations

- Computational Requirements: YOLOv5x and ResNet34 are efficient for real-time inference on modern GPUs; edge deployment is feasible with lighter YOLO variants and quantized classifiers.

- Scalability: The method is camera-agnostic, requiring only the number of slots per view. No manual annotation or camera calibration is needed.

- Limitations: Recall may be affected by persistent occlusions or insufficient temporal coverage. The method assumes a fixed camera position during slot detection.

- Deployment: The pipeline can be integrated into existing surveillance infrastructure, providing real-time PGI with minimal operational overhead.

Theoretical and Practical Implications

The APSD-OC framework demonstrates that slot localization can be reliably inferred from vehicle detection statistics over time, obviating the need for manual slot annotation or explicit parking line detection. The use of perspective normalization and density-based clustering generalizes across diverse scenes and camera geometries. The decoupling of slot detection and occupancy classification enables modular upgrades and adaptation to new environments.

Contrary to prior claims, the results show that fully automatic slot detection is feasible and robust, even in the presence of occlusions and non-rectangular layouts, provided sufficient temporal data is available.

Future Directions

Potential extensions include:

- Automatic estimation of slot count via spatial analysis of detection distributions.

- Incorporation of spatial priors (e.g., slot alignment, regularity) to further improve detection in highly irregular lots.

- Temporal smoothing for occupancy classification to handle transient occlusions and improve robustness.

- Edge deployment with model compression and hardware acceleration for large-scale, city-wide PGI systems.

Conclusion

APSD-OC provides a practical, scalable, and robust solution for vision-based parking slot detection and occupancy classification. By leveraging temporal vehicle detection, perspective normalization, and deep learning, the method achieves high accuracy and generalization across challenging real-world datasets. The approach removes the need for manual annotation and is readily deployable in diverse urban environments, supporting the development of intelligent PGI systems and smart city infrastructure.