- The paper presents a systematic meta-survey that organizes over 50 reviews to create a unified taxonomy for XAI methods, concepts, and evaluation metrics.

- It categorizes XAI explanations by task, data type, interpretability features, and evaluation metrics, providing a clear framework to improve AI transparency.

- The work offers actionable insights for deploying both model-agnostic and interactive XAI methods in regulatory and safety-critical applications.

A Comprehensive Taxonomy for Explainable Artificial Intelligence

Explainable Artificial Intelligence (XAI) is a fast-growing research field, and the number and diversity of XAI methods now call for a structured taxonomy to organize them. This paper presents a systematic survey of surveys that culminates in a comprehensive taxonomy of XAI methods, concepts, and evaluation criteria. It aims to unify existing taxonomies and to provide a foundation for targeted, use-case-oriented research in XAI.

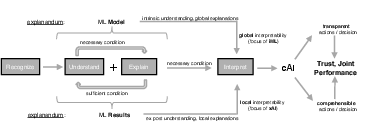

Figure 1: A framework for comprehensible artificial intelligence (Bruckert et al., 2020).

Introduction to Explainable AI

The rapid development of machine learning models has raised significant challenges for transparency and interpretability. Many such models operate as "black boxes" whose internal decision-making is opaque to human observers. XAI seeks to remedy this by providing methods and frameworks that make AI systems more understandable. Legislation such as the GDPR and industry standards such as ISO 26262 have further underscored the need for these capabilities by demanding explainability of algorithmic systems, particularly in safety-critical applications.

Taxonomy Development

The paper identifies over 50 influential surveys and performs a meta-study to extract and synthesize terminologies and concepts into a structured taxonomy. This taxonomy is intended to serve researchers, practitioners, and newcomers to the field by offering a panoramic view of XAI methods, their traits, and metrics for evaluation.

Problem Definition

- Task Type and Data Type: Different XAI methods cater to specific model task types (e.g., classification, regression) and data types (e.g., images, texts), influencing their applicability and operational context.

- Model Interpretability: The taxonomy differentiates between inherently interpretable models, blended models, self-explaining models, and post-hoc explanation methods, each offering varied insights and utility according to the complexity of the system being explained.
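The problem-definition axes above can be read as a lookup key for choosing a method. The following is a minimal sketch of that idea, not the paper's own data model: the class names, the `CATALOG` entries, and the `select` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Interpretability(Enum):
    # The four categories named in the taxonomy summary above.
    INHERENTLY_INTERPRETABLE = "inherently interpretable"
    BLENDED = "blended"
    SELF_EXPLAINING = "self-explaining"
    POST_HOC = "post-hoc"


@dataclass(frozen=True)
class MethodProfile:
    """Hypothetical record placing one XAI method on the taxonomy axes."""
    name: str
    tasks: frozenset
    data_types: frozenset
    interpretability: Interpretability


def select(catalog, task, data_type):
    """Return the names of methods that support the given task and data type."""
    return [
        p.name
        for p in catalog
        if task in p.tasks and data_type in p.data_types
    ]


# Illustrative entries only; a real catalog would be far larger.
CATALOG = [
    MethodProfile(
        "shallow decision tree",
        frozenset({"classification", "regression"}),
        frozenset({"tabular"}),
        Interpretability.INHERENTLY_INTERPRETABLE,
    ),
    MethodProfile(
        "saliency map",
        frozenset({"classification"}),
        frozenset({"image"}),
        Interpretability.POST_HOC,
    ),
]

print(select(CATALOG, "classification", "image"))
```

Keeping task and data type as sets reflects that a single method may apply to several tasks or modalities, so filtering is a membership test rather than an equality check.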

Explanator Properties

- Input and Output: The taxonomy distinguishes XAI methods by the type and scope of the inputs they require and the outputs they produce. Model-agnostic approaches, which need only query access to a model, are contrasted with model-specific ones, with an emphasis on portability across model architectures.

- Interactivity and Constraints: The taxonomy extends to interactive methods that allow iterative feedback and correction from users, placing emphasis on functional and mathematical constraints that influence the design and execution of XAI methods.
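To make the model-agnostic idea above concrete, here is a minimal sketch of permutation feature importance, a well-known model-agnostic technique that is used here as an example and is not taken from the paper. It touches the model only through a `predict` callable, so it does not depend on the architecture. The `black_box` function and the synthetic data are assumptions made for illustration.

```python
import random


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Average increase in error when one feature column is shuffled.

    `predict` is any callable mapping a list of rows to a list of
    predictions; no access to model internals is needed.
    """
    rng = random.Random(seed)
    baseline = mean_squared_error(y, predict(X))
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild the rows with only feature j permuted.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mean_squared_error(y, predict(X_perm)) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def black_box(X):
    # Stand-in model (assumption): uses feature 0 and ignores feature 1.
    return [3.0 * row[0] for row in X]


data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(50)]
y = black_box(X)
scores = permutation_importance(black_box, X, y)
print(scores)
```

Because the stand-in model ignores feature 1, shuffling that column leaves the predictions unchanged and its score is zero, while feature 0 receives a positive score. A model-specific method would instead read internals such as weights or gradients, which ties it to one family of architectures.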

Metrics for Evaluation

- Functionally-Grounded Metrics: Fidelity, coverage, stability, and expressivity are outlined as indicators of the explanator's formal properties. They measure, for example, how closely the explanations agree with the model's actual outputs and how complex the explanations are.

- Human-Grounded Metrics: These metrics assess the interpretability and effectiveness of an explanation from the explainee’s perspective, often involving subjective assessments or user studies to understand the human-machine interaction dynamics.

- Application-Grounded Metrics: Focused on the practical utility of explanations in real-world tasks, these metrics evaluate user satisfaction and the improvement in overall system performance when the system is complemented by XAI techniques.
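Of the three metric families above, only the functionally-grounded ones can be computed without human participants. The sketch below shows one simple way to operationalize two of them, fidelity and stability. Definitions vary across the literature, so these formulas are assumptions made for illustration, not the paper's definitions; the toy `black_box`, `surrogate`, and explainer functions are hypothetical.

```python
import random


def fidelity(black_box, surrogate, inputs):
    """Fraction of inputs on which the surrogate reproduces the model's output."""
    agree = sum(1 for x in inputs if black_box(x) == surrogate(x))
    return agree / len(inputs)


def stability(explain, x, noise=0.01, n_samples=20, seed=0):
    """Mean L1 distance between the explanation of x and the explanations
    of slightly perturbed copies of x. Lower values mean more stable."""
    rng = random.Random(seed)
    reference = explain(x)
    total = 0.0
    for _ in range(n_samples):
        x_noisy = [v + rng.uniform(-noise, noise) for v in x]
        total += sum(abs(a - b) for a, b in zip(reference, explain(x_noisy)))
    return total / n_samples


def black_box(x):
    # Stand-in classifier (assumption).
    return int(x[0] + x[1] > 1.0)


def surrogate(x):
    # Simpler, interpretable approximation of the classifier (assumption).
    return int(x[0] > 0.5)


def constant_explainer(x):
    # Attribution that never changes with the input.
    return [0.5, 0.5]


def input_explainer(x):
    # Attribution that tracks the input directly.
    return list(x)


inputs = [[0.9, 0.9], [0.1, 0.1], [0.6, 0.1], [0.2, 0.9]]
print(fidelity(black_box, surrogate, inputs))
print(stability(constant_explainer, [0.3, 0.7]))
print(stability(input_explainer, [0.3, 0.7]))
```

The constant explainer is perfectly stable yet uninformative, which is one reason these metrics are usually reported together rather than in isolation.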

Implications and Future Directions

The taxonomy serves as a foundational guide for exploring the breadth of XAI methods and their applications. By offering a structured approach to XAI, the paper encourages more targeted and context-sensitive research initiatives. Future developments in AI will likely incorporate more refined methods for transparency, accountability, and user-centric design principles, with XAI playing a pivotal role in shaping trustworthy AI systems.

Conclusion

In summary, this paper organizes the diverse landscape of XAI into a systematic taxonomy that gives a holistic view of existing methods, concepts, and evaluation metrics. The taxonomy helps bridge gaps within the research community and lays the groundwork for future work in interpretable machine learning.