- The paper advocates a 'causality first' perspective for improving safety, interpretability, and robustness in multi-agent reinforcement learning (MARL).

- The paper argues that Structural Causal Models (SCMs) and Multi-Agent Causal Models (MACMs) could help address challenges such as non-stationarity and decentralized control.

- The paper outlines potential applications in traffic control, healthcare, and market dynamics, with the aim of building more resilient real-world multi-agent systems.

Causal Multi-Agent Reinforcement Learning: Review and Open Problems

Introduction

"Causal Multi-Agent Reinforcement Learning: Review and Open Problems" explores the intersection of causality and Multi-Agent Reinforcement Learning (MARL) and promotes a 'causality first' perspective. It argues that incorporating causal inference into MARL can enhance safety, interpretability, and robustness, and can offer theoretical guarantees for emergent behaviors in multi-agent systems. The paper advocates formulating MARL models with explicit causal structure, positing that this could address some of the inherent challenges of multi-agent learning, such as non-stationarity and decentralized control.

Theoretical Foundation: Reinforcement Learning and Causality

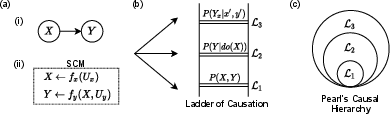

Reinforcement Learning (RL) learns a mapping from states to actions that maximizes cumulative reward, while contending with noise, non-stationarity, and partial observability. Causal inference, by contrast, seeks to establish causal relationships between variables using structural models such as Structural Causal Models (SCMs), in which graphical representations such as Directed Acyclic Graphs (DAGs) depict causal influences. The paper highlights the potential of SCMs to model decision-making processes explicitly, accounting for interventions, counterfactuals, and causal pathways.

Figure 1: A causal Bayesian network illustrating causal assumptions and the direction of influence among the exogenous and endogenous variables of an SCM.
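To make the difference between observing, intervening, and imagining concrete, here is a minimal sketch of a three-variable SCM. The model itself (a confounder `Z` that drives both an action `X` and an outcome `Y`, with Bernoulli noise terms `u_z` and `u_x`) and every function name are hypothetical choices for illustration, not taken from the paper. Probabilities are computed exactly by enumerating the exogenous variables; `do=` implements a hard intervention by overriding a structural equation, and `counterfactual` follows the standard abduction-action-prediction recipe.

```python
from fractions import Fraction
from itertools import product

# Hypothetical SCM (illustration only):
#   exogenous:  U_z ~ Bernoulli(1/2), U_x ~ Bernoulli(1/5), independent
#   endogenous: Z := U_z           (confounder)
#               X := Z XOR U_x     (action; copies Z 80% of the time)
#               Y := X AND Z       (outcome)
P_U = {"u_z": Fraction(1, 2), "u_x": Fraction(1, 5)}  # P(U = 1) for each noise term


def mechanisms(u, do=None):
    """Evaluate the structural equations in topological order.

    `do` maps a variable name to a fixed value; that variable's equation is
    replaced by the constant (a hard intervention, i.e. graph surgery)."""
    do = do or {}
    v = {}
    v["Z"] = do.get("Z", u["u_z"])
    v["X"] = do.get("X", v["Z"] ^ u["u_x"])
    v["Y"] = do.get("Y", v["X"] & v["Z"])
    return v


def exogenous_worlds():
    """Yield every joint setting of the exogenous variables with its probability."""
    names = list(P_U)
    for bits in product((0, 1), repeat=len(names)):
        u = dict(zip(names, bits))
        p = Fraction(1)
        for name, bit in u.items():
            p *= P_U[name] if bit else 1 - P_U[name]
        yield u, p


def matches(values, assignment):
    """True if every variable in `assignment` takes the given value."""
    return all(values[k] == val for k, val in assignment.items())


def prob(query, evidence=None, do=None):
    """P(query | evidence) in the model modified by the intervention `do`."""
    evidence = evidence or {}
    num = den = Fraction(0)
    for u, p in exogenous_worlds():
        v = mechanisms(u, do)
        if matches(v, evidence):
            den += p
            if matches(v, query):
                num += p
    return num / den


def counterfactual(query, evidence, do):
    """Abduction-action-prediction: keep the exogenous worlds that reproduce
    the factual evidence, then evaluate `query` under `do` in those worlds."""
    num = den = Fraction(0)
    for u, p in exogenous_worlds():
        if matches(mechanisms(u), evidence):      # abduction
            den += p
            if matches(mechanisms(u, do), query):  # action + prediction
                num += p
    return num / den


if __name__ == "__main__":
    print(prob({"Y": 1}, evidence={"X": 1}))  # observational: 4/5
    print(prob({"Y": 1}, do={"X": 1}))        # interventional: 1/2
    # Factually X = 0 and Y = 0; would Y have been 1 had X been 1?
    print(counterfactual({"Y": 1}, evidence={"X": 0, "Y": 0}, do={"X": 1}))  # 1/5
```

Conditioning on `X = 1` suggests `Y = 1` with probability 4/5, but intervening gives only 1/2, because part of the observed association flows through the confounder `Z`. An agent that learns only from the observational association would overestimate the effect of its own action.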

Causal Reinforcement Learning

The section on Causal Reinforcement Learning (CRL) identifies areas where causal reasoning benefits RL. Causal inference offers tools for data fusion, transportability, off-policy learning, and counterfactual reasoning. By leveraging SCMs in RL, researchers can address biases arising from distribution shifts and use causal tools for more efficient decision-making, potentially improving policy learning and evaluation through explicit causal pathways.
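As one concrete case of the off-policy bias described above, the sketch below evaluates two actions from logged data in which the logging (behavior) policy chose actions based on an observed context. The counts, variable names, and functions are invented for illustration. The correction shown is standard backdoor adjustment on the context, which assumes the context captures all confounders and that every action was logged in every context; it is a textbook causal-inference tool, not a method taken from the paper.

```python
from collections import Counter
from fractions import Fraction

# Hypothetical logged data from a behavior policy that mostly picks action 1
# in context 1 and action 0 in context 0.
# (context, action) -> (number of rows, number of rows with reward 1)
LOG_COUNTS = {
    (0, 0): (450, 360),
    (0, 1): (50, 45),
    (1, 0): (50, 10),
    (1, 1): (450, 180),
}


def expand(counts):
    """Turn aggregated counts into a list of (context, action, reward) rows."""
    logs = []
    for (c, a), (n, k) in counts.items():
        logs += [(c, a, 1)] * k + [(c, a, 0)] * (n - k)
    return logs


def naive_value(logs, action):
    """E[R | A = action]: mixes contexts in whatever proportions the
    behavior policy happened to produce, so it inherits that policy's bias."""
    rewards = [r for _, a, r in logs if a == action]
    return Fraction(sum(rewards), len(rewards))


def adjusted_value(logs, action):
    """E[R | do(A = action)] = sum_c P(c) * E[R | c, action].

    Backdoor adjustment on the context; assumes the context blocks every
    backdoor path and that `action` appears in every context (positivity)."""
    n = len(logs)
    context_counts = Counter(c for c, _, _ in logs)
    total = Fraction(0)
    for c, n_c in context_counts.items():
        rewards = [r for c2, a, r in logs if c2 == c and a == action]
        total += Fraction(n_c, n) * Fraction(sum(rewards), len(rewards))
    return total


if __name__ == "__main__":
    logs = expand(LOG_COUNTS)
    for action in (0, 1):
        print(action, naive_value(logs, action), adjusted_value(logs, action))
```

With these counts the naive estimate prefers action 0 (37/50 versus 9/20), while the adjusted estimate prefers action 1 (13/20 versus 1/2). The reversal (an instance of Simpson's paradox) comes entirely from how the logging policy distributed actions across contexts.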

The Multi-Agent Case

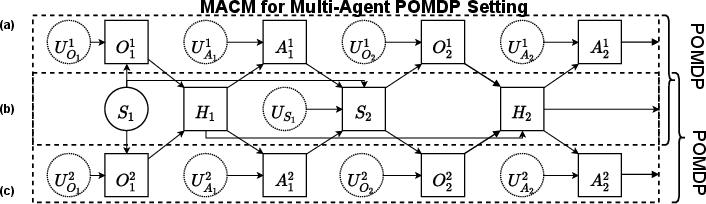

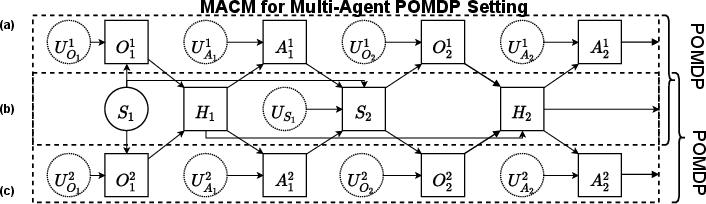

The multi-agent setting introduces complexities beyond those of single-agent environments, such as non-stationarity (each agent's environment changes as the other agents learn) and decentralized control, which demand new solutions. The paper suggests reframing MARL models as Multi-Agent Causal Models (MACMs) that incorporate causal reasoning to tackle these challenges. MACMs allow variables shared among agents to be modeled explicitly, which makes the causal interactions between agents visible and may improve learning dynamics.

Figure 2: A two-agent decision-making system represented as an MACM, showing the interaction dynamics and shared histories of agents in a decentralized setting.
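One way to see why explicitly modeled shared variables can help with non-stationarity is a toy two-agent model. In the hypothetical sketch below (not the paper's MACM formalism), a shared variable `S` records whether both agents "go" at once, and agent 1's reward depends on `S`. The structural equations never change, yet the value agent 1 would estimate for "go" flips sign as agent 2's policy changes. An agent that represents agent 2's action `A2` as an explicit node only needs to update its belief about agent 2's policy, rather than relearn the mechanism.

```python
from fractions import Fraction

# Hypothetical toy model, not the paper's MACM formalism.
#   A1, A2 : actions of agent 1 and agent 2 (0 = wait, 1 = go)
#   S      : shared variable, S := A1 AND A2 (both go at once: a conflict)
#   R1     : agent 1's reward, R1 := A1 - 2 * S


def shared(a1, a2):
    """Structural equation for the shared variable S."""
    return a1 & a2


def reward_1(a1, a2):
    """Structural equation for agent 1's reward; fixed throughout learning."""
    return a1 - 2 * shared(a1, a2)


def marginal_value(a1, p_go_2):
    """Expected R1 for action a1 when agent 2 goes with probability p_go_2.

    An agent that folds agent 2 into 'the environment' only ever sees this
    marginal, so its estimate drifts whenever p_go_2 changes."""
    return (1 - p_go_2) * reward_1(a1, 0) + p_go_2 * reward_1(a1, 1)


def best_response(p_go_2):
    """Agent 1's best action given a belief about agent 2's policy (ties -> wait)."""
    return max((0, 1), key=lambda a1: marginal_value(a1, p_go_2))


if __name__ == "__main__":
    for p in (Fraction(1, 4), Fraction(3, 4)):
        print(f"p_go_2={p}: value(go)={marginal_value(1, p)}, best={best_response(p)}")
```

When agent 2 goes a quarter of the time, "go" is worth 1/2 to agent 1; when agent 2 goes three quarters of the time, it is worth -1/2 and waiting becomes the best response, even though `reward_1` never changed.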

Practical Implications and Future Directions

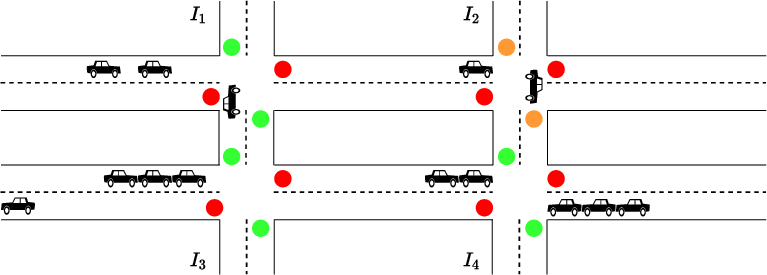

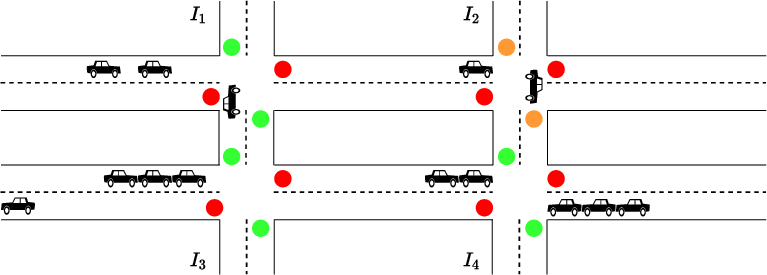

The paper speculates on future applications of causal MARL in areas such as traffic control, healthcare, market dynamics, and advertising. It emphasizes using causal models to tackle non-stationarity and uncertainty, and to foster cooperation through communication protocols and shared knowledge. It also highlights the potential of causal models to support decentralized reasoning and, through cooperation among agents, to improve safety and robustness in critical applications such as healthcare.

Figure 3: Multi-agent interaction in traffic signal control, showcasing decentralization and shared observation among networked intersections.

Conclusion

The integration of causal reasoning into MARL is a promising frontier in AI research, with potential benefits for robustness, efficiency, and interpretability. By formalizing causal interactions in multi-agent settings, researchers may be able to address challenges that current systems struggle with, such as non-stationarity and decentralized control, and enable new applications across a range of domains. The paper calls for further exploration of causal MARL and encourages the development of frameworks that fully exploit the intersection of causality and multi-agent learning.