- The paper demonstrates that PLMs robustly encode simple semantic information while struggling with complex reasoning tasks.

- The study employs both edge and vertex probing techniques, revealing that fine-tuning improves certain alignments yet leaves monotonicity largely unsolved.

- The research shows that more expressive MLP probes extract richer linguistic insights compared to linear classifiers, emphasizing the role of probe complexity.

Introduction

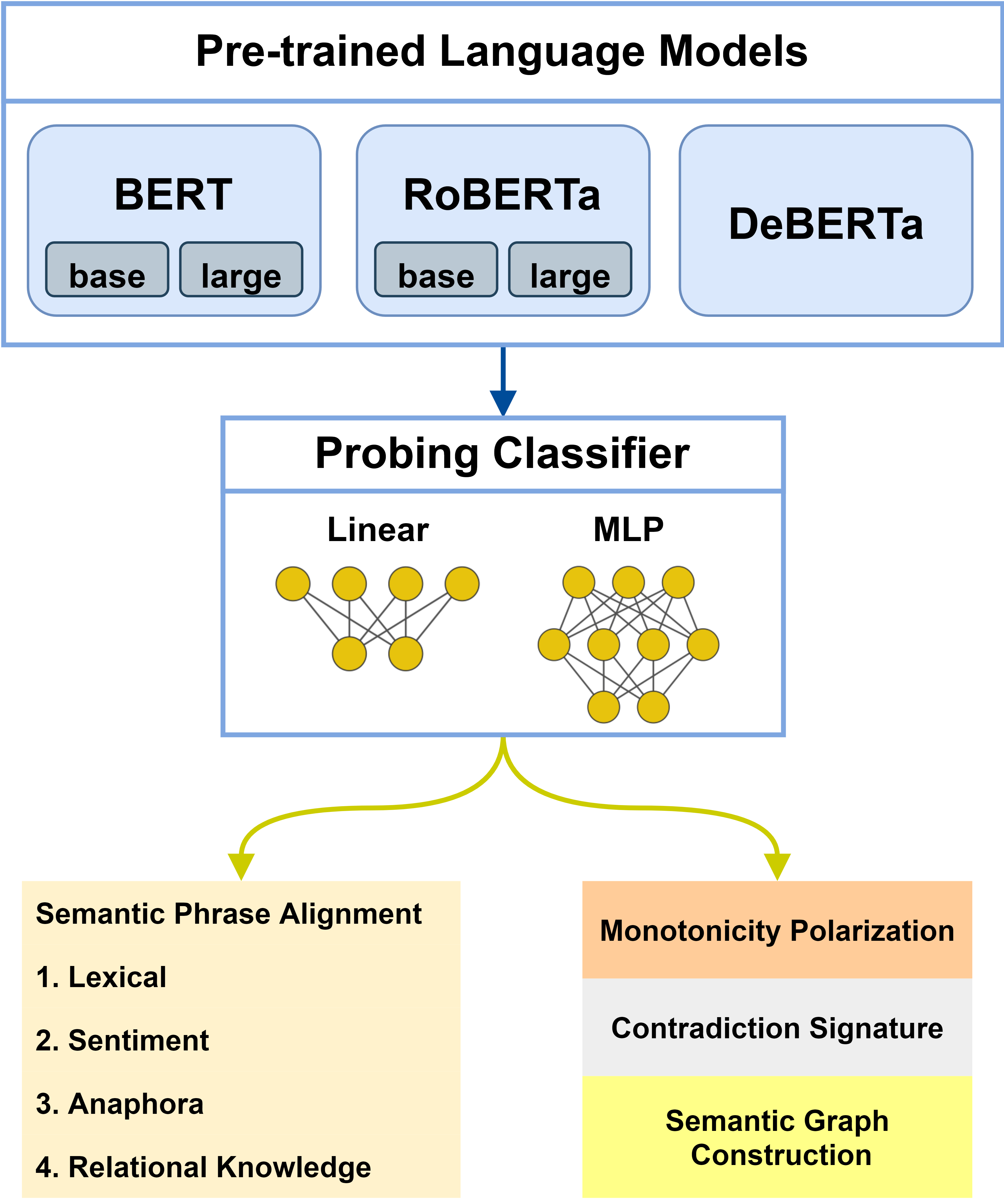

This paper systematically examines whether pre-trained LLMs (PLMs) encode the types of linguistic information necessary to support symbolic logical inference, particularly as used in Natural Language Inference (NLI) systems. Using a carefully designed probing framework, the study evaluates the extent to which contextualized representations produced by models such as BERT, RoBERTa, and DeBERTa encapsulate both simple and complex semantic phenomena needed for logical entailment and contradiction recognition. It further analyzes how fine-tuning for NLI tasks influences the acquisition of missing or weakly encoded linguistic information.

Methodology: Probing Framework Design

The authors employ both edge-probing and vertex-probing techniques, building upon well-established approaches for evaluating linguistic knowledge in contextualized embeddings. Edge probing targets relationships between pairs of spans (e.g., between a concept and its modifier), while vertex probing targets token-level properties (e.g., monotonicity marks).

Probing classifiers are layered atop frozen LLMs—primarily linear or single-hidden-layer MLPs—ensuring the extraction of information is reflective of representational encoding, not additional learning. The probe’s effectiveness is measured via:

Datasets span a range of phenomena, including semantic graphs, monotonicity, lexical alignment, anaphora, sentiment, relational knowledge, and contradiction signatures, recast from major benchmarks and annotated to suit the probing task requirements.

Probing Tasks and Linguistic Phenomena

The probing suite covers phenomena central to symbolic inference:

- Semantic Graph Construction: Detecting connections between sentence constituents (concepts, relations, modifiers).

- Monotonicity Polarization: Assigning monotonicity markers to tokens, crucial for logic-based inference.

- Semantic Alignment: Determining alignment at the lexical, anaphora, sentiment, and relational knowledge levels.

- Contradiction Signature Detection: Identifying phrase pairs responsible for contradictions, integrating syntax and semantics.

These tasks are implemented as either edge- or vertex-probing challenges, with examples and dataset construction rigorously detailed.

Experimental Results

The results demonstrate robust encoding of simple semantic information—such as lexical relations and concept-relation-modifier structures—across all models. Notably, semantic graph construction achieves high accuracy and selectivity, reflecting the models’ strengths aligned with prior syntactic and role-labeling findings.

However, for more complex phenomena requiring higher-order reasoning (e.g., monotonicity, sentiment/relation-based alignment), PLMs encode significantly less information. There is markedly stronger performance for simple phenomena (e.g., SA-Lex, SA-AP) compared to complex inference, even when switching from linear to MLP probes. DeBERTa exhibits somewhat superior performance on contradiction signatures relative to BERT and RoBERTa.

Label-wise analyses further reveal that encoding is often label-dependent, particularly for complex tasks; models perform better on hypothesis tokens than premise tokens in semantic alignment, indicating asymmetric information distribution.

Effect of Classifier Expressiveness

Contrary to certain previous assertions, results indicate that MLP probes can deliver both higher accuracy and similar selectivity as linear probes on semantically rich tasks, supporting arguments for allowing sufficient probe complexity to extract nontrivial knowledge without overfitting to task artifacts.

Mutual information-based analyses confirm that PLMs yield substantial information gain (often exceeding 50%) over word embedding baselines for most tasks. For lexical alignment, this gain exceeds 100%, even though word embeddings are specifically trained for such semantic distinctions. Gains are less pronounced in monotonicity and complex reasoning tasks, reinforcing the notion of representational limitations in these areas.

Fine-tuning on NLI Tasks

Probing models fine-tuned on MultiNLI reveals:

- Significant improvements in encoded information for sentiment and relational-knowledge-based alignment tasks, indicating that certain types of complex semantic knowledge can be acquired (or made more accessible) during fine-tuning.

- Persistently low monotonicity accuracy post-fine-tuning, implying either architectural limitations or a paucity of monotonicity-focused training data in MultiNLI.

Implementation Considerations

Probing and analysis are performed with the Jiant framework and HuggingFace Transformers, ensuring reproducibility and standardization. All PLMs are frozen during probing to ensure that only pre-trained representations are evaluated, not additional training artifacts. Hyperparameters are consistent with best practices (learning rate 1×10−4, batch size 4, epochs 10).

Implications and Future Directions

The findings have several key implications:

- PLMs are effective knowledge bases for simple syntactic and semantic features, but their capacity for representing complex semantic reasoning (e.g., monotonicity, compositional reasoning) is limited. This restricts their direct applicability as drop-in replacements for structured, logic-based inference modules in high-stakes NLI applications.

- Fine-tuning can expose or induce some complex semantic knowledge, but is insufficient for monotonicity, signaling either an architectural deficiency or a systematic dataset gap.

- Classifier choice in probing is nontrivial; restricting to linear probes may mask available information, whereas moderately expressive MLP probes yield a more faithful representation of model content.

These results motivate further research on:

- Probing with more sophisticated classifiers while controlling for overfitting.

- Developing pre-training objectives or architectures explicitly targeting monotonicity and compositional inference.

- Building hybrid systems that selectively query PLMs as linguistic knowledge bases, integrating with symbolic reasoning components to enhance scalability and coverage.

Conclusion

This study rigorously assesses the linguistic information for logical inference encoded within prevalent PLMs, revealing substantial strengths in simple semantic domains and notable limitations in complex reasoning domains. While fine-tuning can mitigate some deficits, core issues with complex inferential phenomena persist, underscoring the continued need for hybrid neural-symbolic integration and targeted architectural innovation. The probing methodology and dataset design outlined here establish a foundation for subsequent interpretability research and practical system augmentation aiming to align PLMs more closely with the requirements of symbolic inference.