Hybrid Human-AI Curriculum Development for Personalised Informal Learning Environments

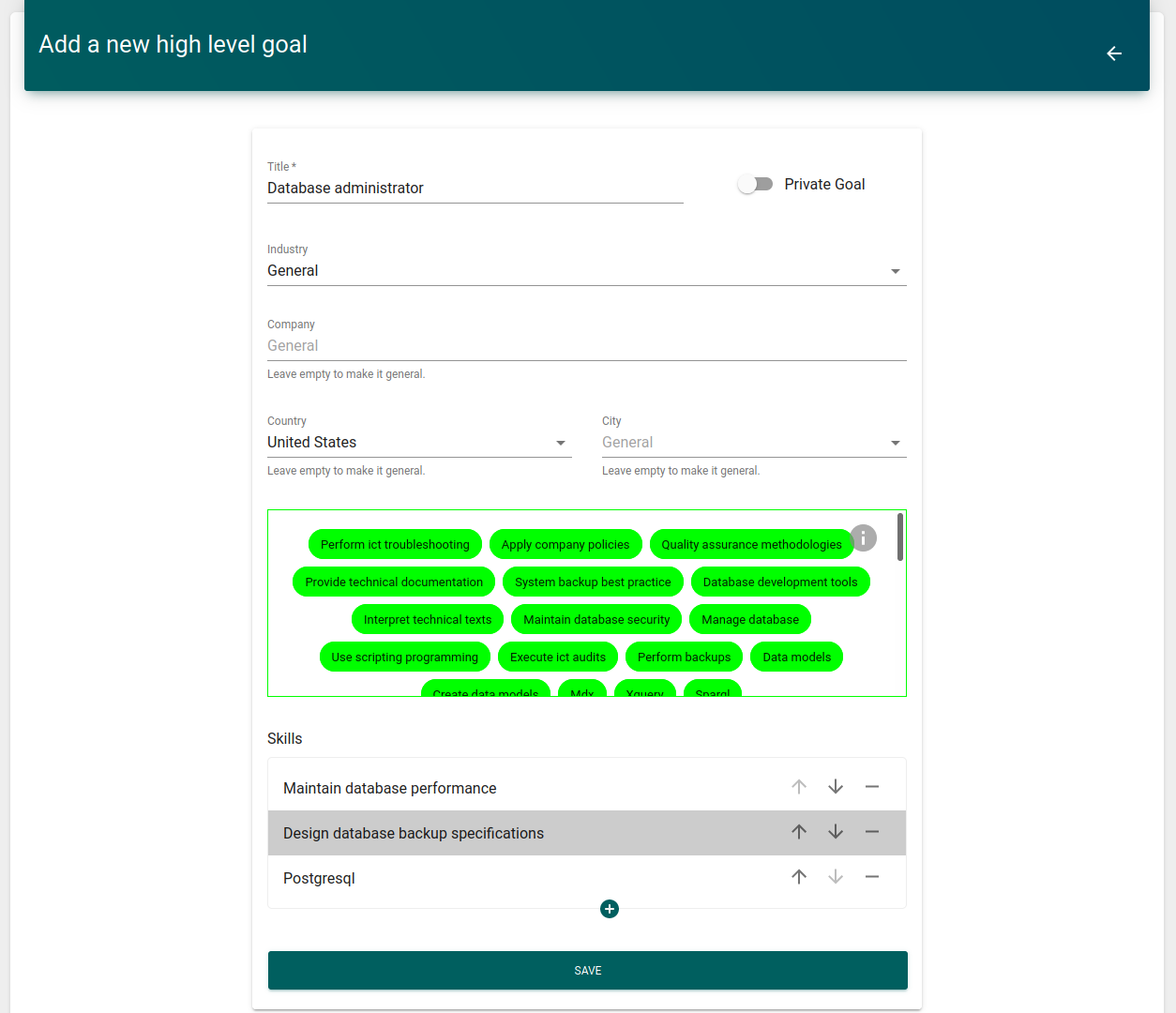

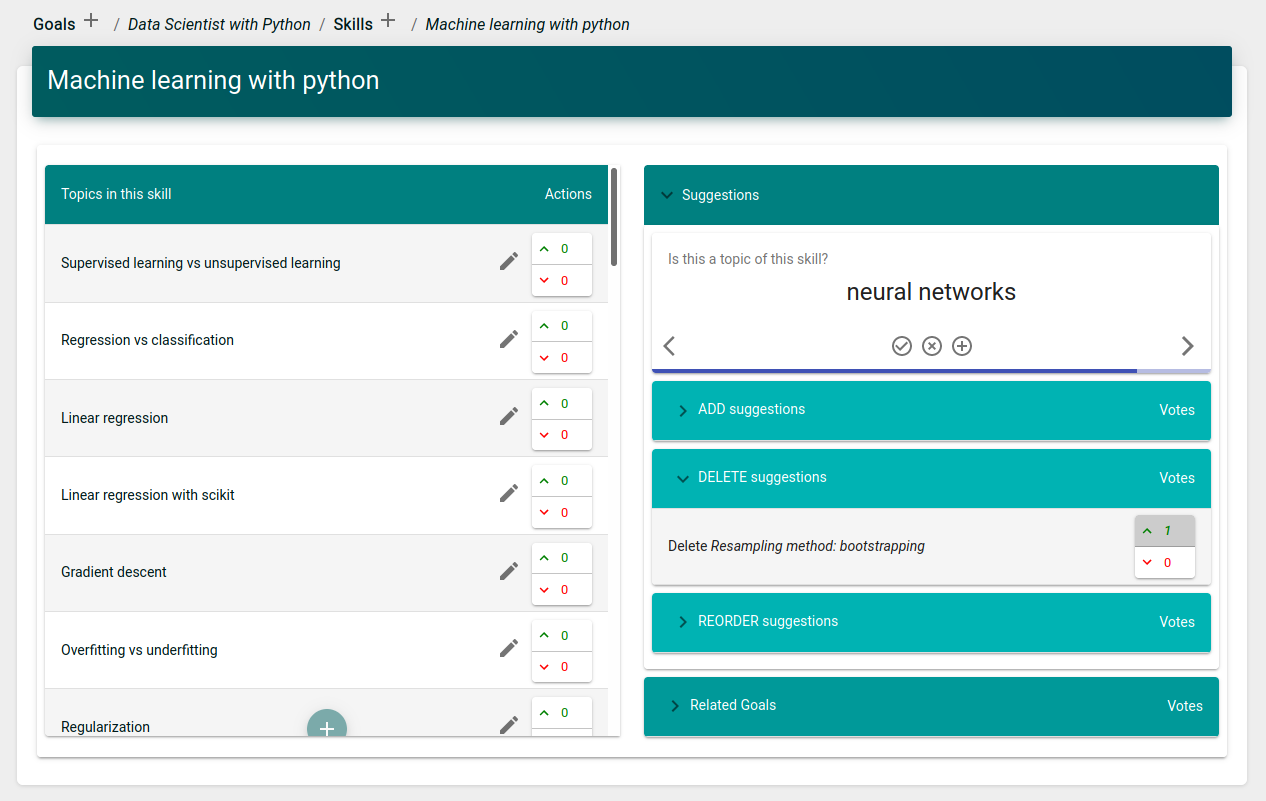

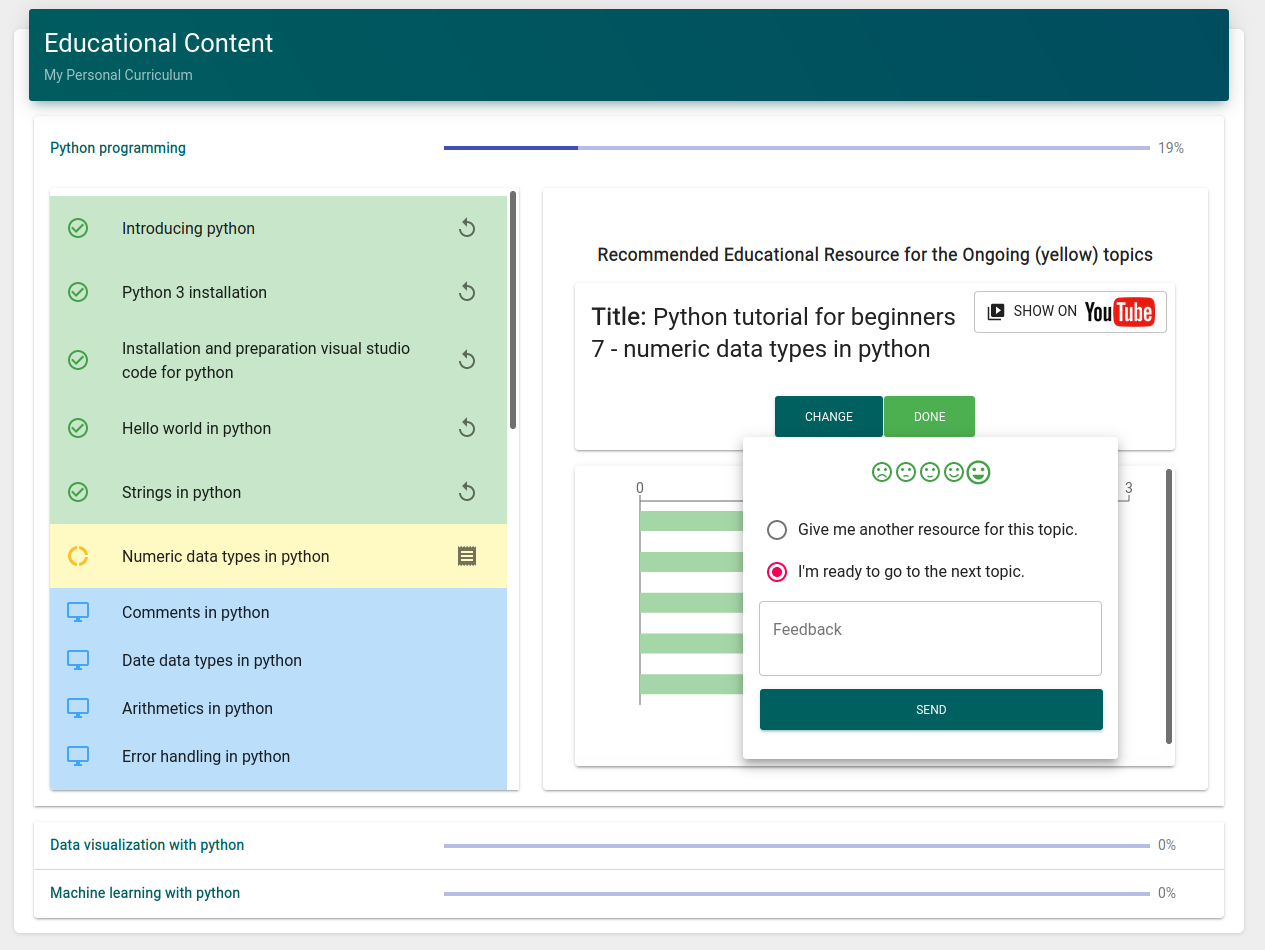

Abstract: Informal learning procedures have changed rapidly in recent decades, driven not only by the advent of online learning but also by changes in what people need to learn to meet their life and career goals. Consequently, online educational platforms are expected to provide personalized, up-to-date curricula to assist learners. In this paper, we therefore propose an AI- and crowdsourcing-based approach to create and update curricula for individual learners. We present the design of a prototype curriculum development system in which contributors receive AI-based recommendations that help them define and update high-level learning goals, skills, and learning topics, together with associated learning content. The system was also integrated into our personalized online learning platform. To evaluate the prototype, we compared experts' opinions with our system's recommendations, obtaining F1-scores of 89%, 79%, and 93% for recommending skills, learning topics, and educational materials, respectively. We also interviewed eight senior-level experts from educational institutions and career consulting organizations. Interviewees agreed that our curriculum development method has high potential to support authoring activities in dynamic, personalized learning environments.
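For readers unfamiliar with the metric reported in the abstract, the following is a minimal sketch of how an F1-score can be computed when a set of recommended items is compared against a set of expert-approved items. The function name and the skill labels are hypothetical, and the paper's exact evaluation protocol is not described here, so this illustrates the metric only, not the authors' procedure.

```python
def f1_score(recommended: set, expert: set) -> float:
    """F1 between a recommended item set and an expert-approved item set."""
    true_positives = len(recommended & expert)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(recommended)  # share of recommendations experts accept
    recall = true_positives / len(expert)          # share of expert items that were recommended
    return 2 * precision * recall / (precision + recall)


# Hypothetical labels, for illustration only (not data from the paper).
recommended_skills = {"python", "statistics", "sql", "machine learning"}
expert_skills = {"python", "statistics", "machine learning", "data visualisation"}

score = f1_score(recommended_skills, expert_skills)
print(f"F1 = {score:.2f}")
```

Here three of the four recommended skills match the expert list and three of the four expert skills are recovered, so precision and recall are both 0.75.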

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of what remains missing, uncertain, or unexplored in the paper, phrased as concrete, actionable items for future research:

- Generalizability beyond data science: evaluate the system across multiple domains (e.g., healthcare, law, K-12 STEM) to assess performance variability and domain-specific challenges.

- Learner impact: run controlled studies to measure effects on learning outcomes, skill acquisition, retention, and employability compared to baseline curricula or alternative personalization systems.

- Comparative ablation: quantify the incremental contribution of each module (ESCO + BLEU matching, TF-IDF playlist topic seeds, LLDA/LDA modeling, metadata-based quality filter) via ablation experiments.

- Validity of using BLEU for occupation-skill matching: compare BLEU-based matching with semantic embedding approaches (e.g., SBERT, multilingual sentence transformers) for cross-lingual and synonym-aware mapping.

- Taxonomy dependence and coverage: assess limitations of ESCO (European bias, coverage gaps) and integrate/compare with O*NET, ISCO, and industry skill taxonomies; evaluate mapping consistency across taxonomies.

- Source bias and licensing: analyze reliance on YouTube and Wikipedia for educational materials (coverage, quality, licensing); incorporate OER repositories (e.g., OpenStax, MERLOT) and verify reuse compliance.

- Transcript quality and multilinguality: evaluate accuracy of YouTube transcripts (ASR errors, missing captions) and extend models to under-resourced languages; measure topic extraction robustness under noisy text.

- Topic modeling advances: compare LDA/LLDA with contextual methods (e.g., BERTopic, Top2Vec, hierarchical topic models) and report coherence, stability, and interpretability across domains and languages.

- Handling cold-start and long-tail topics: design fallbacks for skills/topics with sparse content (fewer than 50 transcripts/200 videos), including expert seeding, curriculum templates, or synthetic augmentation.

- Quality prediction model transferability: validate the metadata-based quality predictor on diverse content types and domains; characterize false-positive/false-negative rates and recalibrate thresholds accordingly.

- Crowdsourcing robustness: test susceptibility to gaming, sybil attacks, collusion, and popularity bias; implement identity verification, reputation decay, and anomaly detection; simulate adversarial scenarios.

- Suggestion review parameter tuning: empirically optimize the approval thresholds (e.g., minimum points, 75% up-vote rate, 1-week window) and assess adaptive policies that react to crowd reliability and workload.

- Expertise weighting and fairness: evaluate whether the point-weighted voting system over-privileges early or popular contributors; introduce expert verification layers or domain-calibrated reputations.

- Duplicate and synonym resolution: implement entity resolution to prevent duplicate or semantically overlapping skills/topics created manually; evaluate canonicalization accuracy and crowd-assisted merges.

- Provenance and versioning: add fine-grained provenance tracking of edits, sources, and model outputs; analyze how version updates affect learner pathways and content consistency over time.

- Privacy and compliance: specify and audit data collection for personalization (behavior history, preferences); ensure GDPR/CCPA compliance, consent management, and privacy-preserving analytics.

- Bias assessment: measure demographic, geographic, and linguistic biases introduced by ESCO and source content; report fairness metrics and mitigation strategies (e.g., diversity-aware recommendations).

- Scalability and performance: profile computational costs (topic modeling, ingestion, updates), latency, and throughput; propose architectural optimizations and cloud/hybrid deployment strategies.

- Real-time labor market integration: operationalize ingestion of job vacancy streams; evaluate timeliness and accuracy of skill demand updates and their impact on curriculum adaptations.

- Evaluation design limitations: expand expert panels (size, diversity), establish inter-rater reliability (e.g., Cohen’s kappa), and use blinded, randomized comparisons against baseline recommenders.

- Learner-centered usability: conduct broader UX studies with diverse learner populations to measure cognitive load, satisfaction, and time-to-content; iterate UI/UX based on measurable improvements.

- Explainability and transparency: develop user-facing explanations for AI recommendations and crowd decisions; measure trust and decision quality with/without explanations.

- Pedagogical sequencing and prerequisites: infer and validate prerequisite structures among skills/topics; compare data-driven sequencing with expert-designed curricula and mastery-learning frameworks.

- Assessment integration: add adaptive diagnostic and summative assessments tied to topics/skills; evaluate how assessment signals improve recommendations and learner progression.

- Internationalization: rigorously test cross-lingual support (non-Latin scripts, locale-specific skills), multilingual auto-completion, and translation consistency; report performance per language.

- Legal and ethical use of content: formalize policies for embedding, rehosting, and reuse of YouTube/Wikipedia resources; implement license detection, filtering, and attribution compliance checks.

- Accessibility and inclusivity: audit recommended materials for accessibility (captions, transcripts, screen-reader support) and inclusive design; include accessibility metadata in quality scoring.

- Reproducibility: release code, models, and evaluation datasets (or synthetic equivalents) with documentation to enable independent replication and benchmarking.

- Content staleness and drift: monitor and detect outdated or drifted materials; design update cadences and automated alerts; measure responsiveness to evolving skills and labor market needs.

- Hierarchical curricula and dependencies: explore hierarchical and graph-based representations (skills→topics→subtopics); evaluate algorithms for reordering and grouping that reflect learning pathways.

- Security and moderation: implement automated checks to prevent malicious content uploads, spam links, or unsafe materials; define human-in-the-loop moderation workflows and escalation paths.

- Non-video and structured content integration: extend beyond videos/articles to include datasets, coding exercises, simulations, and textbooks; evaluate effects on learning efficacy and engagement.
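The evaluation-design item above suggests establishing inter-rater reliability with Cohen's kappa. As a minimal sketch, assuming two experts each assign one categorical label (for example, relevant or not relevant) to the same list of recommendations, kappa can be computed as follows. The function name and the ratings are hypothetical and do not come from the paper.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("Both raters must label the same non-empty item list.")
    n = len(rater_a)
    # Observed agreement: fraction of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: based on each rater's marginal label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical ratings: True means the expert judged the recommendation relevant.
expert_1 = [True, True, False, True, False, False, True, True]
expert_2 = [True, False, False, True, False, True, True, True]

kappa = cohens_kappa(expert_1, expert_2)
print(f"kappa = {kappa:.3f}")
```

In this example the experts agree on six of eight items (0.75 observed agreement), but their label frequencies alone would produce about 0.53 agreement by chance, so kappa is noticeably lower than the raw agreement rate.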
