- The paper introduces a combined CNN-BiLSTM model with an attention mechanism to accurately predict earthquake counts and maximum magnitudes.

- It combines zero-order hold preprocessing, 1D CNN layers, and bidirectional LSTM layers to capture both spatial and temporal dependencies in seismic data.

- The model outperforms traditional methods with significant improvements in RMSE and R², demonstrating superior performance in complex seismic regions.

CNN-BiLSTM Model with Attention Mechanism for Earthquake Prediction

This essay examines the approach proposed in "A CNN-BiLSTM Model with Attention Mechanism for Earthquake Prediction" (arXiv:2112.13444). The study introduces a hybrid model that integrates CNNs, BiLSTMs, and an attention mechanism to predict both the number and the maximum magnitude of earthquakes in regions across mainland China. The paper draws on advances in neural network architectures to capture spatial and temporal dependencies in seismic data, with the aim of improving earthquake prediction.

Hybrid Model Architecture

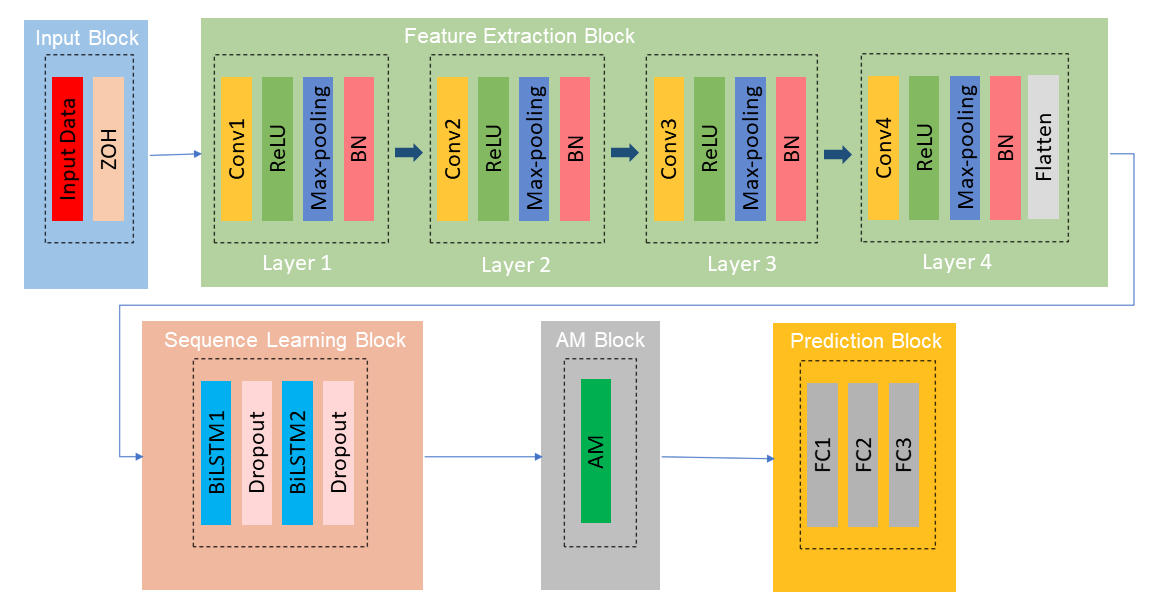

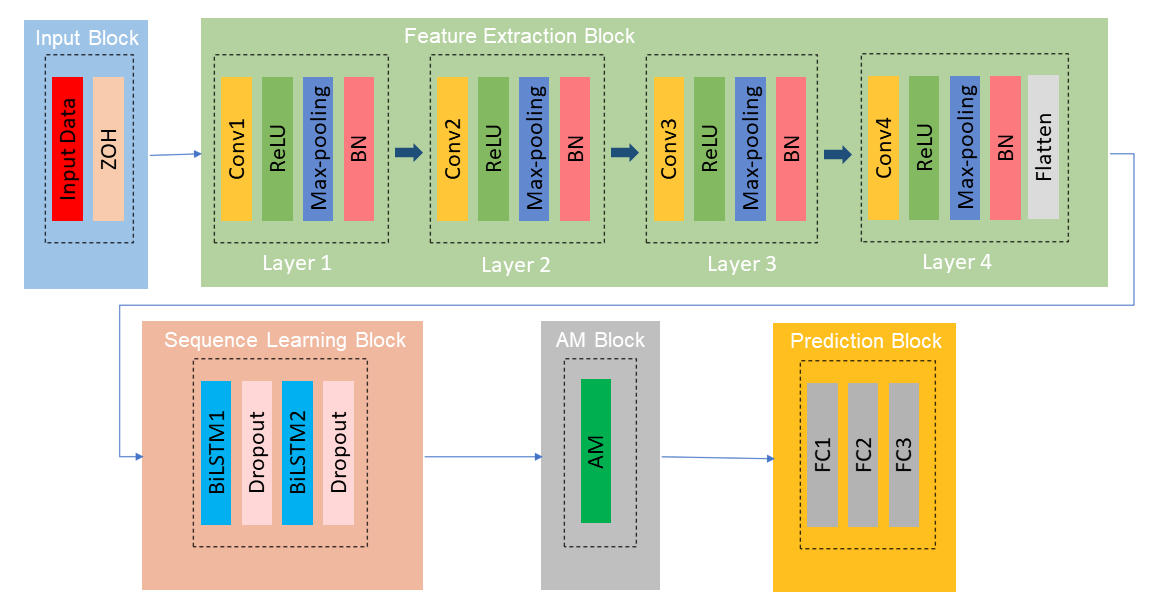

The proposed method combines CNNs for spatial feature extraction, BiLSTM layers for temporal sequence learning, and an attention mechanism (AM) to prioritize significant features. The architecture comprises five major components: the input block, feature extraction block, sequence learning block, attention block, and prediction block (Figure 1).

Figure 1: The architecture of the proposed method.

- Input Block: Takes historical earthquake data preprocessed with Zero-Order Hold (ZOH), which fills each zero value with the preceding non-zero entry so that significant features are emphasized.

- Feature Extraction Block: A multi-layer 1D CNN extracts spatial features, with batch normalization and ReLU activations used to improve training stability and convergence speed.

- Sequence Learning Block: Comprises BiLSTM layers that read the input sequence in both the forward and backward directions, so each time step is represented with context from both earlier and later parts of the sequence.

- Attention Block: Employs the attention mechanism to dynamically weight features by their relevance to the prediction, focusing the model on the most informative parts of the input sequence.

- Prediction Block: Includes fully connected layers that synthesize the attention-weighted features into output predictions for earthquake number and magnitude.
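To make the attention block more concrete, the following is a minimal sketch of attention-weighted pooling over a sequence of hidden states, such as those a BiLSTM would produce. It is an illustration under stated assumptions rather than the paper's exact formulation: the dot-product scoring, the `query` vector, and the names `softmax` and `attention_pool` are hypothetical and not taken from the source.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(hidden_states, query):
    """Weight each time step by softmax(h_t . query) and sum the results.

    hidden_states: list of T vectors (e.g. BiLSTM outputs), each of length d.
    query: hypothetical learned vector of length d (an assumption, not from the paper).
    Returns (weights, context), where context is a single vector of length d.
    """
    # One relevance score per time step (dot product with the query).
    scores = [sum(h_i * q_i for h_i, q_i in zip(h, query)) for h in hidden_states]
    weights = softmax(scores)
    d = len(query)
    # Weighted sum of the hidden states, feature by feature.
    context = [sum(w * h[j] for w, h in zip(weights, hidden_states)) for j in range(d)]
    return weights, context

# Toy example: three time steps, two features each.
H = [[0.1, 0.0], [0.9, 0.2], [0.3, 0.1]]
q = [2.0, 1.0]
weights, context = attention_pool(H, q)
```

In this toy example the second time step receives the largest weight because it has the highest score against `query`. In a full model, the resulting `context` vector would be passed on to the fully connected prediction layers.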

Data Preprocessing and Model Training

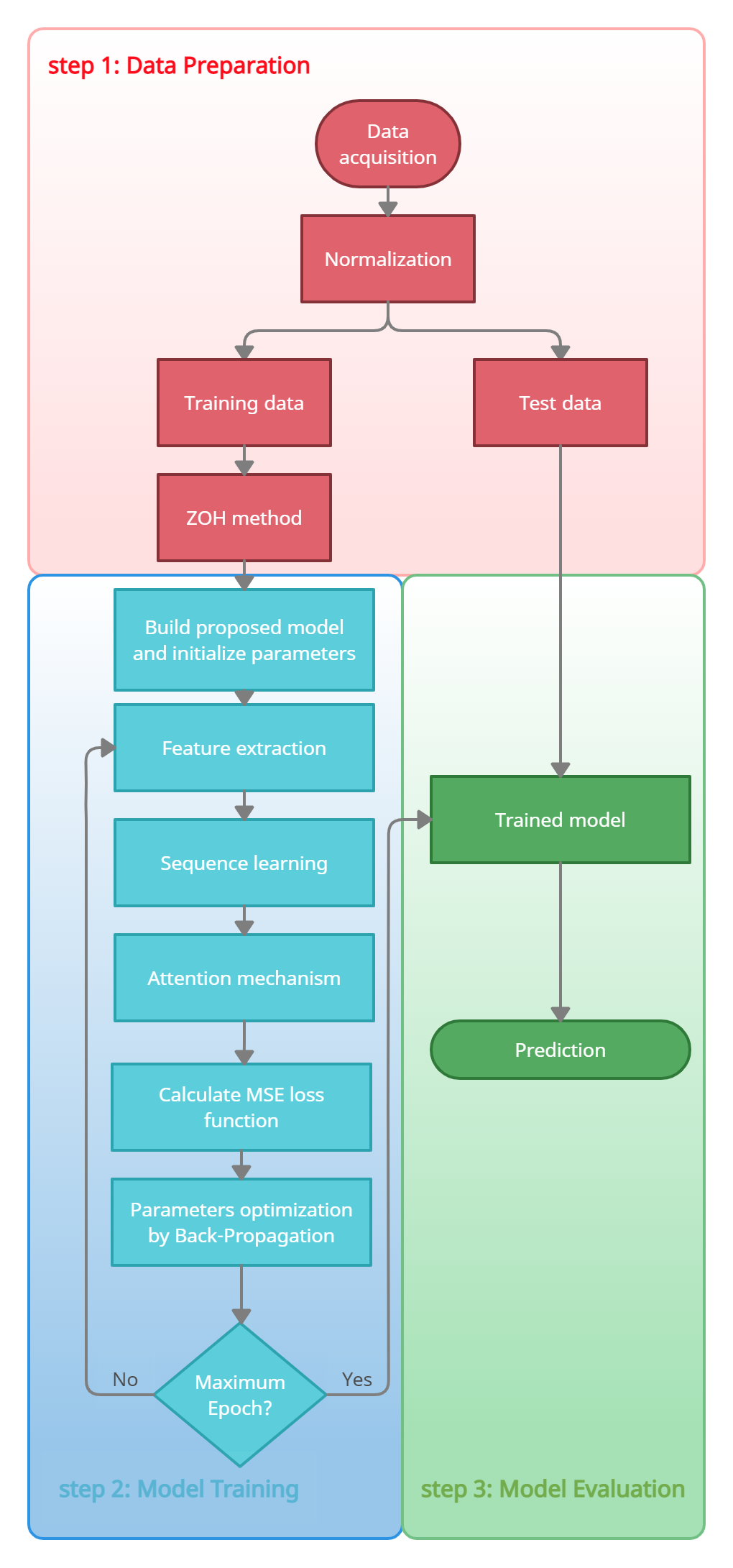

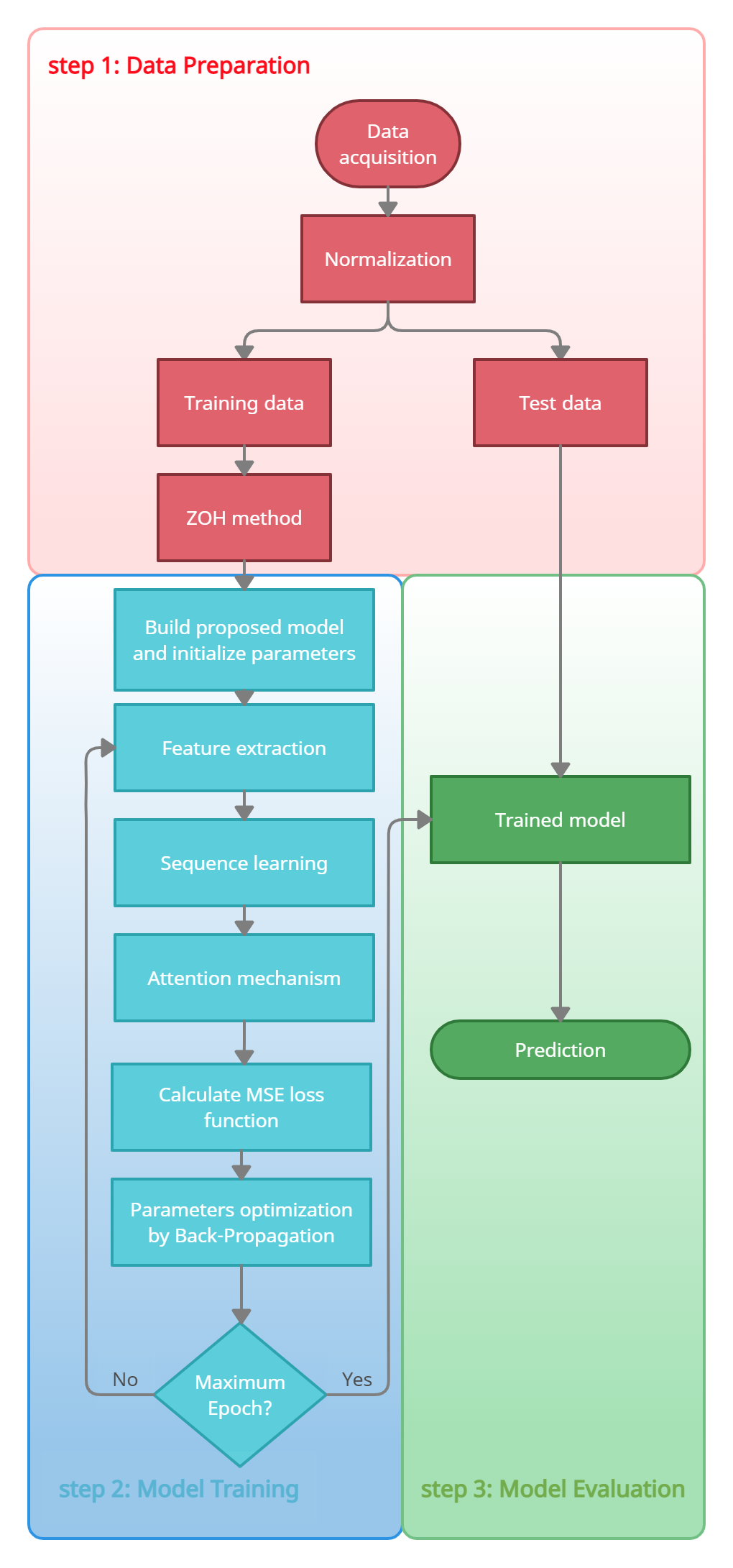

The authors emphasize the critical role of preprocessing in neural network performance, utilizing a ZOH technique to mitigate the influence of noise in zero-valued data points. This preprocessing step ensures that spatial and temporal patterns are preserved. Model training is optimized using Adam as the optimizer, with a learning rate that decays from 0.001 to 0.0001 over 150 epochs.
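The zero-order hold step described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function name `zero_order_hold` and the sample data are hypothetical, and leaving leading zeros unchanged is an assumption, since the summary does not say how zeros before the first non-zero value are handled.

```python
def zero_order_hold(series):
    """Replace each zero with the most recent non-zero value.

    Leading zeros (before any non-zero value) are left unchanged.
    This is an assumption; the source does not specify that case.
    """
    filled = []
    last = 0
    for x in series:
        if x != 0:
            last = x  # remember the latest non-zero observation
        filled.append(last)
    return filled

# Hypothetical earthquake counts per time window.
counts = [0, 3, 0, 0, 5, 0, 2]
print(zero_order_hold(counts))  # [0, 3, 3, 3, 5, 5, 2]
```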

Figure 2: The flow chart of the proposed method.
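The training description mentions a learning rate that decays from 0.001 to 0.0001 over 150 epochs but, as summarized here, does not state the shape of the decay. The sketch below assumes a geometric (exponential) schedule purely for illustration; the function name `exponential_lr` and its parameters are hypothetical, and the authors may have used a different schedule.

```python
def exponential_lr(epoch, total_epochs=150, lr_start=1e-3, lr_end=1e-4):
    """Learning rate at a 0-indexed epoch under an assumed geometric decay.

    The rate equals lr_start at epoch 0 and lr_end at the final epoch.
    The geometric shape is an assumption, not taken from the paper.
    """
    if total_epochs < 2:
        return lr_start
    ratio = lr_end / lr_start
    return lr_start * ratio ** (epoch / (total_epochs - 1))

# One learning rate per epoch for a 150-epoch run.
schedule = [exponential_lr(e) for e in range(150)]
```

A schedule like this could be supplied per epoch to an Adam optimizer. A step-wise or linear decay would meet the same start and end values equally well.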

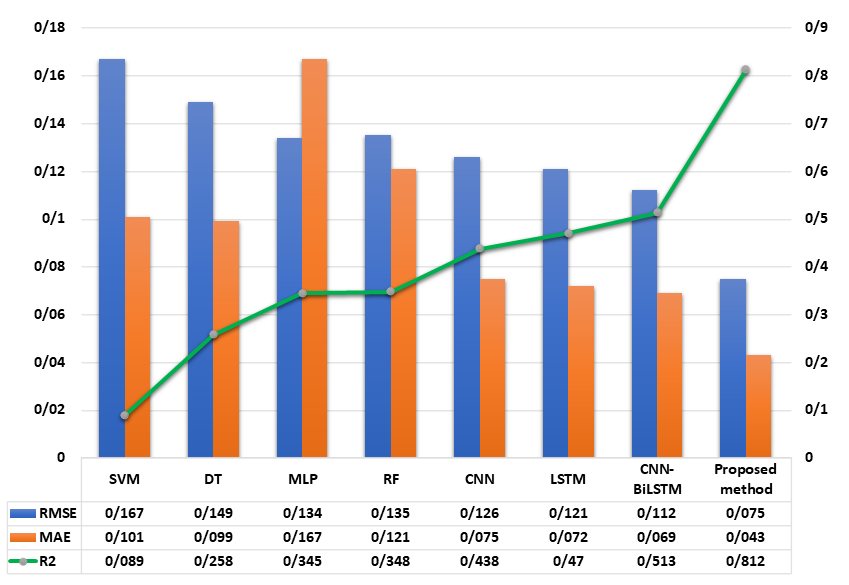

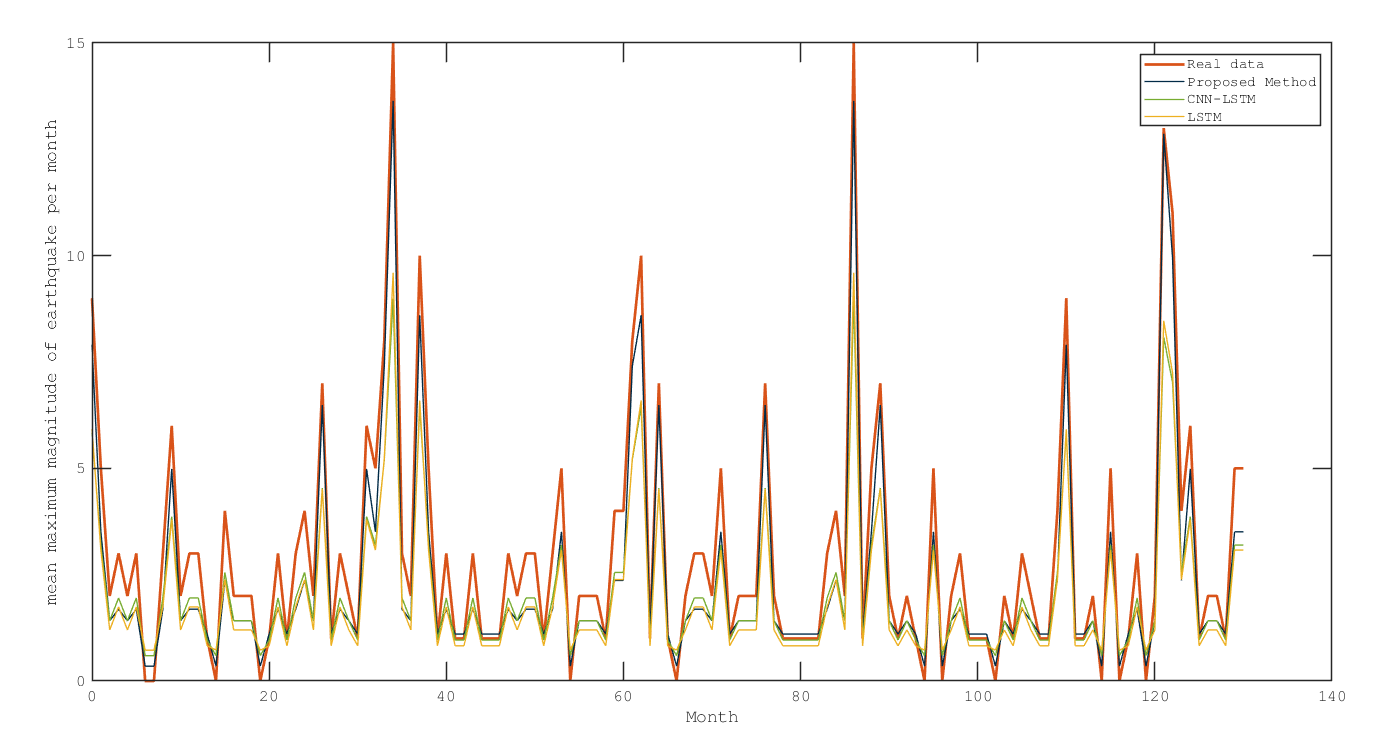

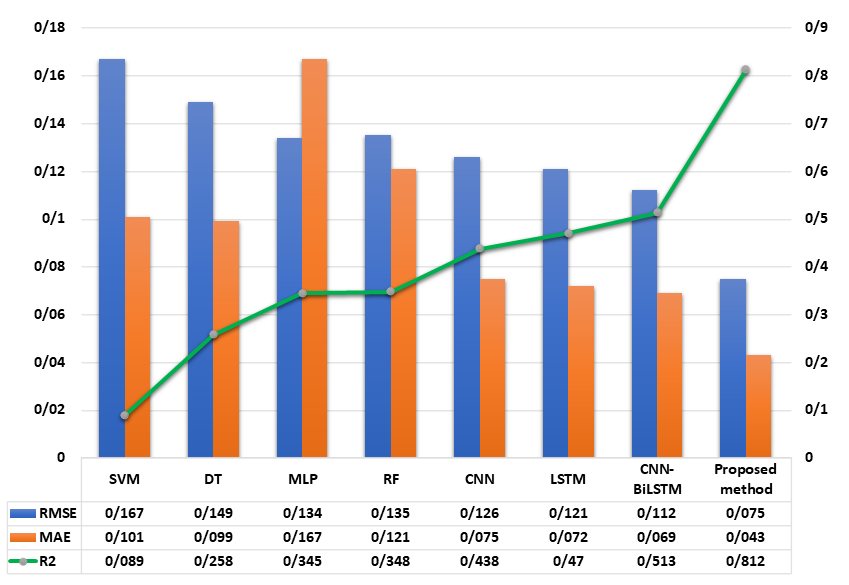

The model's performance was evaluated against traditional and state-of-the-art machine learning techniques, including SVM, RF, MLP, CNN, and LSTM, as well as hybrid CNN-BiLSTM architectures. The evaluation metrics RMSE, MAE, and R² were used to assess model efficacy, with the proposed hybrid model showing substantial improvements over the comparison methods.

Figure 3: Comparison of the mean of the three evaluation metrics between the comparison methods and the proposed method for the number of earthquakes.
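For reference, the three evaluation metrics can be computed as follows. This is a small self-contained sketch using the standard definitions of RMSE, MAE, and R². The sample values are made up for illustration and are not results from the paper.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Made-up earthquake counts: observed vs. predicted.
y_true = [2, 4, 6, 8]
y_pred = [3, 4, 5, 8]
scores = (rmse(y_true, y_pred), mae(y_true, y_pred), r2(y_true, y_pred))
```

Lower RMSE and MAE indicate smaller errors, while an R² closer to 1 indicates that the predictions explain more of the variance in the observed values.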

Conclusion

The integration of CNN-BiLSTM with an Attention Mechanism within the proposed framework offers a comprehensive solution to the complex problem of earthquake prediction. By simultaneously capturing spatial and temporal dependencies and enhancing feature focus, the model delivers state-of-the-art performance in predicting seismic events. Future research could explore further refinement of attention mechanisms and the integration of additional geophysical features to improve predictive accuracy across different geologic settings.