- The paper introduces a hybrid method that integrates semantic landmarks into visual SLAM to enhance environmental understanding.

- The method improves pose estimation accuracy and reduces trajectory errors by up to 68% in dynamic settings.

- A ROS package implementation demonstrates real-time performance and expanded SLAM utility for advanced robotic applications.

An Online Semantic Mapping System for Extending and Enhancing Visual SLAM

Introduction

The paper "An Online Semantic Mapping System for Extending and Enhancing Visual SLAM" (2203.03944) proposes integrating semantic mapping into visual SLAM to address limitations of traditional systems. Conventional SLAM systems rely predominantly on local geometric features, which yields purely geometric maps and restricts functionality to basic navigation and obstacle detection. To overcome these limitations, the authors introduce a hybrid method that combines the precision of geometric features with the reliability of deep learning-based semantic landmarks. The method augments environmental perception for higher-level tasks and also improves pose estimation accuracy.

Methodology

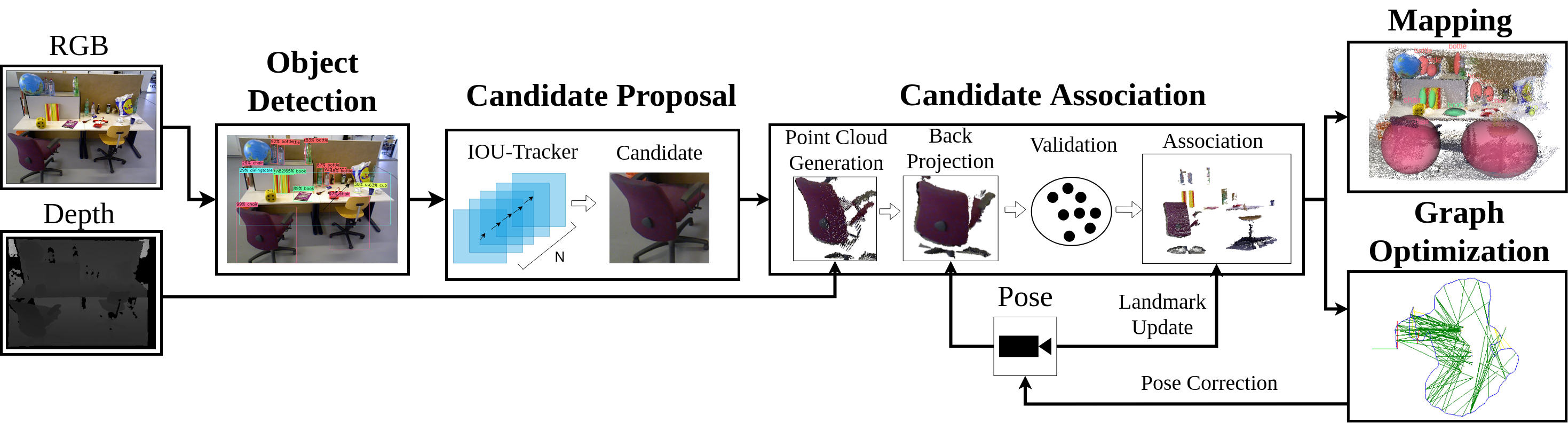

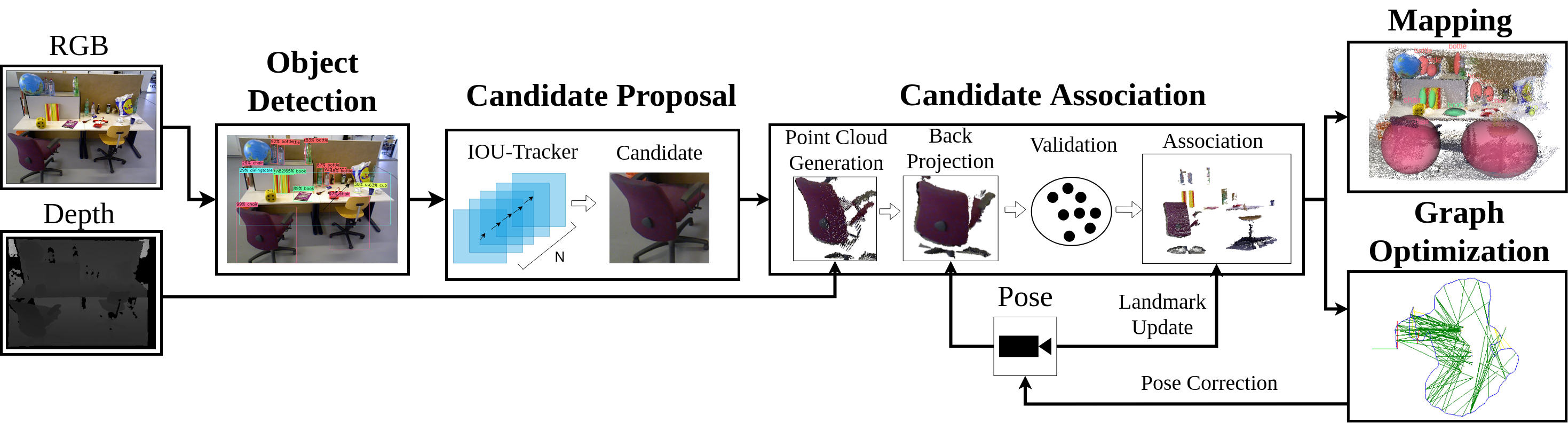

The proposed method is a sequential pipeline that enriches maps with semantic data. First, objects are detected in 2D images and tracked with an adapted Intersection over Union (IoU) tracker, forming "tracklets" that help handle ambiguous data and reduce the influence of dynamic objects. The tracklets yield landmark candidates, which are validated before being integrated into the world frame as semantic constraints, which in turn improves pose estimation accuracy. These added constraints make SLAM more robust, as shown by lower error rates across several datasets.

Figure 1: Overview of the proposed method, detailing the process from object detection to semantic integration in map construction.
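To make the tracking step concrete, the following is a minimal sketch of a generic greedy IoU tracker that groups per-frame detections into tracklets. It is not the authors' adapted tracker: the names `Tracklet` and `update_tracklets`, the same-label matching rule, and the `iou_threshold` value of 0.5 are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Axis-aligned box as (x_min, y_min, x_max, y_max) in pixels.
Box = tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


@dataclass
class Tracklet:
    """Hypothetical container: one object class followed over consecutive frames."""
    label: str
    boxes: list[Box] = field(default_factory=list)


def update_tracklets(active, detections, iou_threshold=0.5):
    """Extend active tracklets with the detections of one new frame.

    detections is a list of (label, box) pairs. Each tracklet greedily takes
    the same-label detection that best overlaps its latest box. Tracklets
    without a good match are closed; leftover detections start new tracklets.
    Returns (still_active, finished).
    """
    unmatched = list(detections)
    still_active, finished = [], []
    for tracklet in active:
        last_box = tracklet.boxes[-1]
        candidates = [d for d in unmatched if d[0] == tracklet.label]
        best = max(candidates, key=lambda d: iou(last_box, d[1]), default=None)
        if best is not None and iou(last_box, best[1]) >= iou_threshold:
            tracklet.boxes.append(best[1])
            unmatched.remove(best)
            still_active.append(tracklet)
        else:
            finished.append(tracklet)
    for label, box in unmatched:
        still_active.append(Tracklet(label, [box]))
    return still_active, finished
```

In a sketch like this, finished tracklets with enough observations would be the natural landmark candidates, while very short ones could be discarded as ambiguous. The validation criteria the paper actually applies are not described in this summary.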

Results

The authors implement their system as a modular Robot Operating System (ROS) package that interfaces with graph-based SLAM methods. Their results show substantial improvements in pose estimation accuracy while the system runs in real time. Tests on the TUM RGB-D and KITTI datasets show lower trajectory errors, with reductions of up to 68% in challenging dynamic environments. The system's ability to exclude dynamic objects from the semantic landmarks and to incorporate robust detections of static landmarks underlines its potential for complex, real-world applications.

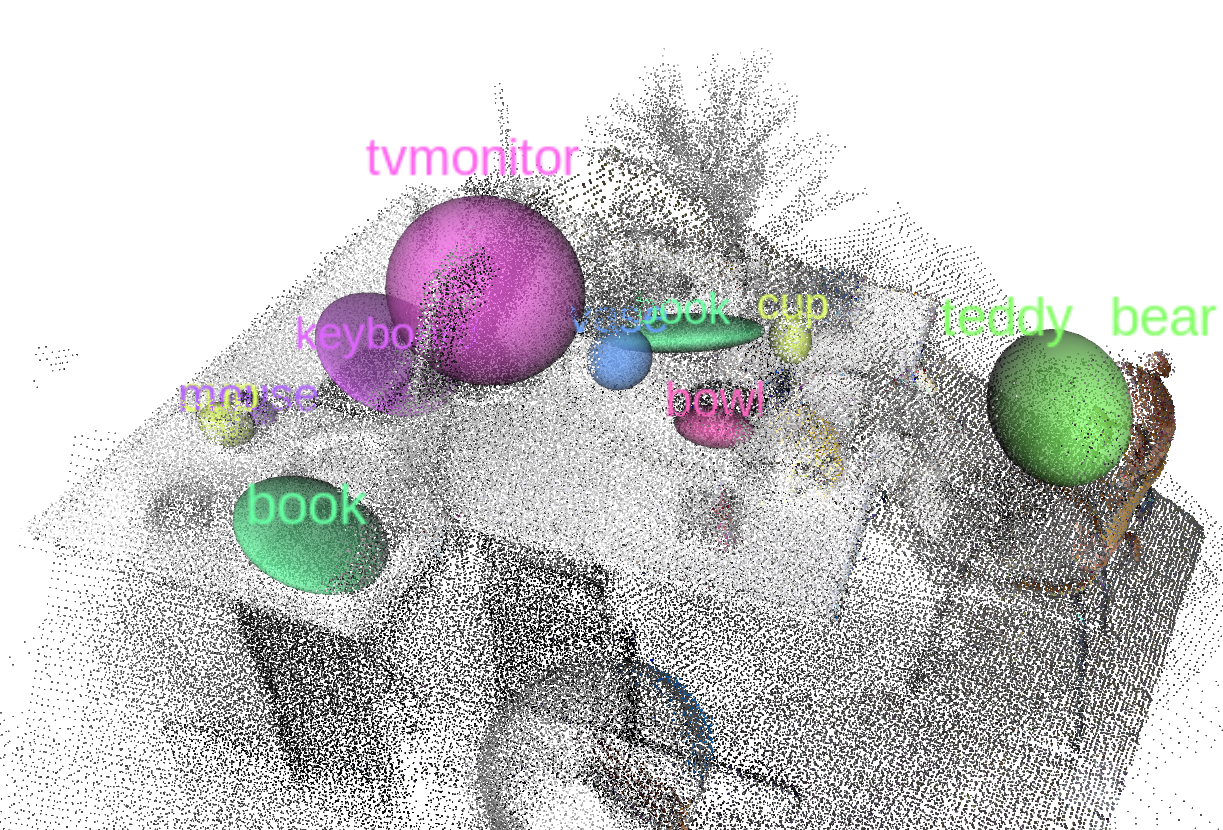

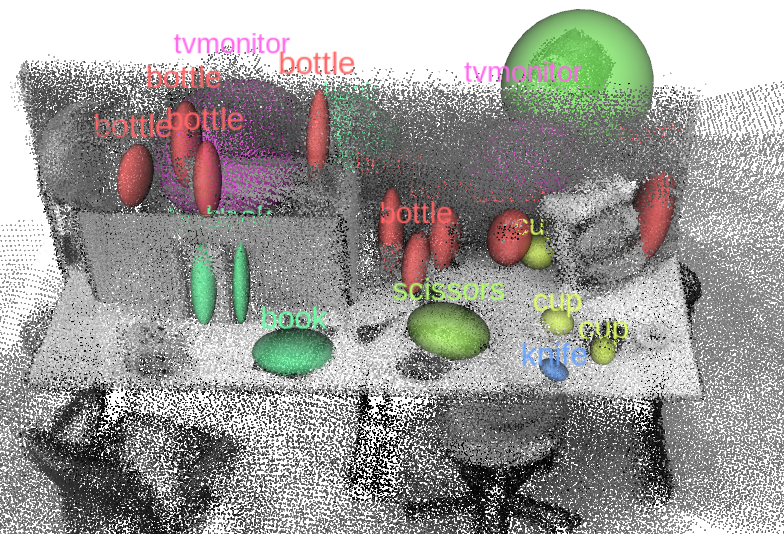

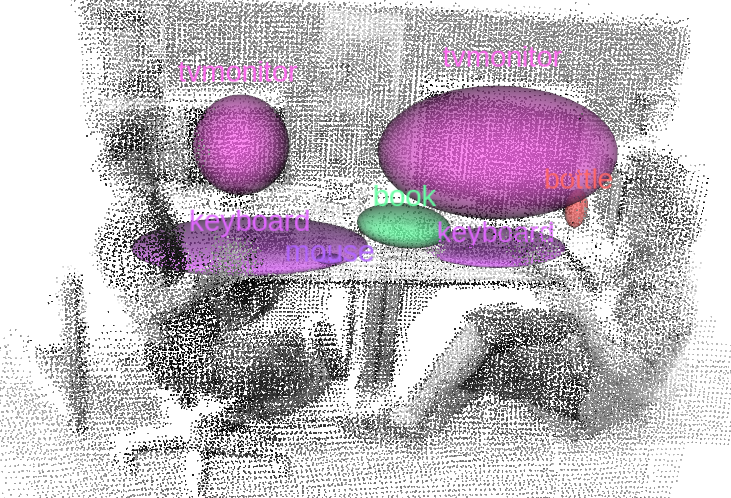

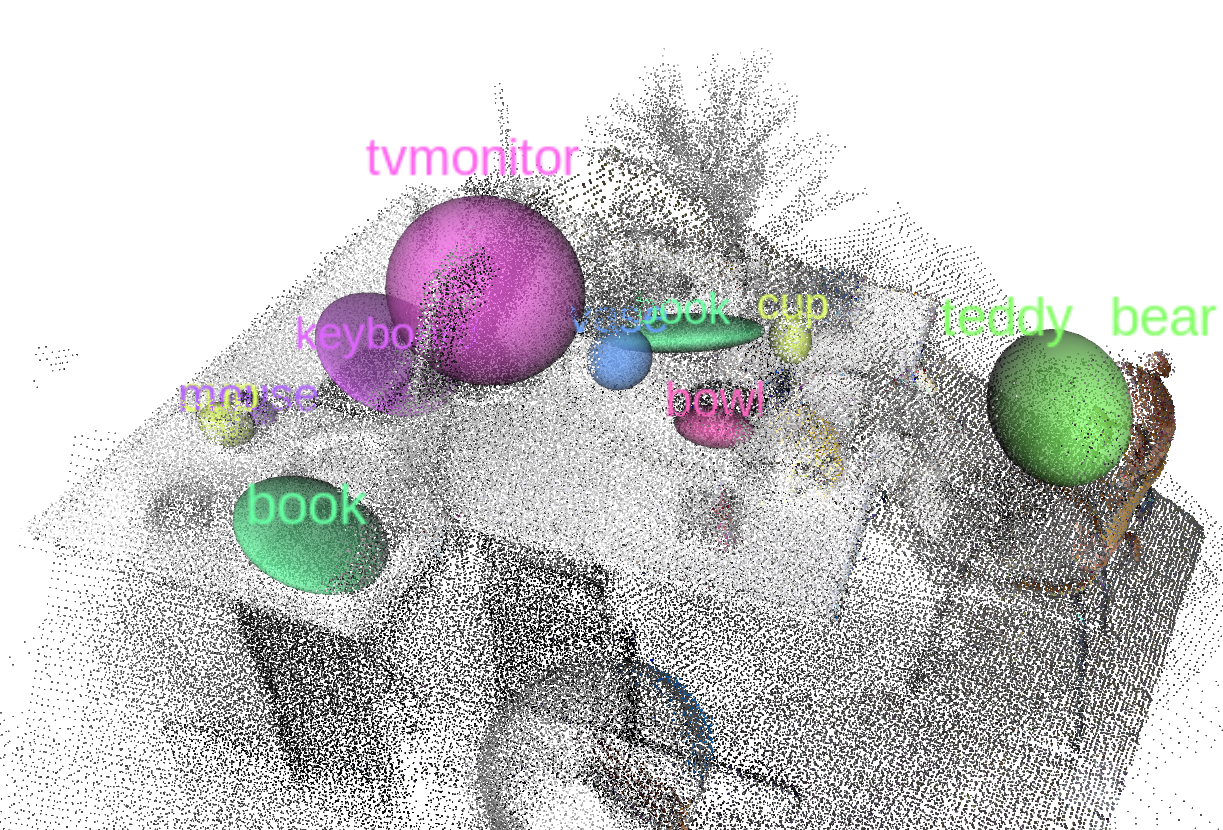

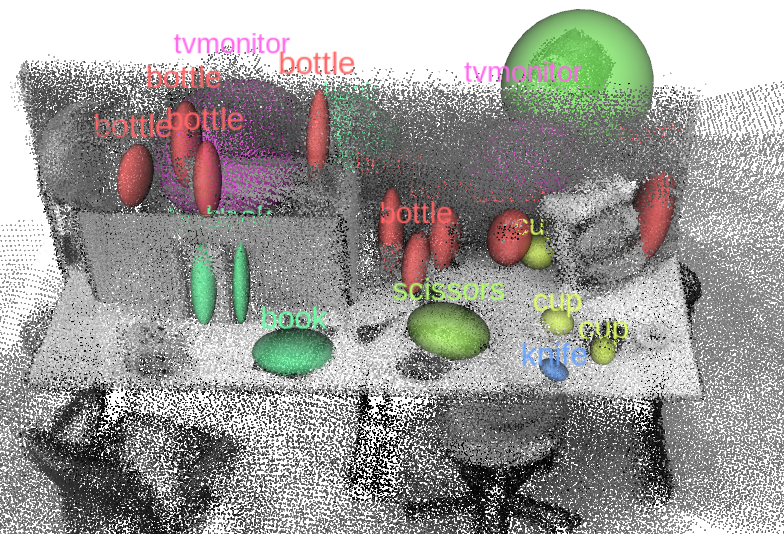

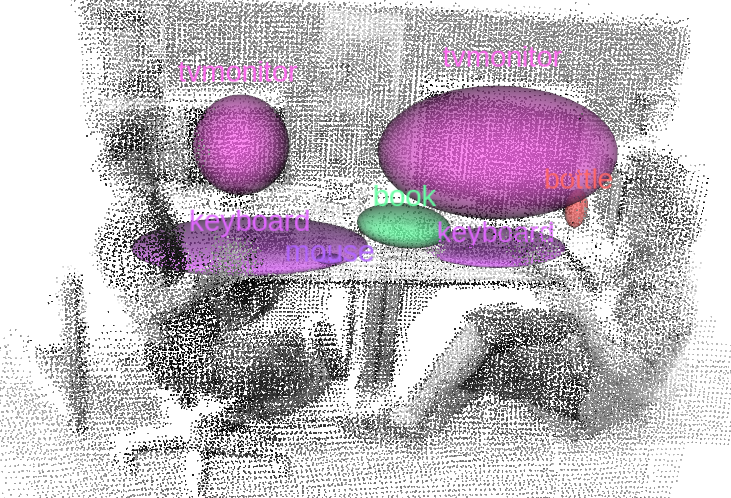

Figure 2: Semantic mapping results from sequences of the TUM RGB-D dataset, demonstrating the integration of semantic information to enhance mapping accuracy.
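For readers who want to see how a percentage reduction in trajectory error is typically computed, here is a minimal sketch. It assumes the metric is the root-mean-square absolute trajectory error over positions that are already time-associated and aligned to the ground truth. The summary does not state which metric the paper reports, and the helper names `ate_rmse` and `relative_reduction` are hypothetical.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of position error between two equally long lists of (x, y, z).

    Assumes the trajectories are already time-associated and aligned;
    no alignment is performed here.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and equally long")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est, gt))
        for est, gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def relative_reduction(baseline_error, improved_error):
    """Percentage by which improved_error is lower than baseline_error."""
    if baseline_error <= 0:
        raise ValueError("baseline_error must be positive")
    return 100.0 * (baseline_error - improved_error) / baseline_error


# Made-up toy trajectories, purely illustrative (not data from the paper).
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
baseline = [(0.0, 0.0, 0.0), (1.0, 0.4, 0.0), (2.0, 0.4, 0.0)]
with_semantics = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0), (2.0, 0.1, 0.0)]

reduction = relative_reduction(
    ate_rmse(baseline, ground_truth),
    ate_rmse(with_semantics, ground_truth),
)
print(f"error reduction: {reduction:.1f}%")
```

Read this way, a reported reduction of 68% would mean the error with semantic constraints is about a third of the baseline error on that sequence.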

Discussion

The method addresses key challenges in visual SLAM by incorporating semantic information to improve environmental understanding and the robustness of pose estimation. Adding semantic landmarks can extend the utility of SLAM to fields that require higher-level task execution, such as Human-Robot Interaction and autonomous navigation in dynamic environments.

A key feature of the proposed system is its real-time performance, attributed to the streamlined process of semantic landmark generation and integration. However, the authors acknowledge limitations in handling object proximity and data association errors that may affect scalability and generalization. They propose employing probabilistic approaches for refined data association and considering object pose estimation to further advance system capabilities.

Conclusion

This research makes progress in extending SLAM functionality through semantic integration. By addressing robust mapping and localization in dynamic environments, the system enhances the SLAM framework and supports more intelligent robotic applications. Future work will likely focus on refining data association strategies and incorporating object pose estimation to broaden applicability. The work also shows that semantic mapping can be integrated with real-time robotic systems, a step toward more autonomous and perceptually capable robotic platforms.