- The paper introduces BR-SNIS, a novel method using iterated sampling-importance resampling (i-SIR) to reduce bias in self-normalized importance sampling.

- The method is validated through theoretical bounds and numerical experiments, showing lower mean squared error in high-dimensional settings.

- BR-SNIS maintains computational efficiency while enhancing estimation accuracy in complex models like Bayesian regression and variational autoencoders.

Bias Reduced Self-Normalized Importance Sampling: An Overview

Introduction to Importance Sampling

Importance Sampling (IS) is a well-established Monte Carlo method for estimating expectations under a target probability distribution using samples drawn from a different proposal distribution. It is particularly valuable when sampling directly from the target is computationally infeasible. Each sample is assigned a weight equal to the ratio of the target density to the proposal density at that point, which corrects for having sampled from the wrong distribution.
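The weighting idea can be written as a short identity. The notation here is an assumption made for illustration and is not taken from the text above: $\pi$ is the target density, $\lambda$ the proposal density, $f$ the function whose expectation is wanted, and $w$ the importance weight.

$$
\pi(f) = \int f(x)\,\pi(x)\,dx = \int f(x)\,\frac{\pi(x)}{\lambda(x)}\,\lambda(x)\,dx \approx \frac{1}{N}\sum_{i=1}^{N} w(X_i)\,f(X_i), \qquad w(x) = \frac{\pi(x)}{\lambda(x)}, \quad X_1,\dots,X_N \overset{\text{iid}}{\sim} \lambda .
$$

The identity requires $\lambda(x) > 0$ wherever $f(x)\,\pi(x) \neq 0$, and it presumes that $\pi$ can be evaluated with its normalizing constant.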

Self-Normalized Importance Sampling (SNIS) and Its Limitations

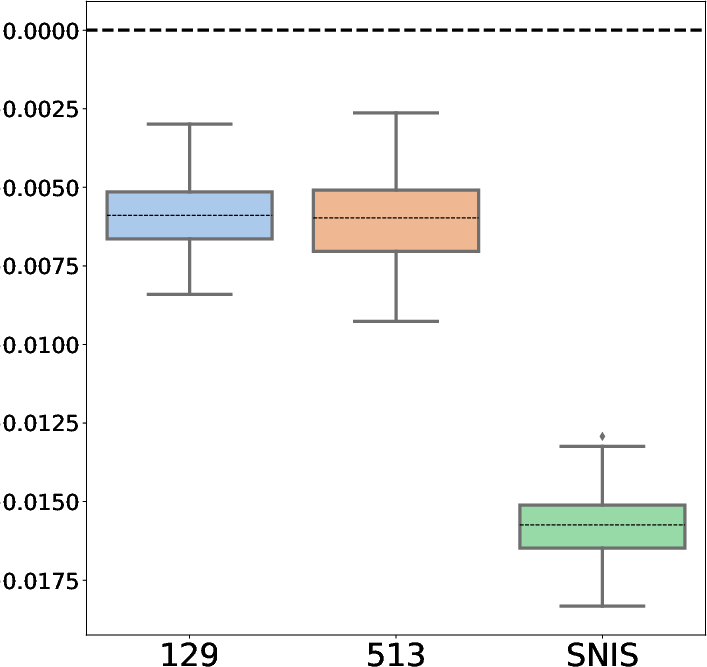

Self-normalized importance sampling (SNIS) is commonly used when the target distribution is known only up to a normalizing constant. It divides each importance weight by the sum of all weights, so the unknown constant cancels and expectations can be estimated without it. The price is bias: the estimator is a ratio of two random quantities, so it is biased for any finite sample size, although the bias shrinks as the number of samples grows. The paper addresses this bias and backs its approach with new bias, variance, and high-probability bounds, illustrated by numerical examples.
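As a minimal sketch of plain SNIS (not code from the paper), the following uses only the Python standard library. The function name `snis_estimate`, the toy target (an unnormalized N(1, 1) density), and the N(0, 2²) proposal are all assumptions chosen for illustration.

```python
import math
import random

def snis_estimate(f, log_target_unnorm, sample_proposal, log_proposal, n, rng):
    """Self-normalized importance sampling estimate of E_target[f(X)]."""
    xs = [sample_proposal(rng) for _ in range(n)]
    # Log importance weights; the target's unknown constant cancels below.
    log_w = [log_target_unnorm(x) - log_proposal(x) for x in xs]
    m = max(log_w)  # subtract the max before exponentiating for stability
    w = [math.exp(lw - m) for lw in log_w]
    total = sum(w)
    # Ratio of two random sums: this is the source of the finite-sample bias.
    return sum(wi * f(x) for wi, x in zip(w, xs)) / total

# Toy setup (assumed): target proportional to exp(-(x - 1)^2 / 2), proposal N(0, 2^2).
def log_target_unnorm(x):
    return -0.5 * (x - 1.0) ** 2

def sample_proposal(rng):
    return rng.gauss(0.0, 2.0)

def log_proposal(x):
    return -0.5 * (x / 2.0) ** 2 - math.log(2.0) - 0.5 * math.log(2.0 * math.pi)

rng = random.Random(0)
snis_mean = snis_estimate(lambda x: x, log_target_unnorm, sample_proposal,
                          log_proposal, 20000, rng)
print(snis_mean)  # should be close to the true target mean of 1.0
```

Working in log space and subtracting the maximum log weight is a common stability measure; it does not change the estimate because the common factor cancels in the ratio.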

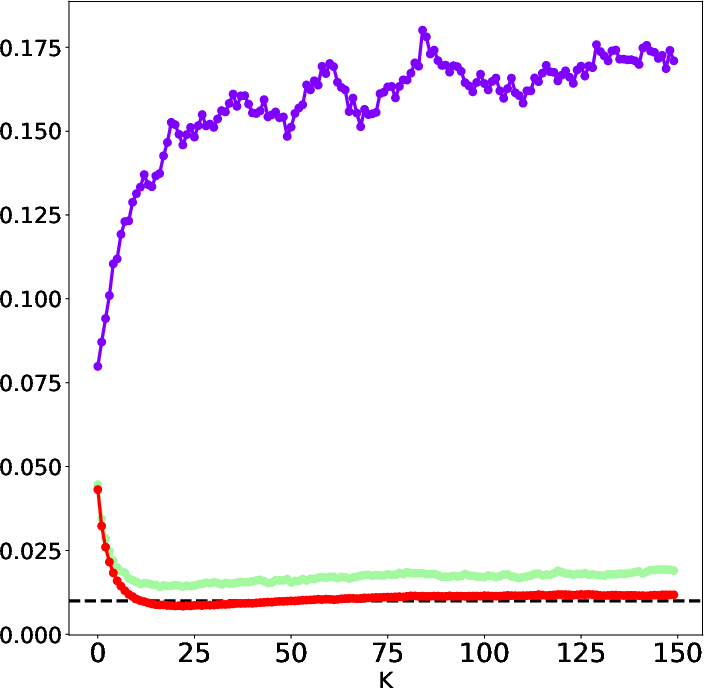

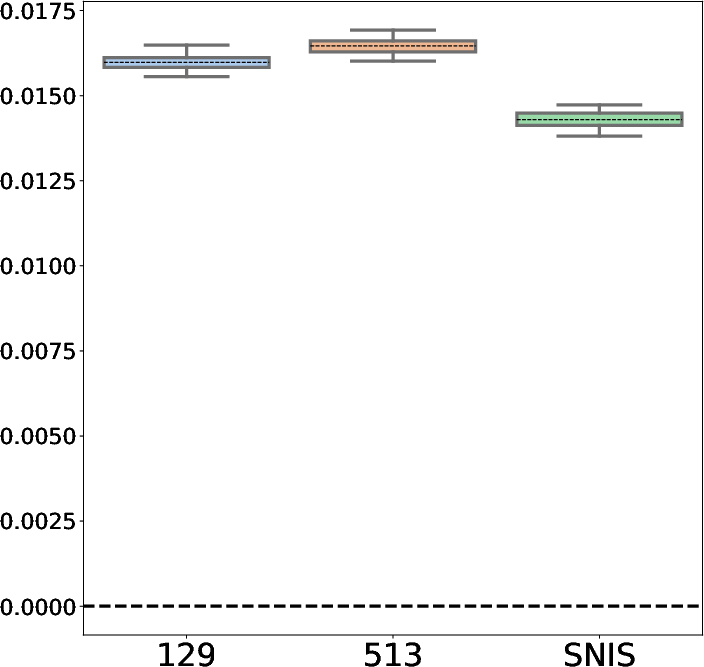

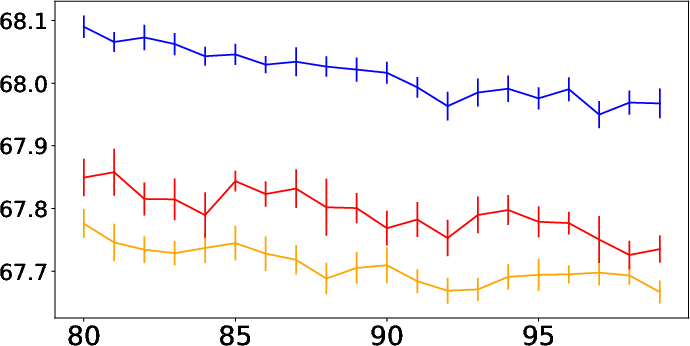

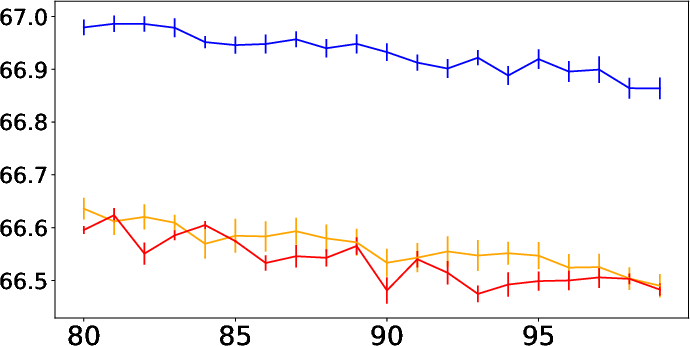

Figure 1: Mean squared error (MSE) dependency as observed in SNIS.

Introducing BR-SNIS

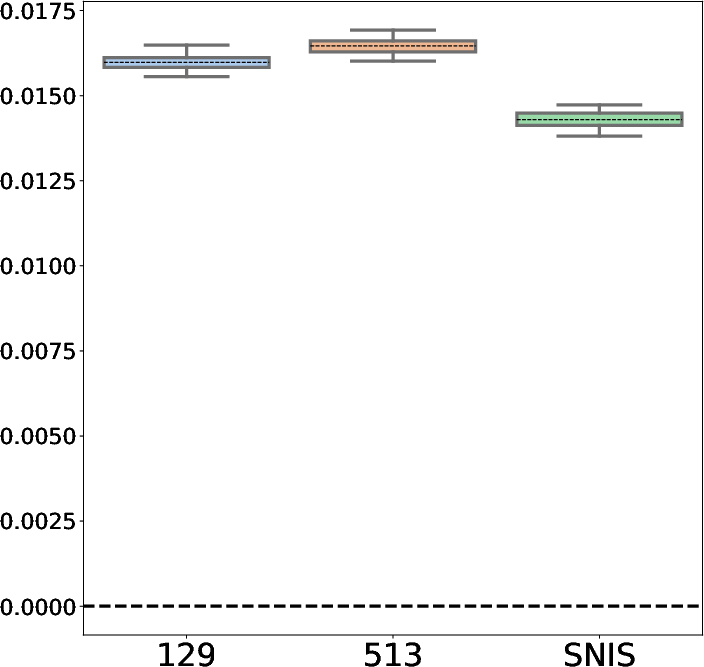

BR-SNIS stands for Bias Reduced Self-Normalized Importance Sampling. The paper proposes it as a way to reduce the bias of SNIS at a computational cost similar to that of SNIS itself. The key idea is to use iterated sampling-importance resampling (i-SIR) and to fold the candidate samples that i-SIR would otherwise discard back into the estimator. Recycling samples in this way reduces bias without inflating the variance, which is a typical drawback of bias-correction methods.

Theoretical Foundations and Algorithmic Insights

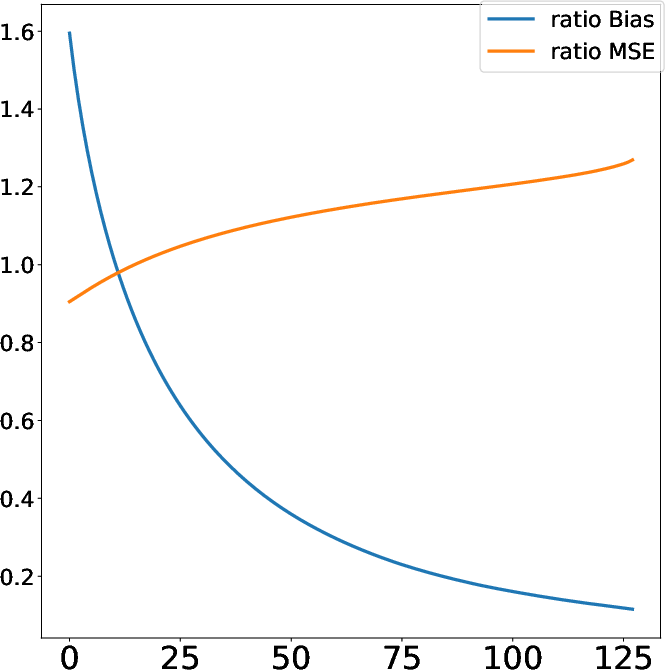

At its core, BR-SNIS uses i-SIR, which can be viewed as a systematic-scan Gibbs sampler: each iteration builds a pool of candidate samples, weights them, and selects one to carry into the next iteration. The pools form a Markov chain, and the paper establishes geometric (indeed uniform) ergodicity for it, meaning the chain converges to the target distribution at a geometric rate. As a consequence, the bias of the resulting estimator decreases as the number of iterations increases.
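The i-SIR recursion and the recycling of candidate pools can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's algorithm as published: it draws fresh proposals at every iteration rather than reusing a fixed set of samples, and the function name `br_snis_sketch`, the burn-in parameter, and the toy target are all hypothetical.

```python
import math
import random

def br_snis_sketch(f, log_weight, sample_proposal, n_pool, n_iter, burn_in, rng):
    """Average per-pool self-normalized estimates along an i-SIR chain."""
    if not 0 <= burn_in < n_iter:
        raise ValueError("burn_in must satisfy 0 <= burn_in < n_iter")
    state = sample_proposal(rng)  # arbitrary starting point (assumed)
    running, kept = 0.0, 0
    for k in range(n_iter):
        # Pool = current state plus fresh candidates from the proposal.
        pool = [state] + [sample_proposal(rng) for _ in range(n_pool - 1)]
        log_w = [log_weight(x) for x in pool]
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]
        total = sum(w)
        if k >= burn_in:
            # Recycle the whole pool, not just the selected candidate.
            running += sum(wi * f(x) for wi, x in zip(w, pool)) / total
            kept += 1
        # i-SIR selection step: pick the next state proportionally to weight.
        state = rng.choices(pool, weights=w, k=1)[0]
    return running / kept

# Toy setup (assumed): target proportional to exp(-(x - 1)^2 / 2), proposal N(0, 2^2).
def log_weight(x):
    # log(target / proposal) up to an additive constant
    return -0.5 * (x - 1.0) ** 2 + 0.5 * (x / 2.0) ** 2

def sample_proposal(rng):
    return rng.gauss(0.0, 2.0)

rng = random.Random(0)
br_mean = br_snis_sketch(lambda x: x, log_weight, sample_proposal,
                         n_pool=32, n_iter=500, burn_in=50, rng=rng)
print(br_mean)  # should be close to the true target mean of 1.0
```

Discarding the first `burn_in` pools is what targets the bias: later pools are built around a state that is closer to a draw from the target, so their self-normalized averages are less biased than a single SNIS estimate from a pool of the same size.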

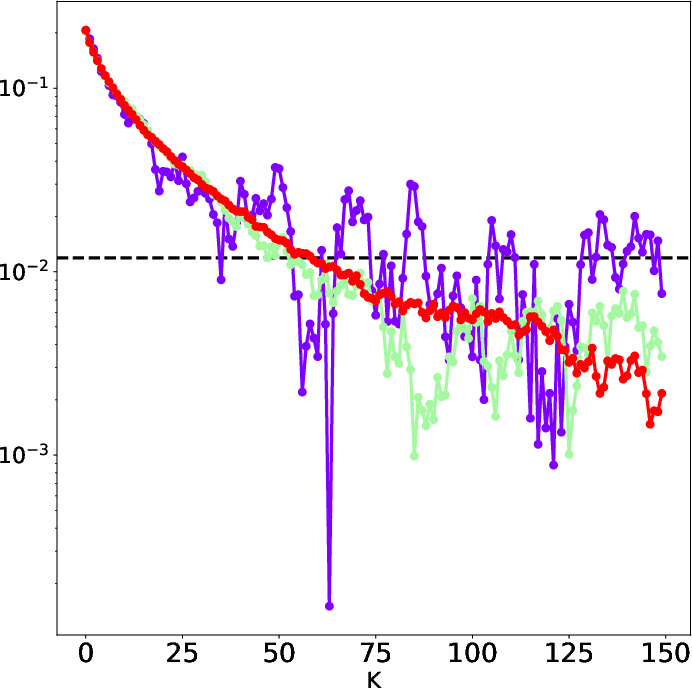

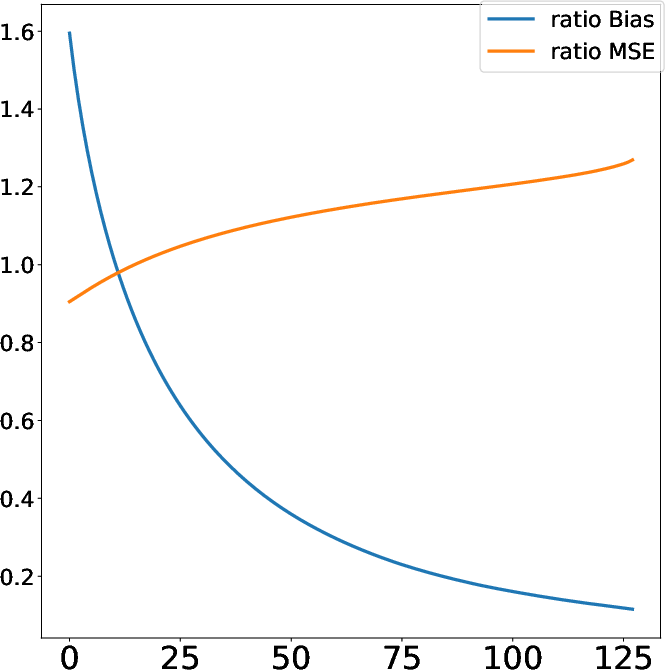

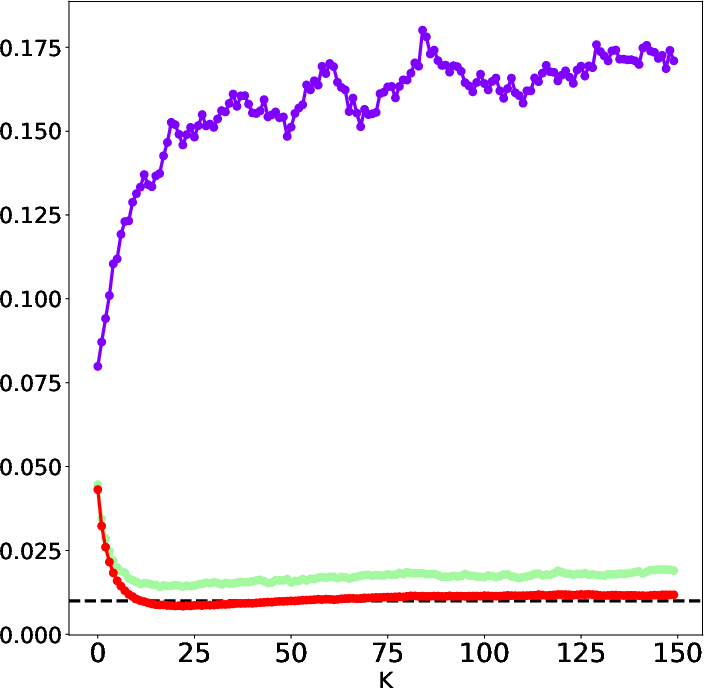

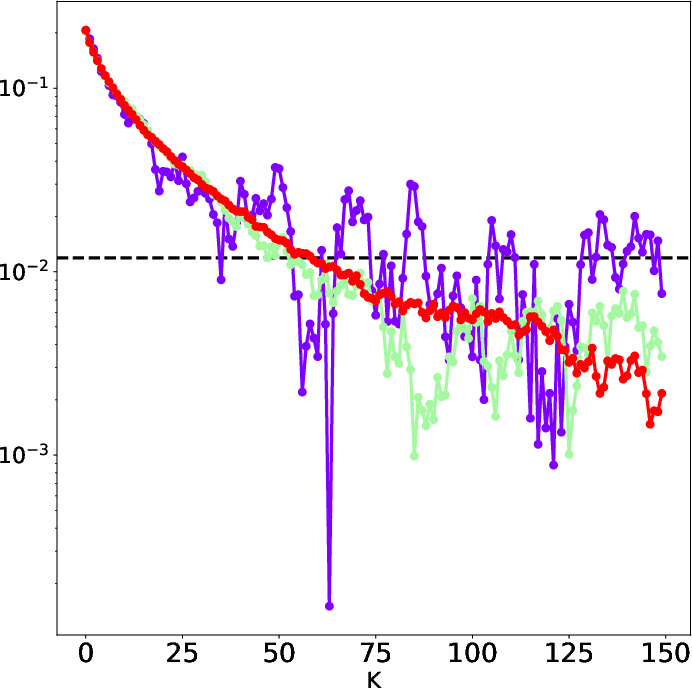

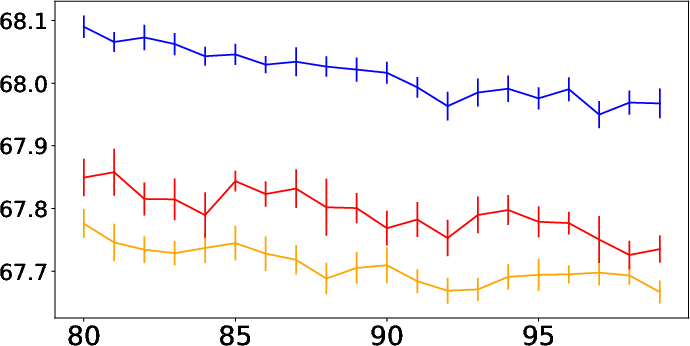

Figure 2: Visualization of the bias present in conventional SNIS compared to BR-SNIS.

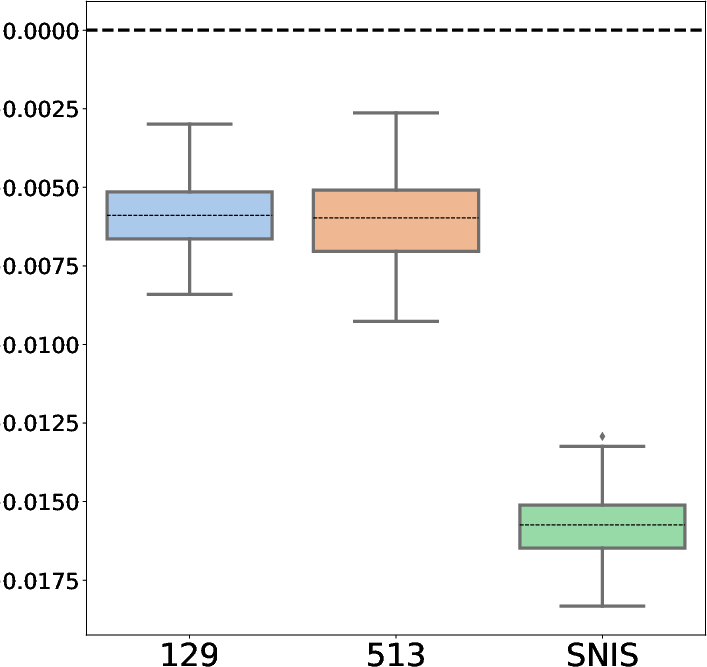

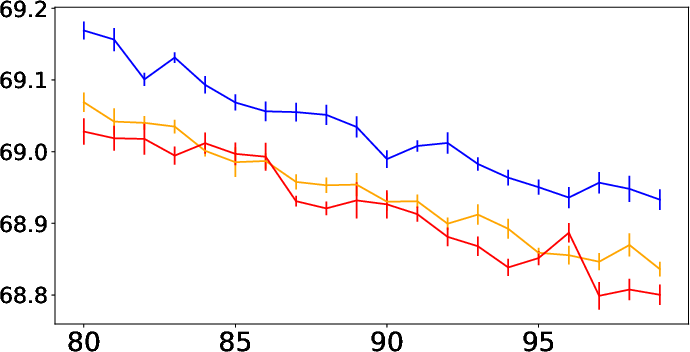

The paper compares BR-SNIS numerically against standard SNIS in several experimental setups, including mixture models, Bayesian regression, and variational autoencoders (VAEs). The experiments show considerable bias reduction at modest computational cost. The gains are most visible in high-dimensional settings such as VAEs, where BR-SNIS yields markedly more accurate posterior estimates than SNIS.

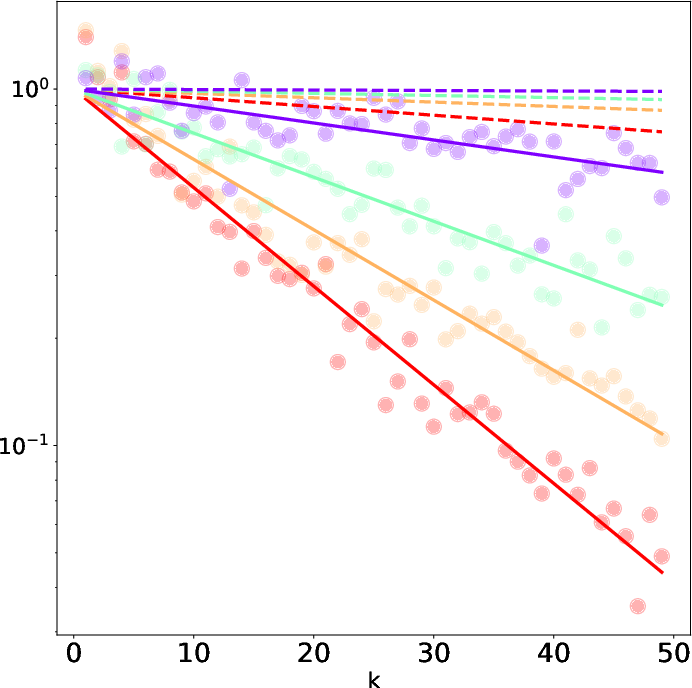

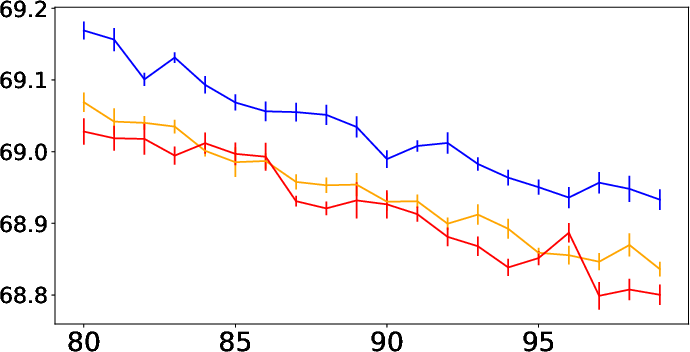

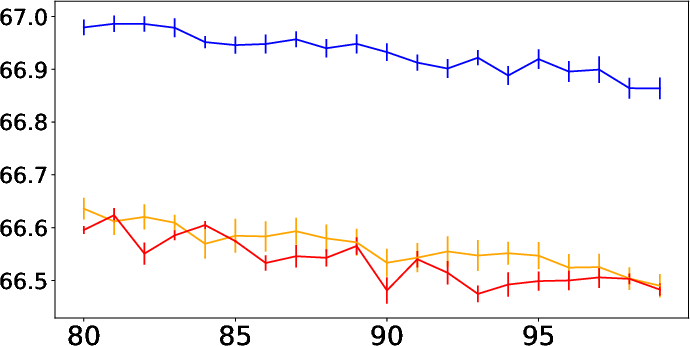

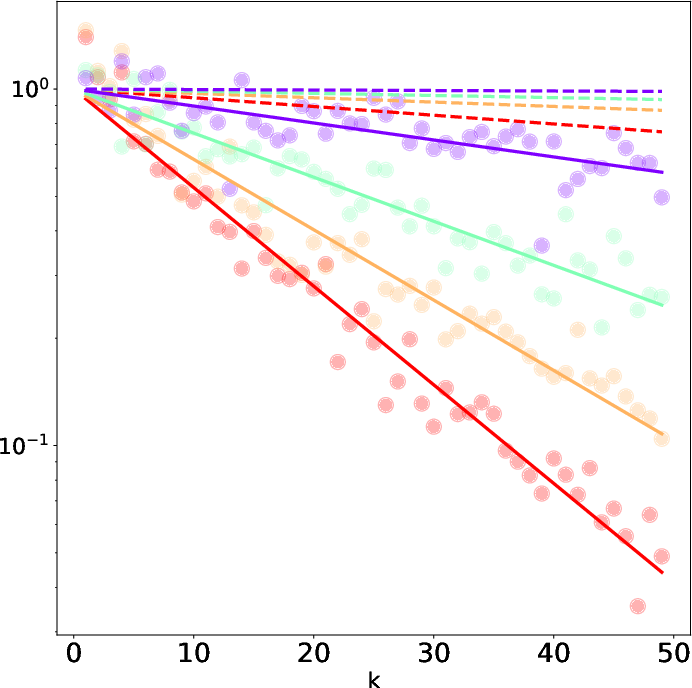

Figure 3: Performance difference in high-dimensional spaces, showcasing the dimension-wise effectiveness of BR-SNIS.

Implications and Future Directions

BR-SNIS holds notable promise for statistical learning and AI. Because it improves estimation accuracy in complex probabilistic models without a large increase in computation, it fits current interest in more economical computational practices. There is also potential for extending BR-SNIS to other settings, such as Hamiltonian Monte Carlo methods, which would broaden its impact on Bayesian inference and machine learning. Future research may explore this integration, particularly for sample-based computations in large-scale inference problems.

Conclusion

The introduction of BR-SNIS is a meaningful advance in importance sampling methodology. By reducing bias without increasing variance, particularly when the target distribution is only partially known (for example, up to a normalizing constant), BR-SNIS gives researchers a practical tool for lower-bias estimation across varied application domains. As the computational landscape continues to evolve, methods like BR-SNIS are likely to inform future developments in efficient sampling and estimation in AI.

(Overall, the paper "BR-SNIS: Bias Reduced Self-Normalized Importance Sampling" (2207.06364) contributes valuable insights into bias reduction techniques and reinforces the role of importance sampling in contemporary computational statistics and AI.)