- The paper introduces LatentOps, which composes arbitrary text operations in a continuous latent space using ODEs and energy-based models.

- It employs parameter-efficient adaptations of pretrained GPT-2 to maintain fluency while enabling flexible manipulations of text attributes.

- Experimental results show improved attribute accuracy and runtime efficiency compared to models like PPLM and FUDGE.

Composable Text Controls in Latent Space with ODEs

The paper "Composable Text Controls in Latent Space with ODEs" (2208.00638) addresses the composition of text operations in a continuous latent space. The authors introduce LatentOps, which uses ordinary differential equations (ODEs) to compose arbitrary text operations on latent representations within a single framework.

Introduction to LatentOps

LatentOps targets real-world applications in which text must be manipulated across several attributes (such as sentiment, formality, and tense), either simultaneously or sequentially. Unlike conventional approaches that fine-tune LLMs for specific operations or attribute combinations, LatentOps operates within a compact latent space. This latent space is established through parameter-efficient adaptation of pretrained LLMs such as GPT-2, which allows arbitrary text transformations without the computational overhead of retraining a large model for each task.

Figure 1: Examples of different composition of text operations, such as editing a text in terms of different attributes sequentially (top) or at the same time (middle), or generating a new text of target properties (bottom). The proposed LatentOps enables a single LM (e.g., an adapted GPT-2) to perform arbitrary text operation composition in the latent space.

Methodology

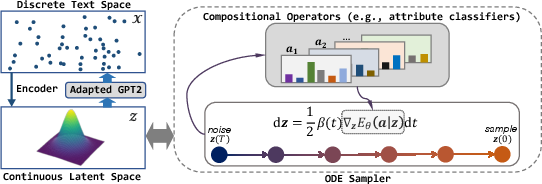

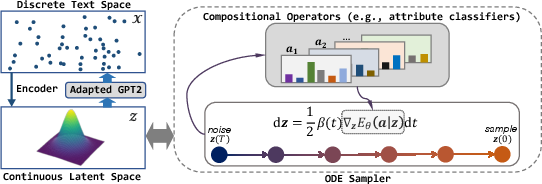

Latent Space and EBMs

LatentOps employs energy-based models (EBMs) to define the distribution of text attributes in the latent space. Given an attribute vector, the method integrates plug-in operators to form an energy-based distribution. This integration allows the model to incorporate various attributes, such as sentiment or formality, into the text without resorting to discrete search spaces that are challenging to optimize, especially for complex text sequences.
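The way plug-in operators combine into a single energy can be illustrated with a minimal sketch. It assumes each operator is a classifier on the latent vector whose energy is the negative log-probability of the target class, and that the prior over latents is a standard Gaussian. The linear classifiers, the weights, and all function names here are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np

def log_softmax(logits):
    """Numerically stable log-softmax over a 1-D array of logits."""
    shifted = logits - np.max(logits)
    return shifted - np.log(np.sum(np.exp(shifted)))

def attribute_energy(z, W, b, target):
    """Energy of one attribute: negative log-probability of the target
    class under a (hypothetical) linear classifier on the latent z."""
    return -log_softmax(W @ z + b)[target]

def total_energy(z, operators, weights):
    """Weighted sum of attribute energies plus a standard-Gaussian prior term.
    Low energy means z is plausible under the prior AND has the attributes."""
    prior = 0.5 * np.dot(z, z)
    attrs = sum(w * attribute_energy(z, W, b, t)
                for w, (W, b, t) in zip(weights, operators))
    return prior + attrs

# Two toy operators on a 4-D latent: (weight matrix, bias, target class).
sentiment = (np.array([[1.0, 0, 0, 0], [-1.0, 0, 0, 0]]), np.zeros(2), 0)
tense = (np.array([[0, 1.0, 0, 0], [0, -1.0, 0, 0]]), np.zeros(2), 1)
operators, weights = [sentiment, tense], [1.0, 1.0]

z_match = np.array([1.0, -1.0, 0.0, 0.0])     # agrees with both targets
z_mismatch = np.array([-1.0, 1.0, 0.0, 0.0])  # disagrees with both targets
e_match = total_energy(z_match, operators, weights)
e_mismatch = total_energy(z_mismatch, operators, weights)
```

Because the energies add, including or dropping an attribute only changes the list of operators; nothing else needs to be retrained in this toy setup.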

ODE Sampling

A significant innovation of LatentOps is the use of ODEs for efficient sampling in the latent space. This technique is more robust than traditional Langevin dynamics, which can be sensitive to hyperparameters and require extensive calibration. By solving an ODE, LatentOps efficiently draws samples from the desired distribution; these samples are then decoded into text sequences by a connected LLM such as GPT-2.

Figure 2: Overview of LatentOps. (Left): We equip pretrained LMs (e.g., GPT-2) with the compact continuous latent space through parameter-efficient adaptation.

Parameter-Efficient Adaptation

The latent space is connected to the pretrained LM decoder by fine-tuning only a small subset of the LLM's parameters. As a result, the adapted LLM retains its fluency and coherence while gaining the ability to decode latent vectors into meaningful text efficiently.
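The idea of updating only a small subset of parameters can be shown with a toy sketch. The parameter names, shapes, and the choice of which tensor is trainable are hypothetical and do not describe which GPT-2 parameters the paper actually tunes.

```python
import numpy as np

def sgd_step(params, grads, trainable, lr=0.1):
    """Return updated parameters; only names listed in `trainable` change,
    every other tensor is passed through untouched (frozen)."""
    return {
        name: value - lr * grads[name] if name in trainable else value
        for name, value in params.items()
    }

def trainable_fraction(params, trainable):
    """Share of scalar parameters that receive updates."""
    total = sum(p.size for p in params.values())
    tuned = sum(p.size for name, p in params.items() if name in trainable)
    return tuned / total

rng = np.random.default_rng(0)
params = {
    "decoder.block0.weight": rng.normal(size=(64, 64)),  # pretrained, frozen
    "decoder.block1.weight": rng.normal(size=(64, 64)),  # pretrained, frozen
    "latent_adapter.weight": rng.normal(size=(8, 64)),   # hypothetical new layer
}
trainable = {"latent_adapter.weight"}
grads = {name: np.ones_like(p) for name, p in params.items()}

updated = sgd_step(params, grads, trainable)
fraction = trainable_fraction(params, trainable)
```

Keeping the pretrained tensors frozen is what lets the decoder preserve its original language-modeling behavior while the small trainable part learns to read the latent vector.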

Experimental Results

LatentOps was evaluated on text generation and editing tasks with compositional attributes. Compared with existing plug-and-play methods such as PPLM and FUDGE, it often achieved higher attribute accuracy while remaining competitive on fluency and diversity metrics.

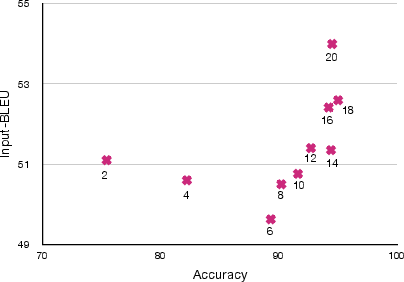

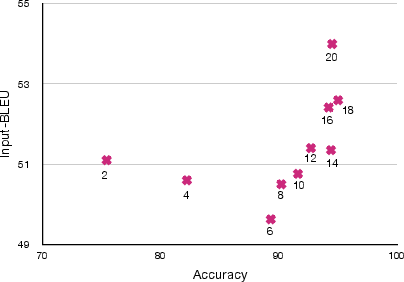

Figure 3: The trend of change of accuracy and input-BLEU as N increases. The digit below each data point represents the corresponding N.

Beyond output quality, the method was also more efficient: in runtime evaluations it was significantly faster than comparable methods.

Conclusion

LatentOps offers a flexible and efficient way to compose text attributes in a latent space using ODEs. Future research could extend the approach to more complex text tasks and explore its use in other domains, potentially leading to further advances in controllable text generation.