- The paper proposes a low-complexity remote sensing image classification (RSIC) model built on EfficientNetB0 with multihead attention, achieving competitive accuracy with reduced memory usage.

- It integrates data augmentation, transfer learning, and quantization to optimize training and enable effective deployment on edge devices.

- Results on the NWPU-RESISC45 dataset show accuracy gains over baselines without attention and a quantized model footprint under 9.4 MB, supporting the model's practical viability.

A Robust and Low Complexity Deep Learning Model for Remote Sensing Image Classification

Introduction

The paper "A Robust and Low Complexity Deep Learning Model for Remote Sensing Image Classification" addresses the critical task of remote sensing image classification (RSIC) by proposing an efficient and compact deep learning model designed for deployment on edge devices. The RSIC task involves classifying scenes captured by remote sensing technologies, which is pivotal for applications like urban planning, environmental monitoring, and natural hazard detection.

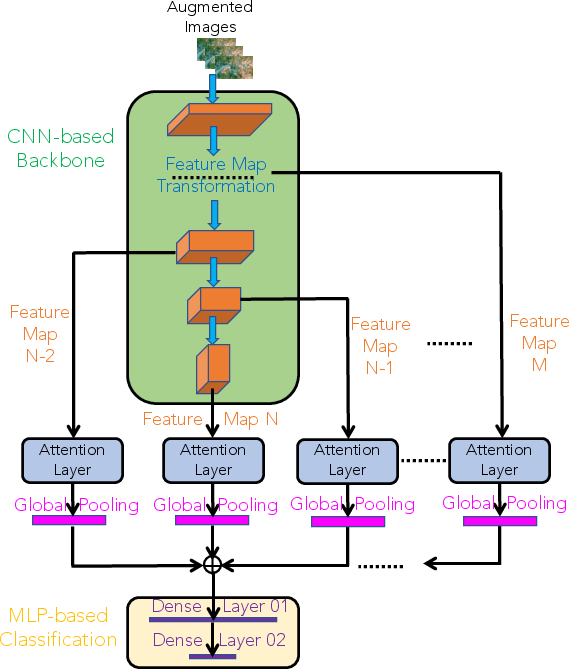

Model Architecture Overview

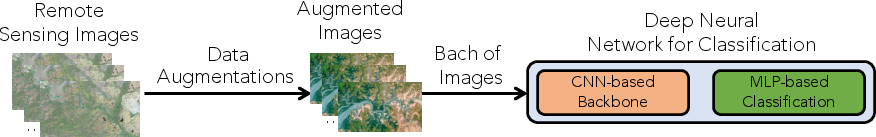

The proposed model combines a benchmark of lightweight deep neural network architectures with attention mechanisms, transfer learning, and quantization, so that it stays within the memory constraints of edge devices while maintaining high accuracy. The architecture incorporates the following components:

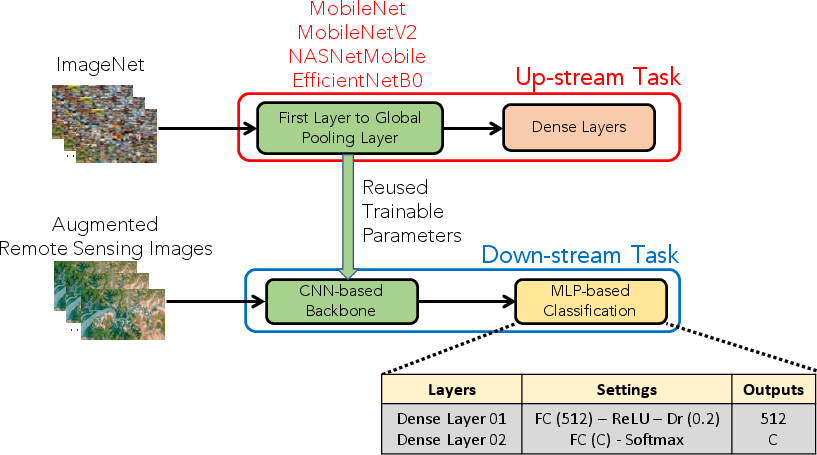

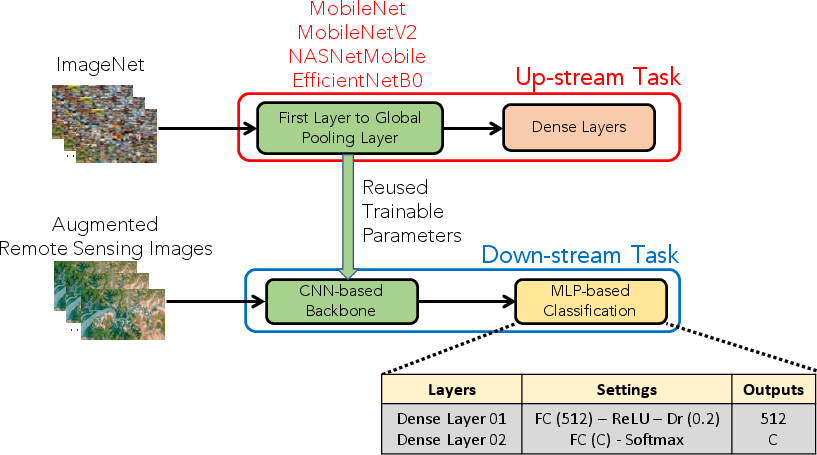

- Benchmark Low-Complexity Networks: Initial experimentation involves four networks: MobileNetV1, MobileNetV2, NASNetMobile, and EfficientNetB0. EfficientNetB0 is identified as the optimal choice due to its favorable trade-off between accuracy and model complexity.

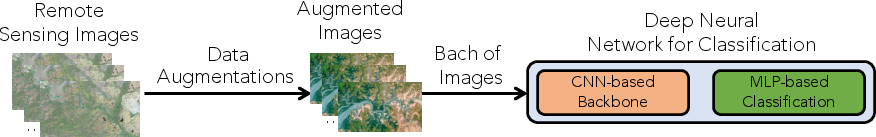

Figure 1: The high-level architecture of the proposed RSIC system.

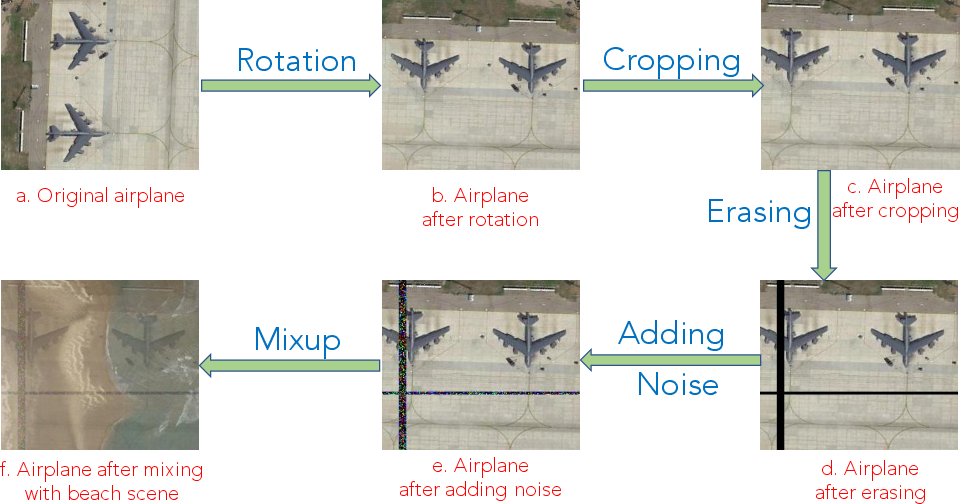

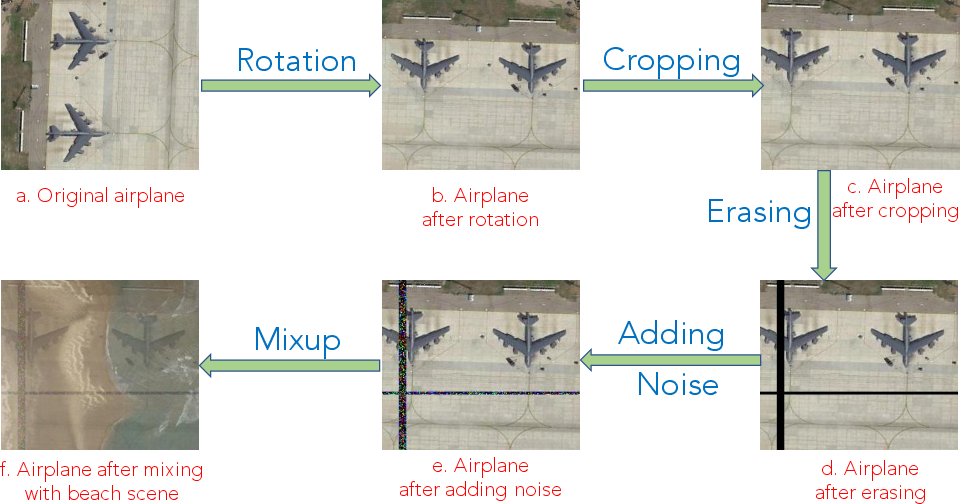

- Data Augmentation: Rotation, random cropping, random erasing, noise addition, and mixup are applied to the training data to improve generalization.

Figure 2: Data augmentation methods, in order: rotation, random cropping, random erasing, adding noise, and mixup.
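Of the augmentations listed, mixup is the least self-explanatory, so a minimal sketch follows. It assumes the standard mixup formulation (a Beta-distributed mixing coefficient applied to both images and one-hot labels); the function name `mixup_batch` and the value `alpha=0.2` are illustrative assumptions, not settings reported in the paper.

```python
import numpy as np

def mixup_batch(images, labels, alpha=0.2, rng=None):
    """Blend each sample with a randomly chosen partner from the same batch.

    images: float array of shape (batch, height, width, channels)
    labels: one-hot float array of shape (batch, num_classes)
    alpha:  Beta distribution parameter (assumed value, not from the paper)
    """
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)            # mixing coefficient in [0, 1]
    perm = rng.permutation(len(images))     # partner index for every sample
    mixed_images = lam * images + (1.0 - lam) * images[perm]
    mixed_labels = lam * labels + (1.0 - lam) * labels[perm]
    return mixed_images, mixed_labels, lam
```

Because the labels are blended with the same coefficient as the pixels, each mixed label still sums to one and can be used directly with a cross-entropy loss.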

- Transfer Learning: EfficientNetB0 is pre-trained on the extensive ImageNet dataset and fine-tuned for RSIC, leveraging transfer learning to accelerate convergence and improve model accuracy.

Figure 3: Applying transfer learning to the proposed deep neural network classifier.

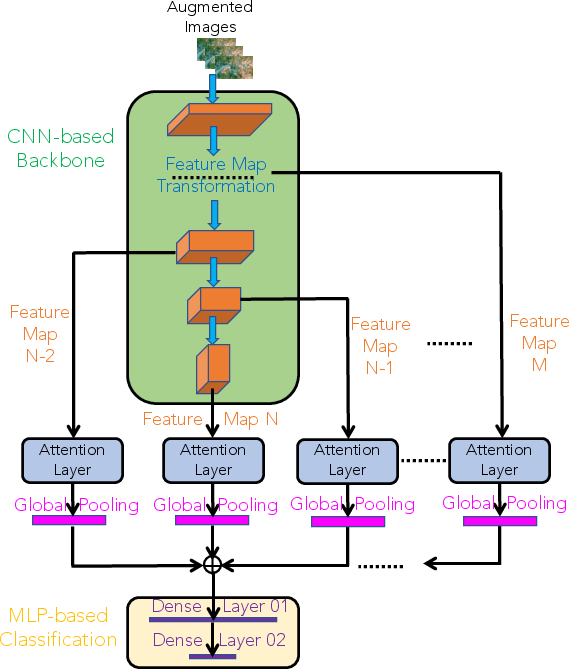

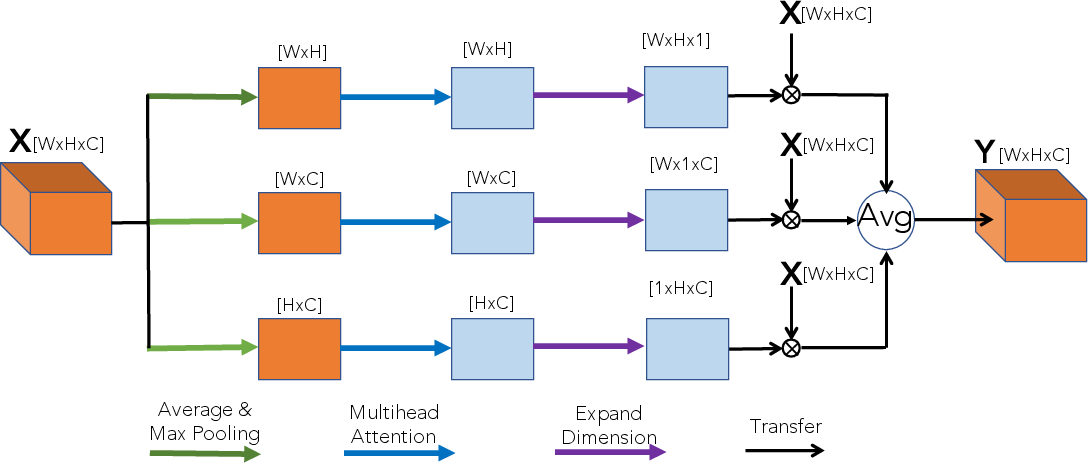

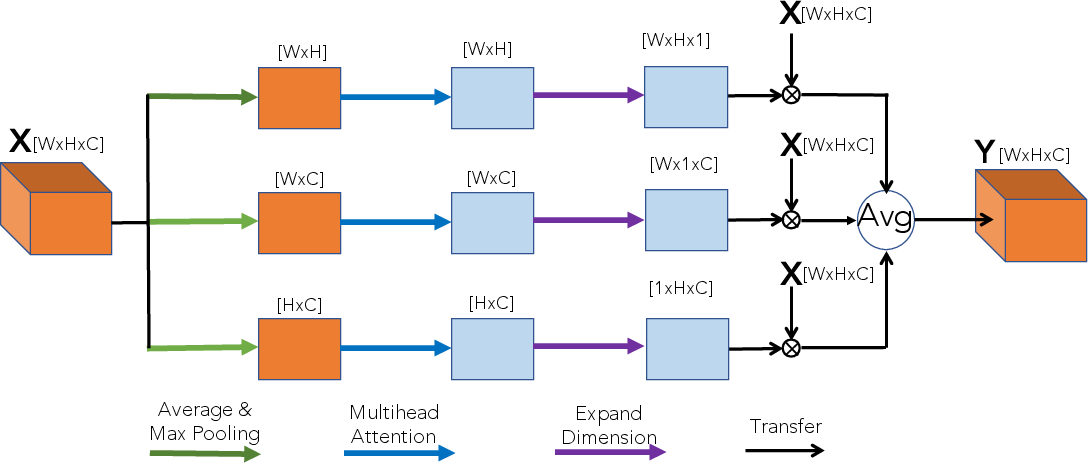

- Attention Mechanisms and Multihead Attention: A novel multihead attention layer is introduced to emphasize the feature-map regions most critical for classification. The layer attends over both the spatial and channel dimensions to better represent feature dependencies.

Figure 4: Applying attention schemes to further improve the proposed deep neural network classifier.

Figure 5: The proposed multihead-attention-based layer.
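To make the attention idea concrete, the sketch below applies generic multi-head scaled dot-product self-attention across the spatial positions of a backbone feature map. It is not the paper's layer, which also attends over the channel dimension and whose exact design is given in Figure 5; the function names, the projection matrices, and the head count are all assumptions chosen for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def multihead_self_attention(feature_map, w_q, w_k, w_v, w_o, num_heads):
    """Generic multi-head self-attention over spatial positions.

    feature_map: (height, width, channels) array from a CNN backbone
    w_q, w_k, w_v, w_o: (channels, channels) projection matrices (hypothetical)
    """
    height, width, channels = feature_map.shape
    assert channels % num_heads == 0, "channels must divide evenly into heads"
    head_dim = channels // num_heads
    tokens = feature_map.reshape(height * width, channels)  # one token per position

    def split_heads(x):
        # (positions, channels) -> (heads, positions, head_dim)
        return x.reshape(-1, num_heads, head_dim).transpose(1, 0, 2)

    q = split_heads(tokens @ w_q)
    k = split_heads(tokens @ w_k)
    v = split_heads(tokens @ w_v)

    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)   # (heads, positions, positions)
    weights = softmax(scores, axis=-1)                       # each row sums to 1
    context = weights @ v                                    # (heads, positions, head_dim)

    merged = context.transpose(1, 0, 2).reshape(height * width, channels)
    output = merged @ w_o
    return output.reshape(height, width, channels), weights
```

Each head produces its own position-to-position weight matrix, so different heads can highlight different regions of the scene before the outputs are merged back into a feature map of the original shape.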

Results and Evaluation

The model is evaluated on the NWPU-RESISC45 dataset under two training splits, using 10% and 20% of the data for training, and achieves competitive accuracy on both. Quantization then reduces the model's memory footprint to under 9.4 MB, which meets the edge-device constraint.

- EfficientNetB0 with multihead attention achieves higher accuracy than the baseline models without attention.

- Results indicate that the proposed model's accuracy is competitive with state-of-the-art methods, with efficient memory usage that is suitable for edge deployment.
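The memory savings from quantization can be illustrated with a small sketch. The summary does not state which quantization scheme the paper uses, so the example assumes symmetric per-tensor 8-bit quantization of float32 weights; `quantize_int8` and `dequantize` are hypothetical helper names.

```python
import numpy as np

def quantize_int8(weights):
    """Symmetric per-tensor quantization: float32 weights -> int8 values plus one scale."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0   # avoid dividing by zero
    quantized = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float32 weights from the int8 representation."""
    return quantized.astype(np.float32) * scale

# Example with a random weight matrix standing in for one layer of the model.
layer = np.random.default_rng(0).normal(size=(256, 64)).astype(np.float32)
layer_q, layer_scale = quantize_int8(layer)
size_ratio = layer.nbytes / layer_q.nbytes   # float32 uses 4 bytes per weight, int8 uses 1
```

Under this assumed scheme each weight shrinks from four bytes to one, and the rounding error per weight is bounded by half the scale.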

Conclusion

The work combines transfer learning, attention mechanisms, and model quantization to create a low-complexity yet accurate RSIC model. It lays the groundwork for deploying AI models on resource-constrained devices without compromising performance. By balancing accuracy against computational efficiency, the approach is a step towards practical use in real-world remote sensing tasks.