- The paper introduces a dynamic Res-U-Net architecture augmented with PCA, VDVI, MBI, and Sobel edge features to enhance multiscale building segmentation.

- It employs composite-guided training with Combo-Loss and cyclical learning rate strategies to achieve high accuracy and resource efficiency across diverse datasets.

- The study demonstrates superior performance in urban mapping while highlighting persistent challenges with shadow occlusion and spectral similarity.

Feature-Augmented Deep Networks for Multiscale Building Segmentation in High-Resolution UAV and Satellite Imagery

Introduction

This study introduces a deep learning framework for automated building segmentation from high-resolution remote sensing data, particularly RGB UAV and satellite imagery. Approaches that rely solely on spectral information are inherently limited in urban environments because buildings are spectrally similar to non-building surfaces, shadows are frequent, and building geometries vary widely. The authors propose a methodology that augments RGB data with derived spatial-spectral features and deploys a dynamic, multiscale-capable Res-U-Net segmentation architecture. They also optimize model training through curriculum strategies, a combined loss function, and explicit resource-efficiency considerations.

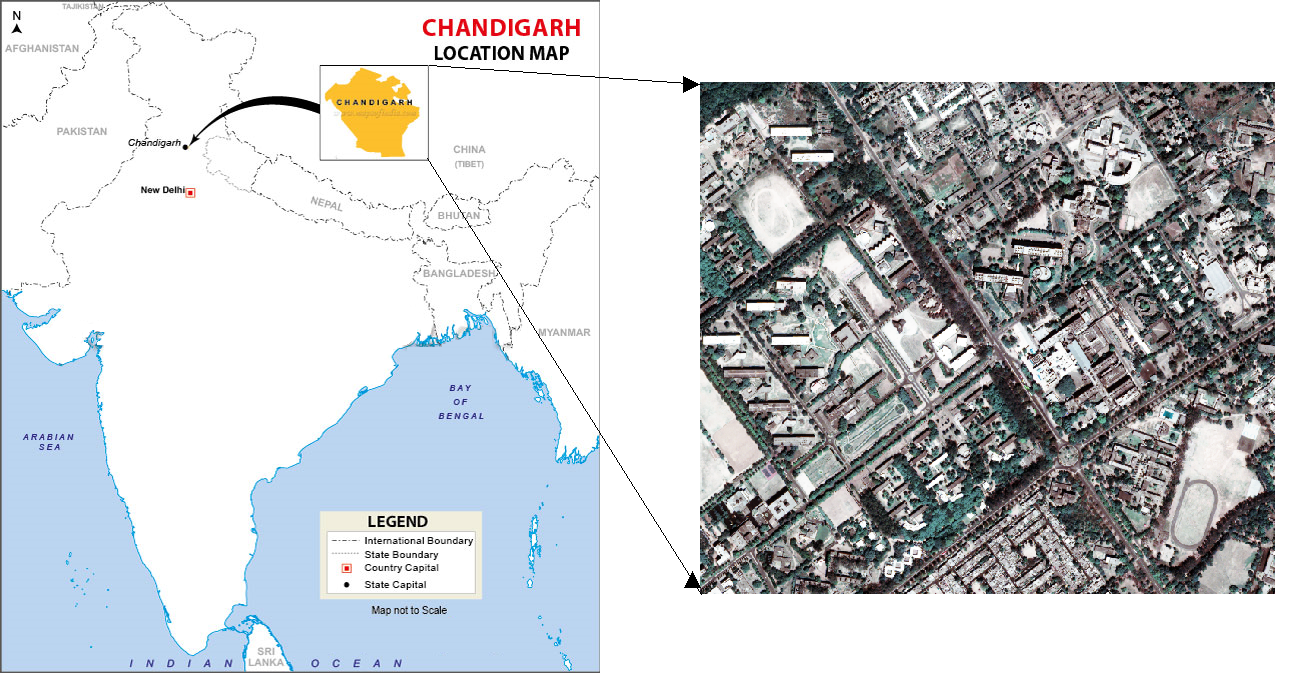

Figure 1: Inset map and snapshot highlighting Chandigarh City, India; the test site for this study.

Curated Multiscale Dataset and Feature Augmentation

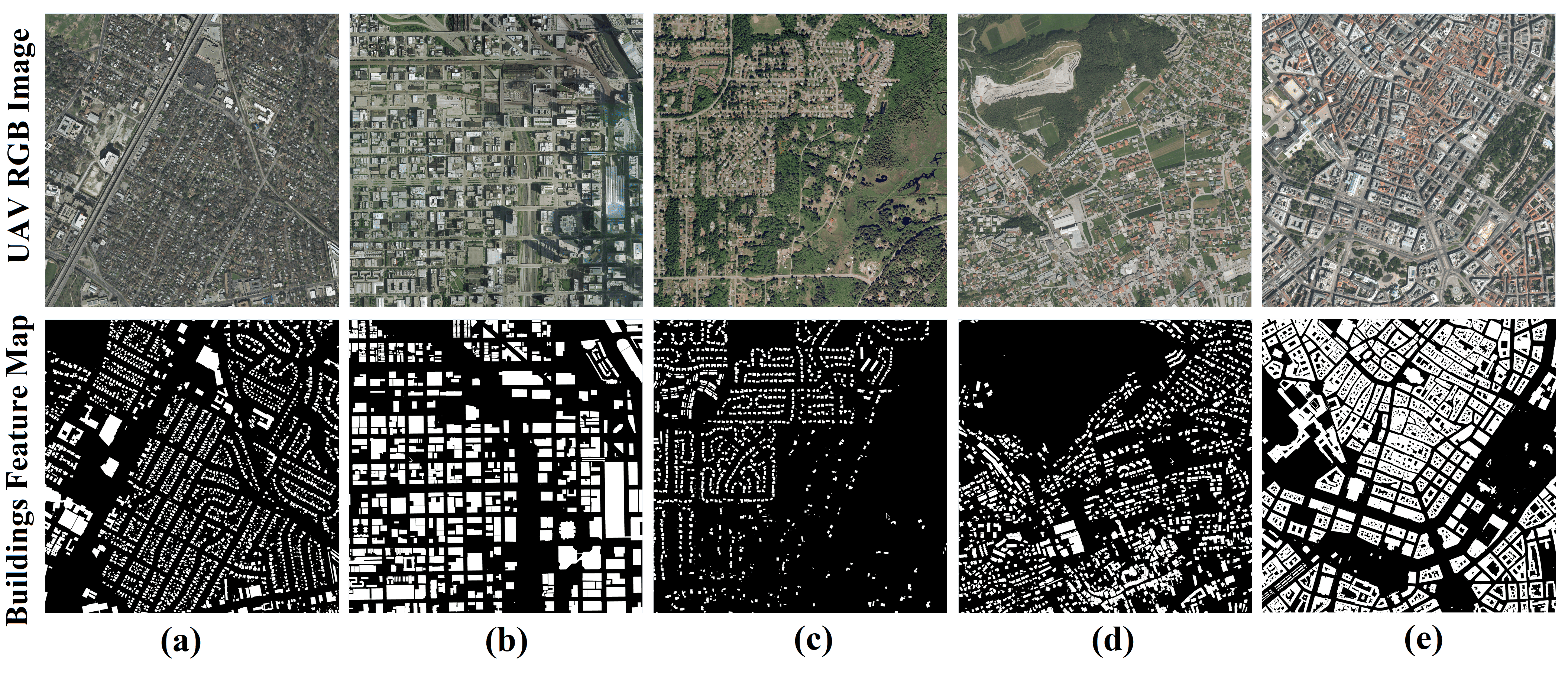

A multiscale, multi-sensor dataset is curated from five major open-source aerial and satellite datasets spanning the US, Europe, Africa, and Asia. Images are tiled to 224×224 pixels, and a minimum-label-density filter is applied to mitigate class imbalance and label sparsity, a necessary step to avoid model bias during training.
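
The tiling and filtering step can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function name `tile_pairs` and the 5% density threshold are hypothetical, since the summary does not state the threshold that was used.

```python
import numpy as np

def tile_pairs(image, mask, tile=224, min_density=0.05):
    """Yield (image_tile, mask_tile) pairs that pass a label-density filter.

    min_density is a hypothetical threshold: the minimum fraction of
    building pixels a tile must contain to be kept.
    """
    height, width = mask.shape
    for top in range(0, height - tile + 1, tile):
        for left in range(0, width - tile + 1, tile):
            mask_tile = mask[top:top + tile, left:left + tile]
            # Fraction of pixels labelled as building in this tile.
            if (mask_tile > 0).mean() >= min_density:
                yield image[top:top + tile, left:left + tile], mask_tile

# Toy scene: 2x2 grid of tiles, only the top-left one contains buildings.
image = np.zeros((448, 448, 3), dtype=np.uint8)
mask = np.zeros((448, 448), dtype=np.uint8)
mask[:224, :224] = 1
kept = list(tile_pairs(image, mask))
```

Only tiles whose building fraction reaches the threshold survive, so the three empty tiles in the toy scene are dropped.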

Figure 2: Image and label pairs from each of the five datasets: (a) Inria Image Labelling Dataset, (b) Wuhan Housing Dataset, (c) Massachusetts Building Dataset, (d) CrowdAI Mapping Challenge Dataset, (e) Open Cities AI Mapping Challenge Dataset.

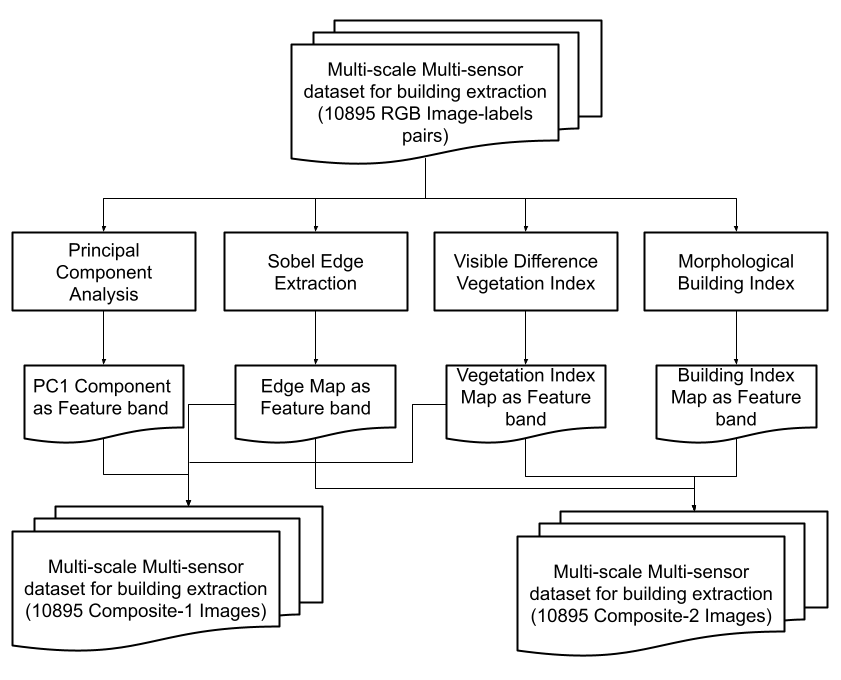

To break the limits of purely spectral discrimination, four secondary features are derived from the input RGB data:

- Principal Component Analysis (PCA): PC1 as an orthogonalized capture of maximum variance.

- Visible Difference Vegetation Index (VDVI): For explicit discrimination between buildings and vegetation using only visible bands.

- Morphological Building Index (MBI): For discrimination against shadows and brightness-oriented segmentation.

- Sobel Edges: For explicit emphasis on edge localization.

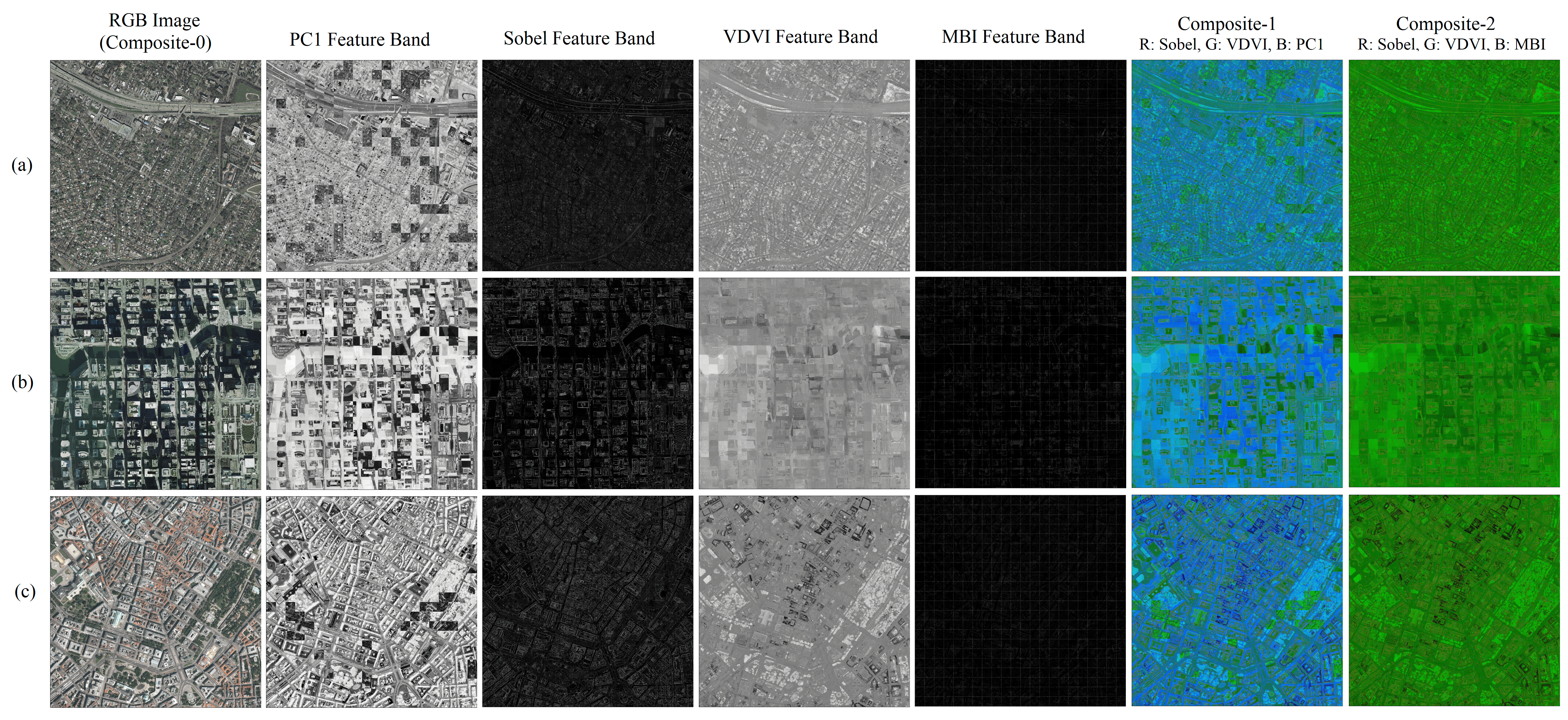

Three 3-band composite types are created: CB0 (original RGB), CB1 (Sobel/VDVI/PC1), and CB2 (Sobel/VDVI/MBI), facilitating controlled experiments to understand the utility of each information type.
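
A rough sketch of how three of the guiding features and the CB1 composite could be derived with NumPy alone is given below. It assumes the standard VDVI definition, (2G - R - B) / (2G + R + B), a 3×3 Sobel gradient magnitude on the mean-of-bands grayscale image, and per-image PCA; the helper names and the min-max rescaling are illustrative choices rather than the authors' code. MBI is omitted because it requires a multi-scale morphological profile that does not fit a short example.

```python
import numpy as np

def vdvi(rgb):
    """Visible Difference Vegetation Index: (2G - R - B) / (2G + R + B)."""
    r, g, b = (rgb[..., i].astype(np.float64) for i in range(3))
    return (2 * g - r - b) / np.maximum(2 * g + r + b, 1e-9)

def sobel_magnitude(gray):
    """Gradient magnitude from 3x3 Sobel kernels, with edge padding."""
    p = np.pad(gray.astype(np.float64), 1, mode="edge")
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2])
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:])
    return np.hypot(gx, gy)

def first_principal_component(rgb):
    """Project every pixel onto the direction of maximum RGB variance."""
    flat = rgb.reshape(-1, 3).astype(np.float64)
    centered = flat - flat.mean(axis=0)
    # eigh returns eigenvalues in ascending order, so the last column is PC1.
    _, eigvecs = np.linalg.eigh(np.cov(centered, rowvar=False))
    return (centered @ eigvecs[:, -1]).reshape(rgb.shape[:2])

def rescale(band):
    """Min-max rescale a band to [0, 1] (an assumption, for stacking)."""
    lo, hi = band.min(), band.max()
    return (band - lo) / (hi - lo) if hi > lo else np.zeros_like(band)

rng = np.random.default_rng(0)
rgb = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
gray = rgb.mean(axis=2)

# CB1 = Sobel / VDVI / PC1, stacked as a 3-band image.
cb1 = np.dstack([rescale(sobel_magnitude(gray)),
                 rescale(vdvi(rgb)),
                 rescale(first_principal_component(rgb))])
```

Because each composite keeps three bands, it can be fed to a network that expects RGB-shaped input without architectural changes.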

Figure 3: Methodology for extracting guiding features and generating different image composites for the dataset.

Figure 4: Three UAV images exhibiting the feature bands and Composite-0, Composite-1, and Composite-2 combinations.

Dynamic Res-U-Net for Multiscale Segmentation

The core segmentation model is a dynamic Res-U-Net that integrates a pre-trained ResNet34 encoder with a dynamically initialized U-Net decoder and PixelShuffle upsampling. The model is agnostic to input scale, supporting spatial resolutions from 0.4 to 2.7 m.
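
To illustrate what PixelShuffle upsampling does in the decoder, the NumPy sketch below rearranges channels into space, turning a (C·r², H, W) feature map into (C, H·r, W·r). It follows the usual channel ordering of this operation and is only a didactic stand-in for the layer used inside the network; the function name is hypothetical.

```python
import numpy as np

def pixel_shuffle(x, r):
    """Rearrange a (C*r*r, H, W) array into (C, H*r, W*r).

    Output pixel (c, h*r + i, w*r + j) takes its value from
    input channel c*r*r + i*r + j at position (h, w).
    """
    channels, height, width = x.shape
    c_out = channels // (r * r)
    x = x.reshape(c_out, r, r, height, width)
    x = x.transpose(0, 3, 1, 4, 2)          # -> (C, H, r, W, r)
    return x.reshape(c_out, height * r, width * r)

features = np.arange(16, dtype=np.float64).reshape(4, 2, 2)
upsampled = pixel_shuffle(features, r=2)    # shape (1, 4, 4)
```

The upsampling itself has no learned weights; a preceding convolution produces the r² channels, which is one reason this design is often chosen over transposed convolutions.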

Training Strategies and Optimization

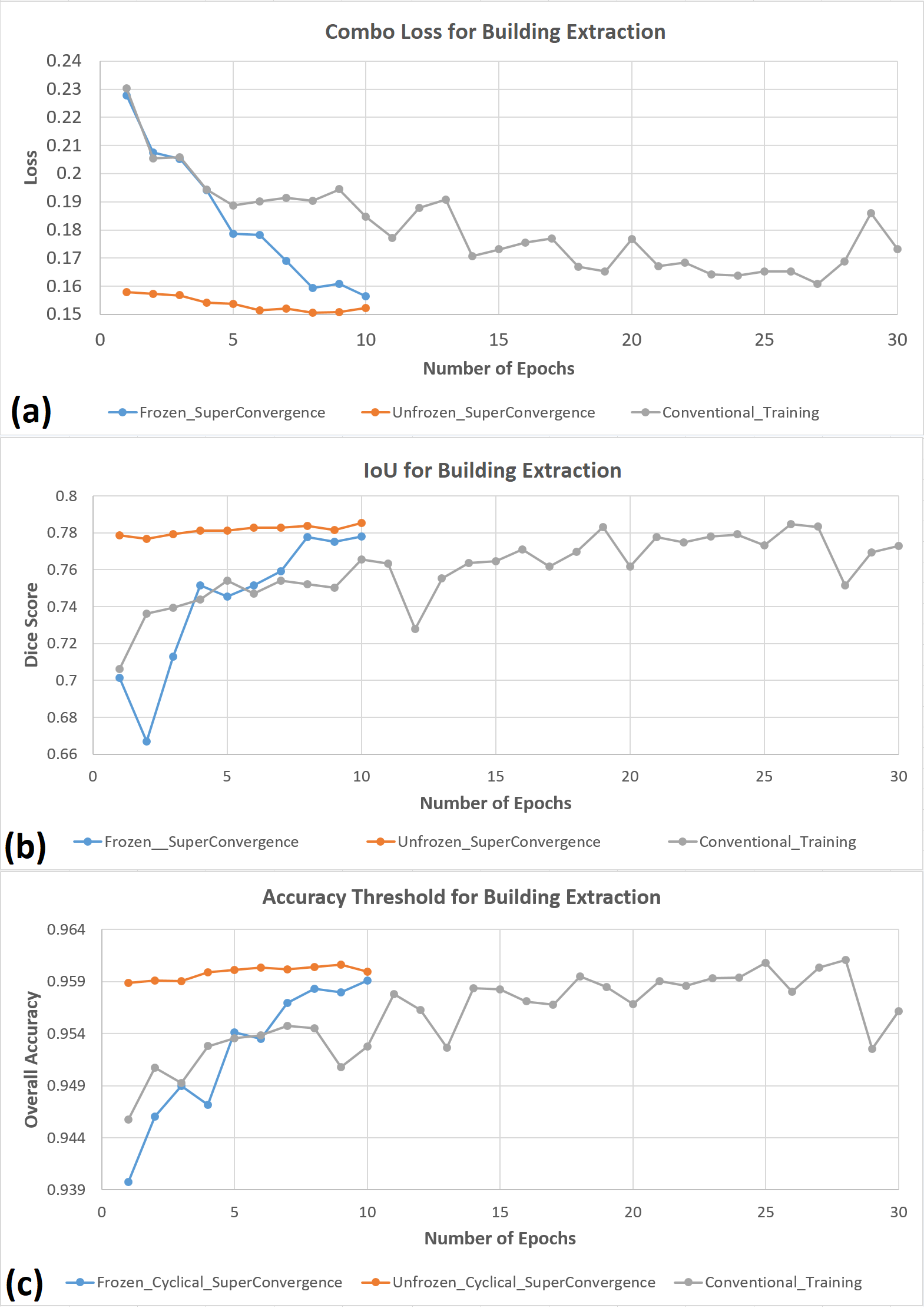

The authors apply cyclical learning rate (CLR) scheduling and SuperConvergence to substantially reduce wall time and resource consumption, achieving convergence within ∼10–15 epochs rather than the 30+ epochs typically required with static learning rates. A two-stage curriculum (frozen/unfrozen training) is implemented: all but the final layer are first frozen for transfer learning initialization, and then all parameters are optimized jointly.
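
As an illustration of the cyclical idea, the sketch below implements the basic triangular CLR policy, in which the learning rate ramps linearly between a lower and an upper bound. The bounds and step size are hypothetical values, and the exact schedule used in the study (for example a one-cycle variant) is not specified in this summary.

```python
import math

def triangular_clr(iteration, base_lr=1e-4, max_lr=1e-2, step_size=100):
    """Triangular cyclical learning rate.

    The rate rises linearly from base_lr to max_lr over step_size
    iterations, then falls back over the next step_size iterations.
    All default values are illustrative assumptions.
    """
    cycle = math.floor(1 + iteration / (2 * step_size))
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)

# One full cycle: up for 100 iterations, down for 100.
schedule = [triangular_clr(i) for i in range(201)]
```

Periodically visiting large learning rates is what allows training to converge in far fewer epochs than a fixed small rate would.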

- Optimizer: Adam is used with a decay rate of 0.9.

- Resource Efficiency: Reported reduction in training time of >50% relative to conventional strategies on consumer-grade GPUs.

Quantitative and Qualitative Results

In-Sample Multiscale Validation

- CB0 (RGB only): Mean IoU = 0.80, mean F1 = 0.86, overall accuracy = 96.5%.

- CB1 and CB2: Slight reduction in performance (mean IoU = 0.65–0.69), but selectively superior for backgrounds with strong vegetation or shadow interference.
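
For reference, the reported metrics can be computed from a binary prediction and its label mask as sketched below. The sketch scores the building class on a single image pair; how the paper averages IoU and F1 across images or classes to obtain the "mean" values is not stated here, so that part is left out.

```python
import numpy as np

def segmentation_metrics(pred, truth):
    """Return IoU, F1, and overall accuracy for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = int(np.sum(pred & truth))
    fp = int(np.sum(pred & ~truth))
    fn = int(np.sum(~pred & truth))
    tn = int(np.sum(~pred & ~truth))
    union = tp + fp + fn
    # Convention: two empty masks count as a perfect match.
    iou = tp / union if union else 1.0
    f1 = 2 * tp / (2 * tp + fp + fn) if union else 1.0
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    return {"iou": iou, "f1": f1, "accuracy": accuracy}

truth = [[1, 1], [0, 0]]
pred = [[1, 0], [1, 0]]
metrics = segmentation_metrics(pred, truth)
```

Overall accuracy counts true negatives, so it tends to sit well above IoU and F1 when background pixels dominate, which is consistent with the gap between 96.5% accuracy and 0.80 IoU reported above.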

Out-of-Sample Testing: UAV and Satellite

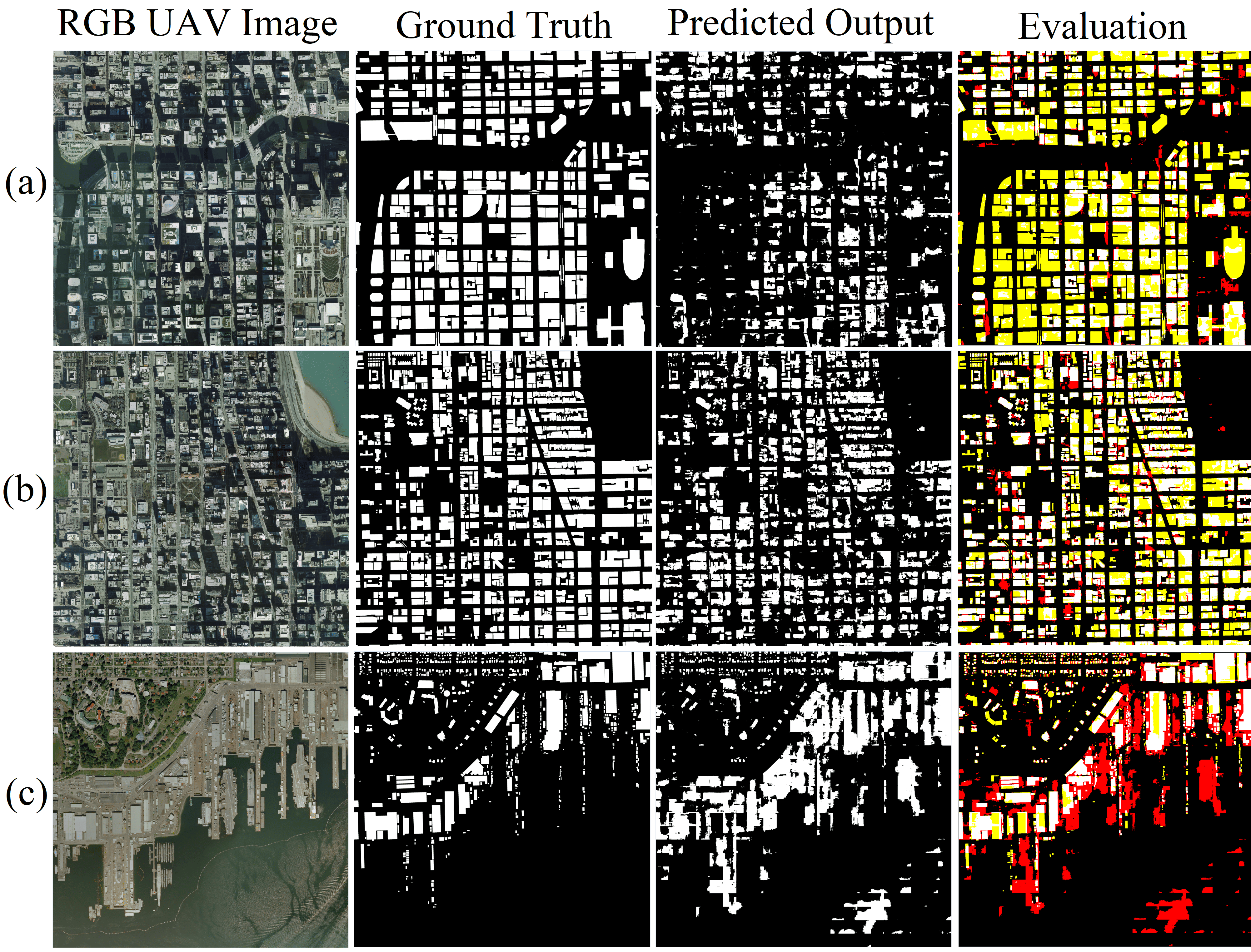

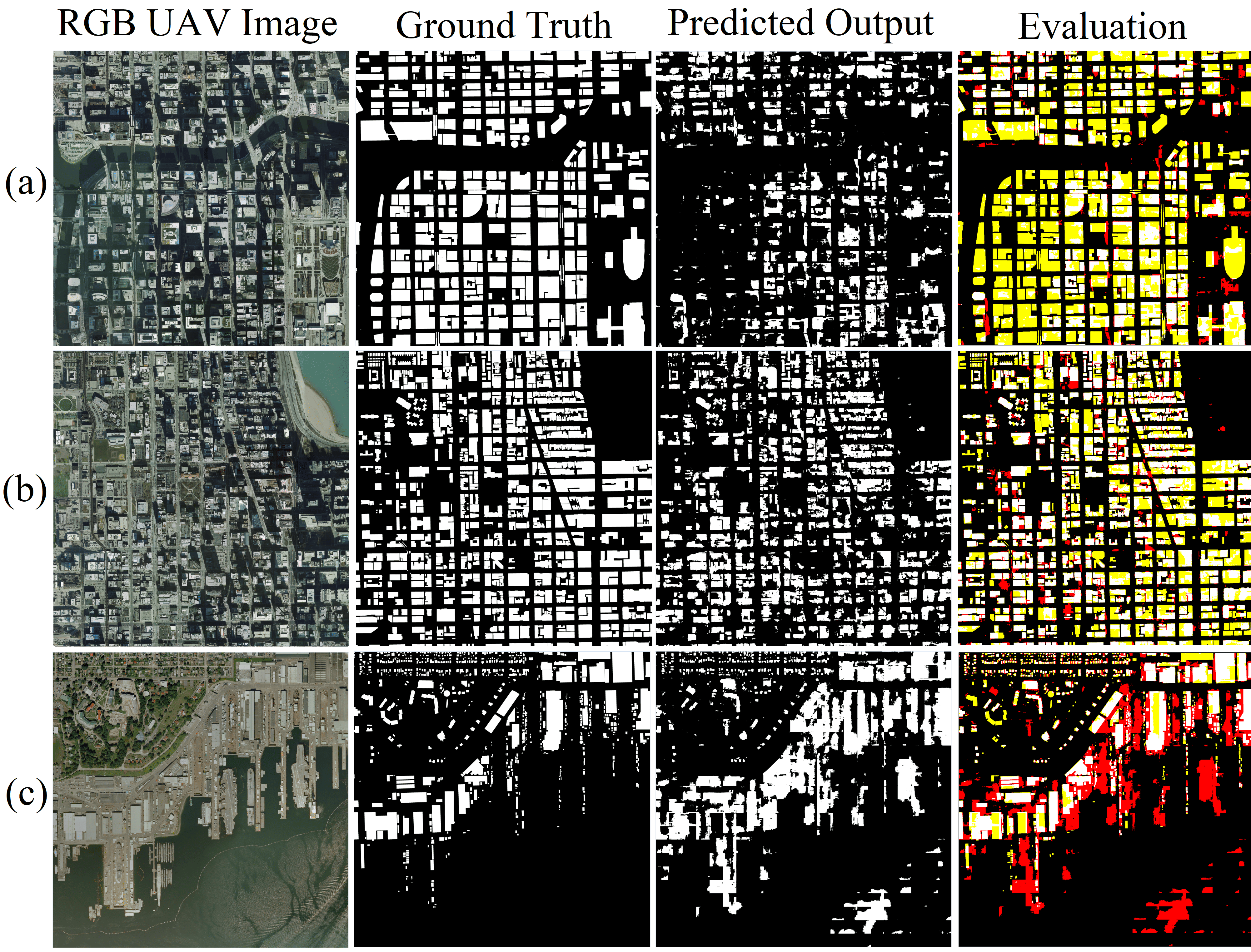

UAV and satellite test sets demonstrate effective cross-domain transfer and generalization, except in cases dominated by heavy shadow occlusion or extreme background confusion.

Figure 6: UAV test set instances and their building segmentation results for each of the three composites.

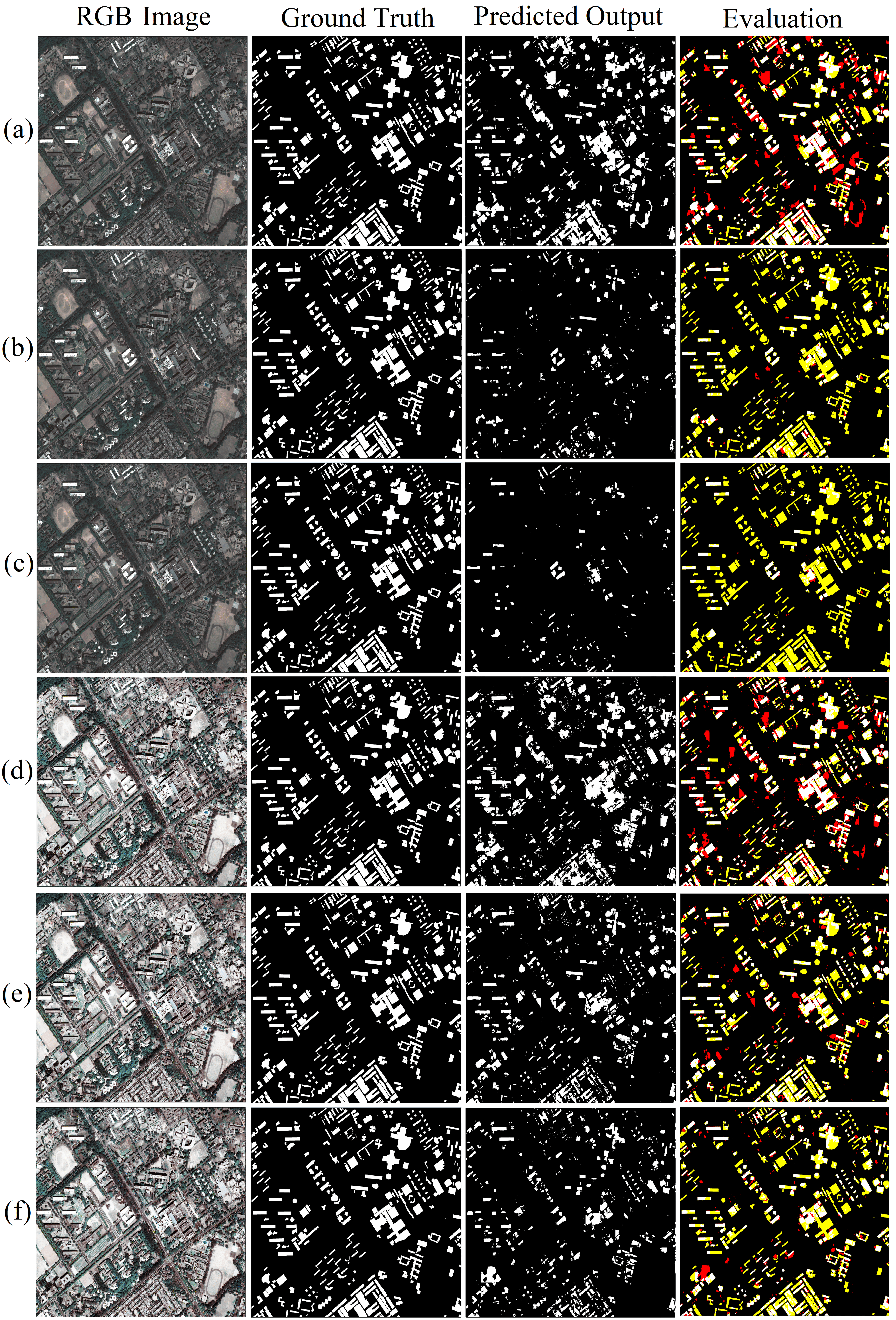

Figure 7: Satellite test set evaluation. The first column is input to the model, the second column is the ground truth, the third column shows model predictions for building segmentation, and the fourth column is evaluation images showing TP (white), TN (black), FP (red), and FN (yellow).
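
An evaluation image of the kind described in the Figure 7 caption can be produced with a few lines of NumPy. The colour coding follows the caption (TP white, TN black, FP red, FN yellow); the function name is hypothetical and the sketch is not taken from the authors' code.

```python
import numpy as np

COLORS = {
    "tp": (255, 255, 255),  # white
    "tn": (0, 0, 0),        # black
    "fp": (255, 0, 0),      # red
    "fn": (255, 255, 0),    # yellow
}

def evaluation_image(pred, truth):
    """Colour-code each pixel by its confusion-matrix category."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    # Start from all black, which already encodes true negatives.
    out = np.zeros(pred.shape + (3,), dtype=np.uint8)
    out[pred & truth] = COLORS["tp"]
    out[pred & ~truth] = COLORS["fp"]
    out[~pred & truth] = COLORS["fn"]
    return out

truth = [[1, 1], [0, 0]]
pred = [[1, 0], [1, 0]]
overlay = evaluation_image(pred, truth)
```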

- Challenge: False negatives increase predominantly where buildings overlap with shadow or where the scene contains water or very bright non-building structures.

- Contrast-Equalization: Introduced for satellite imagery, this step raises both true positive and false positive detections, giving control over the recall/precision tradeoff.
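
The summary does not say which equalization method was applied. As one plausible reading, the sketch below performs global histogram equalization on a single 8-bit band; treating the step this way is an assumption, and an adaptive variant would behave differently.

```python
import numpy as np

def equalize_histogram(band):
    """Global histogram equalization for a uint8 band (assumed method)."""
    hist = np.bincount(band.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]   # CDF value at the darkest occupied level
    total = cdf[-1]
    if total == cdf_min:        # constant image: nothing to stretch
        return band.copy()
    lut = np.round((cdf - cdf_min) / (total - cdf_min) * 255)
    lut = lut.clip(0, 255).astype(np.uint8)
    return lut[band]            # apply the lookup table per pixel

rng = np.random.default_rng(0)
# Low-contrast toy band: values confined to [100, 120].
band = rng.integers(100, 121, size=(32, 32), dtype=np.uint8)
equalized = equalize_histogram(band)
```

Stretching contrast makes faint roofs more separable from their surroundings but does the same for bright non-building surfaces, which matches the reported rise in both true and false positives.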

Cross-Loss Analysis

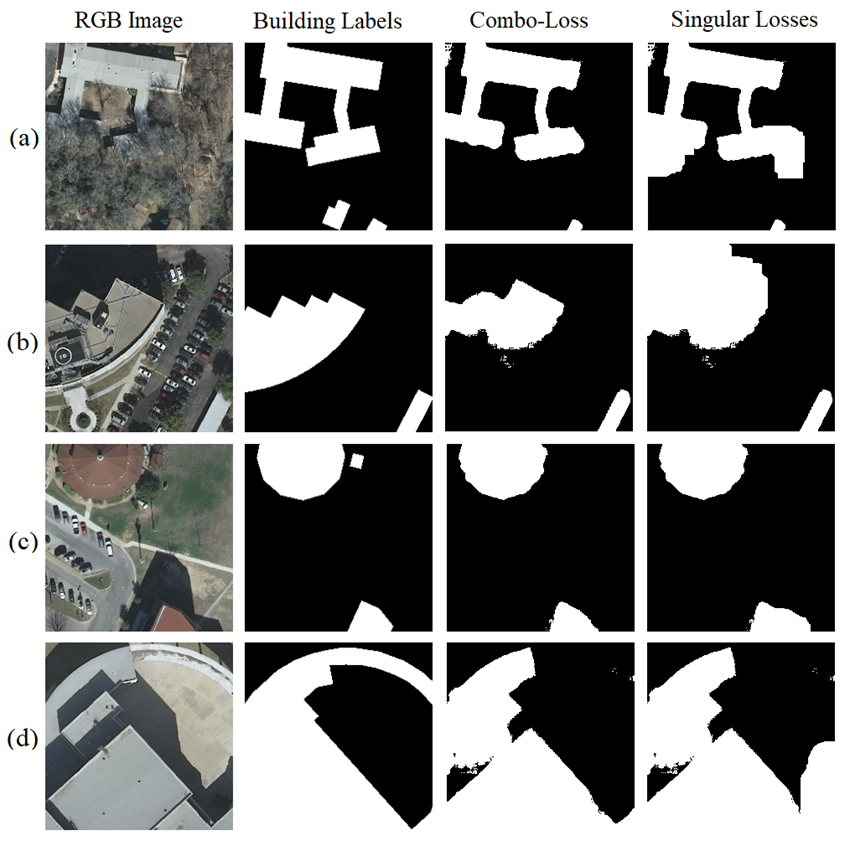

The proposed Combo-Loss function is empirically validated to achieve both higher IoU and visibly crisper object boundaries than BCE or Dice alone, especially where boundaries are ambiguous or where neighboring non-building surfaces are spectrally similar.
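
A combined loss of this kind is commonly written as a weighted sum of binary cross-entropy and Dice loss. The NumPy sketch below shows that form; the equal weighting (`bce_weight=0.5`) and the smoothing constant are assumptions, since the summary does not give the paper's exact formulation.

```python
import numpy as np

def combo_loss(probs, targets, bce_weight=0.5, eps=1e-7):
    """Weighted sum of BCE and Dice loss for binary segmentation.

    probs are predicted building probabilities in [0, 1];
    bce_weight and eps are illustrative assumptions.
    """
    p = np.clip(np.asarray(probs, dtype=np.float64), eps, 1 - eps)
    t = np.asarray(targets, dtype=np.float64)
    # Pixel-wise term: penalizes each misclassified pixel.
    bce = -np.mean(t * np.log(p) + (1 - t) * np.log(1 - p))
    # Region-wise term: penalizes poor overlap with the label mask.
    dice = (2 * np.sum(p * t) + eps) / (np.sum(p) + np.sum(t) + eps)
    return bce_weight * bce + (1 - bce_weight) * (1 - dice)

targets = np.array([[1, 1], [0, 0]])
good = np.array([[0.9, 0.9], [0.1, 0.1]])
bad = np.array([[0.1, 0.1], [0.9, 0.9]])
loss_good = combo_loss(good, targets)
loss_bad = combo_loss(bad, targets)
```

The cross-entropy term supplies stable per-pixel gradients, while the Dice term is insensitive to the large number of background pixels, so the combination is a natural fit for sparse building labels.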

Figure 8: Comparison of Combo-Loss and singular loss for building segmentation. The first column contains the RGB Image, the second column contains corresponding image labels, the third column contains building segmentation results when Combo-Loss is used, the fourth column contains building segmentation results when other singular loss functions are used.

Training Policy Ablation

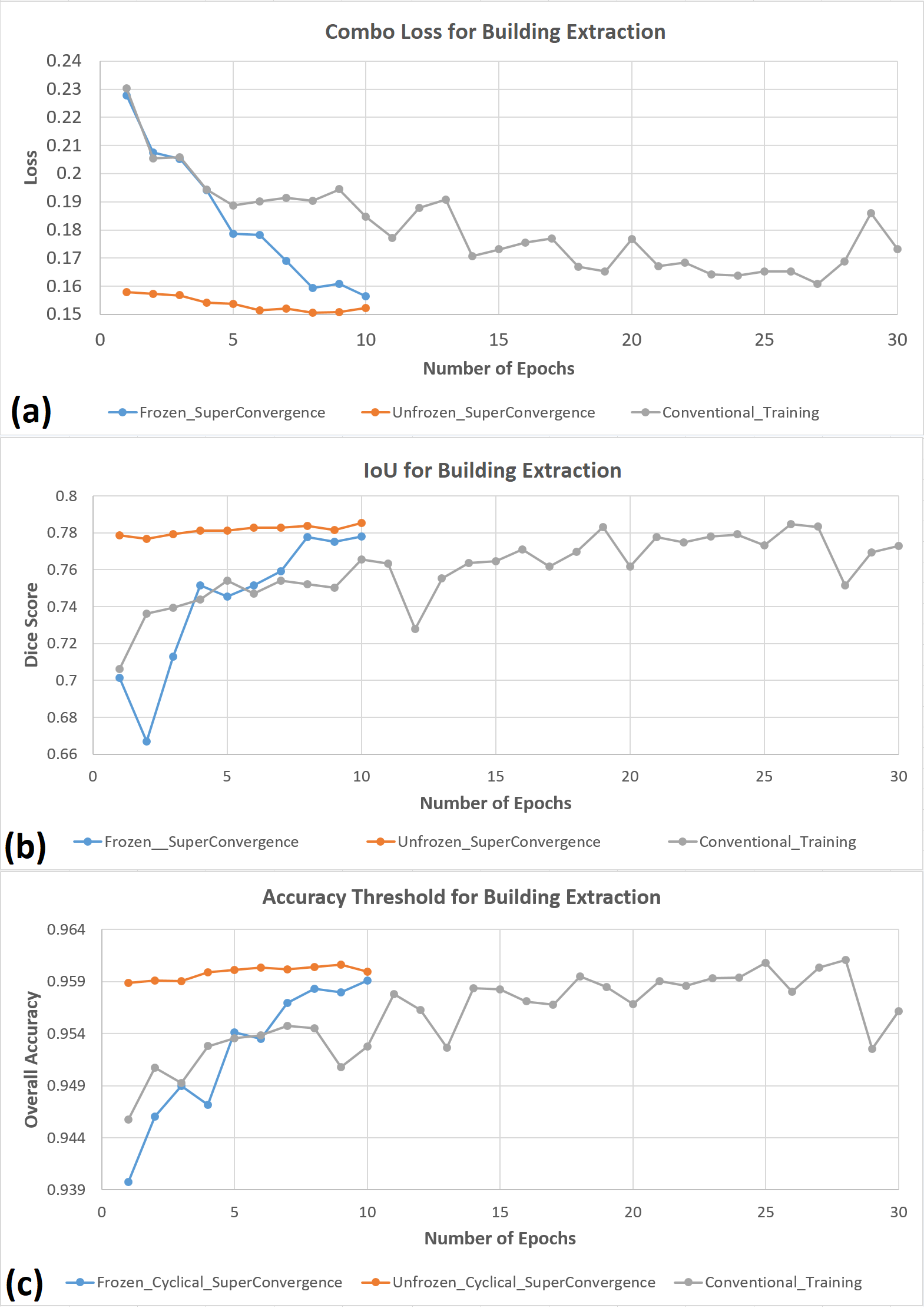

CLR and SuperConvergence yield steep early convergence in loss, IoU, and accuracy (as compared in Figure 9), validating their resource/time efficiency without compromising best-case accuracy.

Figure 9: (a) The combo loss curves for three different training techniques for Res-U-Net trained on CB0 validation dataset, (b) The IoU curves for three different training techniques for Res-U-Net trained on CB0 validation dataset, (c) The accuracy curves for three different training techniques for Res-U-Net trained on CB0 dataset.

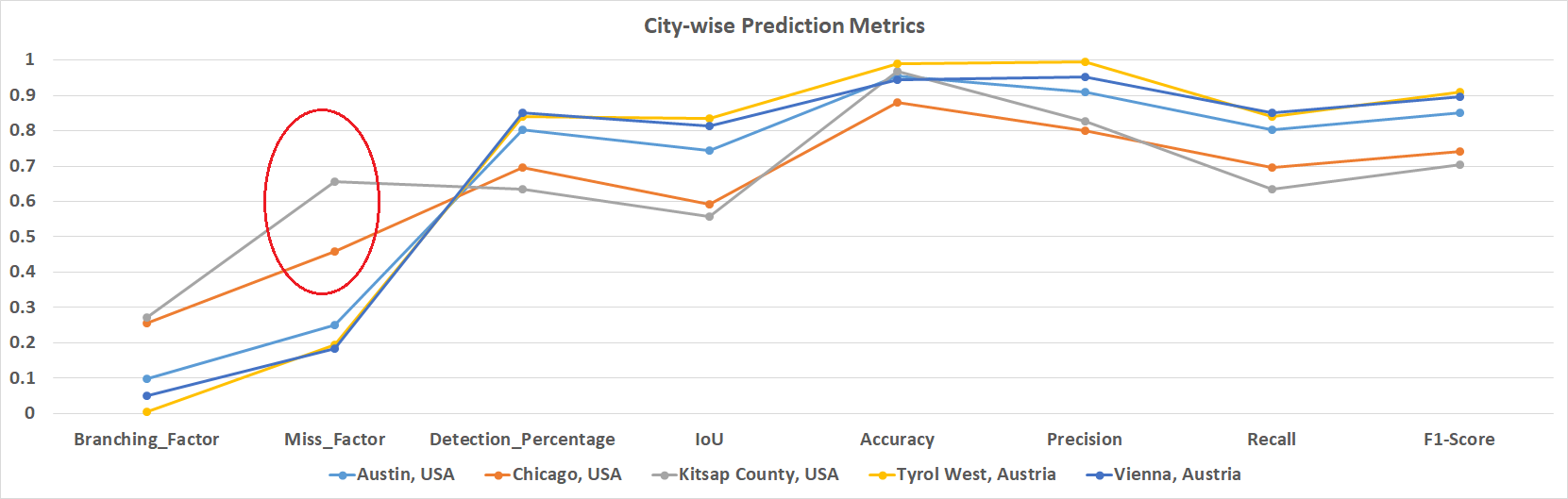

Error Analysis and Failure Modes

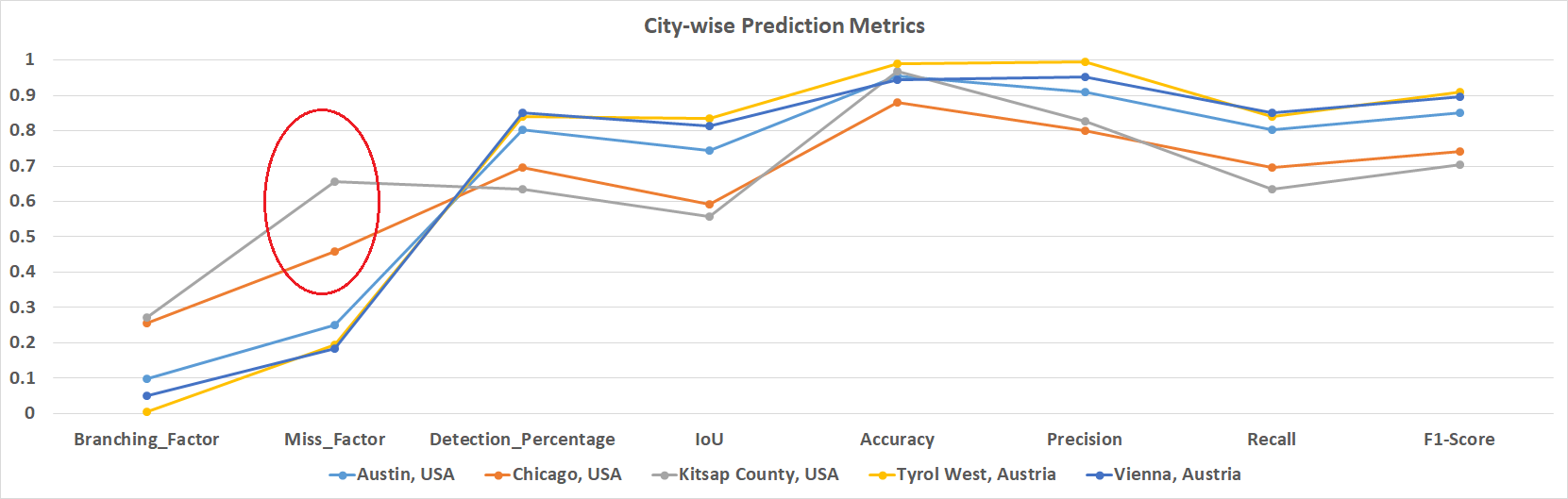

A city-wise breakdown shows that the false negative rate is highest in cities and image regions with a high shadow fraction (Figure 10). Manual inspection confirms the residual challenge of separating shadow-entangled buildings from true negatives when spectral or spatial features collapse under occlusion.

Figure 10: City-wise prediction metrics for the UAV test set; the high miss factor in Chicago and Kitsap is highlighted.

Figure 11: Select instances from the UAV test set of CB0 combination that led to high false positives and high miss factor.

Comparative Evaluation and Benchmarks

The proposed Res-U-Net + Combo-Loss (CB0) achieves results that outperform or match state-of-the-art approaches, including transformer backbones and advanced attention/topological regularization, in mean accuracy, F1, and IoU on public benchmarks (Inria, Massachusetts, Open Cities, etc.), with significantly lower computational and annotation requirements.

Implications, Limitations, and Future Directions

- Practical Implications: The framework is directly transferable to mapping applications in urban planning, disaster response, and infrastructure management, under visible-only satellite or UAV imagery constraints.

- Limitation: All tested deep learning strategies fail to yield high recall for buildings that are heavily occluded by shadow, indicating a persistent theoretical gap that likely requires incorporating temporal or multi-spectral (NIR/SAR) cues.

- Future Work: Primary research directions should focus on shadow-invariant feature representation, integration of additional complementary sensing modalities, and segmentation regularization using explicit shape priors or synthetic data augmentation for shadowed environments.

- Open Science: Code (PyTorch/FastAI-compatible) is made available. Model interoperability and transfer learning paths are facilitated by adherence to standard three-band (RGB) input.

Conclusion

The study provides a comprehensive solution for multiscale building segmentation in spectrally restricted remote sensing imagery, combining systematic feature augmentation, a scalable U-Net/ResNet architecture, and resource-efficient training. While overall results approach or surpass existing deep learning segmentation benchmarks for building extraction, persistent limitations remain for shadow-occluded structures. The methodology sets a practical reference for further research in the domain, with immediate real-world applicability to building detection and urban analytics from high-resolution RGB data.