- The paper introduces a new shared task that benchmarks language model pretraining on a corpus mimicking child-like linguistic exposure.

- It categorizes the challenge into strict, strict-small, and loose tracks to assess data efficiency under varied dataset constraints.

- The study bridges human language acquisition and model performance, highlighting techniques beneficial for low-resource language processing.

Summary of "The BabyLM Challenge: Sample-efficient Pretraining on a Developmentally Plausible Corpus"

The paper "Call for Papers - The BabyLM Challenge: Sample-efficient Pretraining on a Developmentally Plausible Corpus" (2301.11796) introduces a shared task named the BabyLM Challenge. This initiative aims to explore LLM pretraining on datasets that mimic the scale and type of linguistic input children receive, offering a practical framework for sample-efficient LLM development. Three distinct tracks are provided for participants to investigate various training strategies under these conditions.

Motivation

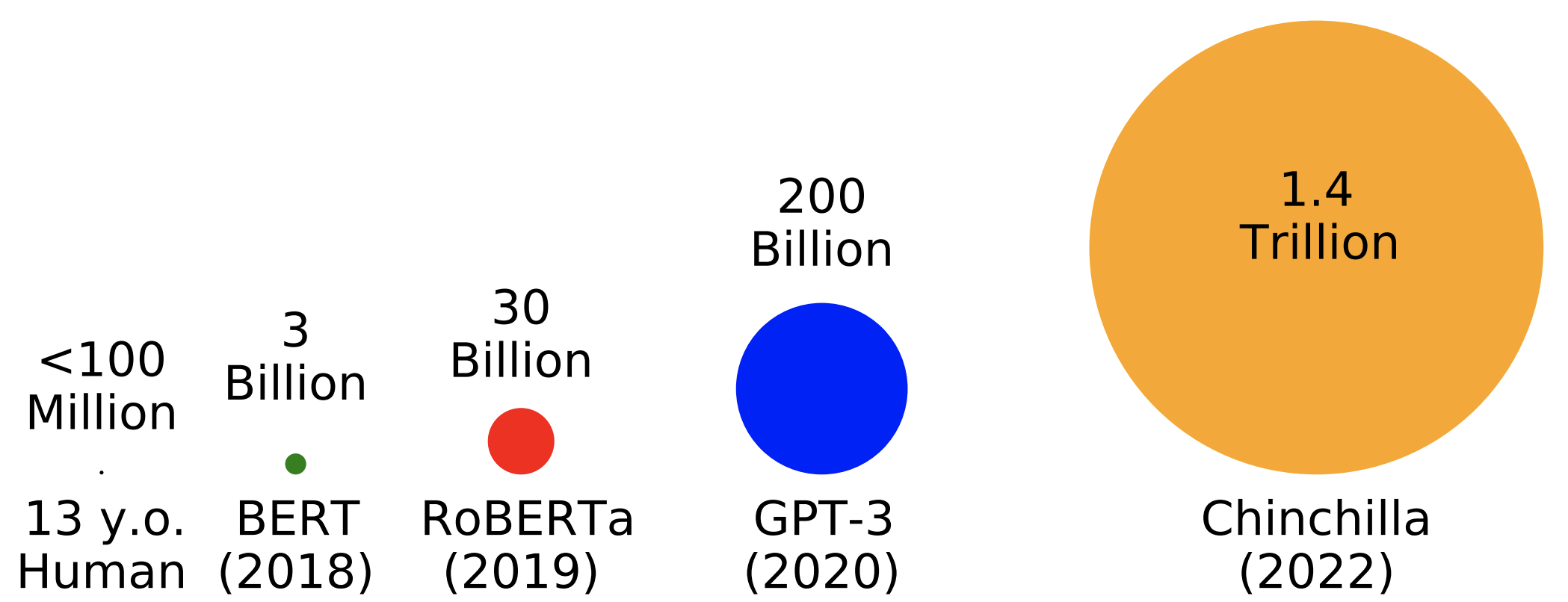

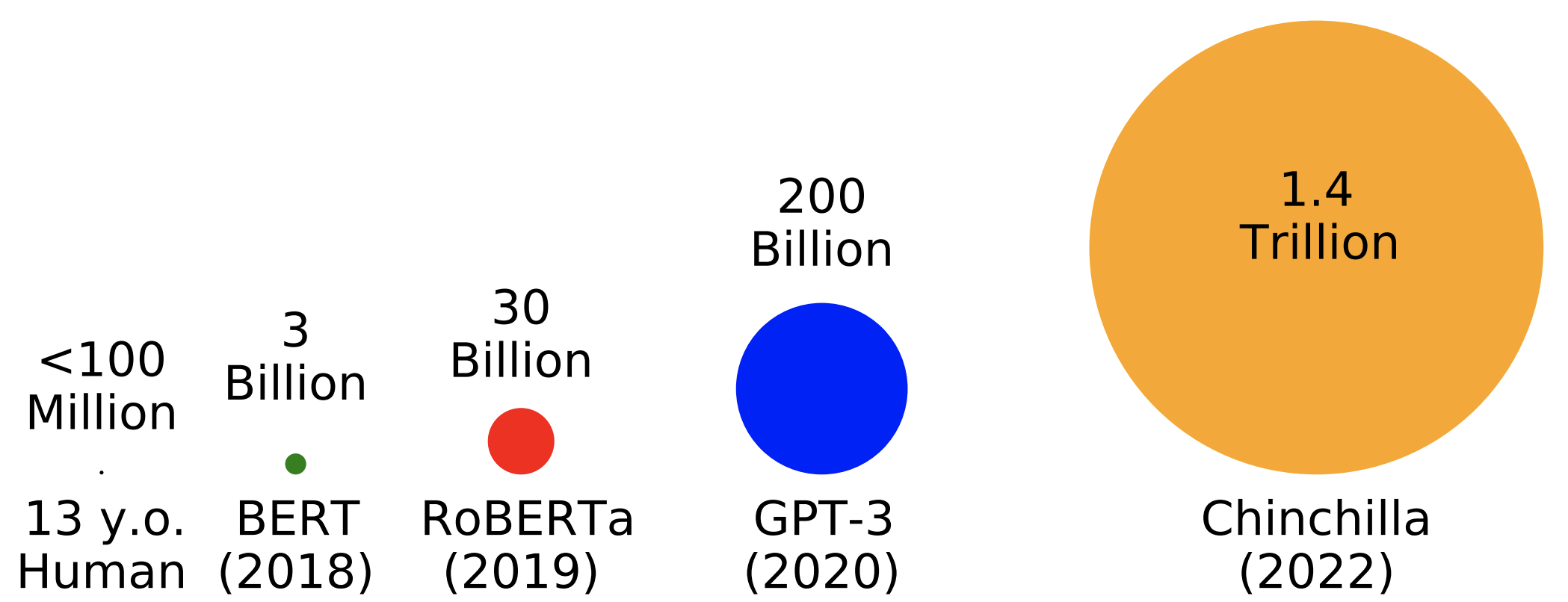

In recent years, the focus in LLM development has heavily leaned towards optimization at vast scales, both in terms of model parameters and dataset sizes, often far surpassing the typical linguistic exposure of human children (Figure 1).

Figure 1: Modern LLMs are trained on data multiple orders of magnitude larger than the amount available to a typical human child.

Despite the advances in large-scale language modeling, the paper emphasizes a notable gap in the research focused on smaller, human-like data scales. This challenge highlights the potential benefits of scaled-down pretraining, such as fostering novel techniques for data efficiency and enabling more accurate cognitive models that emulate human language acquisition. This could lead to significant insights into how humans learn languages effectively and inspire methods to enhance modeling for low-resource languages.

Challenge Structure and Tracks

The BabyLM Challenge comprises three primary tracks: Strict, Strict-small, and Loose. Participants in each track face various constraints regarding dataset size and content.

- Strict and Strict-small Tracks: These tracks confine submissions to a predetermined dataset size, namely 10M and 100M words, including data from child-directed speech, children's books, transcribed speech, and encyclopedic content like Wikipedia. The dataset offers a diverse language input, representing various domains, ideal for evaluating models on the shared evaluation pipeline.

- Loose Track: This track allows for more flexibility, permitting the use of non-linguistic data or model-generated text given the 100M word cap. Innovations in domain selection and data modality are encouraged here, fostering further exploration into language modeling outside conventional boundaries.

Dataset Composition

The datasets utilized in the challenge are crafted to reflect the type and quantity of linguistic input a child might encounter. Key considerations include:

- Scale: The dataset size mimics the annual linguistic exposure estimated for children, which ranges from 2M to 7M words.

- Content: A predominantly transcribed speech dataset reflects the spoken nature of most linguistic input to children, optimally configuring the dataset to address developmental preliminaries in language acquisition.

Evaluation and Baselines

An open evaluation pipeline will be provided, utilizing tools like HuggingFace’s transformers library to facilitate model testing across diverse settings. An assortment of baseline models, trained using the hyperparameters from well-known LLMs like OPT, RoBERTa, and T5, will be released. These baselines serve as rudimentary benchmarks, establishing a foundation upon which participants can build and compare their improvements.

Implications and Future Developments

The BabyLM Challenge positions itself as a crucial entry point for investigating sample-efficient LLM pretraining under data constraints inspired by human development. By focusing on smaller scales, the challenge democratises research, making it more accessible outside of large industry labs. This may stimulate advancements in data-efficient architectural approaches that could scale up to benefit larger datasets and low-resource language applications.

Moreover, this research opens pathways to understanding cognitive mechanisms underlying efficient human language learning, potentially leading to breakthroughs that bridge model performance with human-like language acquisition.

Conclusion

The BabyLM Challenge offers a substantial opportunity for advancements in sample-efficient language modeling, encouraging innovation within limited data confines while aligning closely with human developmental linguistic inputs. Through this challenge, researchers are invited to contribute toward building more cognitively plausible models, laying foundational insights that may inspire future trends in both language modeling and cognitive science.