Archetypal Analysis++: Rethinking the Initialization Strategy

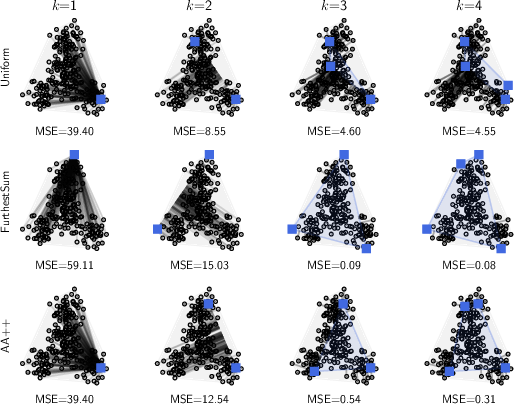

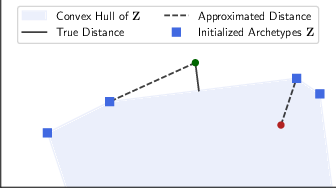

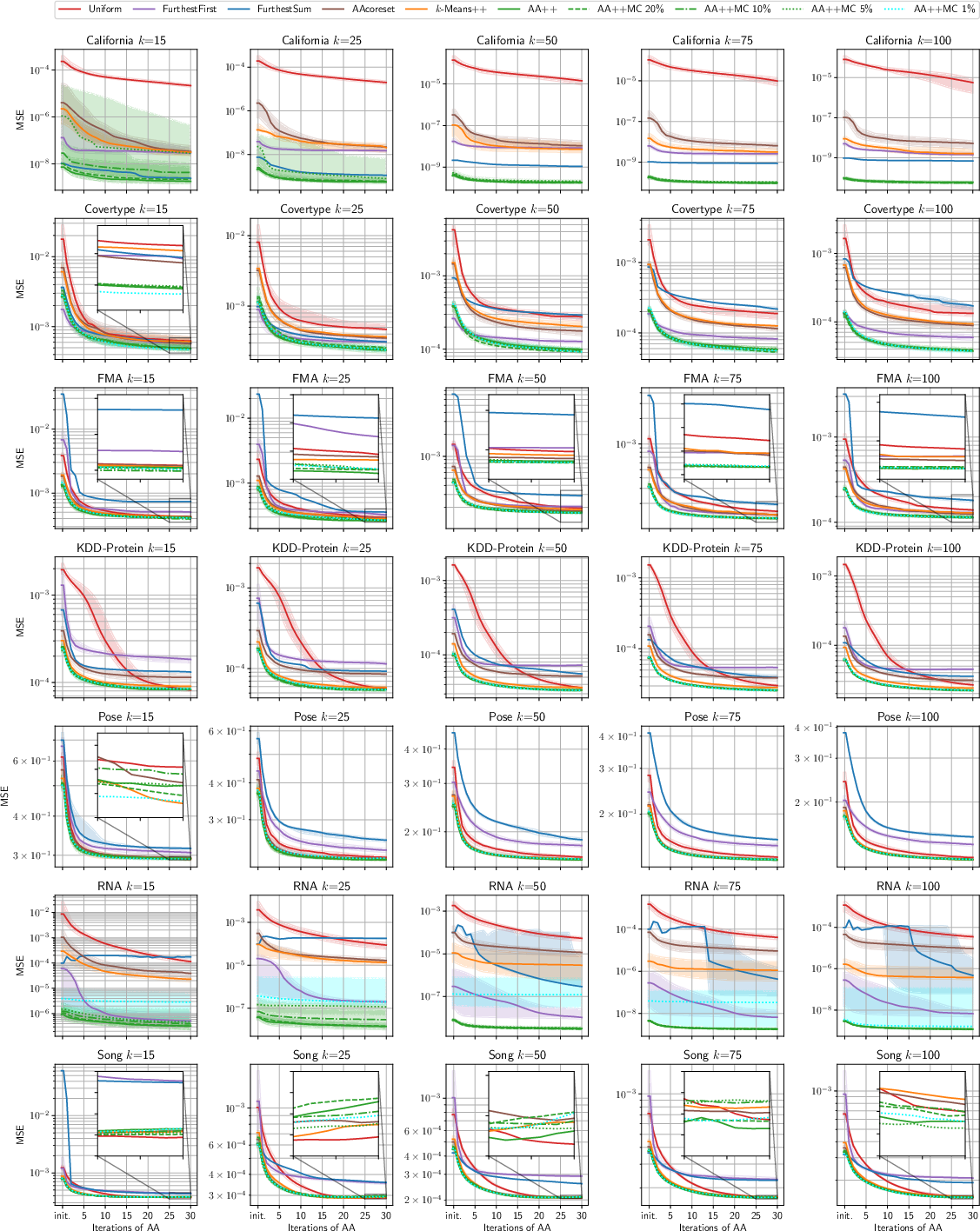

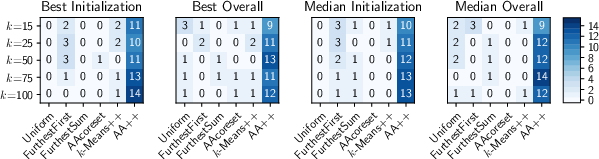

Abstract: Archetypal analysis is a matrix factorization method with convexity constraints. Because the objective has local minima, a good initialization is essential, but frequently used initialization methods either yield sub-optimal starting points or are prone to getting stuck in poor local minima. In this paper, we propose archetypal analysis++ (AA++), a probabilistic initialization strategy for archetypal analysis that sequentially samples points based on their influence on the objective function, similar to $k$-means++. In fact, we argue that $k$-means++ already approximates the proposed initialization method. Furthermore, we suggest adapting an efficient Monte Carlo approximation of $k$-means++ to AA++. In an extensive empirical evaluation on 15 real-world data sets of varying sizes and dimensionalities, and considering two pre-processing strategies, we show that AA++ almost always outperforms all baselines, including the most frequently used ones.
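For readers unfamiliar with the seeding scheme the abstract compares against, the following is a minimal sketch of standard $k$-means++ seeding, in which each new point is sampled with probability proportional to its squared distance to the nearest point already chosen. It is not the AA++ procedure itself, whose sampling weights are not spelled out in the abstract. The function name `kmeanspp_seeding` and its parameters are illustrative assumptions, not taken from the paper.

```python
import numpy as np


def kmeanspp_seeding(X, k, seed=None):
    """Return indices of k rows of X chosen by k-means++ (D^2) sampling.

    Hypothetical helper for illustration; this is plain k-means++ seeding,
    not the AA++ sampling rule proposed in the paper.
    """
    rng = np.random.default_rng(seed)
    n = X.shape[0]

    # First point: chosen uniformly at random.
    indices = [int(rng.integers(n))]

    # Squared distance from every point to its closest chosen point so far.
    d2 = np.sum((X - X[indices[0]]) ** 2, axis=1)

    for _ in range(1, k):
        total = d2.sum()
        if total == 0.0:
            # Every point coincides with a chosen one; fall back to uniform.
            probs = np.full(n, 1.0 / n)
        else:
            # Sample proportionally to the squared distance.
            probs = d2 / total
        idx = int(rng.choice(n, p=probs))
        indices.append(idx)

        # Update the closest-point distances with the newly chosen point.
        d2 = np.minimum(d2, np.sum((X - X[idx]) ** 2, axis=1))

    return np.array(indices)


if __name__ == "__main__":
    data = np.random.default_rng(0).normal(size=(200, 3))
    print(kmeanspp_seeding(data, k=5, seed=0))
```

Points far from everything chosen so far are favored, which tends to pick points near the boundary of the data. That is one intuition for why such seeding could serve as a rough starting point for archetypes, which lie on the convex hull.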
