- The paper introduces a comprehensive design space that merges symbolic procedural models with neural techniques to achieve high-quality visual synthesis.

- It details methodologies including task specification, DSL formulation, and program synthesis to support effective 2D, 3D, and texture generation.

- The study outlines future research directions focusing on improved task specification and complex program generation to overcome current limitations.

Neurosymbolic Models for Computer Graphics

The paper "Neurosymbolic Models for Computer Graphics" (2304.10320) explores how symbolic procedural models can be integrated with machine learning techniques to advance computer graphics applications. The aim is to retain the interpretability, compact representation, and high-quality output characteristic of symbolic models while gaining the adaptability and ease of use of machine learning.

Overview of Neurosymbolic Models

Neurosymbolic models combine the strengths of symbolic and neural methodologies. Symbolic procedural models have long been favored for their ability to produce high-quality visuals from compact, interpretable representations. However, creating these models from scratch is challenging and requires substantial expertise. Neural models, by contrast, simplify the creation process: users specify the desired visual properties directly, and a learning or optimization algorithm handles the details, though often at the cost of interpretability.

In computer graphics, neurosymbolic models aim to synthesize, represent, and manipulate visual data by hybridizing symbolic and neural approaches. The paper lays out a design space in which different neurosymbolic model formulations can be compared, then surveys specific applications and the research directions they suggest.

Design Space for Neurosymbolic Models

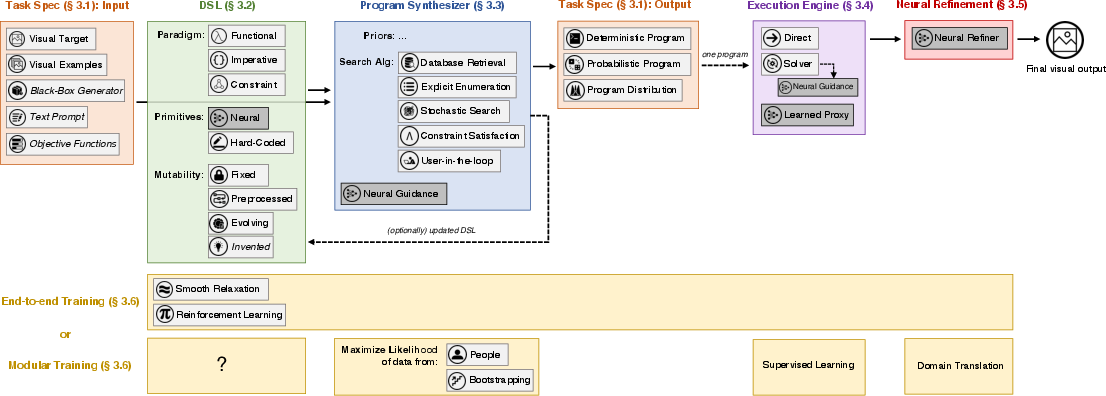

The paper proposes a comprehensive design space, which is structured around major components in the neurosymbolic modeling pipeline:

- Task Specification: Defines how user intent is communicated to the system, whether through visual targets, examples, or constraints.

- Domain-Specific Language (DSL): Specifies the language used for program representation, covering different paradigms and the incorporation of neural primitives.

- Program Synthesizer: Outlines the strategies for generating programs that fulfill the task specifications, including search algorithms and guidance mechanisms.

- Execution Engine: Describes how a program is turned into visual output, which may involve direct execution, solver-based approaches, or both.

- Neural Postprocessing: Outlines optional neural refinement steps for enhancing visual output quality.

This framework reveals current research trends and identifies underexplored areas in the design space, providing a roadmap for potential advancements.
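To make the pipeline concrete, the following minimal sketch wires the five components together for a toy problem. Everything in it is an illustrative assumption rather than something taken from the paper: the DSL has a single hypothetical `Rect` primitive, the task specification is a target binary raster, the synthesizer is a plain greedy search with no neural guidance, and the postprocessing stage is an optional callable left empty.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, List, Optional, Tuple

Image = List[List[int]]  # binary raster, indexed as image[y][x]


@dataclass(frozen=True)
class Rect:
    """Hypothetical DSL primitive: a filled axis-aligned rectangle."""
    x: int
    y: int
    w: int
    h: int


Program = Tuple[Rect, ...]  # a program is a sequence of DSL primitives


def execute(program: Program, size: int) -> Image:
    """Execution engine: direct rasterization onto a size x size grid."""
    img = [[0] * size for _ in range(size)]
    for r in program:
        for yy in range(r.y, min(r.y + r.h, size)):
            for xx in range(r.x, min(r.x + r.w, size)):
                img[yy][xx] = 1
    return img


def mismatch(a: Image, b: Image) -> int:
    """Number of pixels on which two rasters disagree."""
    return sum(pa != pb for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))


def synthesize(target: Image, max_rects: int = 2) -> Program:
    """Program synthesizer: greedy search that repeatedly adds the
    rectangle giving the largest drop in pixel mismatch."""
    size = len(target)
    candidates = [Rect(x, y, w, h)
                  for x, y in product(range(size), repeat=2)
                  for w in range(1, size - x + 1)
                  for h in range(1, size - y + 1)]
    program: Program = ()
    best = mismatch(execute(program, size), target)
    for _ in range(max_rects):
        err, idx = min((mismatch(execute(program + (c,), size), target), i)
                       for i, c in enumerate(candidates))
        if err >= best:  # no candidate improves the fit, so stop
            break
        program, best = program + (candidates[idx],), err
    return program


def run_pipeline(target: Image,
                 postprocess: Optional[Callable[[Image], Image]] = None):
    """Task specification in, (program, visual output) out."""
    program = synthesize(target)
    output = execute(program, len(target))
    if postprocess is not None:  # stand-in for optional neural refinement
        output = postprocess(output)
    return program, output


# Task specification: a visual target made of two rectangles.
target = execute((Rect(1, 1, 3, 2), Rect(4, 4, 2, 2)), size=8)
program, output = run_pipeline(target)
print(program)
```

In a real neurosymbolic system the greedy loop would typically be replaced or guided by a learned model, and the postprocessing hook would be a trained network; the sketch only shows where each component sits.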

Applications in Graphics Domains

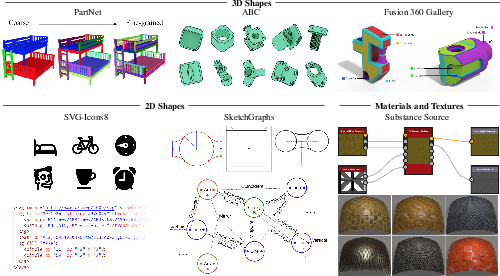

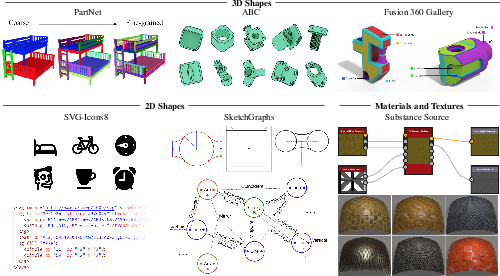

2D Shapes: Neurosymbolic methods for 2D shapes cover the synthesis of layouts, engineering sketches, and vector graphics, as well as the inversion of graphical representations to infer the programs that underlie them. These applications show that the models can handle both generation and reconstruction tasks.
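As a small illustration of what inferring an underlying program can mean for a 2D layout, the sketch below takes the x-positions of elements in a row and folds evenly spaced runs into a loop-like statement. The two-statement DSL (`Place`, `Repeat`) and the greedy run-detection heuristic are assumptions made for this example; the methods surveyed in the paper generally rely on search or learned models rather than a fixed rule.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class Place:
    """Hypothetical DSL statement: put one element at position x."""
    x: int


@dataclass(frozen=True)
class Repeat:
    """Hypothetical DSL statement: `count` elements starting at `start`,
    spaced `step` apart."""
    start: int
    step: int
    count: int


Stmt = Union[Place, Repeat]


def run(program: List[Stmt]) -> List[int]:
    """Execute a layout program and return the sorted element positions."""
    out: List[int] = []
    for stmt in program:
        if isinstance(stmt, Place):
            out.append(stmt.x)
        else:
            out.extend(stmt.start + i * stmt.step for i in range(stmt.count))
    return sorted(out)


def infer(xs: List[int], min_run: int = 3) -> List[Stmt]:
    """Greedy inverse step: scan sorted positions left to right and replace
    each evenly spaced run of at least `min_run` elements with a Repeat."""
    xs = sorted(xs)
    program: List[Stmt] = []
    i = 0
    while i < len(xs):
        end = i  # index of the last element of the run starting at i
        step = 0
        if i + 1 < len(xs):
            step = xs[i + 1] - xs[i]
            end = i + 1
            while end + 1 < len(xs) and xs[end + 1] - xs[end] == step:
                end += 1
        count = end - i + 1
        if count >= min_run:
            program.append(Repeat(xs[i], step, count))
            i = end + 1
        else:
            program.append(Place(xs[i]))
            i += 1
    return program


positions = [10, 30, 50, 70, 95]
layout_program = infer(positions)
print(layout_program)
```

The inferred program reproduces the input when executed, but it is shorter and exposes an editable parameter (the spacing), which is the kind of structure that reconstruction tasks aim to recover.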

Figure 1: The design space of neurosymbolic models. A neurosymbolic model takes as input a specification of user intent and a domain-specific language (DSL), and it synthesizes programs that output visual data that satisfies the user intent.

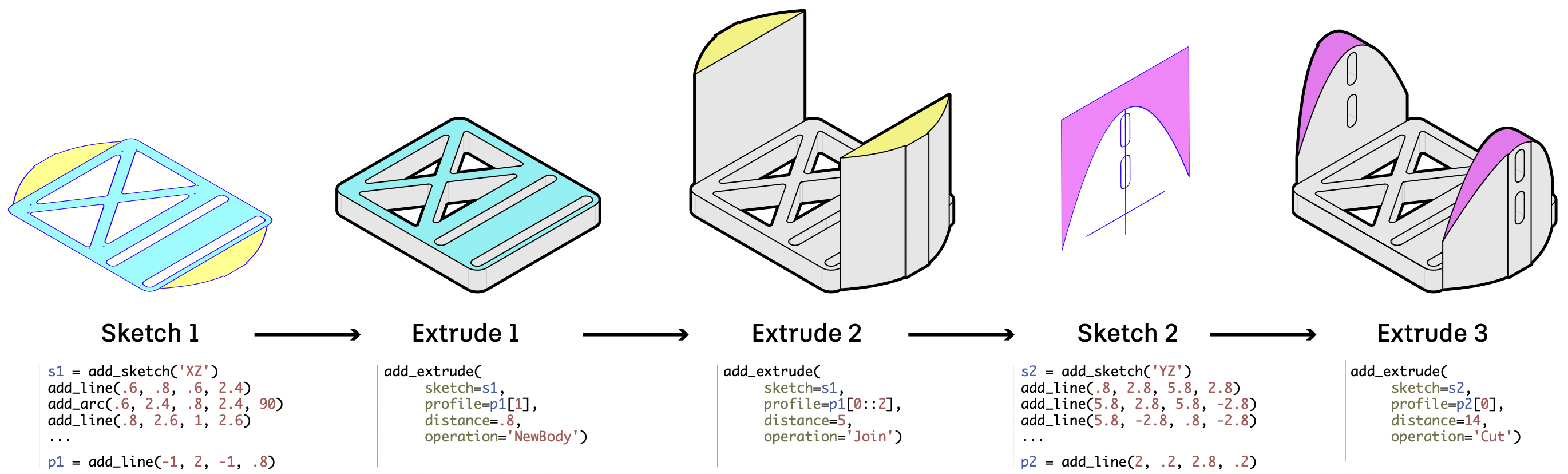

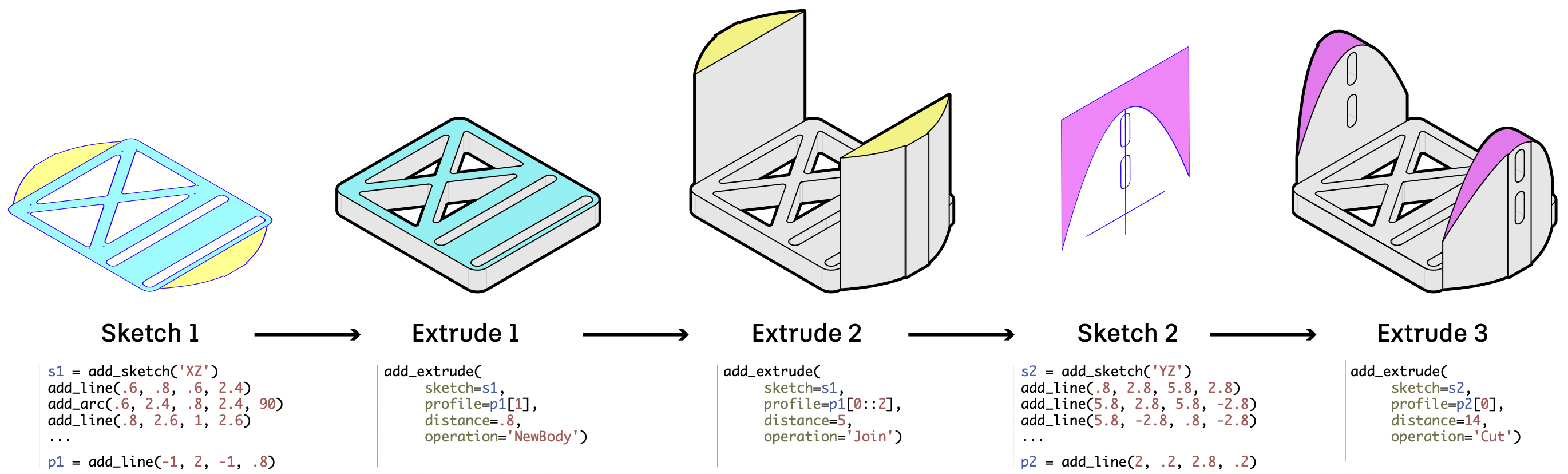

3D Shapes: In 3D modeling, neurosymbolic systems infer shape programs from visual targets and generate novel shapes. Because the programs are written in structured representations such as CAD operations and constructive solid geometry (CSG), the resulting shapes can be interpreted and manipulated directly.
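The sketch below shows why a CSG-style shape program is easy to interpret and edit. It defines a hypothetical three-node DSL (`Sphere`, `Box`, `Combine`) with a point-membership test as its execution engine; the node names and the choice of membership testing over meshing are assumptions for illustration, not the representation used by any particular system in the paper.

```python
from dataclasses import dataclass, replace
from typing import Tuple, Union

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Sphere:
    center: Point
    radius: float


@dataclass(frozen=True)
class Box:
    lo: Point  # minimum corner
    hi: Point  # maximum corner


@dataclass(frozen=True)
class Combine:
    op: str  # "union", "intersection", or "difference"
    left: "Node"
    right: "Node"


Node = Union[Sphere, Box, Combine]


def contains(node: Node, p: Point) -> bool:
    """Execute the shape program at one point: is p inside the solid?"""
    if isinstance(node, Sphere):
        dist2 = sum((a - c) ** 2 for a, c in zip(p, node.center))
        return dist2 <= node.radius ** 2
    if isinstance(node, Box):
        return all(l <= a <= h for a, l, h in zip(p, node.lo, node.hi))
    in_left, in_right = contains(node.left, p), contains(node.right, p)
    if node.op == "union":
        return in_left or in_right
    if node.op == "intersection":
        return in_left and in_right
    if node.op == "difference":
        return in_left and not in_right
    raise ValueError(f"unknown CSG operation: {node.op}")


# A cube with a spherical cavity, written as a three-node program.
shape = Combine("difference",
                Box((-1.0, -1.0, -1.0), (1.0, 1.0, 1.0)),
                Sphere((0.0, 0.0, 0.0), 0.5))

# Editing the shape means editing one named parameter of the program.
wider_cavity = replace(shape, right=replace(shape.right, radius=1.2))

probe = (0.9, 0.0, 0.0)
print(contains(shape, probe), contains(wider_cavity, probe))
```

Inferring such a program from a visual target amounts to searching over trees of this form, while editing it only requires changing a parameter such as `radius`.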

Figure 2: Procedural modeling as applied to CAD: using feature-based modeling to produce solid geometry as a composition of operations on 2D sketches extruded into boundary representations (Fusion 360 Gallery).

Materials and Textures: Recent work synthesizes node graphs for procedural materials either through learned generation or inverse procedural modeling, aiming to replicate target appearances or generate novel, visually plausible textures.
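A procedural material node graph can be pictured as a small dataflow program. The sketch below evaluates such a graph in dependency order to produce a grayscale texture. The four node types (`checker`, `gradient`, `blend`, `threshold`), the graph encoding, and the parameter names are all hypothetical and do not correspond to any real material authoring tool; `graphlib` requires Python 3.9 or later.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Tuple

Texture = List[List[float]]  # grayscale values in [0, 1], indexed [y][x]


def checker(size: int, cells: int) -> Texture:
    """Generator node: checkerboard with `cells` squares per side."""
    return [[float((x * cells // size + y * cells // size) % 2)
             for x in range(size)] for y in range(size)]


def gradient(size: int) -> Texture:
    """Generator node: horizontal ramp from 0 (left) to 1 (right)."""
    return [[x / (size - 1) for x in range(size)] for _ in range(size)]


def blend(a: Texture, b: Texture, t: float) -> Texture:
    """Filter node: linear interpolation between two inputs."""
    return [[(1 - t) * pa + t * pb for pa, pb in zip(ra, rb)]
            for ra, rb in zip(a, b)]


def threshold(a: Texture, level: float) -> Texture:
    """Filter node: binarize the input at `level`."""
    return [[1.0 if p >= level else 0.0 for p in row] for row in a]


# Dispatch table: each op receives its input textures, the size, and params.
OPS = {
    "checker": lambda ins, size, cells: checker(size, cells),
    "gradient": lambda ins, size: gradient(size),
    "blend": lambda ins, size, t: blend(ins[0], ins[1], t),
    "threshold": lambda ins, size, level: threshold(ins[0], level),
}

# The material program: node name -> (op, input node names, parameters).
GRAPH: Dict[str, Tuple[str, List[str], dict]] = {
    "tiles": ("checker", [], {"cells": 4}),
    "ramp": ("gradient", [], {}),
    "mix": ("blend", ["tiles", "ramp"], {"t": 0.5}),
    "out": ("threshold", ["mix"], {"level": 0.5}),
}


def evaluate(graph: Dict[str, Tuple[str, List[str], dict]],
             output: str, size: int) -> Texture:
    """Evaluate every node after its inputs and return the output node."""
    deps = {name: set(spec[1]) for name, spec in graph.items()}
    values: Dict[str, Texture] = {}
    for name in TopologicalSorter(deps).static_order():
        op, inputs, params = graph[name]
        values[name] = OPS[op]([values[i] for i in inputs], size, **params)
    return values[output]


texture = evaluate(GRAPH, "out", size=8)
for row in texture:
    print("".join("#" if p else "." for p in row))
```

In this framing, inverse procedural modeling searches for a graph and parameters whose output matches a target appearance, while learned generation proposes new graphs of the same form.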

Figure 3: Examples of datasets that provide structured data that is more amenable to neurosymbolic models. We show examples of datasets for 2D shapes, 3D shapes, and materials. All datasets provide some form of structural information, such as part decompositions, construction sequences, or geometric relationships between parts.

Future Directions and Challenges

The paper highlights several avenues for future research:

- Task Specification Innovations: Explore new ways to specify user intent and broaden interaction paradigms (e.g., natural language interfaces).

- Complex Program Generation: Advance neurosymbolic models' ability to handle complex languages for practical applications.

- Invention of Domain-Specific Languages: Discover new languages tailored to specific visual domains and distributions.

- Modular and End-to-End Training: Enhance techniques for training in the absence of curated datasets of human-authored programs.

Progress on these fronts would address current limitations in both the usability and the capability of neurosymbolic models.

Conclusion

Neurosymbolic modeling represents a promising frontier in computer graphics, with applications reaching across design, automotive, simulation, and entertainment. By combining the robustness of symbolic models with the flexibility of neural ones, these models enable more interpretable, versatile, and efficient visual content creation. As research addresses the gaps outlined above, neurosymbolic models are likely to become increasingly integral to modern computer graphics workflows.