- The paper introduces a framework that combines global distributed and structured symbolic latent representations to model complex scenes.

- It employs a dual-layer hierarchy with the StructDRAW prior for autoregressive feature-level generation and improved interpretability.

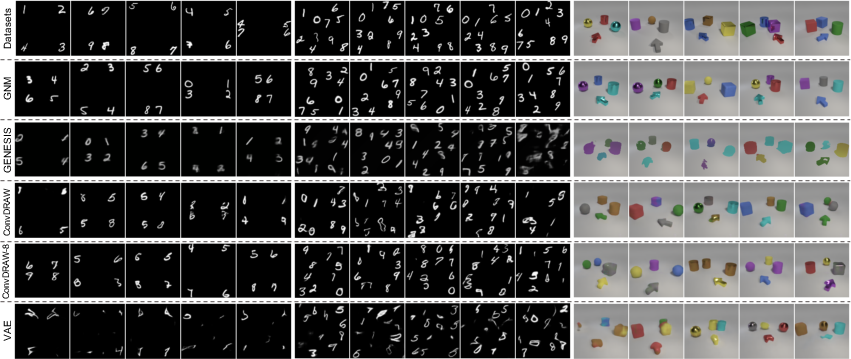

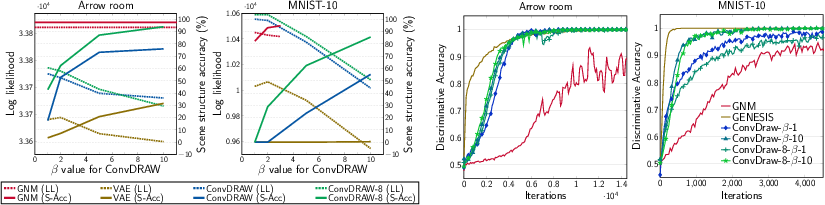

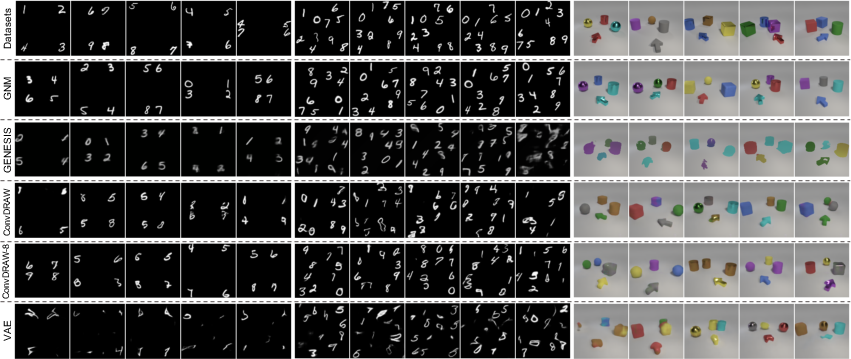

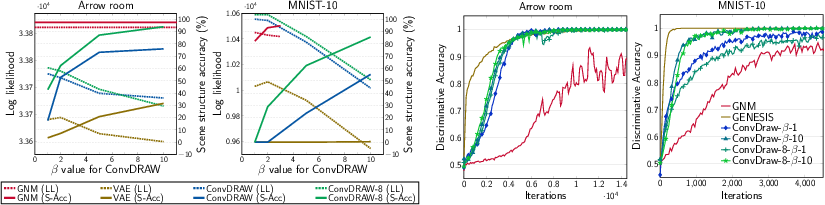

- Experiments on MNIST-4, MNIST-10, and Arrow room datasets demonstrate enhanced scene structure accuracy and competitive log-likelihoods.

Generative Neurosymbolic Machines

Introduction

The paper "Generative Neurosymbolic Machines" (arXiv:2010.12152) explores how to reconcile symbolic and distributed representations in deep learning, addressing limitations in capturing complex, structured observations. It proposes Generative Neurosymbolic Machines (GNM), which use both distributed and symbolic representations to support structured recognition and density-based generation. The architecture uses a two-layer latent hierarchy, with a global distributed latent representation above a structured symbolic latent map, enhanced by the StructDRAW prior.

Symbolic and Distributed Representations

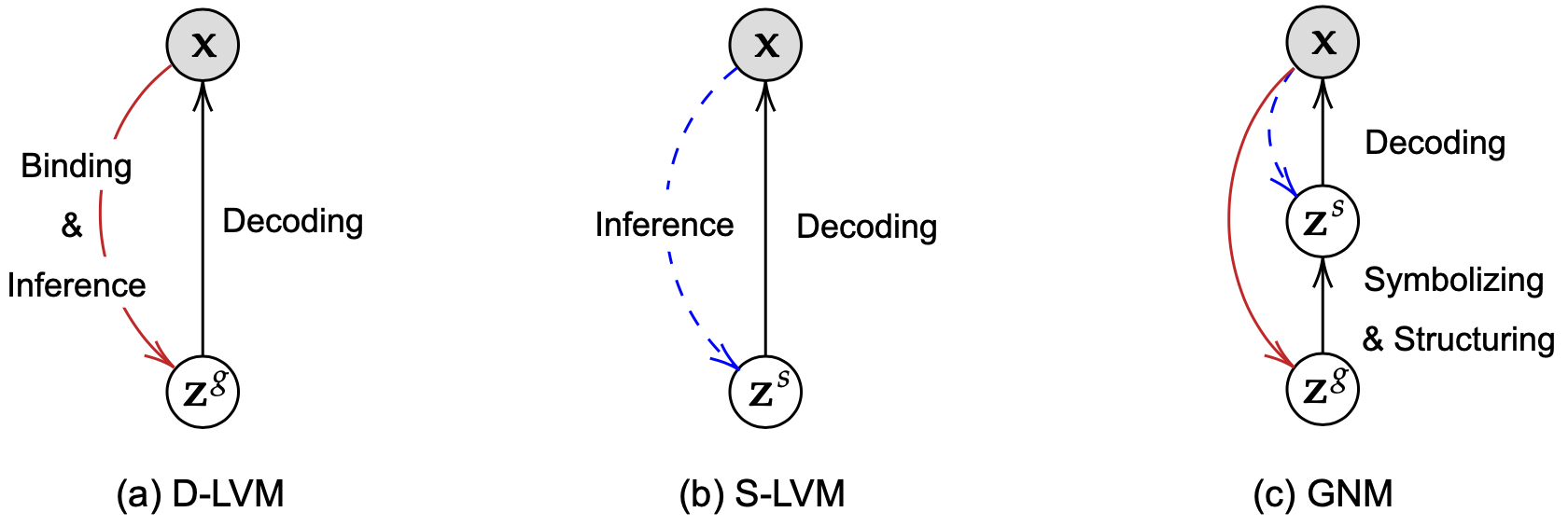

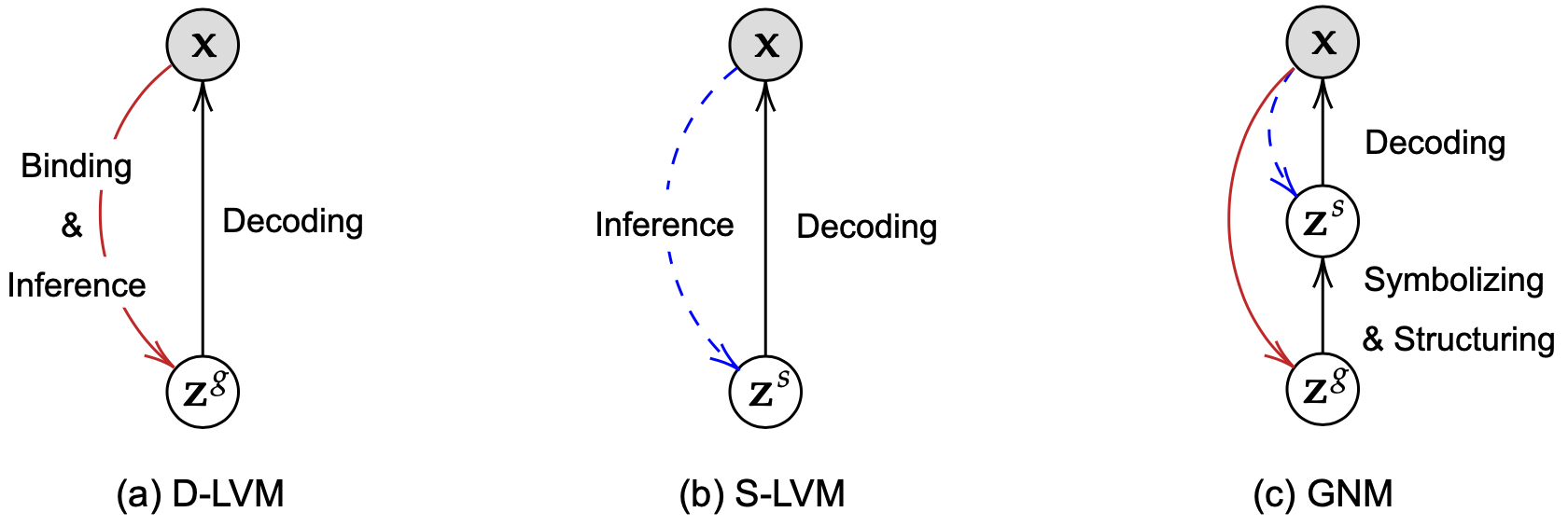

In latent variable models such as VAEs, a representation performs two jobs: variable binding and value inference. Symbolic representations assign a semantic role to each latent variable independently, while distributed representations share semantic roles across the elements of a latent vector, which are correlated with one another. A latent variable model with a distributed representation (D-LVM) offers flexibility in representing complex distributions, while one with a symbolic representation (S-LVM) is more interpretable and facilitates reasoning and modularity.

Figure 1: Graphical models of D-LVM, S-LVM, and GNM. $\mathbf{z}^g$ is the global distributed latent representation, $\mathbf{z}^s$ is the symbolic structured representation, and $\mathbf{x}$ is an observation.

Generative Neurosymbolic Machines

Generation Process

GNM generates observations through a hierarchical latent structure. The top layer uses a distributed global representation ($\mathbf{z}^g$) to capture the global scene structure, and the bottom layer uses it to construct the structured symbolic representation ($\mathbf{z}^s$). The proposed StructDRAW prior makes the latent structure map more expressive by drawing abstract features over autoregressive steps and allowing global interaction among components.
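
Read as a probabilistic model, the two-layer hierarchy described above suggests the following factorization of the joint density. This is a sketch inferred from the description and from the GNM graph in Figure 1, not a formula quoted from the paper; $p$ denotes the generative model.

$$
p(\mathbf{x}, \mathbf{z}^s, \mathbf{z}^g) = p(\mathbf{x} \mid \mathbf{z}^s)\, p(\mathbf{z}^s \mid \mathbf{z}^g)\, p(\mathbf{z}^g)
$$

Under this reading, sampling proceeds top-down: draw $\mathbf{z}^g$, then draw $\mathbf{z}^s$ given $\mathbf{z}^g$ (the step that StructDRAW parameterizes), then render $\mathbf{x}$ from $\mathbf{z}^s$.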

Structured Representation

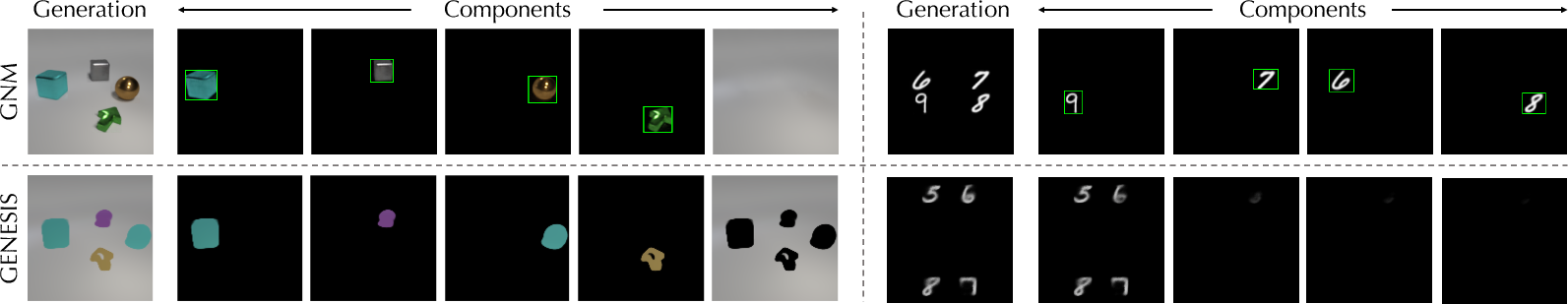

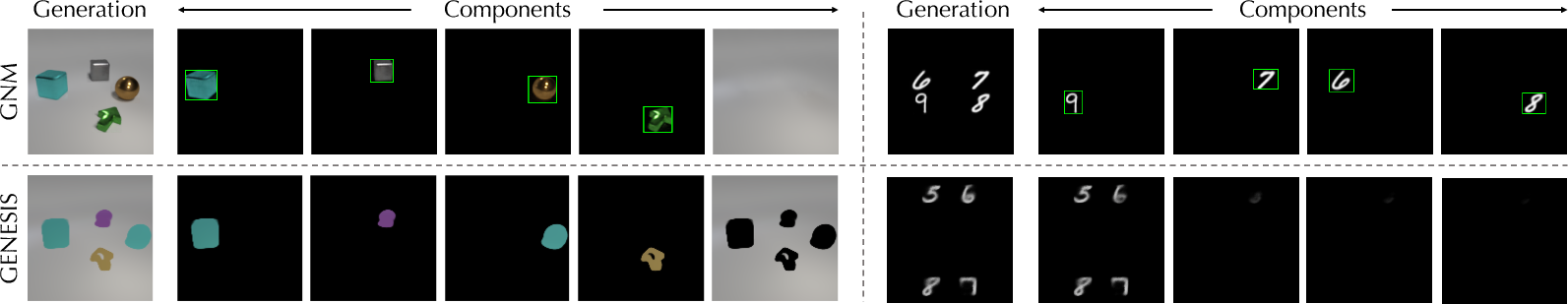

Structured representations in GNM disentangle variables into symbolic components like presence, position, depth, and appearance for multi-object scene modeling. This hybrid symbolic-distributed approach facilitates scalable modeling in object-crowded scenes.
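
One way to picture the symbolic components listed above is as a grid of cells, each holding its own presence, position, depth, and appearance variables. The sketch below is illustrative only: the class name `StructuredLatentMap`, the helper `sample_map`, the grid size, the four-number box encoding, and the appearance dimension are all assumptions made for this example, not details taken from the paper.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class StructuredLatentMap:
    """Hypothetical container for an H x W map of per-cell symbolic latents."""

    pres: np.ndarray   # (H, W)    binary presence flag per cell
    where: np.ndarray  # (H, W, 4) box per cell (assumed: centre x, centre y, width, height)
    depth: np.ndarray  # (H, W)    relative depth, usable for occlusion ordering
    what: np.ndarray   # (H, W, D) distributed appearance vector per cell

    def present_objects(self):
        """Return (where, depth, what) for cells with pres == 1, sorted by depth."""
        mask = self.pres.astype(bool)
        where, depth, what = self.where[mask], self.depth[mask], self.what[mask]
        order = np.argsort(depth)
        return where[order], depth[order], what[order]


def sample_map(rng, H=4, W=4, D=8, p_pres=0.25):
    """Draw a random map from simple independent placeholder distributions."""
    return StructuredLatentMap(
        pres=(rng.random((H, W)) < p_pres).astype(np.int64),
        where=rng.random((H, W, 4)),
        depth=rng.random((H, W)),
        what=rng.standard_normal((H, W, D)),
    )
```

Each cell's role (presence, position, depth) is fixed by construction, which is the symbolic part, while `what` remains a free-form vector, which is the distributed part. The independent sampling in `sample_map` is only a placeholder; in GNM the map is generated conditionally on the global latent.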

Figure 2: Datasets and generation examples. MNIST-4 (left), MNIST-10 (middle), and Arrow room (right).

StructDRAW

StructDRAW addresses the need for an expressive prior over the structured latent map. Instead of drawing pixels, it draws abstract structure at the feature level. It introduces an interaction layer that captures global correlations among components, which supports scalable modeling of complex scenes.
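
The idea of drawing features over several autoregressive steps, with an interaction layer that lets every component influence every other, can be sketched as a toy loop. Everything here is an assumption made for illustration: the function name `struct_draw_prior`, the random untrained weights, the `tanh` nonlinearities, the fixed noise scale, and the use of a single dense layer over the flattened map as the interaction layer. It is not the paper's architecture.

```python
import numpy as np


def struct_draw_prior(z_g, rng, H=4, W=4, C=8, steps=4):
    """Toy autoregressive prior that draws onto a feature-level canvas.

    All weights are random and untrained; shapes and layer choices are
    illustrative assumptions, not the paper's architecture.
    """
    n = H * W * C
    # Hypothetical parameters: conditioning on z_g, a dense interaction layer,
    # and a projection that produces the mean of each step's latent.
    W_g = rng.standard_normal((z_g.size, n)) / np.sqrt(z_g.size)
    W_int = rng.standard_normal((n, n)) / np.sqrt(n)
    W_mu = rng.standard_normal((n, n)) / np.sqrt(n)

    canvas = np.zeros(n)  # abstract feature canvas, not pixels
    for _ in range(steps):
        # Each step sees the global latent and everything drawn so far.
        h = np.tanh(canvas + z_g @ W_g)
        # Interaction layer: every output unit depends on every cell of the map.
        h = np.tanh(h @ W_int)
        # Sample this step's latent and write it additively onto the canvas.
        mu = h @ W_mu
        z_t = mu + 0.1 * rng.standard_normal(n)
        canvas = canvas + z_t
    return canvas.reshape(H, W, C)
```

For example, `struct_draw_prior(np.ones(16), np.random.default_rng(0))` returns a `(4, 4, 8)` feature map. A model would then decode such a map into per-cell symbolic latents rather than into pixels.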

Figure 3: Component-wise generation with GNM and GENESIS. Green bounding boxes represent $\mathbf{z}^{\text{where}}$.

Inference and Learning

Inference approximates the posterior using a mean-field decomposition, combining a flexible distributed global representation with a structured symbolic representation. Curriculum training facilitates component learning by iteratively optimizing the background and foreground explanations, which encourages modular learning.
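
For orientation, the training objective of such a model is typically an evidence lower bound. The form below is a sketch under two assumptions that are not quoted from the paper: the generative model factorizes as $p(\mathbf{x} \mid \mathbf{z}^s)\, p(\mathbf{z}^s \mid \mathbf{z}^g)\, p(\mathbf{z}^g)$, and $q$ denotes the approximate posterior, whose exact mean-field factorization is left unspecified here.

$$
\log p(\mathbf{x}) \ge \mathbb{E}_{q(\mathbf{z}^g, \mathbf{z}^s \mid \mathbf{x})}\left[ \log p(\mathbf{x} \mid \mathbf{z}^s) + \log \frac{p(\mathbf{z}^s \mid \mathbf{z}^g)\, p(\mathbf{z}^g)}{q(\mathbf{z}^g, \mathbf{z}^s \mid \mathbf{x})} \right]
$$

The first term rewards accurate reconstruction from the structured map, and the second penalizes posteriors that stray from the hierarchical prior.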

Experiments

GNM outperforms the baselines at generating clear images that preserve scene structure on the MNIST-4, MNIST-10, and Arrow room datasets. It achieves high scene structure accuracy (S-Acc), favorable discriminability scores (D-Steps), and competitive log-likelihoods, demonstrating effective modeling of complex scene dependencies.

Figure 4: Beta effect (left) and learning curve for binary discriminator (right).

Conclusion

Generative Neurosymbolic Machines integrate symbolic and distributed representations, advancing scene modeling in generative latent variable frameworks. Their dual representation enables interpretable, compositional learning together with density-based generation, opening avenues for further work on reasoning and causal learning. Future challenges include applying GNM in reinforcement learning and exploring deeper integration of symbolic logic with neural models.