Neural Language of Thought Models

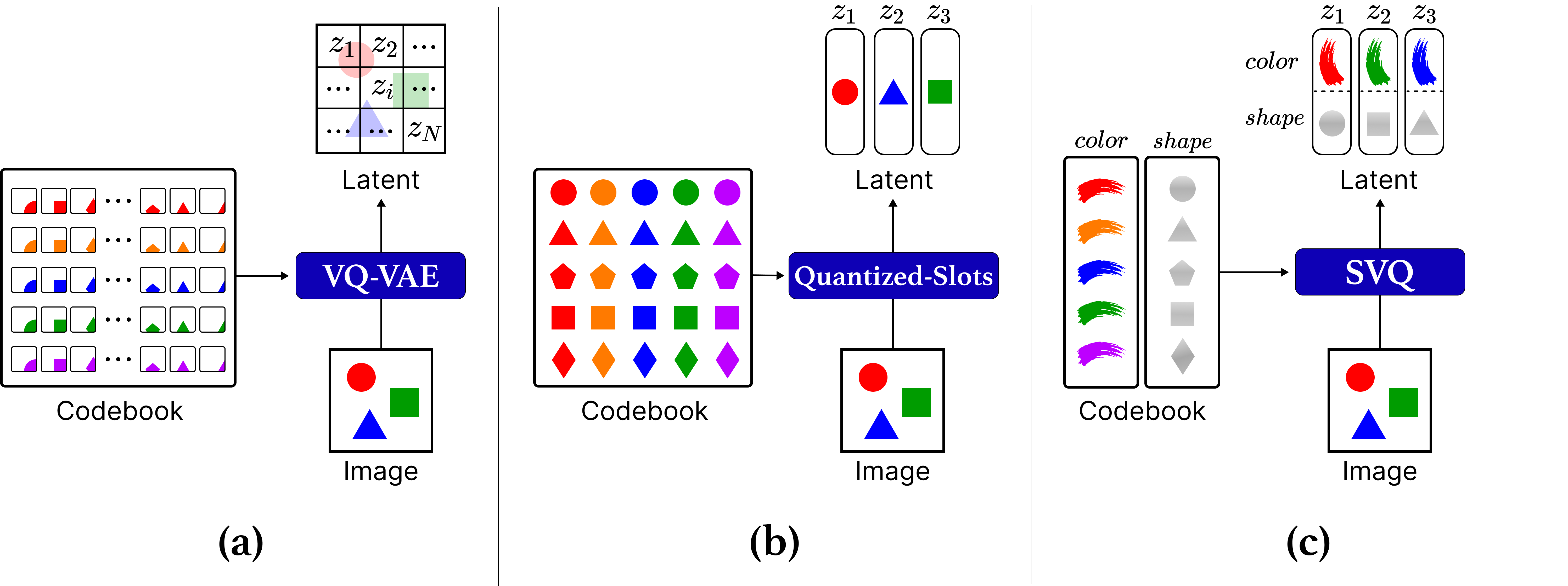

Abstract: The Language of Thought Hypothesis (LoTH) suggests that human cognition operates on a structured, language-like system of mental representations. While LLMs naturally benefit from the compositional structure that language data expresses inherently and explicitly, learning such representations from general non-linguistic observations, such as images, remains a challenge. In this work, we introduce the Neural Language of Thought Model (NLoTM), a novel approach for unsupervised learning of LoTH-inspired representation and generation. NLoTM comprises two key components: (1) the Semantic Vector-Quantized Variational Autoencoder, which learns hierarchical, composable discrete representations aligned with objects and their properties, and (2) the Autoregressive LoT Prior, an autoregressive transformer that learns to generate semantic concept tokens compositionally, capturing the underlying data distribution. We evaluate NLoTM on several 2D and 3D image datasets, demonstrating superior performance in downstream tasks, out-of-distribution generalization, and image generation quality compared to patch-based VQ-VAE and continuous object-centric representations. Our work presents a significant step towards neural networks that exhibit more human-like understanding by developing LoT-like representations, and it offers insights into the intersection of cognitive science and machine learning.
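To make the idea of discrete representations "aligned with objects and their properties" more concrete, here is a minimal sketch of one way such a quantization step could look. It is not the authors' implementation. It assumes that each object slot is split into a fixed number of blocks (one per property), that each block position has its own codebook, and that quantization is a nearest-neighbor lookup under squared Euclidean distance. The names `nearest_code`, `quantize_scene`, and `flatten_tokens`, and all numbers, are hypothetical.

```python
from typing import List, Sequence

Vector = Sequence[float]


def nearest_code(vec: Vector, codebook: Sequence[Vector]) -> int:
    """Return the index of the codebook entry closest to vec (squared L2)."""
    def sq_dist(a: Vector, b: Vector) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(range(len(codebook)), key=lambda k: sq_dist(vec, codebook[k]))


def quantize_scene(
    slots: Sequence[Sequence[Vector]],
    codebooks: Sequence[Sequence[Vector]],
) -> List[List[int]]:
    """Quantize every block of every slot with that block's own codebook.

    slots[n][m]  : continuous vector for block m (a property) of slot n (an object)
    codebooks[m] : code vectors for block position m (assumed: one codebook per block)
    Returns tokens[n][m], the discrete code index for each (object, property) pair.
    """
    return [
        [nearest_code(block, codebooks[m]) for m, block in enumerate(slot)]
        for slot in slots
    ]


def flatten_tokens(tokens: Sequence[Sequence[int]]) -> List[int]:
    """Flatten per-object tokens into one sequence, object by object.

    A sequence like this is what an autoregressive prior could be trained on.
    """
    return [t for slot in tokens for t in slot]


# Hypothetical toy scene: 2 object slots, 2 property blocks each.
codebooks = [
    [[0.0, 0.0], [1.0, 1.0]],              # codebook for block 0
    [[0.0, 1.0], [1.0, 0.0], [2.0, 2.0]],  # codebook for block 1
]
slots = [
    [[0.1, -0.2], [0.9, 0.2]],  # object 0
    [[0.8, 1.1], [1.9, 2.2]],   # object 1
]

tokens = quantize_scene(slots, codebooks)
sequence = flatten_tokens(tokens)
print(tokens)    # [[0, 1], [1, 2]]
print(sequence)  # [0, 1, 1, 2]
```

Under these assumptions each token indexes a property-level concept of a single object, rather than an image patch as in a patch-based VQ-VAE. An autoregressive model over the flattened sequence could then sample new scenes one concept at a time.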

