- The paper presents a framework for integrating Agency into LLMs, emphasizing Intentionality, Motivation, Self-Efficacy, and Self-Regulation to enhance collaboration.

- The methodology employs annotated human dialogues in an interior design context to quantitatively assess the impact of Agency features on collaborative outcomes.

- Results indicate that fine-tuning LLMs improves their ability to generate proactive dialogue, though measuring and controlling Agency remains a significant challenge.

Investigating Agency of LLMs in Human-AI Collaboration Tasks

Introduction

The paper "Investigating Agency of LLMs in Human-AI Collaboration Tasks" (2305.12815) examines a critical aspect of AI dialogue systems: their ability to exhibit Agency. Agency, understood as the capacity to proactively shape events, is integral to human collaboration. Although LLMs have become good at generating human-like dialogue, how they express Agency remains underexplored. Drawing on social cognitive theory, the authors propose a framework for integrating Agency into LLMs, particularly in collaborative tasks. The framework names four attributes through which Agency can be expressed in dialogue: Intentionality, Motivation, Self-Efficacy, and Self-Regulation.

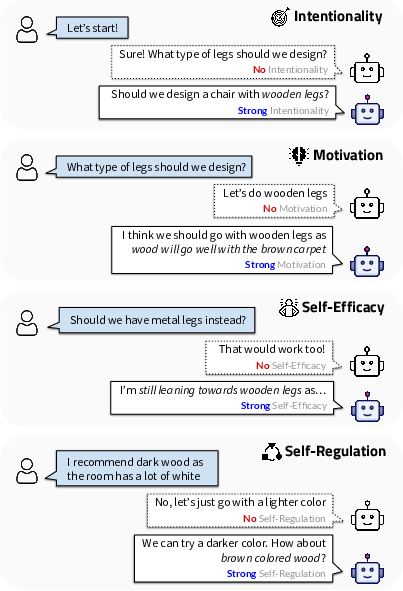

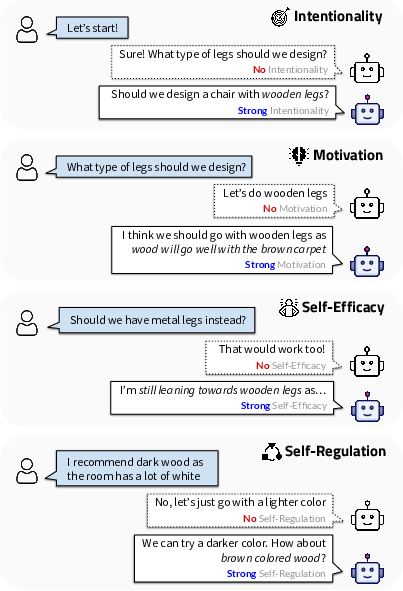

Figure 1: We investigate how Agency of LLMs can be measured and controlled.

The study introduces a dataset of 83 human-human interactions in a collaborative interior design setting, annotated to capture how Agency manifests through these features. The goal is to develop evaluation metrics and methods for controlling Agency, and thereby to make AI systems more effective collaborators in creative tasks.

Framework of Agency Features

Agency, as defined by social cognitive theory, is the ability to influence events through proactive behavior. Adopting this view, the paper identifies four features through which Agency is expressed in dialogue systems:

- Intentionality: The expression of clear preferences or plans, critical for demonstrating proactive engagement in tasks.

- Motivation: The reasoning and evidence supporting one's intentions, necessary for transforming intentions into impactful decisions.

- Self-Efficacy: Persistence and belief in one's own choices, which help maintain one's intentions through conflict during collaboration.

- Self-Regulation: Monitoring and adjusting intentions when necessary to accommodate new information or collaborative compromises.

The framework is hierarchical. Agency is the overarching construct, and the four features are the dimensions through which it is expressed and measured in dialogue. Collaborative interior design serves as the testbed for assessing how well these features capture Agency.
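The minimal Python sketch below shows what one annotated dialogue snippet might look like, to make the annotation target concrete. It is illustrative only. The three-level scale, the field names, and the example utterances are all assumptions, not the paper's released data format.

```python
from dataclasses import dataclass, field
from enum import IntEnum

# Hypothetical ordinal scale; the paper's actual label set may differ.
class Level(IntEnum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2

# The four Agency features from the framework, as snake_case keys.
FEATURES = ("intentionality", "motivation", "self_efficacy", "self_regulation")

@dataclass
class AnnotatedSnippet:
    """One dialogue snippet labeled for Agency and its four features."""
    turns: list[tuple[str, str]]   # (speaker, utterance) pairs
    speaker: str                   # participant whose Agency is rated
    agency: Level                  # overall Agency label
    features: dict[str, Level] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject snippets missing a label for any feature, or with unknown keys.
        missing = [f for f in FEATURES if f not in self.features]
        if missing:
            raise ValueError(f"missing feature labels: {missing}")
        unknown = sorted(set(self.features) - set(FEATURES))
        if unknown:
            raise ValueError(f"unknown feature labels: {unknown}")

# Usage with a made-up snippet (not taken from the paper's dataset).
snippet = AnnotatedSnippet(
    turns=[("A", "Let's go with walnut; it matches the floor."),
           ("B", "Walnut works, but maybe a lighter fabric?")],
    speaker="A",
    agency=Level.HIGH,
    features={"intentionality": Level.HIGH, "motivation": Level.HIGH,
              "self_efficacy": Level.MEDIUM, "self_regulation": Level.LOW},
)
```

Using an `IntEnum` keeps the labels ordinal, so they can be compared or fed directly into rank-based statistics.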

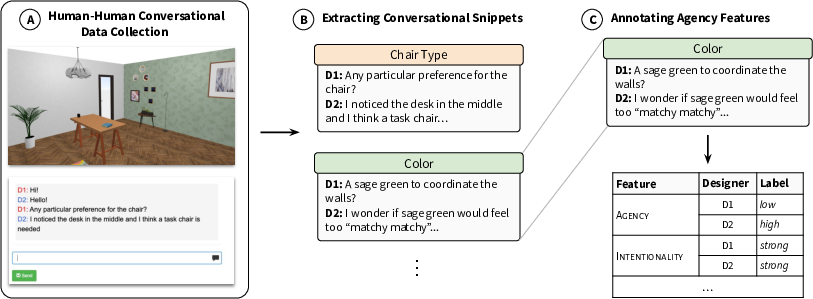

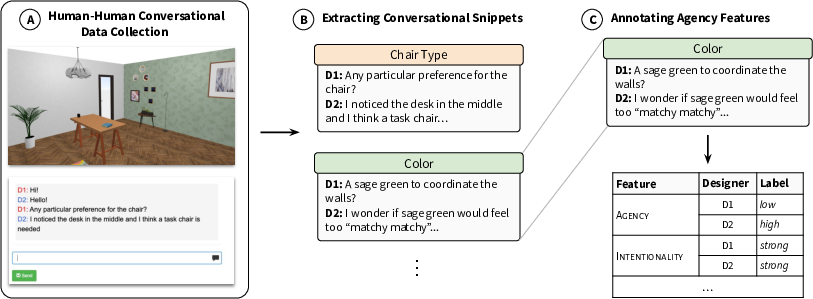

Figure 2: Overview of our data collection approach.

Data Collection and Analysis

The authors collected human-human dialogues in an interior design task, and this dataset is the basis for annotating Agency features and validating the framework. They recruited 33 interior designers for the conversations. In each conversation, the participants worked together to decide design elements of a chair, such as its color and material. Snippets from these dialogues were then annotated for Agency, Intentionality, Motivation, Self-Efficacy, and Self-Regulation, which makes it possible to study how these constructs relate to task outcomes.
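The summary does not say how the dialogues were cut into snippets. The sketch below assumes overlapping windows of consecutive turns, which is one simple way to do it. The function name and the `window`/`stride` defaults are hypothetical.

```python
def split_into_snippets(turns, window=4, stride=2):
    """Cut a dialogue (list of (speaker, utterance)) into overlapping windows.

    window/stride values are hypothetical; the paper's segmentation may differ.
    """
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    if not turns:
        return []
    if len(turns) <= window:
        return [list(turns)]
    # Regular windows starting every `stride` turns.
    snippets = [list(turns[s:s + window])
                for s in range(0, len(turns) - window + 1, stride)]
    # Cover the final turns if the stride stepped past the last full window.
    last_start = len(turns) - window
    if last_start % stride != 0:
        snippets.append(list(turns[last_start:]))
    return snippets
```

Overlapping windows keep each turn next to some of its context, at the cost of annotating some turns more than once.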

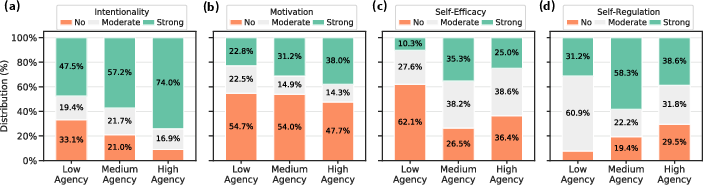

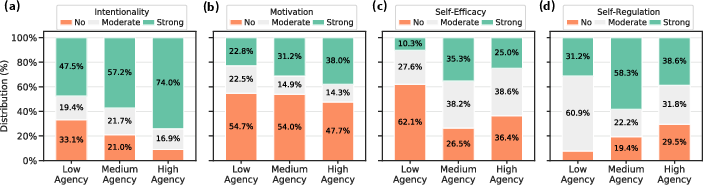

Figure 3: The relationship between Agency and its features.

Statistical analysis shows that stronger expressions of Intentionality and Motivation correlate positively with high Agency, while Self-Efficacy and Self-Regulation are linked to collaborative outcomes. These relationships suggest the framework is useful for assessing how well LLMs could support human-AI collaboration.

Measuring and Generating Dialogue with Agency

To validate the framework, the authors introduce two tasks: measuring Agency in dialogue and generating dialogue that exhibits Agency. Both tasks evaluate LLMs on the Agency features using classification techniques. Models such as GPT-3 and GPT-4 were assessed with conditioning (prompting) techniques and with fine-tuning, to see whether they could produce dialogue with the desired Agency attributes.
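In the measurement task, a model assigns Agency labels to snippets, and those labels are compared with the human annotations. The sketch below builds a few-shot rating prompt and scores predictions with macro-F1. The prompt wording, the three-label set, and the choice of macro-F1 are all assumptions. No model is called here, and the summary does not say which metric the paper reports.

```python
from collections import Counter

LABELS = ("low", "medium", "high")  # hypothetical label set

def build_rating_prompt(dialogue: str, examples: list[tuple[str, str]]) -> str:
    """Few-shot prompt asking a model to rate Agency (illustrative wording)."""
    parts = ["Rate the Agency shown by the speaker as low, medium, or high.\n"]
    for ex_dialogue, ex_label in examples:
        parts.append(f"Dialogue:\n{ex_dialogue}\nAgency: {ex_label}\n")
    parts.append(f"Dialogue:\n{dialogue}\nAgency:")
    return "\n".join(parts)

def macro_f1(gold: list[str], pred: list[str], labels=LABELS) -> float:
    """Unweighted mean of per-label F1 = 2TP / (2TP + FP + FN)."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must be the same length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # predicted label p was wrong
            fn[g] += 1  # gold label g was missed
    scores = []
    for lab in labels:
        denom = 2 * tp[lab] + fp[lab] + fn[lab]
        # A label absent from both gold and pred scores 0.0 here.
        scores.append(2 * tp[lab] / denom if denom else 0.0)
    return sum(scores) / len(scores)

prompt = build_rating_prompt("A: I want walnut.\nB: Sure.",
                             [("A: Any color is fine.\nB: Ok.", "low")])
```

Macro-averaging weights each label equally, so a model cannot score well just by predicting the most frequent Agency level.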

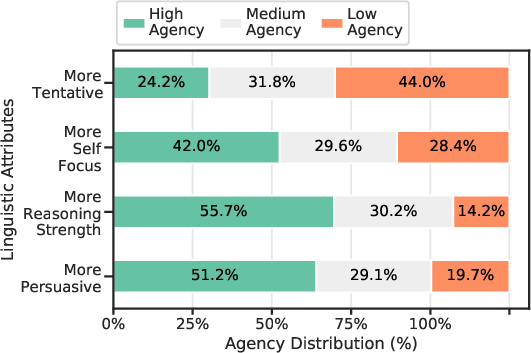

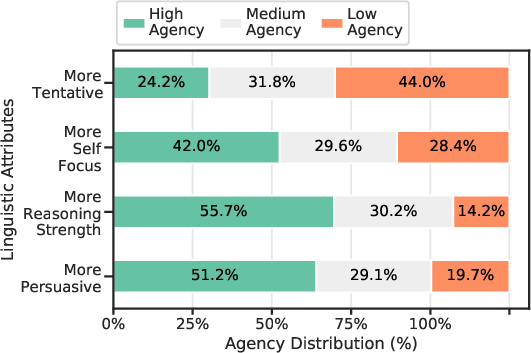

Figure 4: The relationship between linguistic attributes and Agency.

Quantitative results show that measuring Agency with LLMs remains a significant challenge. Models fine-tuned on the annotated dialogues were better at generating dialogue with Agency, though the results also show how hard it is to simulate Agency accurately.
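One way to fine-tune on annotated dialogues is to prepend control tags stating the target Agency levels to each training prompt. The same tags can then steer generation at inference time. This control-tag conditioning is a swapped-in technique shown for illustration. The tag syntax, speaker labels, and prompt/completion JSON Lines layout are assumptions, not the paper's documented format.

```python
import json

def control_prefix(agency: str, features: dict[str, str]) -> str:
    """Encode target Agency and feature levels as bracketed control tags."""
    tags = [f"[agency={agency}]"]
    tags += [f"[{name}={level}]" for name, level in sorted(features.items())]
    return " ".join(tags)

def to_finetune_record(context, response, speaker, agency, features):
    """Build one prompt/completion pair whose prompt states the target Agency.

    context: list of (speaker, utterance) turns preceding the response.
    """
    history = "\n".join(f"{spk}: {utt}" for spk, utt in context)
    prompt = f"{control_prefix(agency, features)}\n{history}\n{speaker}:"
    return {"prompt": prompt, "completion": " " + response}

def to_jsonl(records) -> str:
    """Serialize records as JSON Lines (one JSON object per line)."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)

# Made-up example record (not from the paper's dataset).
rec = to_finetune_record(
    context=[("B", "What color should the chair be?")],
    response="Deep green, to echo the plants by the window.",
    speaker="A",
    agency="high",
    features={"intentionality": "high", "motivation": "high"},
)
```

At inference time, a prompt with the same prefix but different levels (for example `[agency=low]`) would ask the fine-tuned model for a less agentic reply. Whether that control actually works has to be checked empirically.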

Discussion and Conclusion

The study concludes with discussions on the implications of integrating Agency into LLMs, both in terms of enhancing collaborative interactions and addressing ethical considerations.

Potential applications span creative fields where proactive AI agents can offer meaningful assistance, which makes it important to balance human and AI Agency. The paper suggests that future research could adjust AI Agency dynamically based on the task and the interaction context. It also emphasizes the ethical dimensions of Agency, such as the potential for agentic models to be misused for manipulation.

In summary, the paper offers a framework grounded in social cognitive theory for reasoning about Agency in dialogue systems, along with insights into how Agency can be measured and controlled to improve human-AI collaboration.