- The paper argues that the traditional agent-centric framework imposes anthropocentric biases that obscure the true nature of intelligence.

- It differentiates between agentic, agential, and non-agentic systems, highlighting limitations in current LLM-based approaches.

- The findings suggest a shift towards systemic and material intelligence, integrating complex systems theory with practical AI innovations.

Is the 'Agent' Paradigm a Limiting Framework for Next-Generation Intelligent Systems?

Introduction

The concept of an 'agent' has become central to AI research, most visibly in how LLM-based systems are conceptualized and implemented. Agents are traditionally defined as autonomous entities that interact with their environment, often through goal-seeking behavior. The paper argues, however, that the agent-based framework may impose limitations and biases, above all anthropocentric assumptions, that obscure the fundamental nature of intelligence, especially in AI systems such as [LLM-based agents](https://www.emergentmind.com/topics/llm-based-agents).

*Figure 1: The Conceptual Landscape of [Agentic AI](https://www.emergentmind.com/topics/agentic-ai). A force-directed layout of the 98-concept [knowledge graph](https://www.emergentmind.com/topics/knowledge-graph-kg). Node size is scaled by PageRank (influence), and color indicates category. The central cluster is dominated by 'Architecture/Model' and 'Entity/System', with a high density of 'Critique/Challenge' and 'Application/Domain' concepts.*
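The caption scales nodes by PageRank. The sketch below shows how such influence scores can be computed by power iteration. It assumes a plain directed graph and the conventional damping factor of 0.85, because the summary does not state the paper's settings. The concept names and edges are hypothetical and are not taken from the 98-concept graph.

```python
# Minimal PageRank by power iteration over a small directed concept graph.
# The edges and the damping factor are illustrative assumptions, not the paper's data.

def pagerank(edges, damping=0.85, tol=1e-10, max_iter=1000):
    """Return a dict mapping each node to its PageRank score (scores sum to 1)."""
    nodes = sorted({u for u, _ in edges} | {v for _, v in edges})
    n = len(nodes)
    out_links = {u: [] for u in nodes}
    for u, v in edges:
        out_links[u].append(v)

    rank = {u: 1.0 / n for u in nodes}
    for _ in range(max_iter):
        # Rank held by dangling nodes (no out-links) is spread uniformly over all nodes.
        dangling = sum(rank[u] for u in nodes if not out_links[u])
        new_rank = {u: (1.0 - damping) / n + damping * dangling / n for u in nodes}
        # Every other node splits its damped rank evenly among its out-links.
        for u in nodes:
            if out_links[u]:
                share = damping * rank[u] / len(out_links[u])
                for v in out_links[u]:
                    new_rank[v] += share
        converged = sum(abs(new_rank[u] - rank[u]) for u in nodes) < tol
        rank = new_rank
        if converged:
            break
    return rank

# Hypothetical concept links ("A" -> "B" read as "A feeds into B").
concept_edges = [
    ("Active Inference", "Agentic Systems"),
    ("LLM-based Agents", "Agentic Systems"),
    ("Reinforcement Learning", "LLM-based Agents"),
    ("Agentic Systems", "Anthropocentric Bias"),
    ("Neuromorphic Computing", "Agentic Systems"),
]
scores = pagerank(concept_edges)
for name, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {score:.3f}")
```

In this toy graph the sink node 'Anthropocentric Bias' ranks highest, because it receives all of the rank held by 'Agentic Systems'.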

Re-evaluation of Agent-Centric Paradigms

The paper challenges the agent-centric model by distinguishing three types of systems: agentic, agential, and non-agentic. Agentic systems are AI systems that display the appearance of autonomy, such as LLM-based agents. Agential systems, often biological, are fully autonomous and self-maintaining. Non-agentic systems, by contrast, convey no impression of agency and function merely as tools. This distinction calls the agentic framework into question. The framework may be heuristically useful, but it can mislead by obscuring the underlying mechanics, particularly in LLMs, which operate by pattern completion rather than genuine agency.
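The three-way distinction can be read as two yes/no questions: does the system appear autonomous, and is it genuinely autonomous and self-maintaining? The sketch below encodes that reading. The two-question framing, the function name `classify`, and the example judgments are illustrative assumptions rather than the paper's formal criteria.

```python
from enum import Enum

class SystemKind(Enum):
    AGENTIC = "agentic"          # appearance of autonomy, e.g. LLM-based agents
    AGENTIAL = "agential"        # fully autonomous and self-maintaining, often biological
    NON_AGENTIC = "non-agentic"  # no impression of agency; functions as a tool

def classify(appears_autonomous: bool, self_maintaining: bool) -> SystemKind:
    """Hypothetical two-question reading of the agentic/agential/non-agentic split.

    `self_maintaining` stands for "genuinely autonomous and self-maintaining".
    """
    if self_maintaining:
        return SystemKind.AGENTIAL
    if appears_autonomous:
        return SystemKind.AGENTIC
    return SystemKind.NON_AGENTIC

# Illustrative judgments only; the boolean inputs are assumptions.
examples = {
    "LLM-based agent": classify(appears_autonomous=True, self_maintaining=False),
    "living cell": classify(appears_autonomous=True, self_maintaining=True),
    "calculator": classify(appears_autonomous=False, self_maintaining=False),
}
for name, kind in examples.items():
    print(f"{name}: {kind.value}")
```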

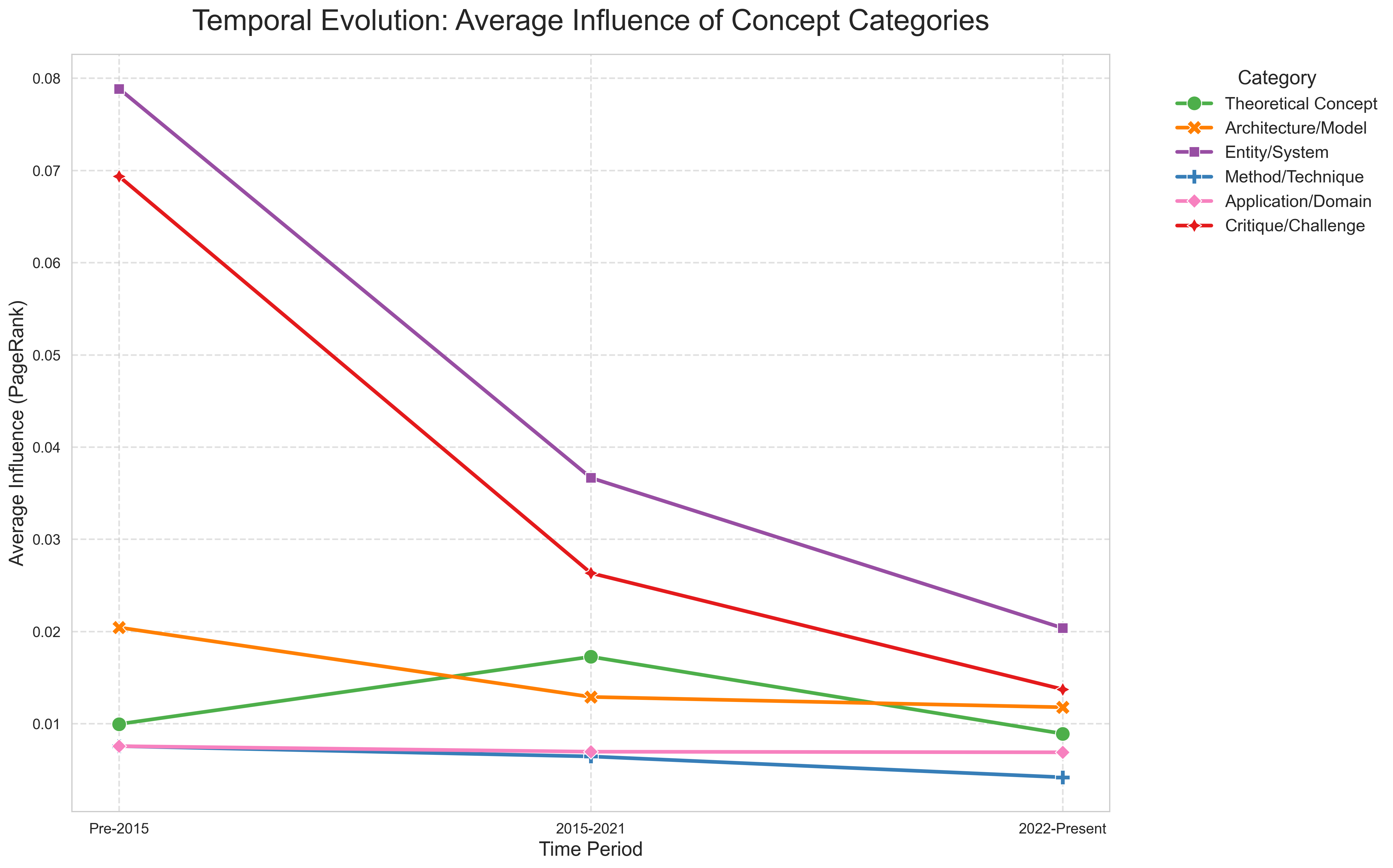

Figure 2: Temporal Evolution: Average Influence of Concept Categories. The plot indicates a paradigm shift from abstract architectures to systemic and critical implications of agent technologies.
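The caption does not say how 'average influence' is computed. The sketch below shows one simple approach. It assumes that each concept carries an influence score (for example, its PageRank) and a time period, and it averages those scores per category and period. The records are placeholder values, not the paper's data.

```python
from collections import defaultdict

# Placeholder records: (category, period index, influence score). Not real data.
records = [
    ("Architecture/Model", 1, 0.040),
    ("Architecture/Model", 1, 0.020),
    ("Critique/Challenge", 1, 0.010),
    ("Architecture/Model", 2, 0.015),
    ("Critique/Challenge", 2, 0.030),
    ("Critique/Challenge", 2, 0.050),
]

def average_influence(records):
    """Return the mean influence for every (period, category) pair."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for category, period, influence in records:
        totals[(period, category)] += influence
        counts[(period, category)] += 1
    return {key: totals[key] / counts[key] for key in totals}

averages = average_influence(records)
for (period, category), value in sorted(averages.items()):
    print(period, category, round(value, 4))
```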

Active Inference and LLMs: A Case Study

Active Inference (AIF), rooted in the Free Energy Principle, is highlighted as an agent-centric framework in which agents minimize variational free energy. Although elegant, the framework is criticized for anthropomorphic tendencies, such as attributing cognitive processes to what are merely statistical mechanisms. Similar criticisms apply to LLMs, which are often treated as agents because their outputs are complex. Critically, LLMs lack intrinsic autonomy and goal-directedness. They function as sophisticated algorithmic mimicry rather than as genuinely intelligent agents.
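The summary names the quantity that AIF agents minimize but does not write it out. For a discrete hidden state s and a fixed observation o, the standard variational free energy is F[q] = sum_s q(s) [ln q(s) - ln p(o, s)]. This equals KL(q(s) || p(s | o)) - ln p(o), so F is an upper bound on the surprise -ln p(o) and reaches it exactly when q is the true posterior. The sketch below checks this numerically on a two-state toy model. Its numbers are arbitrary assumptions, and it is not the paper's formulation.

```python
import math

# Toy generative model with two hidden states; all numbers are illustrative assumptions.
prior = [0.7, 0.3]        # p(s)
likelihood = [0.2, 0.9]   # p(o | s) for one fixed observation o

def free_energy(q):
    """Variational free energy F[q] = sum_s q(s) * (ln q(s) - ln p(o, s))."""
    return sum(
        qs * (math.log(qs) - math.log(ps * ls))
        for qs, ps, ls in zip(q, prior, likelihood)
        if qs > 0
    )

evidence = sum(p * l for p, l in zip(prior, likelihood))           # p(o)
posterior = [p * l / evidence for p, l in zip(prior, likelihood)]  # p(s | o)
surprise = -math.log(evidence)                                     # -ln p(o)

# F upper-bounds surprise and equals it when q is the exact posterior.
for q in ([0.5, 0.5], [0.9, 0.1], posterior):
    print([round(x, 3) for x in q], round(free_energy(q), 4), ">=", round(surprise, 4))
```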

The Transition to Systemic and Material Intelligence

The emphasis is shifting toward agential systems and system-level properties, which foreground emergent behavior rather than discrete agent actions. This perspective draws on complex systems theory, in which intelligence and agency emerge from the interaction and self-organization of components rather than being confined to purely agentic frameworks.
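The Kuramoto model of coupled phase oscillators is a standard minimal illustration of the complex-systems idea that global order can emerge from interactions among components. It illustrates emergence only. It is not a model of intelligence and is not drawn from the paper. In the sketch below, the population size, frequency spread, coupling strengths, and step size are arbitrary assumptions. Without coupling, the phases stay scattered. With sufficient coupling, the population synchronizes even though no single oscillator directs the others.

```python
import cmath
import math
import random

def kuramoto_order(coupling, n=100, dt=0.01, steps=5000, seed=0):
    """Simulate the all-to-all Kuramoto model and return the final order parameter r.

    r = |mean(exp(i * theta))| is near 0 for scattered phases and near 1 for synchrony.
    """
    rng = random.Random(seed)
    # Natural frequencies spread evenly over [-0.5, 0.5]; initial phases are random.
    omega = [-0.5 + i / (n - 1) for i in range(n)]
    theta = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n)]
    for _ in range(steps):
        # The mean field r * exp(i * psi) summarizes the whole population.
        z = sum(cmath.exp(1j * t) for t in theta) / n
        r, psi = abs(z), cmath.phase(z)
        # Explicit Euler step: each oscillator is pulled toward the mean phase psi.
        theta = [t + dt * (w + coupling * r * math.sin(psi - t))
                 for t, w in zip(theta, omega)]
    return abs(sum(cmath.exp(1j * t) for t in theta) / n)

r_uncoupled = kuramoto_order(coupling=0.0)
r_coupled = kuramoto_order(coupling=2.0)
print(f"uncoupled: r = {r_uncoupled:.3f}")
print(f"coupled:   r = {r_coupled:.3f}")
```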

For instance, unconventional computing paradigms and neuromorphic systems show how intelligence might arise from substrate-dependent dynamics, challenging the conventional agent-centric view. This perspective encourages exploring how intelligence is realized materially, moving away from abstract algorithmic constructs toward a more robust, system-level understanding.
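Neuromorphic systems commonly compute with spiking neuron models, whose behavior follows directly from continuous-time dynamics. The sketch below uses the textbook leaky integrate-and-fire (LIF) model. This is an assumption: the summary does not name a specific substrate or neuron model, and all parameter values are illustrative. The neuron fires only when its input drives the membrane potential past threshold, so its response is a property of the dynamics rather than of an explicit rule.

```python
def lif_spike_times(current, t_max=100.0, dt=0.1, tau=10.0, resistance=1.0,
                    v_rest=0.0, v_threshold=1.0, v_reset=0.0):
    """Leaky integrate-and-fire neuron driven by a constant input current.

    Membrane dynamics: tau * dV/dt = -(V - v_rest) + resistance * current.
    When V reaches v_threshold the neuron records a spike and V is reset.
    Units are arbitrary (time in ms); all defaults are illustrative assumptions.
    """
    v = v_rest
    spikes = []
    steps = int(round(t_max / dt))
    for step in range(steps):
        v += dt * (-(v - v_rest) + resistance * current) / tau  # explicit Euler step
        if v >= v_threshold:
            spikes.append(round((step + 1) * dt, 6))  # spike time in ms
            v = v_reset
    return spikes

print("input 0.9:", len(lif_spike_times(0.9)), "spikes")  # settles below threshold
print("input 1.5:", len(lif_spike_times(1.5)), "spikes")
print("input 3.0:", len(lif_spike_times(3.0)), "spikes")
```

A stronger input raises the firing rate, so the input is encoded in spike timing by the membrane dynamics themselves.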

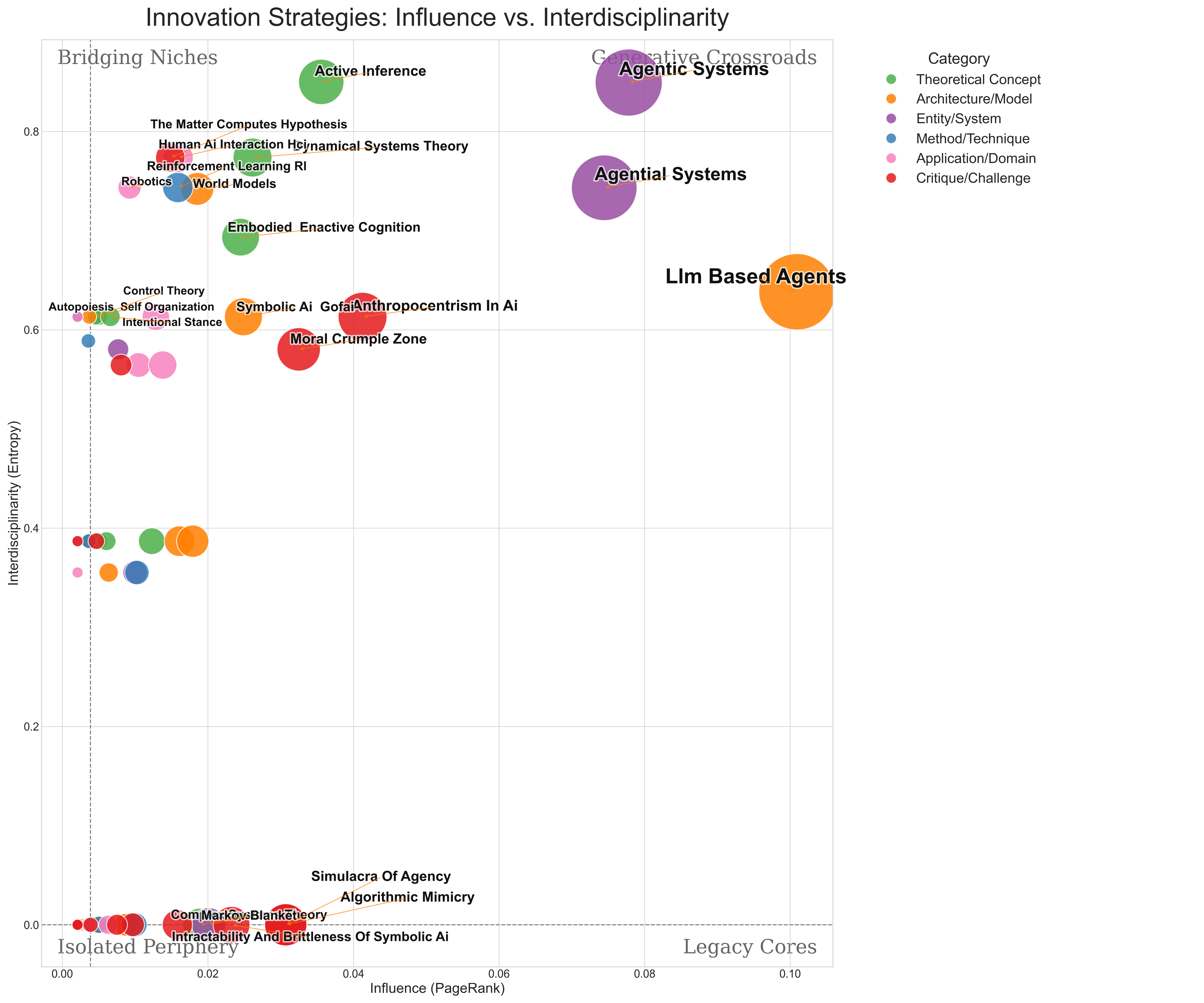

Figure 3: Innovation Strategies: Influence vs. Interdisciplinarity. Concepts such as 'LLM-based Agents' and 'Agentic Systems' drive the field's discourse through interdisciplinary intersections.
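The summary does not define how interdisciplinarity is measured. One plausible measure, offered here purely as an assumption, is the Shannon entropy of the category mix among a concept's neighbors in the knowledge graph. A concept linked to many different categories scores high, and one linked to a single category scores zero. The concepts, categories, and links below are hypothetical.

```python
import math
from collections import Counter, defaultdict

# Hypothetical concepts and their categories (not the paper's graph).
category = {
    "LLM-based Agents": "Architecture/Model",
    "Agentic Systems": "Entity/System",
    "Anthropocentric Bias": "Critique/Challenge",
    "Reinforcement Learning": "Method/Technique",
    "Robotics": "Application/Domain",
    "Transformer": "Architecture/Model",
}
# Hypothetical undirected links between concepts.
edges = [
    ("LLM-based Agents", "Agentic Systems"),
    ("LLM-based Agents", "Anthropocentric Bias"),
    ("LLM-based Agents", "Reinforcement Learning"),
    ("LLM-based Agents", "Robotics"),
    ("LLM-based Agents", "Transformer"),
]

neighbors = defaultdict(set)
for u, v in edges:
    neighbors[u].add(v)
    neighbors[v].add(u)

def interdisciplinarity(node):
    """Shannon entropy (in bits) of the categories of a concept's neighbors."""
    counts = Counter(category[n] for n in neighbors[node])
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())

for node in category:
    print(f"{node}: {interdisciplinarity(node):.3f} bits")
```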

Implications for AI Research and Future Trajectories

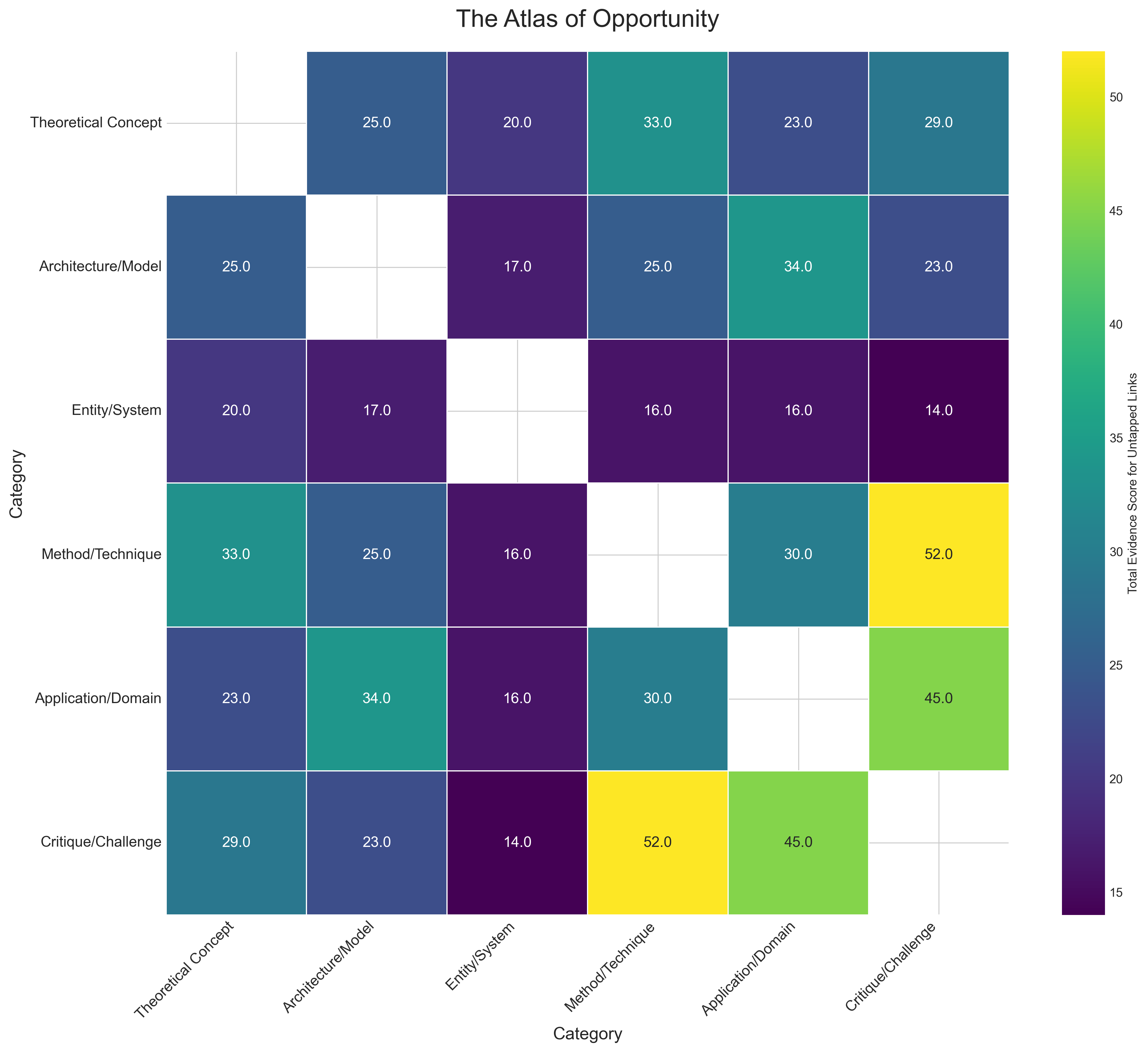

The findings indicate a need for a critical re-examination of agent-based paradigms, reframing AI research to accommodate system-level theories that incorporate continuous interaction and world modeling. The research presents an Atlas of Opportunity, identifying research gaps where innovations could connect methodologies with practical applications. This approach emphasizes bridging the gap between critiques of agency and methods such as reinforcement learning to design robust, reliable systems.

Figure 4: The Atlas of Opportunity: Untapped Innovation Frontiers. Highest opportunity scores appear for links between Method/Technique and Critique/Challenge, emphasizing a significant innovation frontier.
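The summary does not define the opportunity score behind the Atlas. The sketch below shows one simple construction of such a score, offered purely as an assumption: a pair of categories scores high when its concepts are influential on average but few of the possible cross-category links between them exist. The function name, concepts, influence values, and links are all hypothetical toy data chosen only to exercise the function.

```python
from itertools import combinations

# Hypothetical concepts: name -> (category, influence score). Toy data only.
concepts = {
    "Reinforcement Learning": ("Method/Technique", 0.30),
    "Retrieval Augmentation": ("Method/Technique", 0.20),
    "Anthropocentric Bias": ("Critique/Challenge", 0.25),
    "Reliability Concerns": ("Critique/Challenge", 0.15),
    "Robotics": ("Application/Domain", 0.10),
}
# Hypothetical undirected links between concepts.
links = {
    frozenset(("Reinforcement Learning", "Robotics")),
    frozenset(("Retrieval Augmentation", "Robotics")),
    frozenset(("Anthropocentric Bias", "Robotics")),
    frozenset(("Reinforcement Learning", "Anthropocentric Bias")),
}

def opportunity_scores(concepts, links):
    """Score each category pair: mean concept influence times the share of missing links."""
    by_cat = {}
    for name, (cat, _) in concepts.items():
        by_cat.setdefault(cat, []).append(name)
    scores = {}
    for a, b in combinations(sorted(by_cat), 2):
        pairs = [(x, y) for x in by_cat[a] for y in by_cat[b]]
        observed = sum(frozenset(p) in links for p in pairs)
        gap = 1.0 - observed / len(pairs)  # fraction of possible links that are absent
        members = by_cat[a] + by_cat[b]
        mean_influence = sum(concepts[m][1] for m in members) / len(members)
        scores[(a, b)] = mean_influence * gap
    return scores

scores = opportunity_scores(concepts, links)
for pair, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(pair, round(score, 4))
```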

Conclusion

Agent-centric frameworks, although historically pioneering, show their limitations when set against emerging techniques and theoretical critiques. The shift toward system-level intelligence and the integration of physical material properties into AI systems promise a more nuanced understanding of intelligence. Moving beyond anthropomorphic constraints makes room for AI that is not bound by human-like agency. Such AI would instead harness the intrinsic dynamics of complex systems, potentially achieving a more universal form of adaptive intelligence.