Clembench: Using Game Play to Evaluate Chat-Optimized Language Models as Conversational Agents

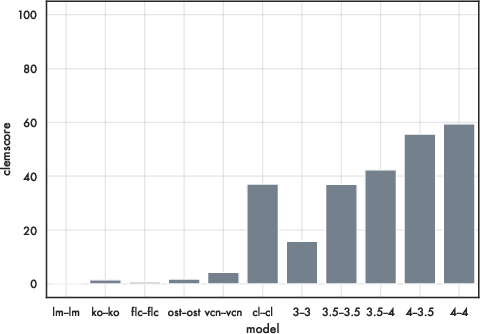

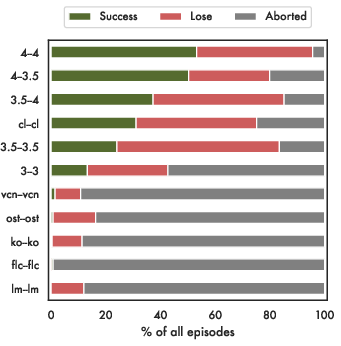

Abstract: Recent work has proposed a methodology for the systematic evaluation of "Situated Language Understanding Agents" (agents that operate in rich linguistic and non-linguistic contexts) through testing them in carefully constructed interactive settings. Other recent work has argued that LLMs, if suitably set up, can be understood as (simulators of) such agents. A connection suggests itself, which this paper explores: Can LLMs be evaluated meaningfully by exposing them to constrained game-like settings that are built to challenge specific capabilities? As a proof of concept, this paper investigates five interaction settings, showing that current chat-optimised LLMs are, to an extent, capable of following game-play instructions. Both this capability and the quality of the game play, measured by how well the objectives of the different games are met, follow the development cycle, with newer models performing better. The metrics even for the comparatively simple example games are far from being saturated, suggesting that the proposed instrument will continue to have diagnostic value. Our general framework for implementing and evaluating games with LLMs is available at https://github.com/clembench.
