Decoding the Underlying Meaning of Multimodal Hateful Memes

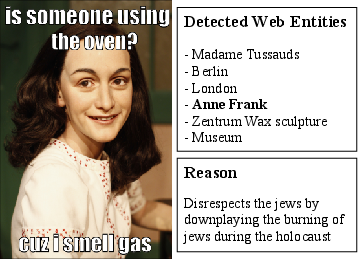

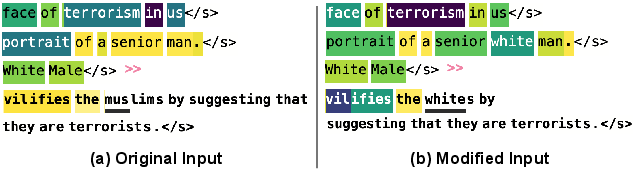

Abstract: Recent studies have proposed models that yielded promising performance for the hateful meme classification task. Nevertheless, these proposed models do not generate interpretable explanations that uncover the underlying meaning and support the classification output. A major reason for the lack of explainable hateful meme methods is the absence of a hateful meme dataset that contains ground truth explanations for benchmarking or training. Intuitively, having such explanations can educate and assist content moderators in interpreting and removing flagged hateful memes. This paper address this research gap by introducing Hateful meme with Reasons Dataset (HatReD), which is a new multimodal hateful meme dataset annotated with the underlying hateful contextual reasons. We also define a new conditional generation task that aims to automatically generate underlying reasons to explain hateful memes and establish the baseline performance of state-of-the-art pre-trained LLMs on this task. We further demonstrate the usefulness of HatReD by analyzing the challenges of the new conditional generation task in explaining memes in seen and unseen domains. The dataset and benchmark models are made available here: https://github.com/Social-AI-Studio/HatRed

- Bottom-up and top-down attention for image captioning and visual question answering. In CVPR, 2018.

- Prompting for multimodal hateful meme classification. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pages 321–332, Abu Dhabi, United Arab Emirates, December 2022. Association for Computational Linguistics.

- Unifying vision-and-language tasks via text generation. In ICML, 2021.

- Latent hatred: A benchmark for understanding implicit hate speech. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 345–363, Online and Punta Cana, Dominican Republic, November 2021. Association for Computational Linguistics.

- Semeval-2022 task 5: Multimedia automatic misogyny identification. In Proceedings of the 16th International Workshop on Semantic Evaluation (SemEval-2022). Association for Computational Linguistics, 2022.

- Benchmark dataset of memes with text transcriptions for automatic detection of multi-modal misogynistic content. arXiv preprint arXiv:2106.08409, 2021.

- On explaining multimodal hateful meme detection models. In Proceedings of the ACM Web Conference 2022, WWW ’22, page 3651–3655, New York, NY, USA, 2022. Association for Computing Machinery.

- Fairface: Face attribute dataset for balanced race, gender, and age. arXiv preprint arXiv:1908.04913, 2019.

- The hateful memes challenge: Detecting hate speech in multimodal memes. Advances in Neural Information Processing Systems, 33:2611–2624, 2020.

- The hateful memes challenge: Competition report. In Hugo Jair Escalante and Katja Hofmann, editors, Proceedings of the NeurIPS 2020 Competition and Demonstration Track, volume 133 of Proceedings of Machine Learning Research, pages 344–360. PMLR, 06–12 Dec 2021.

- Disentangling hate in online memes. In Proceedings of the 29th ACM International Conference on Multimedia, pages 5138–5147, 2021.

- Visualbert: A simple and performant baseline for vision and language. arXiv preprint arXiv:1908.03557, 2019.

- Chin-Yew Lin. ROUGE: A package for automatic evaluation of summaries. In Text Summarization Branches Out, pages 74–81, Barcelona, Spain, July 2004. Association for Computational Linguistics.

- A multimodal framework for the detection of hateful memes. arXiv preprint arXiv:2012.12871, 2020.

- Roberta: A robustly optimized bert pretraining approach. arXiv preprint arXiv:1907.11692, 2019.

- Findings of the WOAH 5 shared task on fine grained hateful memes detection. In Proceedings of the 5th Workshop on Online Abuse and Harms (WOAH 2021), pages 201–206, Online, August 2021. Association for Computational Linguistics.

- Clipcap: CLIP prefix for image captioning. CoRR, 2021.

- Niklas Muennighoff. Vilio: State-of-the-art visio-linguistic models applied to hateful memes. CoRR, 2020.

- Bleu: a method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, pages 311–318, Philadelphia, Pennsylvania, USA, July 2002. Association for Computational Linguistics.

- Detecting harmful memes and their targets. In Findings of the Association for Computational Linguistics: ACL/IJCNLP, pages 2783–2796, 2021.

- MOMENTA: A multimodal framework for detecting harmful memes and their targets. In Findings of the Association for Computational Linguistics: EMNLP, pages 4439–4455, 2021.

- Language models are unsupervised multitask learners. OpenAI blog, 1(8):9, 2019.

- Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of Machine Learning Research, 21(140):1–67, 2020.

- How much knowledge can you pack into the parameters of a language model? In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 5418–5426, Online, November 2020. Association for Computational Linguistics.

- Leveraging pre-trained checkpoints for sequence generation tasks. Transactions of the Association for Computational Linguistics, 8:264–280, 2020.

- Social bias frames: Reasoning about social and power implications of language. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 5477–5490, Online, July 2020. Association for Computational Linguistics.

- Disarm: Detecting the victims targeted by harmful memes. In Findings of the Association for Computational Linguistics: NAACL 2022, pages 1572–1588, 2022.

- Detecting and understanding harmful memes: A survey. arXiv preprint arXiv:2205.04274, 2022.

- Characterizing the entities in harmful memes: Who is the hero, the villain, the victim? arXiv preprint arXiv:2301.11219, 2023.

- Axiomatic attribution for deep networks. In International conference on machine learning, pages 3319–3328. PMLR, 2017.

- Multimodal meme dataset (multioff) for identifying offensive content in image and text. In Proceedings of the Second Workshop on Trolling, Aggression and Cyberbullying, pages 32–41, 2020.

- Detectron2. https://github.com/facebookresearch/detectron2, 2019. (accessed February 28, 2023).

- Multimodal hate speech detection via cross-domain knowledge transfer. In Proceedings of the 30th ACM International Conference on Multimedia, MM ’22, page 4505–4514, New York, NY, USA, 2022. Association for Computing Machinery.

- Bertscore: Evaluating text generation with bert. arXiv preprint arXiv:1904.09675, 2019.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.