- The paper demonstrates that small language models achieve comparable performance to large models while offering enhanced adaptability and cost-efficiency.

- It details methods such as parameter reduction and efficient fine-tuning using techniques like LoRA and QLoRA to optimize these models.

- The study highlights societal and industrial benefits, including data sovereignty and privacy, making AI innovation more accessible.

Mini-Giants: "Small" LLMs and Open Source Win-Win

Introduction

The paper explores the potential of "mini-giants," small LMs that offer a feasible alternative to LLMs like GPT-3 and GPT-4. These smaller models, typically with around 10B parameters or fewer, are presented as offering capabilities and performance on par with their larger counterparts. This essay analyzes the considerations, methods, and implications of developing and deploying mini-giants, as discussed in the paper "Mini-Giants: 'Small' LLMs and Open Source Win-Win."

Advantages of Small LLMs

The paper emphasizes three critical advantages of small LMs: adaptability, controllability, and affordability. Smaller models provide enhanced adaptability due to their manageable size, enabling easier fine-tuning and modification for specific applications. The paper argues this adaptability is crucial for domain-specific applications and scenarios that require tight integration of data and models.

In addition, smaller LMs improve controllability by allowing organizations to operate models on local infrastructure, thereby maintaining data sovereignty and autonomy over information flow and usage. This feature is significant in privacy-sensitive industries like finance and healthcare, where data governance is paramount.

Affordability is another key benefit: these models can be trained and fine-tuned within manageable time and cost budgets, which matters especially in open-source settings and communities like Kaggle. The lower resource requirements avoid the prohibitive costs of large LMs, allowing broader access and innovation.

Methods to Develop Small LLMs

Parameter Reduction

Several methods reduce parameter count while preserving effectiveness. Chinchilla established that, for a fixed compute budget, training a smaller model on more data can outperform a larger model trained on less, so parameter count alone is a poor proxy for performance. LLaMA builds on this by training on more tokens than is typical for its size and by using an efficient implementation of multi-head attention.
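As a rough illustration of the data-versus-parameters trade-off, the sketch below uses two widely quoted approximations that are assumptions here, not figures taken from the paper: about 20 training tokens per parameter for compute-optimal training, and about 6 FLOPs per parameter per token for training compute. The function names are hypothetical.

```python
# Rule-of-thumb constants (assumptions, not figures from the paper).
TOKENS_PER_PARAM = 20       # approximate compute-optimal tokens per parameter
FLOPS_PER_PARAM_TOKEN = 6   # approximate forward + backward cost per parameter per token


def compute_optimal_tokens(n_params, tokens_per_param=TOKENS_PER_PARAM):
    """Estimate how many training tokens a model of n_params would need."""
    return n_params * tokens_per_param


def training_flops(n_params, n_tokens):
    """Estimate total training compute as 6 * N * D."""
    return FLOPS_PER_PARAM_TOKEN * n_params * n_tokens


if __name__ == "__main__":
    for n_params in (1_000_000_000, 7_000_000_000, 10_000_000_000):
        n_tokens = compute_optimal_tokens(n_params)
        flops = training_flops(n_params, n_tokens)
        print(f"{n_params:.1e} params -> {n_tokens:.1e} tokens, {flops:.1e} FLOPs")
```

Under these assumptions, a 10B-parameter model would call for roughly 200B training tokens, which is why data budget matters as much as model size when sizing a "small" model.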

Efficient Fine-Tuning

Several techniques make fine-tuning cheaper, notably adapters, prefix tuning, and LoRA. Adapters insert small additional neural network layers into an existing model and train only those layers, which allows targeted customization. LoRA adds trainable low-rank matrices in parallel with the frozen weights and achieves performance close to full-parameter fine-tuning while training far fewer parameters.
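The LoRA idea can be sketched in a few lines of dependency-free Python. This is a minimal illustration, not the reference implementation: the class name `LoRALinear`, the `alpha / rank` scaling, and the initialization scale are assumptions chosen for the example, and real implementations operate on tensors in a deep learning framework.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a trainable low-rank update B @ A."""

    def __init__(self, d_in, d_out, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        # Pretrained weight: kept frozen during fine-tuning.
        self.W = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
        # Low-rank factors: the only trainable parameters.
        self.A = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]  # zero init: no change at start
        self.scale = alpha / rank

    def forward(self, x):
        """Compute W x + scale * B (A x) for a flat input list of length d_in."""
        col = [[v] for v in x]
        base = matmul(self.W, col)
        update = matmul(self.B, matmul(self.A, col))
        return [b[0] + self.scale * u[0] for b, u in zip(base, update)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])

    def frozen_params(self):
        return len(self.W) * len(self.W[0])


if __name__ == "__main__":
    layer = LoRALinear(d_in=64, d_out=64, rank=4)
    print("frozen:", layer.frozen_params(), "trainable:", layer.trainable_params())
```

For a 64 x 64 layer with rank 4, the low-rank factors hold 512 trainable values against 4,096 frozen ones, and the gap widens as the layer grows because the trainable count scales with `rank * (d_in + d_out)` rather than `d_in * d_out`.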

Novel Architectures

QLoRA combines quantization with low-rank adaptation: the frozen base model is stored at 4-bit precision while LoRA adapters are trained on top, which sharply reduces fine-tuning memory. Additionally, architectures like ControlNet demonstrate the potential for adaptable fine-tuning across various generative tasks.
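To make the memory argument concrete, the sketch below quantizes a block of weights to 4 bits and estimates weight storage at different precisions. It uses plain uniform absmax quantization as a stand-in, which is an assumption for illustration and not the specific 4-bit data type QLoRA uses; the function names are hypothetical, and the memory estimate ignores the small overhead of storing per-block scales.

```python
def quantize_block(values, bits=4):
    """Absmax-quantize a block of floats to signed integer codes."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for signed 4-bit codes
    absmax = max(abs(v) for v in values) or 1.0   # avoid division by zero
    scale = absmax / qmax
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from integer codes and the block scale."""
    return [c * scale for c in codes]


def weight_memory_gb(n_params, bits):
    """Storage for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits / 8 / 1e9


if __name__ == "__main__":
    block = [0.70, -0.35, 0.10, 0.0, -0.62, 0.48]
    codes, scale = quantize_block(block)
    restored = dequantize_block(codes, scale)
    print("codes:", codes)
    print("max error:", max(abs(a - b) for a, b in zip(block, restored)))
    for bits in (16, 4):
        print(f"7B weights at {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

Going from 16-bit to 4-bit storage cuts weight memory by a factor of four, at the cost of a rounding error bounded by half the block scale per weight. Only the frozen base weights are quantized this way; the low-rank adapter weights stay in higher precision so they can be trained.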

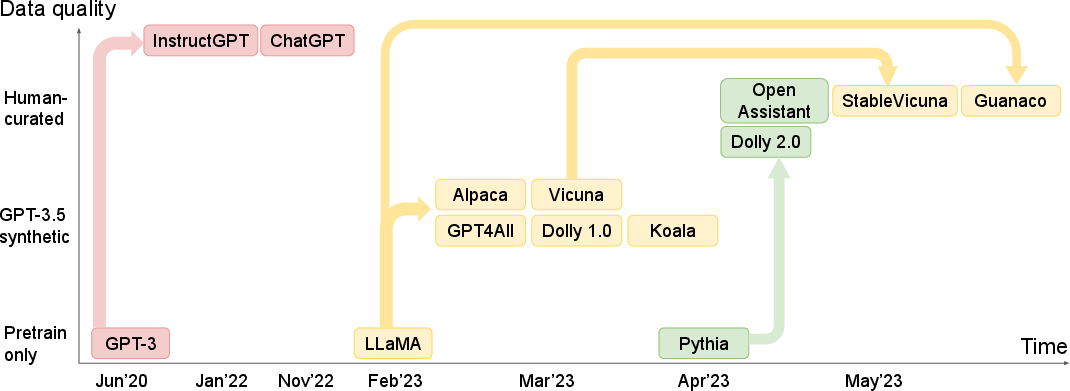

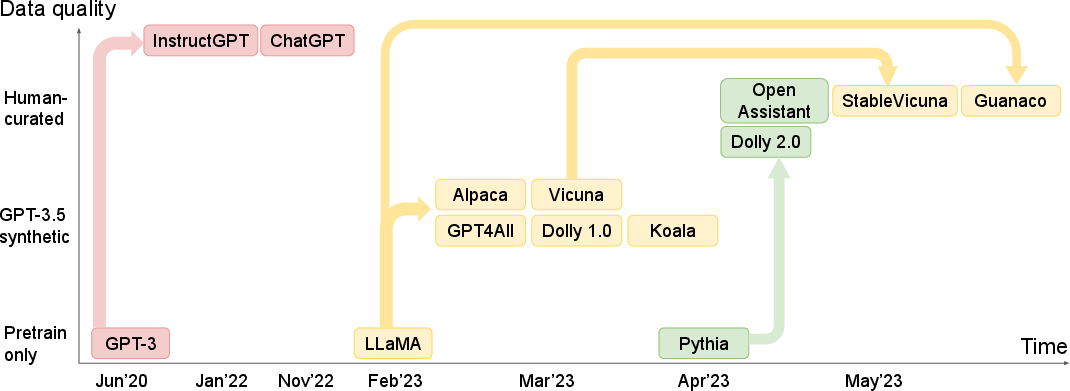

Figure 1: An evolution tree of recently released instruction-following small LMs.

Survey of "Small" Instruction-Following LMs

A detailed survey of small LMs shows rapid advancement and diversification. Models like Alpaca, GPT4All, and Vicuna, often fine-tuned from LLaMA, exemplify innovative approaches to harnessing high-quality instruction data. These efforts highlight how quickly the community has moved to create open-source, instruction-following models.

Models employing human-curated data, such as Dolly and Open Assistant, showcase a different paradigm that balances performance and openness. The release of datasets like oasst1 foregrounds the importance of comprehensive data in fostering versatile small LMs.

Practical Applications

The applications of mini-giants extend their impact beyond theoretical advantages. For instance, they are pivotal in scenarios demanding localized computation and in domains necessitating adherence to privacy standards, such as healthcare. The paper draws parallels with AI systems like Woebot, which utilized small models for therapeutic purposes, underscoring the societal benefits of small LMs.

Discussion and Future Outlook

The paper suggests that mini-giants contribute to AI's democratization through their adaptability, controllability, and affordability. These models empower communities to innovate and address specialized requirements without incurring substantial costs. The authors take a forward-looking view, advocating broad participation in AI development and deployment to mitigate risks and maximize benefits, and so contribute to a more sustainable and equitable technological landscape.

Conclusion

Overall, the paper provides a comprehensive exploration of small LMs as viable, efficient alternatives to their larger counterparts. These mini-giants present significant potential for applications across various industries, powered by efficient training methods and adaptable architectures. The ongoing research and development in this niche promise continued growth in making AI accessible and beneficial for a wider audience.