Small Language Models are the Future of Agentic AI

Abstract: Large language models (LLMs) are often praised for exhibiting near-human performance on a wide range of tasks and valued for their ability to hold a general conversation. The rise of agentic AI systems is, however, ushering in a mass of applications in which language models perform a small number of specialized tasks repetitively and with little variation. Here we lay out the position that small language models (SLMs) are sufficiently powerful, inherently more suitable, and necessarily more economical for many invocations in agentic systems, and are therefore the future of agentic AI. Our argumentation is grounded in the current level of capabilities exhibited by SLMs, the common architectures of agentic systems, and the economy of LM deployment. We further argue that in situations where general-purpose conversational abilities are essential, heterogeneous agentic systems (i.e., agents invoking multiple different models) are the natural choice. We discuss the potential barriers for the adoption of SLMs in agentic systems and outline a general LLM-to-SLM agent conversion algorithm. Our position, formulated as a value statement, highlights the significance of the operational and economic impact even a partial shift from LLMs to SLMs is to have on the AI agent industry. We aim to stimulate the discussion on the effective use of AI resources and hope to advance the efforts to lower the costs of AI of the present day. Calling for both contributions to and critique of our position, we commit to publishing all such correspondence at https://research.nvidia.com/labs/lpr/slm-agents.

Explain it Like I'm 14

What is this paper about?

This paper argues that small language models (SLMs), compact AI models that can run on everyday devices, are often a better choice than very large language models (LLMs) for many “agentic AI” systems. Agentic AI means software agents that use AI to decide what tools to use and in what order to complete tasks. The authors say SLMs are strong enough, easier to use, and cheaper for lots of the jobs agents do, and they explain why shifting from LLMs to SLMs could make AI more practical and affordable.

Quick definitions

- AI agent: Think of an AI agent like a smart helper that can plan a task, call tools (like a calculator, web search, or database), and follow steps to get work done.

- LLM (large language model): A very big, general-purpose text AI that can talk about almost anything, but needs huge computers to run fast.

- SLM (small language model): A smaller text AI that focuses on quicker, cheaper, or more specific tasks and can run on regular computers or even phones.
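
To make the "agent" definition concrete, here is a toy sketch in Python. Everything in it is hypothetical and not from the paper: the tool names, the `choose_tool` rule (a stand-in for the language model's decision), and the `run_agent` helper are illustrative assumptions only.

```python
from typing import Callable

# Hypothetical tool registry; the names and behaviors are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    # Adds integers written as "2+3".
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
    # Upper-cases its input, standing in for any text-processing tool.
    "upper": lambda text: text.upper(),
}


def choose_tool(step: str) -> str:
    """Stand-in for the language model: pick a tool name for one step.

    A real agent would ask an SLM or LLM here; this rule is an assumption.
    """
    return "calculator" if any(ch.isdigit() for ch in step) else "upper"


def run_agent(steps: list[str]) -> list[str]:
    """Run each step through the tool chosen for it and collect the results."""
    results = []
    for step in steps:
        tool_name = choose_tool(step)
        results.append(TOOLS[tool_name](step))
    return results


print(run_agent(["2+3", "hello"]))  # prints ['5', 'HELLO']
```

The only part that needs a language model is `choose_tool`, and that decision is narrow and repetitive. That is the kind of job the paper argues a small model can handle.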

What questions are they trying to answer?

The paper focuses on three simple questions:

- Are small models strong enough to do most of the language tasks agents need?

- Are small models a better fit for how agents work day-to-day?

- Are small models more economical to run, especially at scale?

The authors also ask: If sometimes we do need general conversation or broad reasoning, is it best to mix models — using SLMs most of the time and calling LLMs only when necessary?

How did the authors make their case?

Rather than running one big experiment, the authors wrote a position paper: a carefully argued viewpoint backed by recent examples, system designs, and cost comparisons. Here’s their approach in everyday terms:

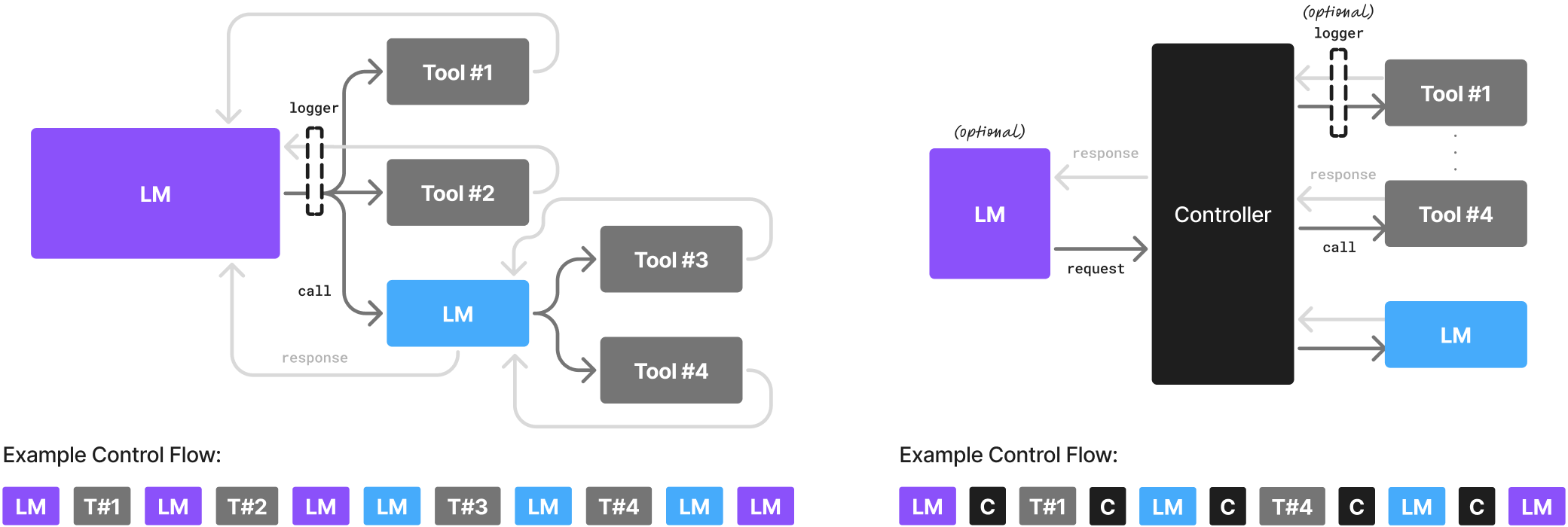

- They look at how agents actually work: agents break big goals into smaller steps, and each step is often repetitive and narrow (like “extract key dates,” “summarize a complaint,” or “format a database entry”). That’s a good match for SLMs.

- They gather recent evidence showing that modern SLMs can do instruction following, tool calling (like using APIs), coding help, and commonsense reasoning at levels close to much larger models — but faster and cheaper.

- They explain why small models fit better operationally: they start quicker, fine-tune faster, and can run locally (on your device), which lowers costs and can improve privacy and speed.

- They consider pushback (for example, “But LLMs understand language more generally” or “Centralized LLMs are cheaper at scale”) and offer counterpoints.

- They outline a practical “conversion algorithm” — a step-by-step plan to replace some LLM calls inside an agent with specialized SLMs over time.

Think of it like recommending a workshop use more specialized tools rather than one huge Swiss Army knife for everything. When you break the job into small, repeatable steps, having the right small tool makes the work faster and cheaper.

What did they find, and why is it important?

The authors highlight three key reasons SLMs fit agentic AI:

- SLMs are strong enough: Modern small models can follow instructions, call tools reliably, and reason in focused tasks. For many agent steps, you don’t need full “talk about anything” ability — you need accuracy and consistent formatting.

- SLMs are a better operational fit: Agents often require strict outputs (like JSON data or exact code snippets). SLMs can be fine-tuned to consistently produce the format the agent’s code expects, which reduces formatting errors and “hallucinations.”

- SLMs are more economical: Smaller models run faster with less energy. They’re cheaper in the cloud and often can be deployed at the “edge” (on laptops, desktops, or phones), saving time and money and improving privacy.

They also suggest a balanced approach: use SLMs as the default for specialized steps, and call an LLM only when you really need broad, open-ended reasoning or conversation. This “heterogeneous” design — mixing different models — can give you the best of both worlds.
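
One way to picture this mix is a small router that tries the SLM first and falls back to an LLM only when the SLM's output fails a strict format check. The Python sketch below is a minimal illustration under stated assumptions, not the paper's design: the `REQUIRED_KEYS` schema, the `parse_strict` and `route` helpers, and the idea of using a failed format check as the fallback trigger are all hypothetical. The `slm` and `llm` arguments are plain callables standing in for real model calls.

```python
import json
from typing import Callable, Optional

# Hypothetical schema: the agent's code expects exactly these keys.
REQUIRED_KEYS = {"customer", "date"}


def parse_strict(raw: str) -> Optional[dict]:
    """Return the parsed object only if it is a JSON object with the expected keys."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if isinstance(data, dict) and set(data) == REQUIRED_KEYS:
        return data
    return None


def route(
    prompt: str,
    slm: Callable[[str], str],
    llm: Callable[[str], str],
) -> tuple[Optional[dict], str]:
    """Try the small model first; call the large model only if validation fails."""
    result = parse_strict(slm(prompt))
    if result is not None:
        return result, "slm"
    # Fallback path: the (assumed) more general but more expensive model.
    return parse_strict(llm(prompt)), "llm"


# Stub models for demonstration; a real system would call model endpoints here.
def well_formed(prompt: str) -> str:
    return '{"customer": "Ada", "date": "2024-01-05"}'


def chatty(prompt: str) -> str:
    return "Sure! The customer is Ada."


print(route("extract fields", well_formed, chatty)[1])  # prints slm
print(route("extract fields", chatty, well_formed)[1])  # prints llm
```

A format check is only one possible trigger. A production router might also consider task type, confidence scores, or cost budgets.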

A simple plan to switch from LLMs to SLMs

The paper describes a practical path for teams to migrate agent tasks from LLMs to SLMs:

- First, safely log what the agent is doing (inputs, outputs, and tool calls) while protecting user privacy.

- Next, clean the data to remove sensitive information.

- Then, group similar tasks together (like “summarize emails” or “fill forms”) so you can build one specialist per task.

- Choose SLMs that fit each task.

- Fine-tune those SLMs on the task data so they produce exactly what your agent needs.

- Keep iterating: as agents run, collect more examples and improve the SLM specialists.

This is like training a set of junior specialists for each station in a factory line, rather than having one all-purpose genius doing everything.
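
The first three steps (log, clean, group) can be sketched in a few lines of Python. This is a deliberately simplified illustration, not the paper's algorithm. The log record shape, the `scrub` and `task_key` helpers, the email-only regex, and grouping by the first word of the prompt are all assumptions made for the example. A real pipeline would need much more thorough data scrubbing and a proper clustering method.

```python
import re
from collections import defaultdict

# Assumption: emails are the only sensitive data; real scrubbing must cover far more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub(text: str) -> str:
    """Replace email addresses with a placeholder token."""
    return EMAIL_RE.sub("[EMAIL]", text)


def task_key(prompt: str) -> str:
    """Crude stand-in for clustering: group prompts by their first word."""
    words = prompt.split()
    return words[0].lower() if words else "unknown"


def build_datasets(logs: list[dict]) -> dict[str, list[dict]]:
    """Turn raw agent logs into one cleaned fine-tuning dataset per task group."""
    datasets = defaultdict(list)
    for record in logs:
        clean = {
            "prompt": scrub(record["prompt"]),
            "output": scrub(record["output"]),
        }
        datasets[task_key(clean["prompt"])].append(clean)
    return dict(datasets)


# Hypothetical logged agent calls.
logs = [
    {"prompt": "Summarize mail from bob@example.com", "output": "Bob asks for a refund."},
    {"prompt": "Summarize the complaint", "output": "Late delivery."},
    {"prompt": "Format entry for alice@example.org", "output": '{"name": "alice"}'},
]

datasets = build_datasets(logs)
print(sorted(datasets))                    # prints ['format', 'summarize']
print(datasets["summarize"][0]["prompt"])  # prints Summarize mail from [EMAIL]
```

Each resulting group would then be used to select and fine-tune one specialist SLM, and the loop repeats as new logs arrive.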

What are the challenges and alternative views?

The authors acknowledge several barriers:

- Industry momentum: A lot of money and infrastructure already support LLMs, so change takes time.

- Benchmarks: Many tests favor general conversation abilities, not the narrow tasks agents usually do.

- Awareness: SLMs don’t get as much hype, even when they’re the right fit.

They also address common counterarguments:

- “LLMs understand language more generally.” True for wide-open tasks, but agents usually break problems into small, focused steps where SLMs are plenty strong, and fine-tuning can make them highly reliable on those steps.

- “Centralized LLMs are cheaper at scale.” Sometimes, but new scheduling tech and falling setup costs make SLM serving more practical, and many agent tasks benefit from edge/local speed and privacy.

What’s the bigger impact?

If teams shift to SLM-first agent designs:

- Costs drop: Running smaller models means faster responses and lower bills.

- Access grows: More people and organizations can build and deploy capable AI agents on regular hardware.

- Sustainability improves: Less energy per task helps the environment.

- Privacy and control get better: Running locally keeps sensitive data on your device.

- Systems become modular: Agents can be built like Lego sets — plug in the best small specialist for each job, and swap or improve them over time.

In short, the paper’s message is simple: For many agent tasks, small, well-trained models are “good enough” and often better. Use SLMs as your default tools, call LLMs only when necessary, and your agents can become faster, cheaper, and more reliable.
