- The paper introduces NeuroImagen, which reconstructs images from EEG signals using multi-level semantics extraction to overcome noisy data challenges.

- It employs a latent diffusion model that fuses pixel-level saliency maps and sample-level semantics for precise and high-resolution image outputs.

- Experiments demonstrate superior performance over baseline methods, highlighting significant improvements in both semantic and structural accuracy.

Seeing through the Brain: Image Reconstruction of Visual Perception from Human Brain Signals

This essay provides an expert summary of the research paper titled "Seeing through the Brain: Image Reconstruction of Visual Perception from Human Brain Signals" (arXiv:2308.02510). The paper introduces NeuroImagen, a method for reconstructing images from electroencephalography (EEG) signals, aiming to bridge the gap between human visual perception and computational models.

Introduction to NeuroImagen

The proposed method, NeuroImagen, addresses the challenge of extracting meaningful visual information from EEG signals, which are inherently noisy and recorded in a time-series format. EEG data are dynamic and provide a more practical solution than the expensive and cumbersome fMRI methods traditionally used in visual decoding. The goal is to effectively reconstruct images using EEG data, which requires overcoming issues such as electrode misplacement and low signal-to-noise ratio (SNR).

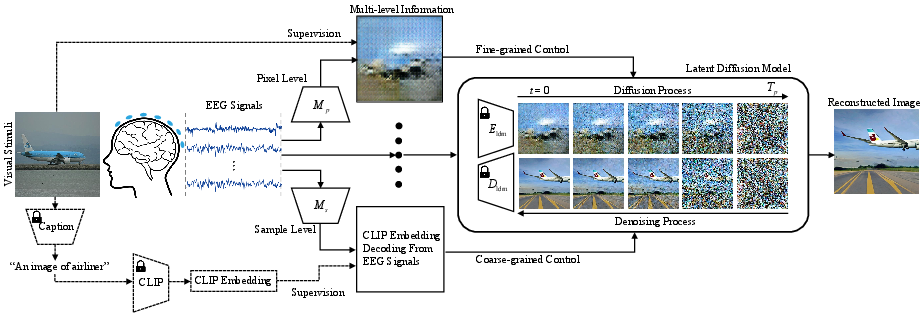

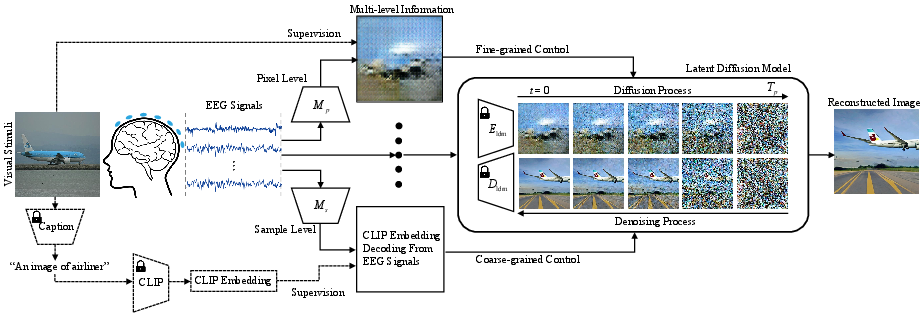

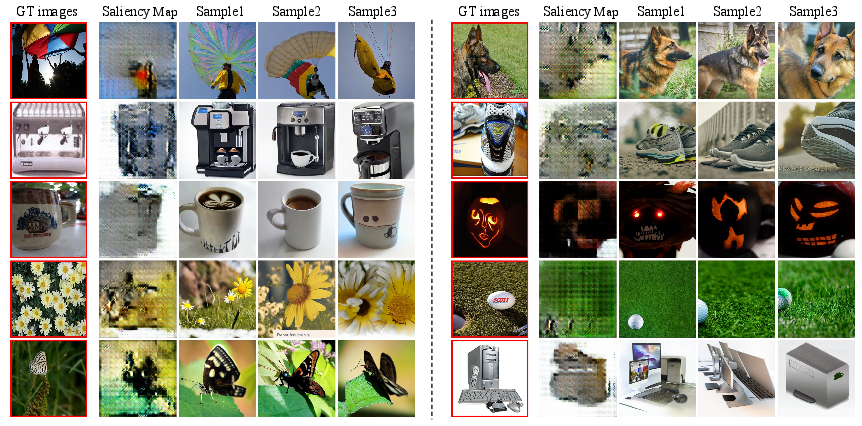

NeuroImagen extracts semantics at multiple levels of granularity from EEG signals and uses a latent diffusion model to turn them into a high-resolution reconstruction of the visual stimulus. It combines pixel-level and sample-level semantic information, which helps it reconstruct images accurately despite the noise inherent in EEG data.

Figure 1: Overview of NeuroImagen. Modules within dotted lines are used only during training.

Methodology

NeuroImagen employs multi-level semantics extraction, incorporating both pixel-level and sample-level semantics:

- Pixel-Level Semantics: This involves saliency maps that provide color, position, and shape information of visual stimuli. Pixel-level extraction aims to capture intricate details and structure from EEG signals.

- Sample-Level Semantics: This decodes coarse-grained information like image category and textual descriptions, using approaches such as Contrastive Language-Image Pretraining (CLIP) to align EEG-extracted data with textual embeddings.
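
The sample-level idea of aligning EEG-derived features with text embeddings can be illustrated with a minimal sketch. It assumes a plain linear projection, a squared-error alignment objective, and cosine-similarity retrieval; these choices, along with every function name and toy value, are hypothetical and are not taken from the paper.

```python
import math

def project(features, weights):
    """Linear map from an EEG feature vector into the text-embedding space (assumed form)."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def squared_error(pred, target):
    """Alignment objective: squared distance between predicted and target embeddings."""
    return sum((p - t) ** 2 for p, t in zip(pred, target))

def cosine(a, b):
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def nearest_caption(pred, caption_embeddings):
    """Return the caption whose embedding is most similar to the prediction."""
    return max(caption_embeddings, key=lambda name: cosine(pred, caption_embeddings[name]))

# Toy values standing in for EEG features and CLIP text embeddings.
eeg_features = [1.0, 0.0, 2.0]
weights = [[1, 0, 0], [0, 0, 1]]
captions = {
    "a photo of a dog": [1.0, 2.0],
    "a photo of a car": [-1.0, 0.5],
}
predicted = project(eeg_features, weights)
best = nearest_caption(predicted, captions)
loss = squared_error(predicted, captions[best])
```

In a trained system the projection would be a learned network and the targets would come from a pretrained text encoder; the sketch only shows how a predicted embedding is compared against candidate captions.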

Image Reconstruction

The extracted semantics are fed into a latent diffusion model, which operates in a compressed latent space rather than in pixel space and therefore reduces computational cost. The pixel-level saliency map supplies the coarse structure of the image, while the sample-level semantics guide the diffusion process toward the decoded content, yielding more precise reconstructions.
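
To make the diffusion step concrete, here is a minimal sketch of the standard forward (noising) process applied to a latent vector, z_t = sqrt(ᾱ_t)·z_0 + sqrt(1 − ᾱ_t)·ε. The linear noise schedule, its endpoint values, and the toy latent are assumptions for illustration. The learned denoiser and its conditioning on EEG-derived semantics are omitted.

```python
import math
import random

def linear_beta_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances (assumed schedule, not from the paper)."""
    if steps == 1:
        return [beta_start]
    return [beta_start + (beta_end - beta_start) * i / (steps - 1) for i in range(steps)]

def alpha_bar(betas, t):
    """Cumulative product of (1 - beta_i) for i = 0..t."""
    prod = 1.0
    for beta in betas[: t + 1]:
        prod *= 1.0 - beta
    return prod

def add_noise(z0, t, betas, rng):
    """Sample z_t from q(z_t | z_0) for a latent given as a flat list of floats."""
    a = alpha_bar(betas, t)
    return [math.sqrt(a) * z + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for z in z0]

betas = linear_beta_schedule(1000)
rng = random.Random(0)
latent = [0.5, -0.5, 0.25, 0.0]          # toy stand-in for an encoded image latent
slightly_noisy = add_noise(latent, 10, betas, rng)
mostly_noise = add_noise(latent, 999, betas, rng)
```

Starting generation from a partially noised latent keeps some of its structure, whereas a latent noised to the last step is close to pure Gaussian noise. That is why the choice of starting step trades off fidelity to the input against freedom for the conditioning signal.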

Figure 2: Examples of ground-truth images, label captions, and BLIP captions, respectively.

Experimental Results

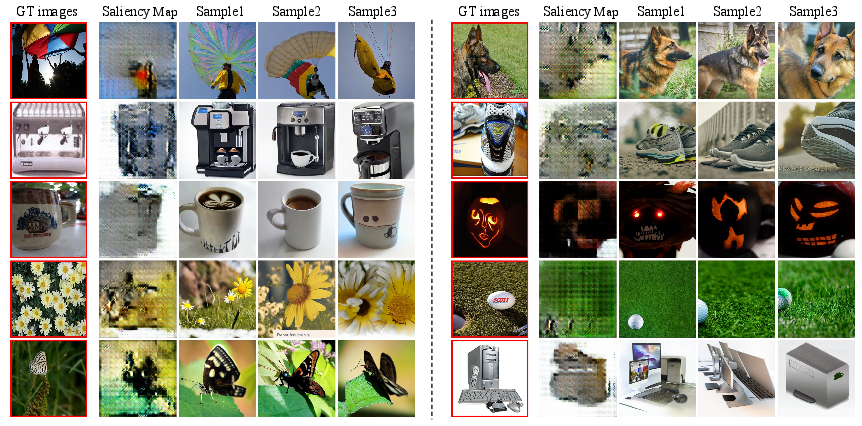

The NeuroImagen framework was tested on an EEG-image dataset containing diverse categories from ImageNet, involving data from multiple subjects. Evaluation metrics such as N-way Top-k Classification Accuracy, Inception Score (IS), and Structural Similarity Index Measure (SSIM) were employed to assess performance. NeuroImagen demonstrated superior quantitative and qualitative results compared to baseline methods like Brain2Image and NeuroVision.
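
One common formulation of N-way top-k classification accuracy can be sketched as follows. It assumes that a pretrained classifier has already scored a reconstructed image over all classes and that the N − 1 distractor classes are drawn uniformly at random; the function name, arguments, and toy scores are hypothetical, and the exact protocol used in the paper may differ.

```python
import random

def n_way_top_k(scores, true_class, n, k, trials, rng):
    """Fraction of trials in which the true class ranks in the top k of n candidates.

    scores: per-class classifier scores for one reconstructed image.
    """
    others = [c for c in range(len(scores)) if c != true_class]
    hits = 0
    for _ in range(trials):
        # The true class plus n - 1 randomly chosen distractor classes.
        candidates = rng.sample(others, n - 1) + [true_class]
        ranked = sorted(candidates, key=lambda c: scores[c], reverse=True)
        hits += true_class in ranked[:k]
    return hits / trials

rng = random.Random(0)
toy_scores = [0.1, 0.9, 0.3, 0.2, 0.05]   # illustrative classifier outputs
accuracy = n_way_top_k(toy_scores, true_class=1, n=3, k=1, trials=100, rng=rng)
```

Averaging this quantity over all test images gives a single accuracy figure. Restricting the comparison to N candidates makes the metric less sensitive to the total number of classes the classifier knows about.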

Figure 3: Main results of NeuroImagen, showcasing reconstructed visual stimuli.

Comparisons with Baselines and Ablations

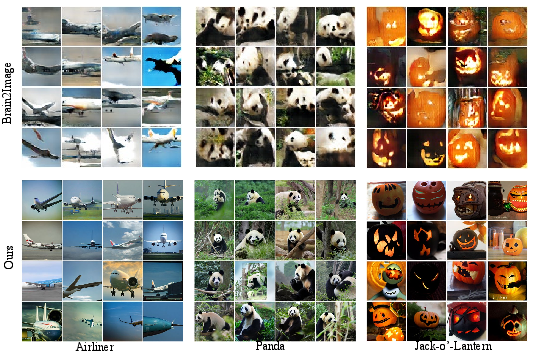

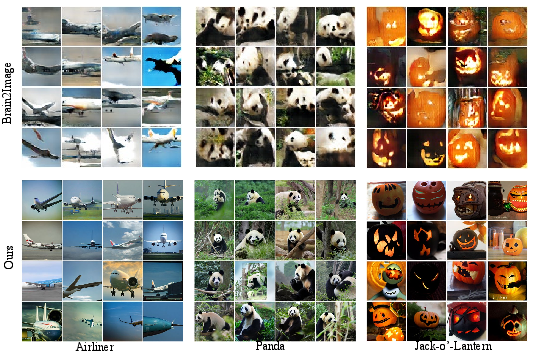

NeuroImagen outperformed existing methods such as Brain2Image in both semantic and structural accuracy. Ablation studies showed that the pixel-level and sample-level semantic modules each contribute to reconstruction quality, and the results were consistent across subjects.

Figure 4: Comparison of the baseline Brain2Image and NeuroImagen.

Conclusion

NeuroImagen represents a significant advance in EEG-based reconstruction of visual perception and illustrates the potential of integrating neuroscience with artificial intelligence. The method offers a preliminary yet promising framework for understanding visually evoked brain activity and paves the way for future work on cognitive computational systems, including interdisciplinary research into human cognitive processing.