- The paper demonstrates how PI-DeepONet integrates physics loss and operator loss to accurately approximate the solution operator of a nonlinear parabolic PDE.

- Once trained, the network is computationally efficient and robust to parameter variations, and it is reported to be less sensitive to the curse of dimensionality.

- The study highlights that building physical laws into the neural network framework reduces the need for extensive training data, making it a scalable tool for complex dynamic systems.

Learning the Solution Operator of a Nonlinear Parabolic Equation Using PI-DeepONet

Introduction

The paper "Learning the Solution Operator of a Nonlinear Parabolic Equation Using Physics-Informed Deep Operator Network" addresses the significant challenge of solving complex differential equations encountered in various scientific and engineering applications. Traditional numerical approaches for solving such equations, including the finite volume method and the finite difference method, often lead to high computational costs and are highly sensitive to parameter changes. This research proposes using a Physics-Informed Deep Operator Network (PI-DeepONet) to approximate the solution operator for a nonlinear parabolic equation, thereby integrating physical laws directly into a deep neural network framework.

Methodology

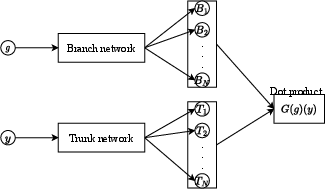

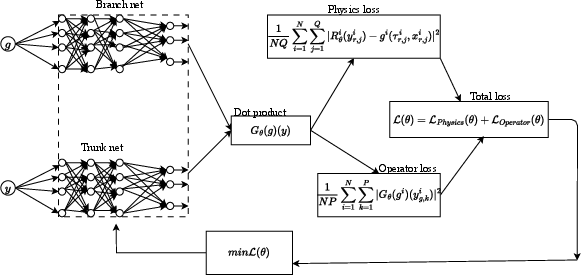

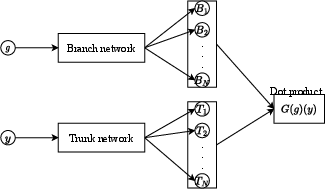

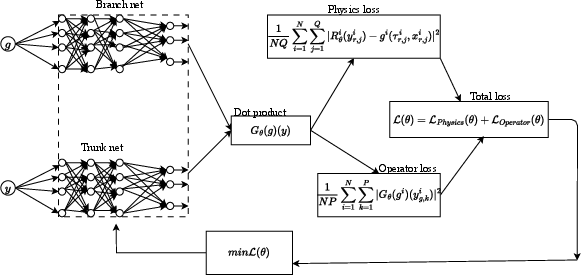

The methodology is built on PI-DeepONet, an extension of DeepONet that incorporates physics-based constraints into the learning process. PI-DeepONet is designed to learn the solution operator of partial differential equations (PDEs), including those with non-trivial nonlinearities and varying parameters. The DeepONet architecture has two primary components: the branch net, which takes the input function sampled at a fixed set of sensor locations, and the trunk net, which takes the coordinates at which the solution is evaluated. The outputs of the two nets are combined by an inner product to give the predicted solution value.
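The branch and trunk structure can be illustrated with a minimal NumPy sketch of a forward pass. This is not the paper's implementation: the layer widths, the number of sensors, the tanh activation, and the helper names `init_mlp`, `mlp`, and `deeponet` are all assumptions chosen for illustration, and the weights are random rather than trained.

```python
import numpy as np

def init_mlp(sizes, rng):
    """Return a list of (W, b) pairs for a fully connected network."""
    return [(rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def mlp(params, z):
    """Apply tanh hidden layers and a linear output layer."""
    for W, b in params[:-1]:
        z = np.tanh(z @ W + b)
    W, b = params[-1]
    return z @ W + b

def deeponet(branch, trunk, g_sensors, coords):
    """Evaluate the network for a batch of input functions and query points.

    g_sensors: (n_funcs, n_sensors) values of g at fixed sensor locations
    coords:    (n_points, 2) query points (tau, x)
    returns:   (n_funcs, n_points) predicted values of phi
    """
    b = mlp(branch, g_sensors)  # (n_funcs, p) coefficients per input function
    t = mlp(trunk, coords)      # (n_points, p) basis values per query point
    return b @ t.T              # inner product over the p latent features

rng = np.random.default_rng(0)
n_sensors, p = 50, 32  # hypothetical sizes, not taken from the paper
branch = init_mlp([n_sensors, 64, p], rng)
trunk = init_mlp([2, 64, p], rng)

g_sensors = rng.normal(size=(4, n_sensors))  # 4 sampled source functions
coords = rng.uniform(size=(10, 2))           # 10 (tau, x) query points
phi_pred = deeponet(branch, trunk, g_sensors, coords)
```

Because the branch output depends only on the input function and the trunk output only on the query point, a trained network of this shape can be evaluated for a new source function with a single forward pass.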

PI-DeepONet is trained to approximate the solution operator by minimizing a loss function with two parts: the physics loss, which penalizes the residual of the governing PDE evaluated on the network's output, and the operator loss, which enforces the initial and boundary conditions. Once both losses have converged, the network can predict PDE solutions for new inputs without retraining or additional data when parameters change.
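One common way to write such a two-part loss is shown below for an equation of the form ∂τφ − ∂x²α(φ) = g. This is a sketch under assumptions, not the paper's exact formulation: the mean-squared form, the equal weighting of the two terms, and the symbols $G_\theta$ (the network), $N$ (number of sampled source functions $g_i$), $Q$ (interior collocation points), $P$ (initial and boundary points), and $\varphi_i^{\mathrm{bc}}$ (the prescribed initial and boundary values) are introduced here for illustration.

$$
\begin{aligned}
\mathcal{L}(\theta) &= \mathcal{L}_{\mathrm{physics}}(\theta) + \mathcal{L}_{\mathrm{operator}}(\theta), \\
\mathcal{L}_{\mathrm{physics}}(\theta) &= \frac{1}{NQ} \sum_{i=1}^{N} \sum_{j=1}^{Q} \left| \partial_\tau G_\theta(g_i)(\tau_j, x_j) - \partial_x^2\, \alpha\big(G_\theta(g_i)\big)(\tau_j, x_j) - g_i(\tau_j, x_j) \right|^2, \\
\mathcal{L}_{\mathrm{operator}}(\theta) &= \frac{1}{NP} \sum_{i=1}^{N} \sum_{k=1}^{P} \left| G_\theta(g_i)(\tau_k, x_k) - \varphi_i^{\mathrm{bc}}(\tau_k, x_k) \right|^2 .
\end{aligned}
$$

In physics-informed networks the derivatives in the first term are typically obtained by automatic differentiation of the network output with respect to its trunk inputs, so no precomputed solution data is needed at the interior points.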

Figure 1: DeepONet architecture illustrating the branch and trunk networks.

The paper focuses on a nonlinear parabolic equation expressed as:

$$
\partial_\tau \varphi - \partial_x^2\, \alpha(\varphi) = g(\tau, x)
$$

where g is the source term and φ is the solution. PI-DeepONet is used to approximate the operator that maps the source function g to the solution φ. This mapping is nonlinear because α(φ) enters the equation nonlinearly, and the physics loss keeps the learned operator consistent with the PDE, which the paper derives from the Hamilton-Jacobi-Bellman (HJB) equation.

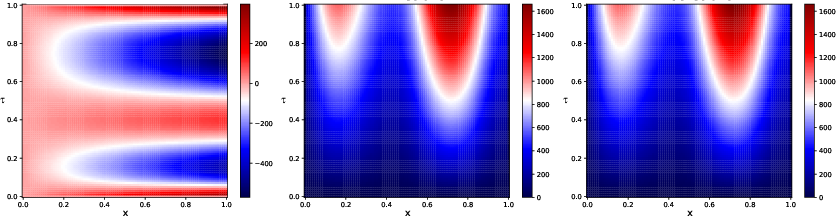

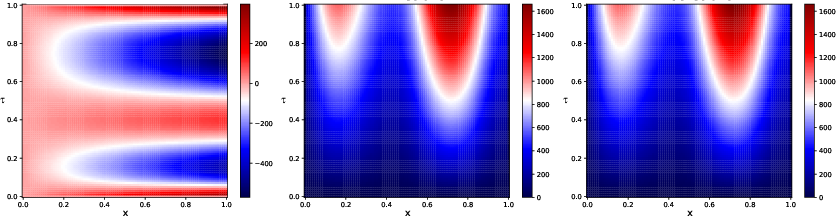

Figure 2: Comparison of a physics-informed DeepONet solution (NN solution) and the numerical solution obtained by the finite difference method (FDM numerical solution). The right-hand side represents the input function g.
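For readers who want a feel for the kind of finite difference reference used in such a comparison, the sketch below integrates an equation of this form with an explicit scheme. It is not the paper's solver. The nonlinearity `alpha`, the unit spatial interval, the zero initial condition, the homogeneous Dirichlet boundary conditions, and the names `solve_fdm` and `g_manufactured` are all assumptions made for illustration. The source term is manufactured so that the exact solution is φ = τ sin(πx), which gives something to check the scheme against.

```python
import numpy as np

def alpha(phi):
    # Hypothetical nonlinearity; the source does not specify alpha.
    return phi + phi**3

def solve_fdm(g, nx=51, t_end=0.1, safety=0.4):
    """Explicit finite differences for phi_tau - (alpha(phi))_xx = g on x in [0, 1].

    Assumes phi(0, x) = 0 and phi(tau, 0) = phi(tau, 1) = 0 (illustrative choices).
    """
    x = np.linspace(0.0, 1.0, nx)
    dx = x[1] - x[0]
    phi = np.zeros(nx)
    tau = 0.0
    while tau < t_end:
        # alpha'(phi) = 1 + 3 phi^2 acts as the local diffusivity and
        # limits the stable step size of the explicit scheme.
        dmax = 1.0 + 3.0 * np.max(phi**2)
        dt = min(safety * dx**2 / dmax, t_end - tau)
        a = alpha(phi)
        lap = (a[2:] - 2.0 * a[1:-1] + a[:-2]) / dx**2  # second difference of alpha(phi)
        phi[1:-1] += dt * (lap + g(tau, x[1:-1]))       # boundary values stay at zero
        tau += dt
    return x, phi

def g_manufactured(tau, x):
    # Source term for which phi = tau * sin(pi x) solves the equation exactly.
    s, c = np.sin(np.pi * x), np.cos(np.pi * x)
    return s + np.pi**2 * tau * s - np.pi**2 * tau**3 * (6.0 * s * c**2 - 3.0 * s**3)

x, phi = solve_fdm(g_manufactured)
err = np.max(np.abs(phi - 0.1 * np.sin(np.pi * x)))  # compare with exact solution at tau = 0.1
```

The step-size restriction visible in the loop is one reason explicit grid-based solvers become expensive on fine meshes, and the whole time integration has to be repeated for every new source function g.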

Results and Discussion

The results show that PI-DeepONet approximates the solution operator accurately and handles parametric variations efficiently. The network can be trained without extensive datasets because the physics-based loss guides the optimization. Notably, a trained PI-DeepONet solves the PDE in a fraction of the computational time required by traditional methods and does not need to be retrained when parameters change. This makes PI-DeepONet a scalable and adaptable tool for complex dynamic systems under varying conditions.

The research also emphasizes the model's reduced sensitivity to the "curse of dimensionality" commonly faced in high-dimensional input spaces. Because the network learns the operator itself rather than a single solution, it supports more scalable computation, which could widen its use in the areas of applied mathematics and engineering where such equations are common.

Conclusion

PI-DeepONet marks a significant step forward in efficiently solving complex nonlinear PDEs, as demonstrated by its application to a model derived from the HJB equation. Incorporating physics directly into the neural network structure both improves prediction accuracy and reduces computational cost. Future work could explore broader applications and further optimize the architecture for efficiency and adaptability. This research underscores the promise of combining machine learning techniques with traditional mathematical frameworks to tackle analytically intractable problems in science and engineering.