A Theory of Multimodal Learning

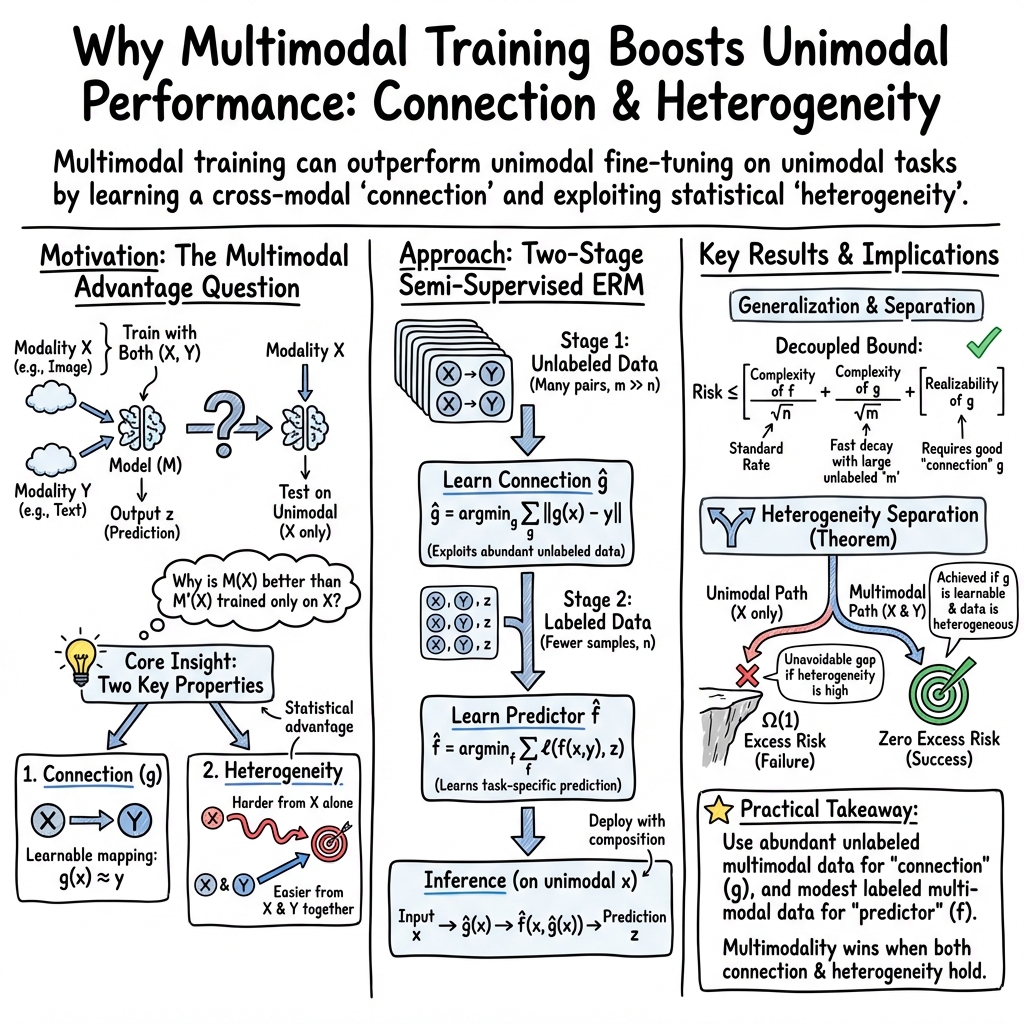

Abstract: Human perception of the empirical world involves recognizing the diverse appearances, or 'modalities', of underlying objects. Although this perspective has long been considered in philosophy and cognitive science, multimodality remains relatively under-explored in machine learning. Moreover, current studies of multimodal machine learning are limited to empirical practice and lack theoretical foundations beyond heuristic arguments. An intriguing finding from the practice of multimodal learning is that a model trained on multiple modalities can outperform a finely tuned unimodal model, even on unimodal tasks. This paper provides a theoretical framework that explains this phenomenon by studying the generalization properties of multimodal learning algorithms. We demonstrate that multimodal learning admits a generalization bound superior to that of unimodal learning by up to a factor of $O(\sqrt{n})$, where $n$ is the sample size. This advantage arises when both connection and heterogeneity exist between the modalities.

