- The paper demonstrates that miscalibrated confidence—both confidently incorrect and unconfidently correct predictions—significantly undermines user trust in AI systems.

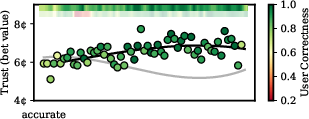

- Experiments using a betting game reveal that even a few confidently incorrect predictions lead to persistent trust degradation and decreased collaborative performance.

- Predictive models ranging from logistic regression to GRU networks capture the temporal dynamics of user-AI interactions, and the results emphasize the need for robust calibration.

Understanding User Trust in AI Under Uncertainty

Introduction

The paper "A Diachronic Perspective on User Trust in AI under Uncertainty" investigates how user trust in AI systems evolves over time, and in particular how miscalibrated confidence signals erode that trust. This question matters most in high-stakes environments, where AI decisions have significant consequences. Through a series of experiments, the authors examine the factors that drive trust degradation and show that accurate confidence calibration is important for effective human-AI collaboration.

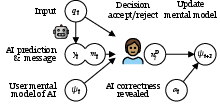

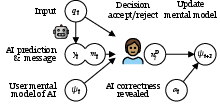

Figure 1: Diachronic view of a typical human-AI collaborative setting. At each timestep $t$, the user uses their prior mental model $\psi_t$ to accept or reject the AI system's answer $y_t$, supported by an additional message $m_t$ (the AI's confidence), and then updates their mental model of the AI system to $\psi_{t+1}$.
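The update loop in Figure 1 can be sketched in a few lines of Python. This is a toy illustration, not the paper's user model: the mental model $\psi_t$ is collapsed into a single hypothetical scalar `trust`, and the acceptance threshold, learning rate, and confidence-scaled update rule (`decide`, `update`, `run_session`) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class MentalModel:
    """Hypothetical stand-in for psi_t: one scalar trust level in [0, 1]."""
    trust: float = 0.5


def decide(psi: MentalModel, confidence: float, threshold: float = 0.4) -> bool:
    """Accept y_t when trust-weighted confidence m_t clears an assumed threshold."""
    return psi.trust * confidence >= threshold


def update(psi: MentalModel, confidence: float, correct: bool, lr: float = 0.3) -> MentalModel:
    """Move trust toward 1 after a correct answer and toward 0 after an incorrect one.

    The step is scaled by the stated confidence, so a confidently incorrect
    answer lowers trust more than an unconfident one (an assumption).
    """
    target = 1.0 if correct else 0.0
    step = lr * confidence
    return MentalModel(trust=psi.trust + step * (target - psi.trust))


def run_session(
    interactions: Sequence[Tuple[float, bool]], psi: MentalModel = MentalModel()
) -> Tuple[MentalModel, List[Tuple[bool, float]]]:
    """Replay (m_t, correct_t) pairs; log each accept/reject decision and the trust it used."""
    log = []
    for confidence, correct in interactions:
        log.append((decide(psi, confidence), psi.trust))
        psi = update(psi, confidence, correct)  # psi_t -> psi_{t+1}
    return psi, log
```

Replaying three confident correct answers followed by one confidently incorrect answer (`[(0.9, True)] * 3 + [(0.95, False)]`) raises trust from 0.5 to about 0.81 and then drops it to about 0.58, erasing most of the earlier gain in a single step.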

Miscalibration and its Effects on User Trust

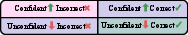

The central hypothesis is that user trust in AI systems can be severely affected by miscalibration, where an AI's confidence does not align with its prediction accuracy. The research categorizes miscalibration into confidently incorrect (CI) and unconfidently correct (UC) predictions and examines their distinct impacts on user trust.
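The two miscalibration categories can be made concrete with a small labeling function. The 0.5 confidence cut-off and the names `categorize` and `miscalibrated_counts` are illustrative assumptions; the study's own definition of "confident" may differ, and the CC and UI labels appear here only to complete the 2×2 grid.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def categorize(confidence: float, correct: bool, threshold: float = 0.5) -> str:
    """Label one prediction; the 0.5 cut-off between confident and unconfident is assumed."""
    if confidence >= threshold:
        return "CC" if correct else "CI"  # confidently correct / confidently incorrect
    return "UC" if correct else "UI"      # unconfidently correct / unconfidently incorrect


def miscalibrated_counts(predictions: Iterable[Tuple[float, bool]]) -> Dict[str, int]:
    """Count the two miscalibrated categories (CI and UC) in (confidence, correct) pairs."""
    counts = Counter(categorize(c, ok) for c, ok in predictions)
    return {"CI": counts["CI"], "UC": counts["UC"]}
```

For example, `miscalibrated_counts([(0.95, False), (0.2, True), (0.9, True)])` returns `{'CI': 1, 'UC': 1}`: the third prediction is confident and correct, so it is not counted.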

Experimental Setup and Findings

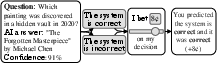

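The experiments use a betting game in which users interact with a simulated AI system that makes predictions and states a confidence level for each one. Users bet on whether each AI prediction is correct, and the bet serves as a proxy for trust. Comparing a control condition with a confidently incorrect (CI) intervention condition, the study observes that even a few CI predictions cause a persistent drop in trust and reduce collaborative performance.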
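A minimal simulation of the betting setup might look like the following. The stake range (`MAX_BET`), the symmetric win-or-lose payoff, the calibrated-by-construction AI, and the way CI predictions are injected at chosen steps are all assumptions for illustration; the paper's exact reward structure is not described here.

```python
import random
from typing import AbstractSet, Iterator, Tuple

MAX_BET = 10  # hypothetical stake range: 0..10 points


def simulated_ai(
    n_steps: int, seed: int = 0, ci_steps: AbstractSet[int] = frozenset()
) -> Iterator[Tuple[float, bool]]:
    """Yield (confidence, correct) pairs from a hypothetical simulated AI.

    Outside ci_steps the AI is calibrated by construction: an answer stated with
    confidence c is correct with probability c. At each step in ci_steps a
    confidently incorrect (CI) prediction is injected instead.
    """
    rng = random.Random(seed)
    for t in range(n_steps):
        if t in ci_steps:
            yield rng.uniform(0.9, 1.0), False
        else:
            confidence = rng.uniform(0.5, 1.0)
            yield confidence, rng.random() < confidence


def payoff(bet: int, ai_correct: bool) -> int:
    """Assumed symmetric rule: the user wins the stake if the AI is right and loses it otherwise."""
    if not 0 <= bet <= MAX_BET:
        raise ValueError(f"bet must be between 0 and {MAX_BET}")
    return bet if ai_correct else -bet


if __name__ == "__main__":
    session = list(simulated_ai(20, seed=1, ci_steps={5, 6}))
    # A naive user who bets in proportion to the stated confidence every round.
    bets = [round(MAX_BET * conf) for conf, _ in session]
    total = sum(payoff(b, ok) for b, (_, ok) in zip(bets, session))
    print(f"total payoff over {len(session)} rounds: {total}")
```

A naive user who always bets in proportion to stated confidence loses the most exactly on the injected CI rounds, which is one intuition for why such rounds are so damaging to trust.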

Modeling and Implications

To better understand trust dynamics, the authors develop several predictive models, including logistic regression and GRU networks, that estimate user trust and predict user decisions from the interaction history and the AI's confidence levels. Recurrent models such as GRUs capture the temporal dynamics of these interactions more effectively than simple linear models.
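The two model families can be contrasted in a short NumPy sketch. The feature set (current confidence plus recent AI accuracy), the synthetic labels, and all function names are hypothetical, not the paper's setup. The logistic regression sees history only through a fixed window of hand-made features, while the GRU (shown untrained, with random weights) folds the entire interaction sequence into its hidden state, which is one way a recurrent model can pick up longer-range temporal effects.

```python
import numpy as np


def sigmoid(v):
    return 1.0 / (1.0 + np.exp(-v))


def history_features(conf, correct, window=3):
    """Per-step features [m_t, accuracy of the previous `window` AI answers, bias].

    The feature set is an illustrative assumption.
    """
    conf = np.asarray(conf, dtype=float)
    correct = np.asarray(correct, dtype=float)
    rows = []
    for t in range(len(conf)):
        past = correct[max(0, t - window):t]
        recent_acc = past.mean() if past.size else 0.5  # no history yet: neutral prior
        rows.append([conf[t], recent_acc, 1.0])
    return np.array(rows)


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Plain batch gradient descent on the mean log-loss."""
    w = np.zeros(X.shape[1])
    for _ in range(epochs):
        w -= lr * X.T @ (sigmoid(X @ w) - y) / len(y)
    return w


def init_gru(n_in, n_hidden, seed=0):
    """Random (untrained) parameters for the update, reset and candidate gates."""
    rng = np.random.default_rng(seed)
    P = {}
    for g in "zrh":
        P["W" + g] = rng.normal(0.0, 0.5, (n_hidden, n_in))
        P["U" + g] = rng.normal(0.0, 0.5, (n_hidden, n_hidden))
        P["b" + g] = np.zeros(n_hidden)
    return P


def gru_states(X, P):
    """Run a GRU over the feature rows; each hidden state summarizes the history so far."""
    h = np.zeros(P["Uz"].shape[0])
    states = []
    for x in X:
        z = sigmoid(P["Wz"] @ x + P["Uz"] @ h + P["bz"])  # update gate
        r = sigmoid(P["Wr"] @ x + P["Ur"] @ h + P["br"])  # reset gate
        h_cand = np.tanh(P["Wh"] @ x + P["Uh"] @ (r * h) + P["bh"])
        h = (1.0 - z) * h + z * h_cand  # one common GRU convention
        states.append(h)
    return np.array(states)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    conf = rng.uniform(0.5, 1.0, 2000)
    correct = rng.random(2000) < conf
    X = history_features(conf, correct)
    # Synthetic "user accepts" labels drawn from an assumed ground-truth logistic rule.
    y = (rng.random(2000) < sigmoid(X @ np.array([4.0, 3.0, -5.0]))).astype(float)
    print("logistic weights:", fit_logistic(X, y))
    print("final GRU state:", gru_states(X[:10], init_gru(3, 4))[-1])
```

In practice the GRU would be trained end-to-end on real interaction logs, typically with a deep learning framework; plain NumPy is used here only to keep the sketch dependency-light.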

The study's findings underscore the need for robust calibration methods in AI systems: even subtle miscalibrations can have profound effects on trust and collaborative efficiency.
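Checking calibration requires a way to measure it. The metric the authors use is not specified here; expected calibration error (ECE) is one standard choice, sketched below with equal-width confidence bins. The bin count and the left-closed bin boundaries are assumptions, and other conventions exist.

```python
import numpy as np


def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted average gap between accuracy and mean confidence per bin."""
    conf = np.asarray(confidences, dtype=float)
    acc = np.asarray(correct, dtype=float)
    # Equal-width bins over [0, 1]; a confidence of exactly 1.0 goes into the last bin.
    bins = np.minimum((conf * n_bins).astype(int), n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bins == b
        if mask.any():
            ece += mask.mean() * abs(acc[mask].mean() - conf[mask].mean())
    return ece
```

A model that says 80% and is right 8 times out of 10 scores an ECE near 0, while a model that answers four questions with 95% confidence and gets all of them wrong (the CI pattern) scores 0.95.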

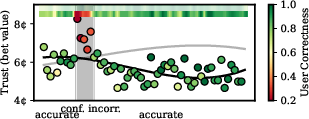

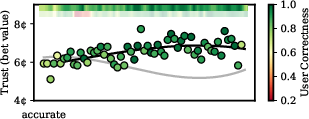

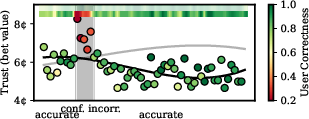

Figure 4: Average user bet values (y-axis) and bet correctness with no intervention (control, top) and CI intervention (bottom).

Conclusion

This paper highlights the critical role of confidence calibration in AI systems, especially in high-stakes decision scenarios where user trust is integral to success. The results point towards the need for user-centric design approaches that consider trust dynamics, facilitating more reliable human-AI collaborations. Future research should focus on strategies for quickly regaining trust and explore diverse reward structures in real-world applications.

Continued study of trust dynamics should help in building AI systems that align more closely with human expectations, making their integration into various domains safer and more effective.