- The paper presents Prithvi, a foundation model pre-trained on over 1TB of multispectral satellite imagery using a masked autoencoder architecture.

- The paper details a novel data sampling and preprocessing pipeline for harmonized Landsat-Sentinel imagery, ensuring broad geospatial representation.

- The paper demonstrates Prithvi's effectiveness on cloud gap imputation, flood mapping, and wildfire scar segmentation, outperforming traditional methods.

Foundation Models for Generalist Geospatial Artificial Intelligence

This essay provides an overview of the paper "Foundation Models for Generalist Geospatial Artificial Intelligence" (2310.18660). The paper applies foundation models to geospatial data and centers on Prithvi, a large-scale, transformer-based model trained on substantial volumes of multispectral satellite imagery.

Introduction to Geospatial Foundation Models

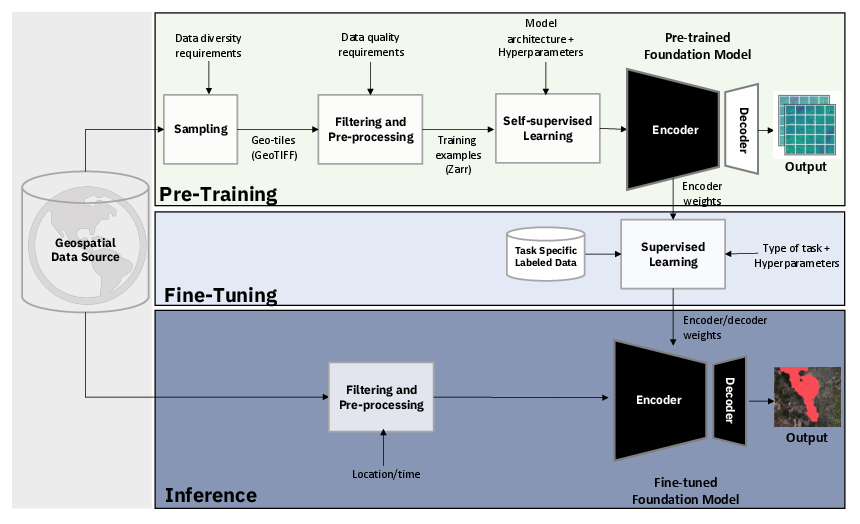

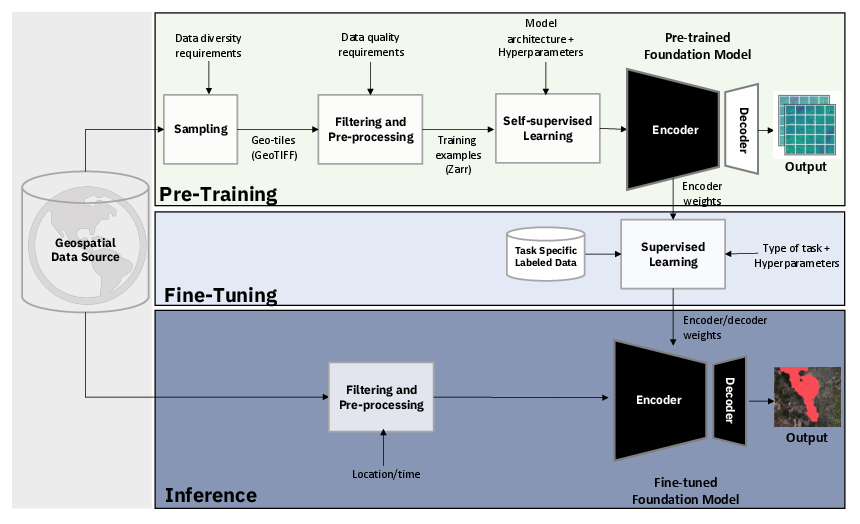

Geospatial AI has traditionally relied on task-specific models trained on labeled data, which is labor-intensive to acquire and label. Foundation models, which employ self-supervised learning on large unlabeled datasets before fine-tuning on smaller labeled datasets, represent a paradigm shift. Prithvi takes this approach: it is pre-trained on over 1TB of data from the Harmonized Landsat Sentinel-2 (HLS) dataset and fine-tuned for tasks such as cloud gap imputation and wildfire scar segmentation.

Figure 1: We propose a first-of-its-kind framework for the development of geospatial foundation models from raw satellite imagery, which we leverage to generate the Prithvi-100M model.

Data Preprocessing and Training

Harmonized Landsat Sentinel-2 Dataset

Prithvi utilizes the HLS dataset, which offers harmonized data from multiple satellite sources at a resolution of 30 meters. The dataset combines observations from Landsat and Sentinel satellites to provide frequent and comprehensive imagery suitable for pretraining large models.

Efficient Data Sampling and Preprocessing

The paper introduces a novel pipeline to efficiently sample and preprocess satellite imagery. Stratified sampling based on geospatial statistics ensures broad representation without redundancy. Preprocessing then excludes data with significant cloud cover, which is identified using Fmask.
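To make the two steps concrete, here is a minimal Python sketch, not the paper's actual pipeline. It assumes each candidate tile already carries a hypothetical `stratum` label (for example a land-cover or climate class) and a `cloud_fraction` value derived from a cloud mask such as Fmask. The field names, the 0.2 threshold, and the per-stratum quota are illustrative choices, not values from the paper.

```python
import random
from collections import defaultdict


def filter_cloudy(tiles, max_cloud_fraction=0.2):
    """Keep only tiles whose cloud fraction is at or below the threshold."""
    return [t for t in tiles if t["cloud_fraction"] <= max_cloud_fraction]


def stratified_sample(tiles, per_stratum, seed=0):
    """Draw up to `per_stratum` tiles from every stratum, without replacement."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for tile in tiles:
        groups[tile["stratum"]].append(tile)
    sample = []
    for stratum in sorted(groups):  # sorted for a reproducible ordering
        group = groups[stratum]
        sample.extend(rng.sample(group, min(per_stratum, len(group))))
    return sample


# Hypothetical tile metadata; a real pipeline would read this from a catalog.
tiles = [
    {"id": "t1", "stratum": "forest", "cloud_fraction": 0.05},
    {"id": "t2", "stratum": "forest", "cloud_fraction": 0.60},
    {"id": "t3", "stratum": "forest", "cloud_fraction": 0.10},
    {"id": "t4", "stratum": "desert", "cloud_fraction": 0.00},
    {"id": "t5", "stratum": "urban", "cloud_fraction": 0.15},
    {"id": "t6", "stratum": "urban", "cloud_fraction": 0.90},
]

clear_tiles = filter_cloudy(tiles)
selected = stratified_sample(clear_tiles, per_stratum=1)
print([t["id"] for t in selected])
```

Capping the draw per stratum is what keeps over-represented regions from dominating the sample. Filtering before sampling ensures the quota is spent only on usable tiles.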

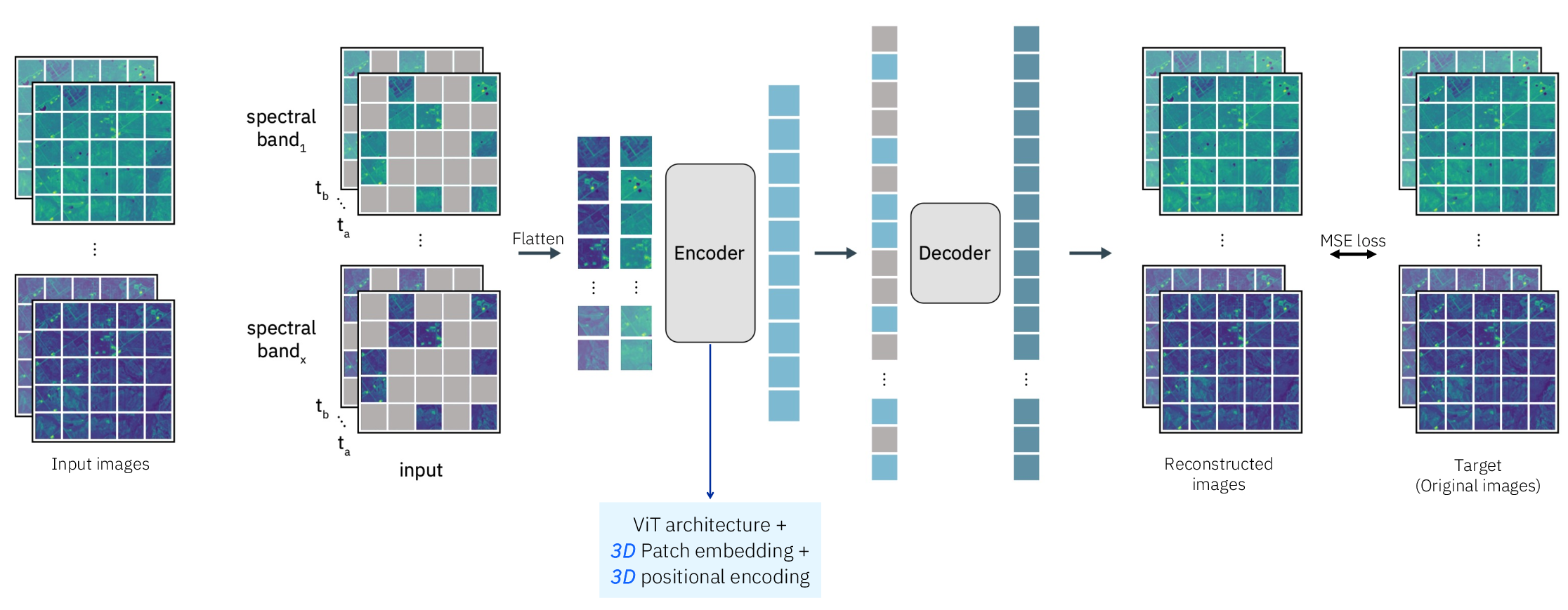

Model Architecture

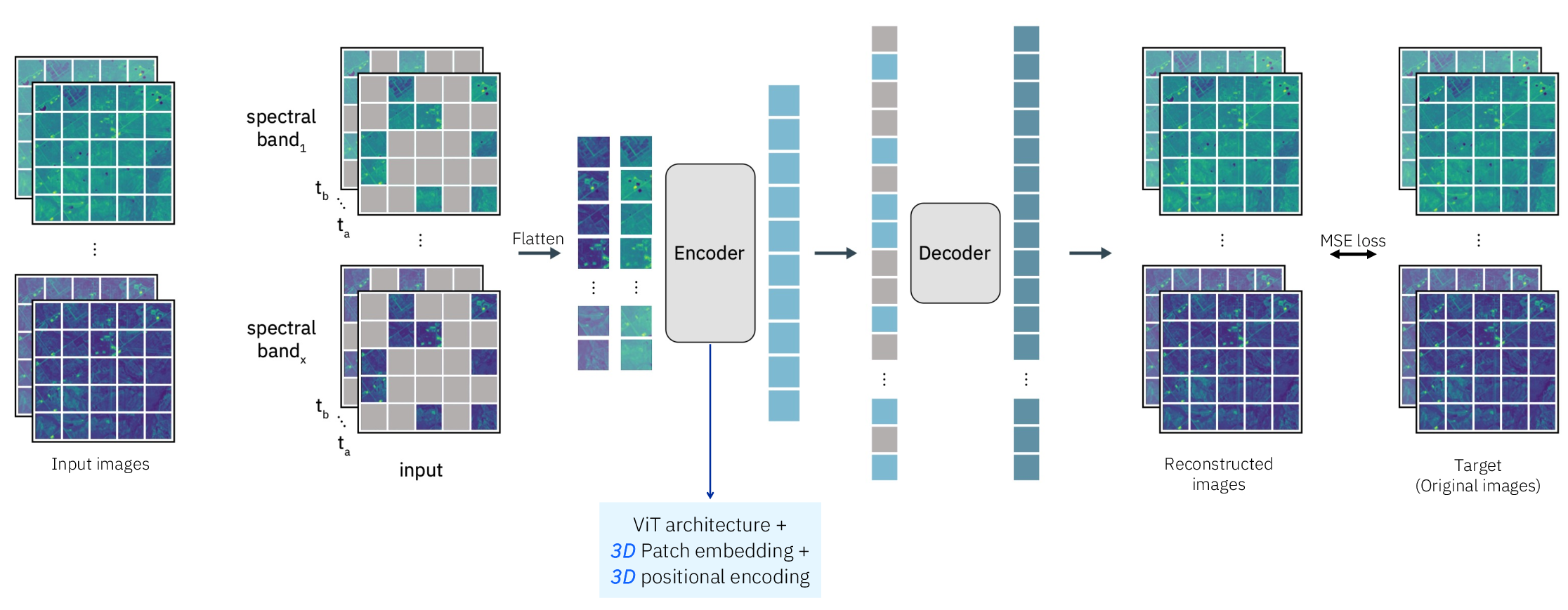

Prithvi uses a Masked Autoencoder (MAE) architecture with a Vision Transformer (ViT) backbone for self-supervised learning. The model processes spatiotemporal data through 3D positional and patch embeddings tailored for satellite imagery.

Figure 2: The masked autoencoder (MAE) structure for pre-training Prithvi on large-scale multi-temporal and multi-spectral satellite images.
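As a rough illustration of how a spatiotemporal input becomes a sequence of tokens with 3D positions, the sketch below computes the token grid for a stack of frames and lists each token's (time, row, column) index, which is the kind of coordinate a 3D positional embedding is keyed on. The input size (3 frames of 224×224 pixels) and the patch size (1×16×16) are assumptions chosen for illustration, and the function names are hypothetical.

```python
def token_grid(frames, height, width, patch_t, patch_h, patch_w):
    """Return the number of patches along time, height, and width."""
    if frames % patch_t or height % patch_h or width % patch_w:
        raise ValueError("input dimensions must be divisible by the patch size")
    return (frames // patch_t, height // patch_h, width // patch_w)


def token_positions(grid):
    """List the (time, row, col) index of every token in sequence order."""
    grid_t, grid_h, grid_w = grid
    return [
        (t, r, c)
        for t in range(grid_t)
        for r in range(grid_h)
        for c in range(grid_w)
    ]


# Assumed example: 3 time steps of 224x224 pixels, patches of 1x16x16.
grid = token_grid(frames=3, height=224, width=224, patch_t=1, patch_h=16, patch_w=16)
positions = token_positions(grid)
print(grid, len(positions))  # (3, 14, 14) 588
```

Each token would then be a linear projection of the pixel values (across all spectral bands) inside its patch, with a positional embedding added according to its 3D index.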

Pretraining Details

Pretraining follows the MAE objective: a portion of the input tokens is masked, and the model reconstructs the masked tokens from the latent representations of the visible ones. Training uses the AdamW optimizer, and the large data volume is handled by storing inputs as Zarr files for efficient loading.
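The masking step and the reconstruction loss can be sketched in a few lines of plain Python. This is a toy version under stated assumptions, not the paper's training code: the 0.75 mask ratio, the token count of 588, and the function names are illustrative, and the loss is a mean squared error averaged over masked tokens only.

```python
import random


def random_mask(num_tokens, mask_ratio, seed=0):
    """Split token indices into visible and masked sets uniformly at random."""
    rng = random.Random(seed)
    order = list(range(num_tokens))
    rng.shuffle(order)
    num_masked = int(num_tokens * mask_ratio)
    masked = sorted(order[:num_masked])
    visible = sorted(order[num_masked:])
    return visible, masked


def masked_mse(target, prediction, masked):
    """Mean squared error computed over the masked tokens only."""
    if not masked:
        raise ValueError("at least one token must be masked")
    total, count = 0.0, 0
    for i in masked:
        for t_val, p_val in zip(target[i], prediction[i]):
            total += (t_val - p_val) ** 2
            count += 1
    return total / count


# Assumed example: 588 tokens with three quarters of them hidden from the encoder.
visible, masked = random_mask(num_tokens=588, mask_ratio=0.75)
print(len(visible), len(masked))  # 147 441

# Toy tokens of two values each; only token 1 is masked and scored.
target = [[1.0, 2.0], [3.0, 4.0]]
prediction = [[1.0, 2.0], [0.0, 0.0]]
print(masked_mse(target, prediction, masked=[1]))  # 12.5
```

Only the visible tokens pass through the encoder, which is what makes high mask ratios cheap to train with. The decoder then predicts the hidden patches, and the loss ignores tokens the model was allowed to see.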

Application on Downstream Tasks

Prithvi's architecture allows it to be effectively adapted for various Earth observation tasks, including:

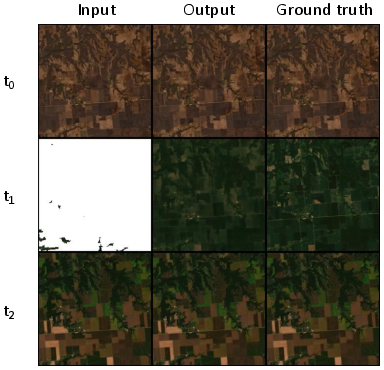

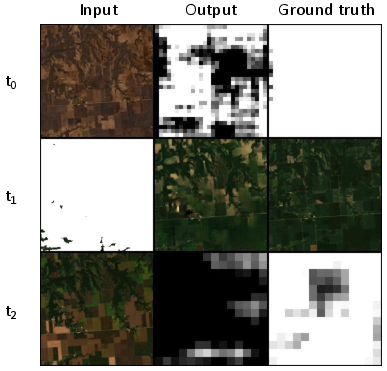

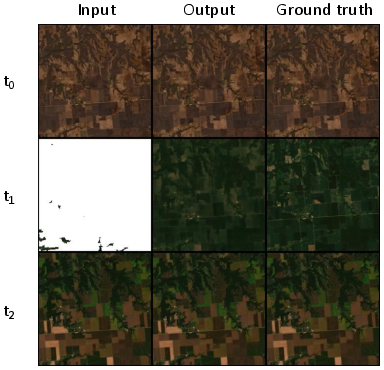

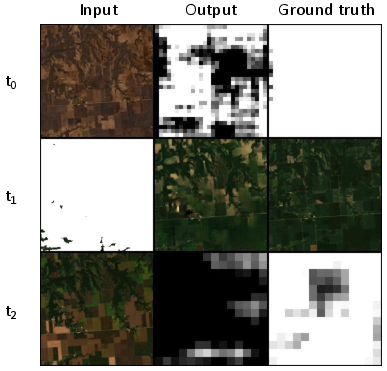

Cloud Gap Imputation

Prithvi outperforms traditional conditional GAN (CGAN) architectures in reconstructing pixel values for cloud-covered areas. It is also more data-efficient and converges faster, as measured by metrics such as the Structural Similarity Index (SSIM).

Figure 3: Prithvi can infer pixel values without access to the date of any of the time steps.
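For readers unfamiliar with SSIM, the sketch below computes a simplified version of it from whole-image statistics. This is an assumption-laden illustration rather than the evaluation code used in the paper: standard SSIM is computed over local sliding windows and then averaged, whereas this version uses a single global window. The constants 0.01 and 0.03 are the conventional SSIM defaults, and the pixel values are made up.

```python
def global_ssim(x, y, data_range=1.0):
    """Simplified SSIM from global means, variances, and covariance.

    `x` and `y` are flat lists of pixel values of equal length.
    """
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and the same length")
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    c1 = (0.01 * data_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * data_range) ** 2  # stabilizes the contrast/structure term
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


# Made-up reflectance values for a reference patch and an imputed patch.
reference = [0.1, 0.4, 0.4, 0.9]
imputed = [0.2, 0.3, 0.5, 0.7]
print(global_ssim(reference, reference))  # approximately 1.0 for identical inputs
print(global_ssim(reference, imputed))    # below 1.0 for differing inputs
```

A score near 1 indicates that the imputed pixels match the reference in brightness, contrast, and structure. Lower scores indicate a poorer reconstruction.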

Flood and Wildfire Scar Mapping

Evaluations on flood mapping with Sentinel data at a 10-meter resolution demonstrate Prithvi's capability to generalize across resolutions and global datasets. Similarly, wildfire scar segmentation is effectively performed, highlighting Prithvi's versatility and efficiency with limited labeling resources.

Discussion

The framework established with Prithvi is valuable for domains where labeled data is sparse. By leveraging self-supervised pretraining, Prithvi adapts robustly to diverse geospatial tasks and sets a precedent for future improvements, such as incorporating global data and multi-scale features during pretraining.

Conclusion

The Prithvi model represents a notable advance in geospatial AI by demonstrating the efficacy of foundation models in Earth sciences. By open-sourcing Prithvi's architecture and weights, the paper makes a significant contribution to the field, with the potential to improve AI applications in remote sensing and climate science. The work shows how self-supervision and efficient data handling support the development of generalist AI models that can address varied geospatial challenges.