- The paper generalizes the Geometric Algebra Transformer by introducing Euclidean and Conformal variants to improve equivariance in 3D data tasks.

- It demonstrates that Conformal and improved Projective variants deliver superior accuracy and sample efficiency in n-body simulations and arterial flow modeling.

- The study shows that while Euclidean variants offer computational efficiency, they require additional adjustments to achieve robust translation invariance.

Introduction

The paper "Euclidean, Projective, Conformal: Choosing a Geometric Algebra for Equivariant Transformers" (2311.04744) generalizes the Geometric Algebra Transformer (GATr) architecture, introducing two new variants based on the Euclidean and conformal algebras alongside the projective geometric algebra used in the original GATr. The paper evaluates the variants both theoretically and empirically in terms of their expressivity, symmetry properties, and computational efficiency.

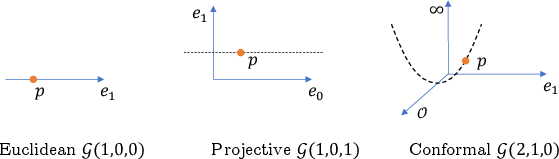

Geometric Algebras Overview

The work uses geometric algebras (GAs), also known as Clifford algebras, to construct transformer architectures that are equivariant to symmetry groups of 3D space. It focuses on three of them: the Euclidean geometric algebra (EGA), the projective geometric algebra (PGA), and the conformal geometric algebra (CGA).
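As a rough orientation, the Euclidean, projective, and conformal algebras for 3D data can be compared by their signature and by the number of components in a multivector. The sketch below uses the standard signatures for these algebras; the names `ALGEBRAS`, `multivector_dim`, and `grade_dims` are illustrative and do not come from the paper.

```python
from math import comb

# (p, q, r) = number of basis vectors that square to +1, -1, and 0.
# Standard signatures for the three 3D algebras; the dictionary itself
# is an illustrative construct, not code from the paper.
ALGEBRAS = {
    "EGA": (3, 0, 0),  # Euclidean
    "PGA": (3, 0, 1),  # projective: one extra null direction
    "CGA": (4, 1, 0),  # conformal: one extra +1 and one extra -1 direction
}


def multivector_dim(signature):
    """A multivector over n basis vectors has 2**n components."""
    return 2 ** sum(signature)


def grade_dims(signature):
    """Number of basis blades in each grade k = 0..n."""
    n = sum(signature)
    return [comb(n, k) for k in range(n + 1)]


for name, sig in ALGEBRAS.items():
    print(name, sig, multivector_dim(sig), grade_dims(sig))
```

The growth from 8 to 16 to 32 components per multivector is one reason the larger algebras cost more per channel.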

The paper extends the original GATr architecture, which was built on the projective algebra, to the Euclidean and conformal algebras as well. This turns the architecture into a blueprint that can be instantiated for a chosen algebra, so that the trade-offs between symmetry, expressivity, and cost can be compared directly on 3D data.

Key modifications include:

- Equivariant linear maps constructed from general principles that apply to each of the algebras.

- Normalization layers adapted to the specific demands of each algebra.

- Distance-based attention mechanisms, which are most natural to implement in CGA.
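To illustrate why distance-based attention fits CGA naturally, recall the standard conformal embedding of a point, under which the inner product of two embedded points equals minus half their squared Euclidean distance. The NumPy sketch below only demonstrates that identity and a plain softmax over the resulting logits; it is not the paper's attention layer, and `embed_point`, `cga_inner`, `distance_attention`, and the `scale` parameter are assumed names.

```python
import numpy as np

# Metric of the five basis vectors (e1, e2, e3, e_plus, e_minus).
METRIC = np.array([1.0, 1.0, 1.0, 1.0, -1.0])


def embed_point(p):
    """Conformal embedding P = e_o + p + 0.5*|p|^2 * e_inf,
    with e_o = (e_minus - e_plus) / 2 and e_inf = e_minus + e_plus."""
    p = np.asarray(p, dtype=float)
    half_sq = 0.5 * np.sum(p * p, axis=-1, keepdims=True)
    # Coefficients on e_plus and e_minus follow from e_o and e_inf above.
    return np.concatenate([p, half_sq - 0.5, half_sq + 0.5], axis=-1)


def cga_inner(P, Q):
    """Pairwise inner products under the (4, 1) metric; result shape (n, m)."""
    return (P * METRIC) @ Q.T


def distance_attention(points, values, scale=1.0):
    """Softmax attention whose logits are -0.5 * scale * squared distance."""
    P = embed_point(points)
    logits = scale * cga_inner(P, P)
    logits = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ values
```

Because the logits depend only on pairwise distances, the attention weights are unchanged when all points are rotated or translated together.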

Theoretical Comparisons

Theoretical assessments highlight differences in expressivity and symmetry handling:

- E-GATr is computationally cheap and has the simplest architecture, but it is equivariant to a smaller symmetry group, which limits its sample efficiency on complex geometric problems.

- P-GATr on its own is not sufficiently expressive; an improved version (iP-GATr) recovers expressivity by integrating join operations and CGA-based enrichments.

- C-GATr combines algebraic simplicity with rich geometric representations, albeit at higher computational cost and with more care needed to keep normalization numerically stable.

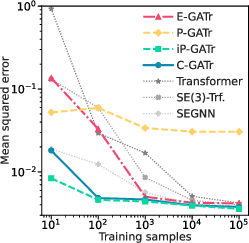

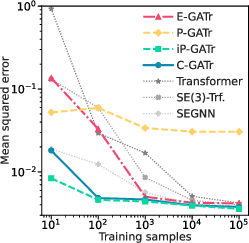

Figure 2: n-body modelling. We show the mean squared error as a function of the number of training samples. We compare E-GATr, P-GATr, iP-GATr, and C-GATr.

Empirical Evaluation

Empirical results from n-body simulations and arterial flow modeling tasks underscore the benefits of these architectural choices:

- C-GATr and iP-GATr demonstrate superior accuracy and sample efficiency, especially in data-limited scenarios, which is attributed to their equivariance under larger symmetry groups.

- E-GATr is the simplest of the variants, but it needs additional handling, such as re-centering the inputs, to achieve translation invariance.
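Re-centering can be sketched as subtracting the centroid of the input points before the network sees them and, for point-valued outputs, adding it back afterwards. This is a minimal sketch of the general idea, not the paper's preprocessing code; `recenter`, `translation_equivariant`, and the `model` argument are hypothetical names.

```python
import numpy as np


def recenter(points):
    """Subtract the centroid so that a global translation cancels out."""
    points = np.asarray(points, dtype=float)
    centroid = points.mean(axis=0, keepdims=True)
    return points - centroid, centroid


def translation_equivariant(model, points):
    """Wrap a point-valued model (hypothetical `model`) that is not itself
    translation-aware: run it on centered inputs, then shift the output back."""
    centered, centroid = recenter(points)
    return model(centered) + centroid
```

With this wrapper, shifting every input point by the same vector shifts the predictions by that vector, regardless of how `model` treats translations internally.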

Conclusion

This work systematically explores how the choice of geometric algebra affects equivariant transformer architectures. It provides benchmarks and theoretical grounding for adopting the CGA and improved PGA architectures when higher expressivity and practical performance are needed on 3D data. While E-GATr provides a lightweight alternative, the strongest performance comes from the architectures that use the full expressivity of CGA and the improved PGA. The paper sets the stage for future work on scalable, efficient, and expressive geometric deep learning systems.