- The paper presents CharacterGLM, a framework that integrates configurable attributes and behaviors to create personalized conversational AI characters.

- It employs novel dialogue data collection, supervised fine-tuning, and iterative self-refinement to enhance model performance.

- Evaluation shows CharacterGLM outperforms GPT-3.5 in consistency, human-likeness, and engagement in long dialogue contexts.

CharacterGLM: Customizing Chinese Conversational AI Characters with LLMs

Introduction

This paper integrates customizable characters into conversational AI through the CharacterGLM framework. Built on ChatGLM, the model series ranges from 6B to 66B parameters and is adapted for generating character-based dialogues (CharacterDial). Users configure characteristics such as identities, interests, and interaction patterns to create personalized AI characters. These customizations are meant to serve human emotional and social needs, and the models aim to outperform mainstream LLMs such as GPT-3.5 in consistency, human-likeness, and engagement.

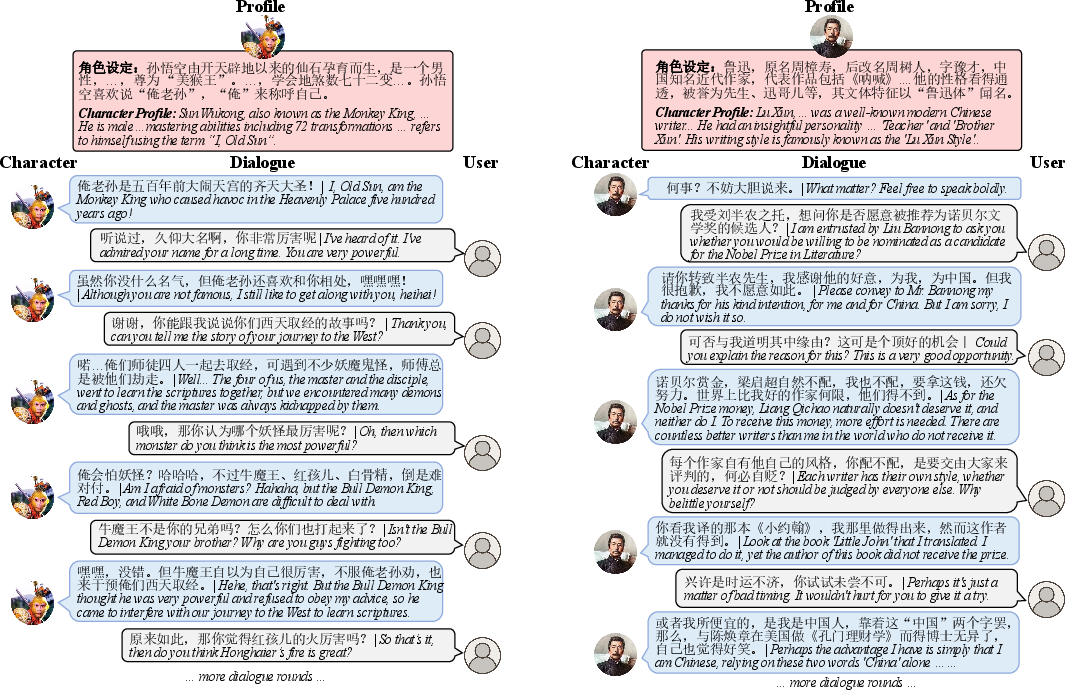

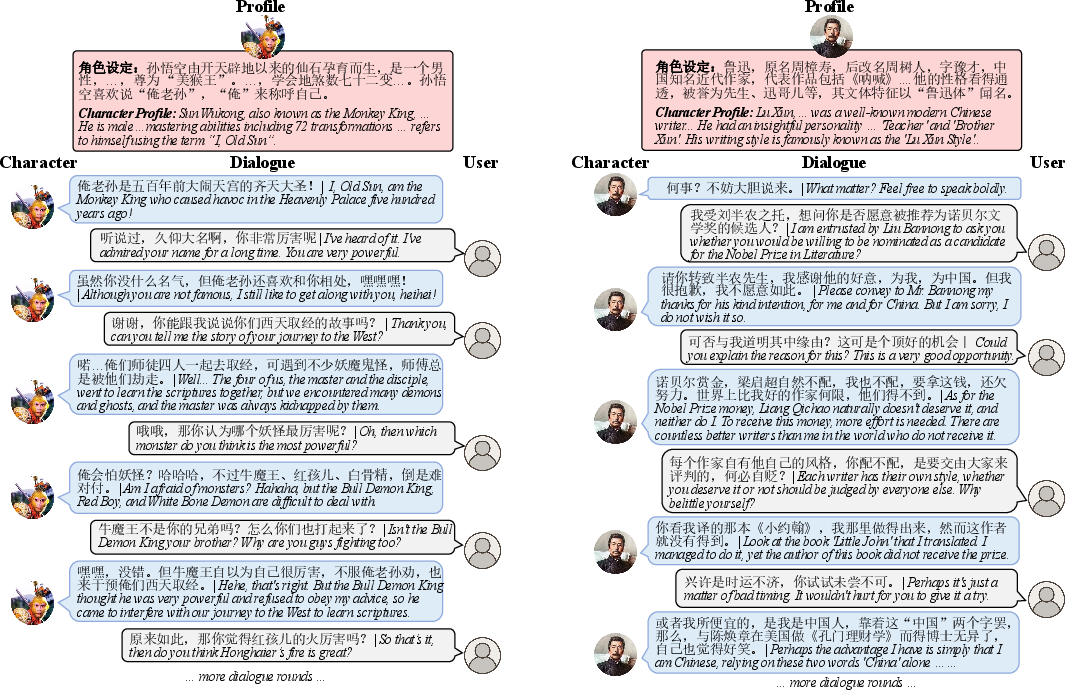

Figure 1: Examples of multi-turn character-based dialogue (CharacterDial) between characters customized by CharacterGLM and users. CharacterDial allows users to converse with personalized characters by configuring their profiles.

Design Principles and Architecture

CharacterGLM is designed to simulate nuanced, human-like interactions by separating core elements into two primary categories: attributes and behaviors. Attributes encapsulate static features like identity and interests, while behaviors refer to dynamic interaction patterns such as linguistic style and emotional expression.
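
The attribute/behavior split can be pictured as a small data structure. The following is a minimal sketch, not the paper's schema: the class names, field names, and the `describe` helper are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Attributes:
    """Static features of a character (field names are illustrative)."""
    name: str
    identity: str
    interests: List[str] = field(default_factory=list)


@dataclass
class Behaviors:
    """Dynamic interaction patterns (field names are illustrative)."""
    linguistic_style: str
    emotional_expression: str


@dataclass
class CharacterProfile:
    """Hypothetical container pairing static attributes with dynamic behaviors."""
    attributes: Attributes
    behaviors: Behaviors

    def describe(self) -> str:
        """Flatten the profile into one natural-language description."""
        interests = ", ".join(self.attributes.interests) or "nothing in particular"
        return (
            f"{self.attributes.name} is {self.attributes.identity} "
            f"who enjoys {interests}. "
            f"Speaking style: {self.behaviors.linguistic_style}. "
            f"Emotional expression: {self.behaviors.emotional_expression}."
        )


# Made-up example character, not taken from the CharacterDial corpus.
profile = CharacterProfile(
    attributes=Attributes("Lin", "a retired chess coach", ["chess", "tea"]),
    behaviors=Behaviors("calm and concise", "warm but reserved"),
)
print(profile.describe())
```

Keeping the two groups in separate classes mirrors the distinction drawn above: attributes change rarely, while behaviors describe how the character expresses itself from turn to turn.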

The design targets three qualities: consistency in maintaining character personas, human-likeness in response generation, and an engaging dialogue flow. To achieve this, CharacterGLM draws character profiles from the CharacterDial corpus, which covers several contexts, including celebrity, daily-life, and virtual-love dialogues.

Implementation

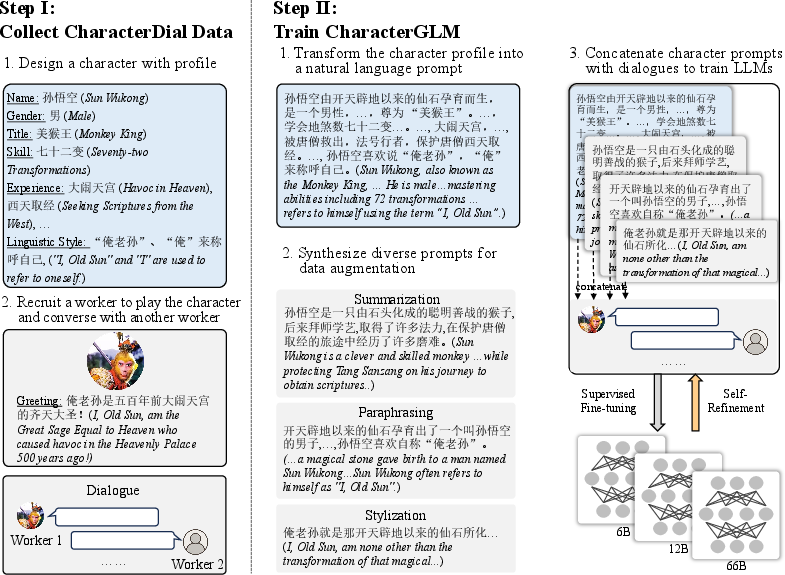

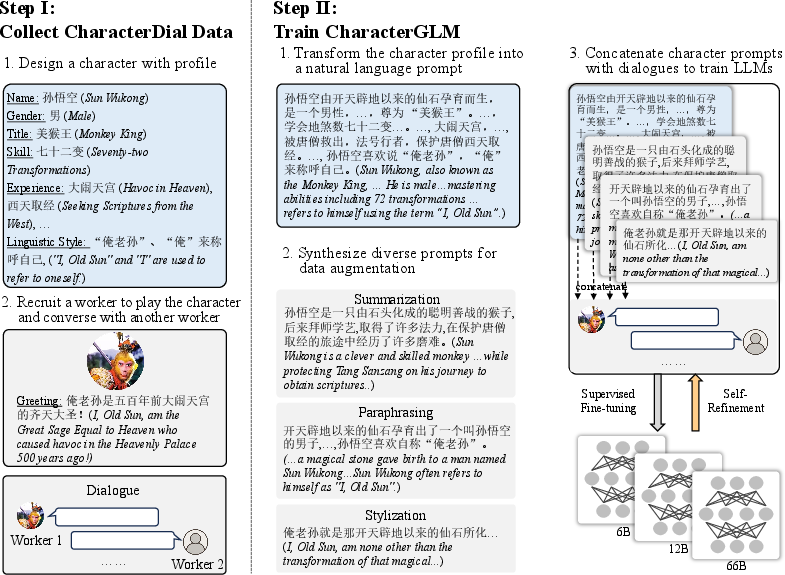

The implementation of CharacterGLM centers on dialogue data collection, model training, and self-refinement. Figure 2 outlines the pipeline, which involves several stages:

- Dialogue Data Collection: Contributions to the CharacterDial corpus come from human role-playing, synthesis via LLMs like GPT-4, and extractions from literary resources. Both manual and AI-assisted data curation ensure high-quality dialogue interactions.

- Training and Fine-tuning: CharacterGLM undergoes supervised fine-tuning with character prompts generated from diverse profiles. The prompts are augmented through summarization and paraphrasing to improve the model's generalizability.

- Self-Refinement: Following the approach used for LaMDA, CharacterGLM is improved iteratively after deployment, using user feedback from human-prototype interaction cycles to refine its adaptive capabilities.
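
The fine-tuning stage above can be sketched as turning one profile plus one dialogue into several training examples. This is an illustrative sketch under stated assumptions: the two hand-written templates are a simple stand-in for the summarization and paraphrasing step (the paper's exact procedure is not reproduced here), and the function names and dictionary keys are hypothetical.

```python
from typing import Dict, List

# Hypothetical templates standing in for paraphrased character prompts.
PROMPT_TEMPLATES = [
    "You are {name}, {identity}. You like {interests}. You speak in a {style} way.",
    "Play the role of {name} ({identity}). Interests: {interests}. Tone: {style}.",
]


def build_character_prompts(profile: Dict[str, object]) -> List[str]:
    """Render one profile into several differently worded character prompts."""
    fields = {
        "name": profile["name"],
        "identity": profile["identity"],
        "interests": ", ".join(profile["interests"]),
        "style": profile["style"],
    }
    return [template.format(**fields) for template in PROMPT_TEMPLATES]


def build_sft_examples(profile: Dict[str, object],
                       dialogue: List[Dict[str, str]]) -> List[Dict[str, object]]:
    """Create one example per prompt variant and per character turn.

    Each example holds the character prompt, the dialogue history before
    the turn, and the character's reply as the training target.
    """
    examples = []
    for prompt in build_character_prompts(profile):
        for i, turn in enumerate(dialogue):
            if turn["role"] != "character":
                continue
            examples.append(
                {"prompt": prompt, "history": dialogue[:i], "target": turn["text"]}
            )
    return examples


# Made-up profile and dialogue, for illustration only.
profile = {
    "name": "Lin",
    "identity": "a retired chess coach",
    "interests": ["chess", "tea"],
    "style": "calm",
}
dialogue = [
    {"role": "user", "text": "Hi, are you free for a game?"},
    {"role": "character", "text": "Always. Black or white?"},
    {"role": "user", "text": "White, please."},
    {"role": "character", "text": "Then the first move is yours."},
]
examples = build_sft_examples(profile, dialogue)
print(len(examples))
```

Varying the wording of the prompt while keeping the dialogue fixed is one simple way to discourage a model from overfitting to a single prompt format.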

Figure 2: The implementation of our CharacterGLM, including dialogue data collection and CharacterGLM training.

Evaluation

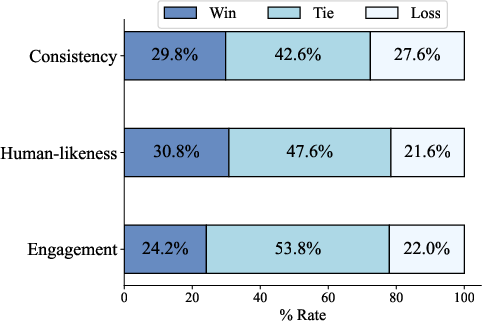

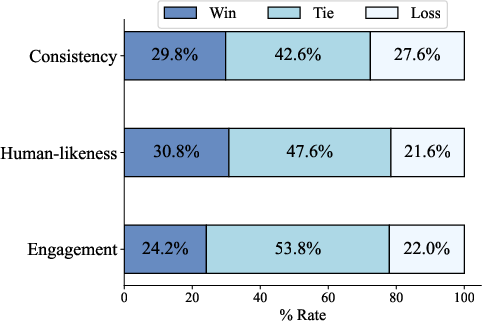

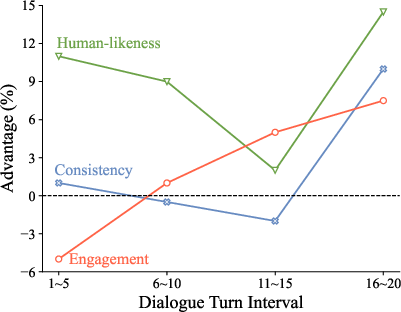

The evaluation of CharacterGLM shows promising results against peers such as GPT-3.5 and MiniMax across several dimensions. Figure 3 presents a pairwise assessment in which CharacterGLM surpasses GPT-3.5 in consistency, engagement, and human-likeness.
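
A pairwise assessment of this kind is typically summarized as win, tie, and lose rates per dimension. The sketch below shows one way to compute such rates. The label set, the function name, and the toy judgments are assumptions made for illustration; they are not the paper's evaluation protocol or its data.

```python
from collections import Counter
from typing import Dict, Iterable

# Assumed label set for a single pairwise judgment.
LABELS = ("win", "tie", "lose")


def pairwise_rates(judgments: Iterable[str]) -> Dict[str, float]:
    """Return the fraction of win, tie, and lose labels in a list of judgments."""
    judgments = list(judgments)
    if not judgments:
        raise ValueError("at least one judgment is required")
    unknown = set(judgments) - set(LABELS)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    counts = Counter(judgments)
    return {label: counts[label] / len(judgments) for label in LABELS}


# Toy judgments invented for this example; they do not reflect reported results.
toy_judgments = {
    "consistency": ["win", "win", "tie", "lose"],
    "human-likeness": ["win", "tie", "tie", "lose"],
    "engagement": ["win", "win", "win", "lose"],
}
for dimension, labels in toy_judgments.items():
    print(dimension, pairwise_rates(labels))
```

Reporting ties separately, rather than folding them into wins or losses, keeps the comparison honest when annotators see no clear difference between two responses.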

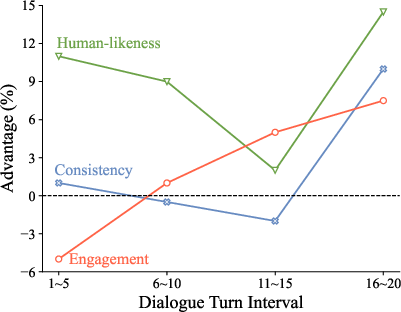

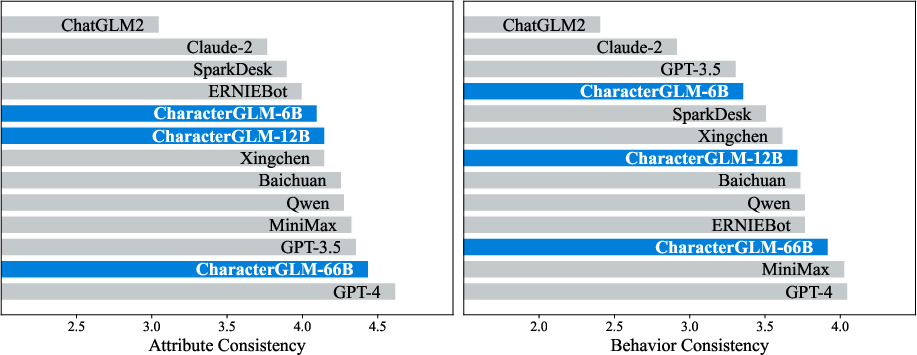

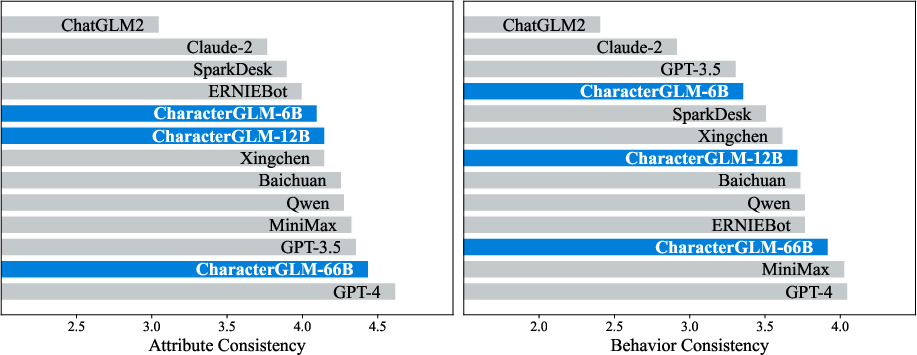

Additionally, CharacterGLM performs better in longer dialogue contexts, addressing the limitations of traditional LLMs in long-range coherence and user engagement. Figure 4 reports attribute and behavior consistency scores, highlighting CharacterGLM's ability to maintain coherent character profiles during complex interactions.

Figure 3: Pair-wise comparison results of CharacterGLM over GPT-3.5 in consistency, human-likeness, and engagement.

Figure 4: The results of attribute consistency and behavior consistency.

Implications and Future Directions

The implications of CharacterGLM extend beyond technical improvements. The successful customization and deployment of AI characters suggest potential applications in areas requiring in-depth emotional intelligence and long-term interaction capabilities, such as education, therapy, and virtual companionship.

Future research should explore enhancing CharacterGLM's capacity for long-term memory, self-awareness, and social interaction between characters. Additionally, extending cognitive processing beyond text mimicry to incorporate theory-of-mind capabilities could yield more socially intelligent AI models.

Conclusion

CharacterGLM represents a significant advancement in personalized conversational AI, focusing on embedding realistic and human-like attributes and behaviors within AI characters. By combining static and dynamic character traits, the CharacterGLM series exhibits superior dialogue consistency, engagement, and naturalness while providing a foundational framework for emerging applications in AI-driven social interactions.