BPF-oF: Storage Function Pushdown Over the Network

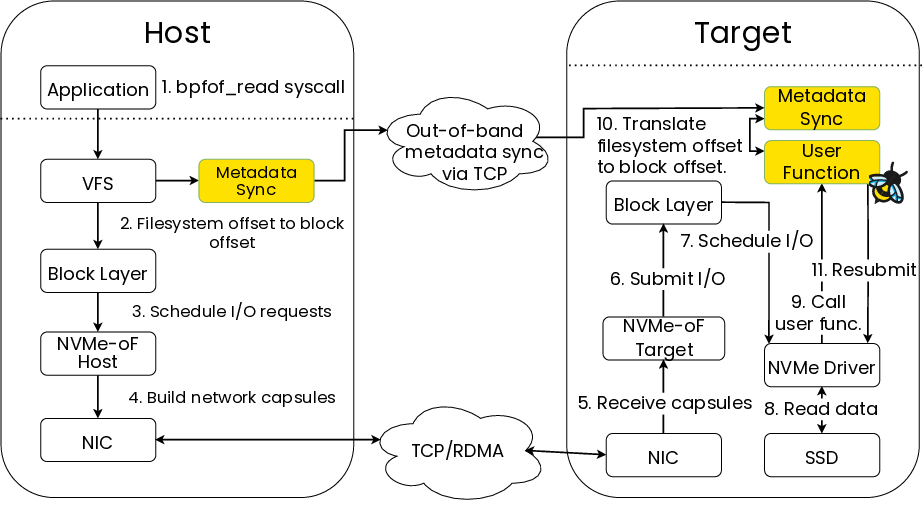

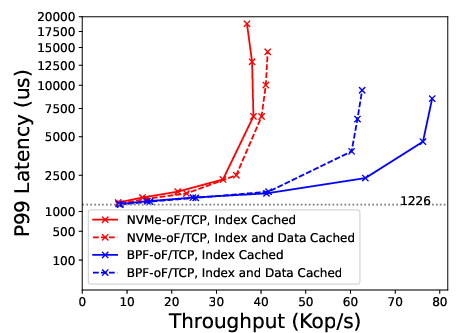

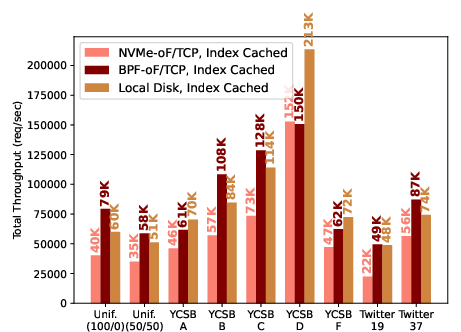

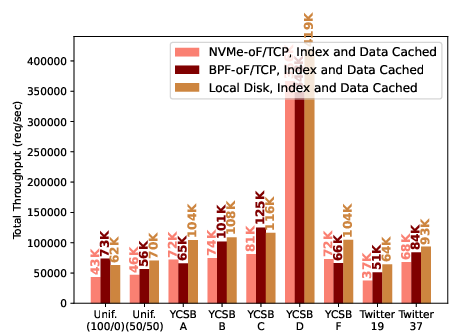

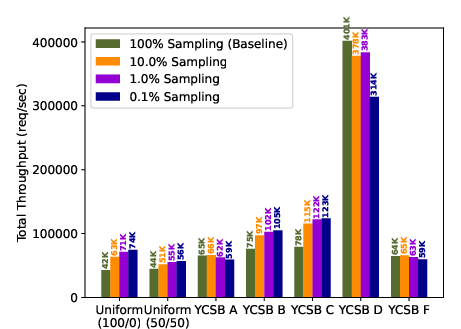

Abstract: Storage disaggregation, in which storage is accessed over the network, is popular because it allows applications to scale storage capacity and bandwidth independently in response to changing application demand. However, the network processing that disaggregation adds can consume significant CPU resources. In many storage systems, logical storage operations (e.g., lookups, aggregations) consist of a series of simple but dependent I/O accesses. One way to reduce the network processing overhead is therefore to execute these dependent I/O chains at the remote storage server, cutting the back-and-forth communication between the storage layer and the application. We refer to this approach as *remote-storage pushdown*. We present BPF-oF, a new remote-storage pushdown protocol built on top of NVMe-oF that enables applications to safely push custom eBPF storage functions to a remote storage server. The main challenge in integrating BPF-oF with storage systems is preserving the benefits of their client-side in-memory caches. We address this challenge with novel caching techniques for storage pushdown, including splitting queries into separate in-memory and remote-storage phases and periodically refreshing the client cache with sampled accesses from the remote storage device. We demonstrate the utility of BPF-oF by integrating it with three storage systems, including RocksDB, a popular persistent key-value store that has no existing storage pushdown capability. When these systems are accessed over the network, BPF-oF provides significant speedups in all three; for example, it improves RocksDB's throughput by up to 2.8× and its tail latency by up to 2.6×.
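
The round-trip argument and the two caching techniques described above can be illustrated with a small Python model. This is a sketch under stated assumptions, not BPF-oF itself: a real storage function would be an eBPF program run by the server's NVMe-oF target, whereas here a Python callable (`traverse`) stands in for it and a dict of `Block`s stands in for the device. Every name (`Block`, `RemoteServer`, `run_pushdown`, `lookup_split_phase`, `fetch_samples`, `sample_every`) is hypothetical. Recording every third server-side read is an assumed deterministic placeholder, since the abstract does not specify the sampling policy.

```python
"""Toy round-trip model of remote-storage pushdown (hypothetical; not BPF-oF's API)."""
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set

@dataclass
class Block:
    # Internal block: separator keys plus child ids (len(children) == len(keys) + 1).
    # Leaf block: no children; key/value pairs live in `entries`.
    keys: List[int] = field(default_factory=list)
    children: List[int] = field(default_factory=list)
    entries: Dict[int, str] = field(default_factory=dict)

    def is_leaf(self) -> bool:
        return not self.children

def next_block(block: Block, key: int) -> int:
    """The dependent step: the next block id is known only after reading this one."""
    for sep, child in zip(block.keys, block.children):
        if key < sep:
            return child
    return block.children[-1]

def traverse(read: Callable[[int], Block], block_id: int, key: int) -> Optional[str]:
    """Stand-in for a pushed storage function: chase child pointers down to a leaf."""
    block = read(block_id)
    while not block.is_leaf():
        block = read(next_block(block, key))
    return block.entries.get(key)

class RemoteServer:
    """Simulated storage server; each client request costs one network round trip."""

    def __init__(self, device: Dict[int, Block], sample_every: int = 3):
        self.device = device
        self.sample_every = sample_every
        self.round_trips = 0
        self._local_reads = 0
        self.sampled: Set[int] = set()

    def read_block(self, block_id: int) -> Block:
        self.round_trips += 1  # plain remote read: one round trip per block
        return self.device[block_id]

    def _local_read(self, block_id: int) -> Block:
        # Server-local read used by pushed functions; every `sample_every`-th
        # access is recorded so the client can later refresh its cache.
        self._local_reads += 1
        if self._local_reads % self.sample_every == 0:
            self.sampled.add(block_id)
        return self.device[block_id]

    def run_pushdown(self, fn: Callable[..., Optional[str]], start: int, key: int) -> Optional[str]:
        self.round_trips += 1  # the whole dependent chain runs next to the data
        return fn(self._local_read, start, key)

    def fetch_samples(self) -> Dict[int, Block]:
        self.round_trips += 1  # one round trip ships the sampled blocks back
        blocks = {bid: self.device[bid] for bid in self.sampled}
        self.sampled.clear()
        return blocks

def lookup_client_side(server: RemoteServer, root: int, key: int) -> Optional[str]:
    """No pushdown: the client chases the chain, paying one round trip per block."""
    return traverse(server.read_block, root, key)

def lookup_split_phase(server: RemoteServer, cache: Dict[int, Block], root: int, key: int) -> Optional[str]:
    """Phase 1 walks cached blocks in memory; phase 2 pushes the remainder down."""
    block_id = root
    while block_id in cache:
        block = cache[block_id]
        if block.is_leaf():
            return block.entries.get(key)
        block_id = next_block(block, key)
    return server.run_pushdown(traverse, block_id, key)

def build_device() -> Dict[int, Block]:
    # Three-level tree: root 0 -> internal blocks 1, 2 -> leaves 3..6.
    return {
        0: Block(keys=[100], children=[1, 2]), 1: Block(keys=[50], children=[3, 4]),
        2: Block(keys=[150], children=[5, 6]), 3: Block(entries={10: "a"}),
        4: Block(entries={60: "b"}), 5: Block(entries={120: "c"}), 6: Block(entries={170: "d"}),
    }

if __name__ == "__main__":
    device = build_device()
    server = RemoteServer(device)
    print(lookup_client_side(server, 0, 120), server.round_trips)  # c 3
    server.round_trips = 0
    cache = {0: device[0]}  # client cache starts with only the root block
    for k in (120, 170, 120):
        lookup_split_phase(server, cache, 0, k)
    print(server.round_trips, sorted(server.sampled))  # 3 [2, 5]
    cache.update(server.fetch_samples())  # periodic refresh from sampled accesses
    server.round_trips = 0
    print(lookup_split_phase(server, cache, 0, 120), server.round_trips)  # c 0
```

In this toy tree, a client-side lookup pays one round trip per level (three), a split-phase lookup pays a single round trip once the cached prefix runs out, and after a refresh installs the sampled blocks the same lookup is served entirely from memory. The model deliberately ignores costs such as server CPU time and the size of the blocks shipped back during a refresh.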
