RAG vs Fine-tuning: Pipelines, Tradeoffs, and a Case Study on Agriculture

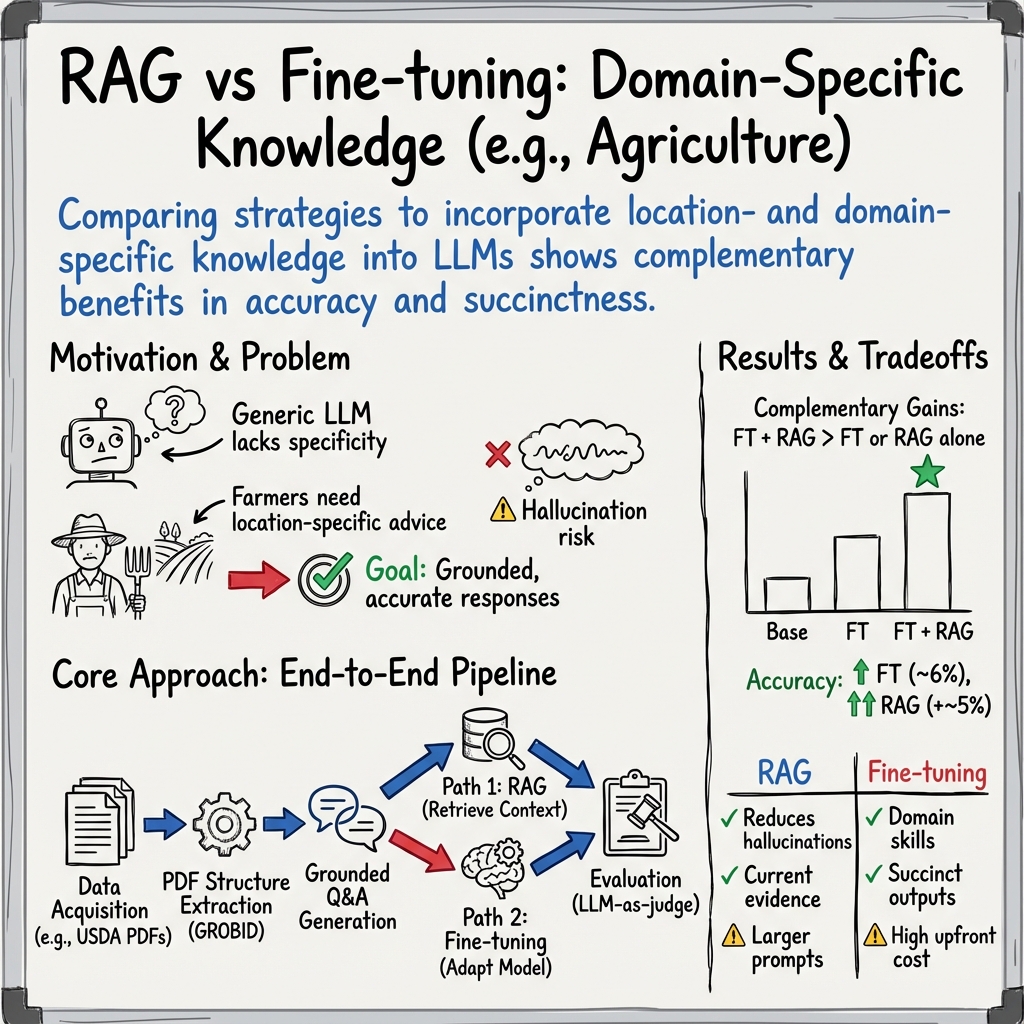

Abstract: There are two common ways in which developers are incorporating proprietary and domain-specific data when building applications of LLMs: Retrieval-Augmented Generation (RAG) and Fine-Tuning. RAG augments the prompt with the external data, while fine-tuning incorporates the additional knowledge into the model itself. However, the pros and cons of both approaches are not well understood. In this paper, we propose a pipeline for fine-tuning and RAG, and present the tradeoffs of both for multiple popular LLMs, including Llama2-13B, GPT-3.5, and GPT-4. Our pipeline consists of multiple stages, including extracting information from PDFs, generating questions and answers, using them for fine-tuning, and leveraging GPT-4 for evaluating the results. We propose metrics to assess the performance of different stages of the RAG and fine-tuning pipeline. We conduct an in-depth study on an agricultural dataset. Agriculture as an industry has not seen much penetration of AI, and we study a potentially disruptive application - what if we could provide location-specific insights to a farmer? Our results show the effectiveness of our dataset generation pipeline in capturing geographic-specific knowledge, and the quantitative and qualitative benefits of RAG and fine-tuning. We see an accuracy increase of over 6 p.p. when fine-tuning the model and this is cumulative with RAG, which increases accuracy by 5 p.p. further. In one particular experiment, we also demonstrate that the fine-tuned model leverages information from across geographies to answer specific questions, increasing answer similarity from 47% to 72%. Overall, the results point to how systems built using LLMs can be adapted to respond and incorporate knowledge across a dimension that is critical for a specific industry, paving the way for further applications of LLMs in other industrial domains.

Explain it Like I'm 14

Overview

This paper looks at two popular ways to teach LLMs new, specialized knowledge so they can answer real-world questions better:

- Retrieval-Augmented Generation (RAG): like letting the model “look things up” in trusted documents while it answers.

- Fine-tuning: like giving the model extra classes so it learns the new information and remembers it on its own.

The authors build a full “pipeline” (a step-by-step process) to compare these two methods, test them on real agriculture data, and see which works best, when, and why.

What did the researchers want to find out?

In simple terms, they asked:

- How can we best add industry-specific knowledge (like farming advice for a specific state) to an LLM?

- When is RAG better, and when is fine-tuning better?

- Can we combine RAG and fine-tuning to get even better results?

- How well do popular models (like GPT-4 and Llama 2) handle location-specific farm questions?

- Can we build a repeatable process for collecting documents, creating good questions and answers, and testing results fairly?

How did they do it?

They built a pipeline—think of it as a production line—that turns raw documents into useful training and testing data for LLMs, then measures how well the models perform.

Step 1: Collect the right documents

They gathered high-quality, trusted agriculture documents from the USA, Brazil, and India. Examples include government guides, expert Q&A, and farming advice pages. They focused on material that’s specific to locations (like Washington state or Rajasthan), because farmers need answers tailored to their area.

Step 2: Read and organize messy PDFs

PDFs are made for reading, not for easy copying. The team used tools to pull out the text, tables, figures, and sections in a structured way. This is like turning a scanned book into a clean, searchable outline with chapters and captions, so models can understand what goes where.

- Tool idea: GROBID turns PDFs into structured TEI XML files (which can then be converted to JSON), preserving sections, tables, references, and more.
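
To make the "structured outline" idea concrete, here is a minimal Python sketch that reads TEI XML and pulls out section headings with their paragraph text. It assumes the PDF has already been converted to TEI; the `SAMPLE_TEI` fragment and the `extract_sections` helper are hypothetical illustrations, not the authors' code, and real GROBID output contains many more elements than this toy input.

```python
import xml.etree.ElementTree as ET

# TEI documents use this XML namespace.
TEI_NS = {"tei": "http://www.tei-c.org/ns/1.0"}

# Hypothetical, hand-written TEI fragment standing in for real tool output.
SAMPLE_TEI = """<TEI xmlns="http://www.tei-c.org/ns/1.0">
  <text>
    <body>
      <div><head>Irrigation</head><p>Drip irrigation saves water.</p><p>Schedule by soil moisture.</p></div>
      <div><head>Pests</head><p>Scout fields weekly.</p></div>
    </body>
  </text>
</TEI>"""


def extract_sections(tei_xml):
    """Return one {"heading", "text"} dict per top-level <div> in the TEI body."""
    root = ET.fromstring(tei_xml)
    sections = []
    for div in root.iterfind(".//tei:body/tei:div", TEI_NS):
        head = div.find("tei:head", TEI_NS)
        heading = head.text.strip() if head is not None and head.text else ""
        # Join all paragraph text in the section into one string.
        paragraphs = ["".join(p.itertext()).strip() for p in div.findall("tei:p", TEI_NS)]
        sections.append({"heading": heading, "text": " ".join(paragraphs)})
    return sections


sections = extract_sections(SAMPLE_TEI)
for section in sections:
    print(section["heading"], "->", section["text"])
```

Keeping the heading next to its text is what lets later steps generate questions per section rather than per page.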

Step 3: Create good questions

They asked an LLM to write questions based on each document section, guided by simple rules (for example, include locations and crops mentioned). This ensures questions match the source content and cover important topics.
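
As a rough illustration of rule-guided question generation, the sketch below assembles a prompt from a document section plus the locations and crops it mentions. The wording of the rules, the `build_question_prompt` name, and its parameters are all assumptions made for this example; the paper's actual prompts are not reproduced here, and the call to an LLM is left out.

```python
def build_question_prompt(section_text, locations, crops, num_questions=3):
    """Build a question-generation prompt (hypothetical wording) for one section."""
    rules = [
        f"Write {num_questions} questions that can be answered using only the text below.",
        "Do not ask the same question twice.",
    ]
    # Only add a location or crop rule when the section actually mentions any.
    if locations:
        rules.append("Mention the relevant location in each question: " + ", ".join(locations) + ".")
    if crops:
        rules.append("Mention the relevant crop in each question: " + ", ".join(crops) + ".")
    rule_lines = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return f"Rules:\n{rule_lines}\n\nText:\n{section_text}"


prompt = build_question_prompt(
    "Winter wheat in eastern Washington is usually seeded in early fall.",
    locations=["Washington"],
    crops=["winter wheat"],
)
print(prompt)
```

Putting the location and crop into the rules is one simple way to push the generated questions toward the location-specific focus described above.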

Step 4: Generate answers with RAG

For each question, the system first “retrieves” the most relevant text chunks from the collected documents (like doing a smart search), then the LLM writes an answer using those chunks as evidence. This helps keep answers accurate and grounded in the documents.

- Simple analogy: turning text into numbers (called “embeddings”) so the system can quickly find similar passages—like finding matching puzzle pieces.
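
The retrieve-then-answer flow can be sketched in a few lines of Python. This is a toy version under stated assumptions: `embed` uses plain word counts as a stand-in for a learned embedding model, the three chunks are invented examples, and `build_rag_prompt` only assembles the prompt (the LLM call itself is omitted).

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k=2):
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_rag_prompt(question, chunks, k=2):
    """Put the retrieved chunks in front of the question as evidence."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Invented example chunks, for illustration only.
chunks = [
    "Winter wheat in Washington is usually planted in September.",
    "Rice in Punjab is transplanted in June.",
    "Soybean planting in Mato Grosso starts in October.",
]
question = "When is winter wheat planted in Washington?"
print(build_rag_prompt(question, chunks, k=1))
```

With `k=1`, the Washington wheat chunk is the only context passed along, so the model answers from the passage that matches the question's crop and location.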

Step 5: Fine-tune models with the Q&A data

They trained models (like Llama 2) on the new Q&A pairs so the models internalize agriculture knowledge. For a big model like GPT-4, they used an efficient method called LoRA (Low-Rank Adaptation), which is like updating only a few “knobs” inside the model instead of retraining everything.
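
The "few knobs" idea can be made concrete with a parameter count. LoRA keeps a weight matrix of shape `d_out x d_in` frozen and trains two small matrices, `B` (`d_out x r`) and `A` (`r x d_in`), whose product is added to the frozen weights. The sketch below compares the number of trainable values; the layer size and rank are hypothetical and are not taken from the paper.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable values for full fine-tuning vs. LoRA on one weight matrix."""
    full = d_out * d_in            # every entry of W is updated
    lora = rank * (d_out + d_in)   # only B (d_out x r) and A (r x d_in) are updated
    return full, lora, lora / full


# Hypothetical layer size and rank, chosen only for illustration.
full, lora, fraction = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(f"full: {full:,}  lora: {lora:,}  fraction: {fraction:.4%}")
```

For this example layer, LoRA trains 65,536 values instead of 16,777,216, which is why it is attractive for very large models.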

Step 6: Evaluate with clear metrics

They measured both question quality and answer quality, including:

- Relevance to the source text and location

- Correctness and clarity

- Diversity (not asking the same question repeatedly)

- Whether the question can be answered from the given content

They also used GPT-4 as a judge in some evaluations to compare responses.
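
One of these metrics, diversity, is easy to approximate in code. The sketch below scores a set of questions by their average pairwise Jaccard distance over word sets: 0 means every question uses the same words, 1 means no two questions share a word. This is an assumed stand-in chosen for simplicity, not the metric definition used in the paper.

```python
import re
from itertools import combinations


def tokens(question):
    """Lowercased set of words in a question."""
    return set(re.findall(r"[a-z0-9]+", question.lower()))


def jaccard_distance(a, b):
    """1 minus (shared words / all words) for two questions."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    if not union:
        return 0.0
    return 1.0 - len(ta & tb) / len(union)


def diversity(questions):
    """Mean pairwise Jaccard distance; 0.0 when there are fewer than two questions."""
    pairs = list(combinations(questions, 2))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


# Invented example questions, for illustration only.
questions = [
    "When should winter wheat be planted in Washington?",
    "When should winter wheat be planted in Washington?",
    "Which pests affect rice in Punjab?",
]
print(round(diversity(questions), 3))
```

A low score flags a question set that keeps asking the same thing, which is the failure mode the diversity check is meant to catch.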

What did they find?

- Both RAG and fine-tuning help, in different ways:

  - Fine-tuning gave an accuracy boost of over 6 percentage points.

  - Adding RAG on top of that gave another 5 percentage points.

  - Together, they improved performance more than either one alone.

- Fine-tuning helps the model learn new, domain-specific skills (like agriculture terms and practices) and produce more precise, shorter answers.

- RAG is especially useful when answers depend on specific context—such as local climate or crop rules—because it pulls in the most relevant source passages at answer time.

- The fine-tuned model could use knowledge from different places to answer a location-focused question better. In one test, answer similarity to the expert standard improved from 47% to 72%.

- GPT-4 generally performed best, but it’s more expensive to fine-tune and run. Cost matters when choosing a solution.

- Many general-purpose LLMs give generic answers that don’t fit a particular state or region. With this pipeline, answers become much more location-aware and useful to farmers.
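
The gains above are stated in percentage points, which add directly, rather than in relative percent. The short sketch below shows the difference. The 75% baseline is a hypothetical number picked for the arithmetic, and the fine-tuning gain is taken as exactly 6 points even though the reported gain is "over 6"; only the 47% and 72% similarity figures come from the text.

```python
def relative_change_pct(before, after):
    """Relative change between two percentages, expressed in percent."""
    return (after - before) / before * 100


baseline = 75.0                   # hypothetical starting accuracy, in percent
fine_tuned = baseline + 6.0       # fine-tuning: at least +6 percentage points
fine_tuned_rag = fine_tuned + 5.0 # RAG on top: +5 more percentage points

print(fine_tuned, fine_tuned_rag)

# Answer similarity figures from the text: 47% to 72%.
similarity_gain_pp = 72.0 - 47.0
similarity_gain_rel = relative_change_pct(47.0, 72.0)
print(similarity_gain_pp, round(similarity_gain_rel, 1))
```

Under these assumptions the combined gain is 11 percentage points, while the similarity jump of 25 points corresponds to a relative improvement of roughly 53%.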

Why does this matter?

- For farmers: Getting advice that fits their exact crop and location can improve yields, save money, and reduce mistakes. Instead of a one-size-fits-all answer, they get practical, local guidance.

- For industry: The same pipeline can be used in other fields—like healthcare, finance, or manufacturing—where answers need to be accurate, concise, and grounded in trusted documents.

- For AI builders: The paper shows a practical way to collect data, generate Q&A, train models, and measure quality. It also explains tradeoffs:

  - RAG is flexible and keeps answers tied to sources.

  - Fine-tuning teaches the model new knowledge and skills.

  - Using both can be even better, but costs and complexity must be managed.

In short, the research shows how to adapt LLMs to real-world, location-specific needs and highlights a clear path to building helpful AI “copilots” across many industries.
