Enhancing Q&A with Domain-Specific Fine-Tuning and Iterative Reasoning: A Comparative Study

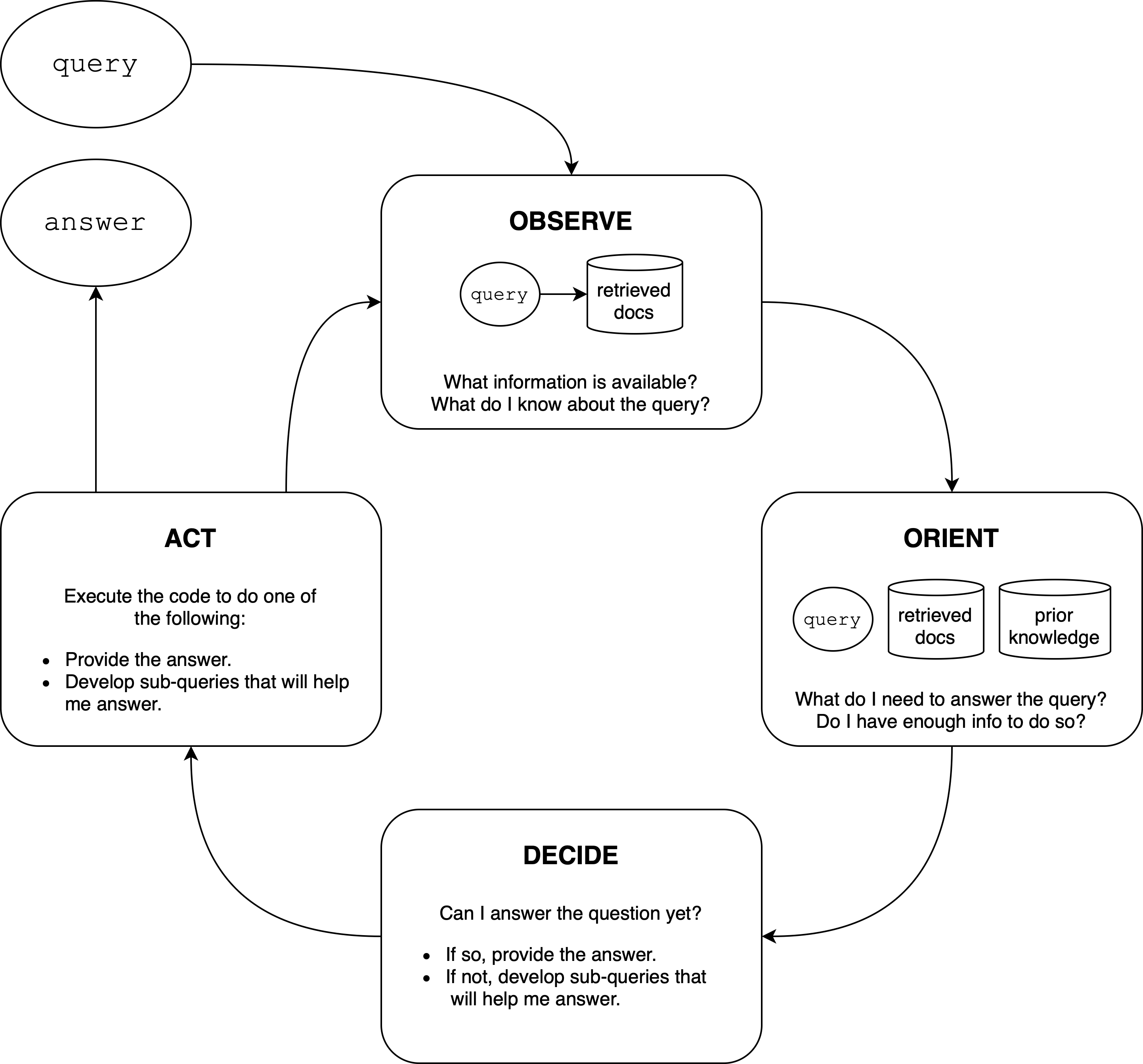

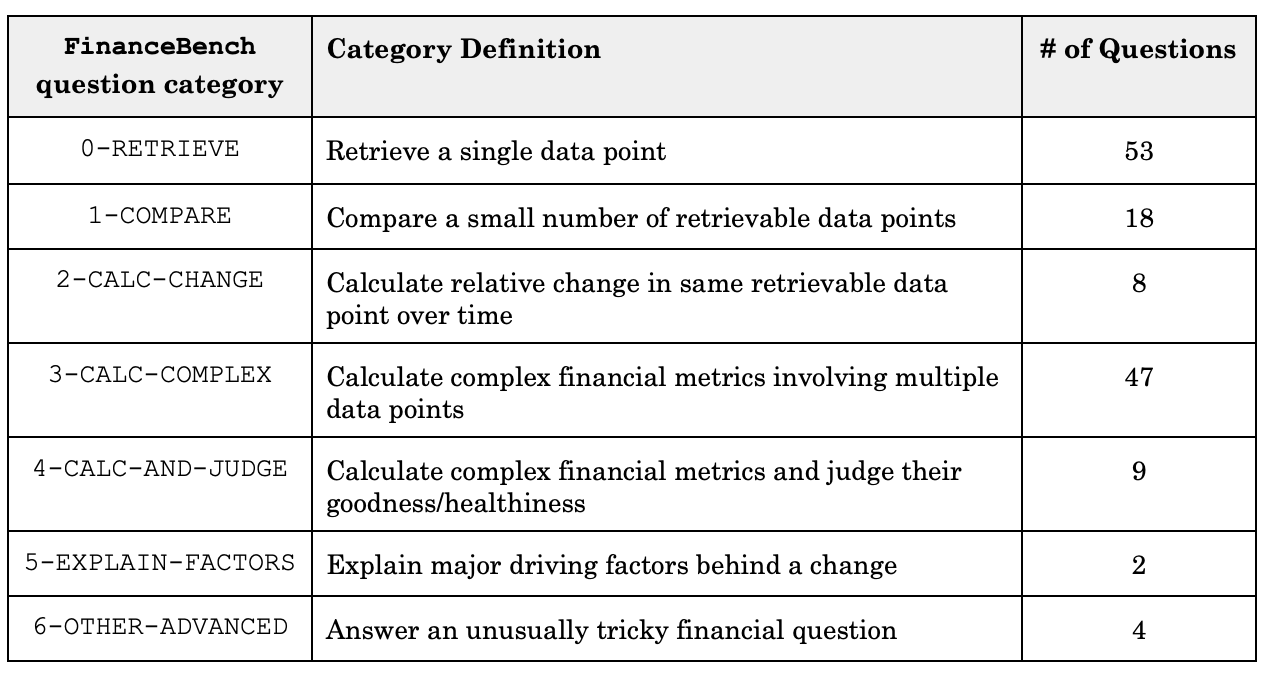

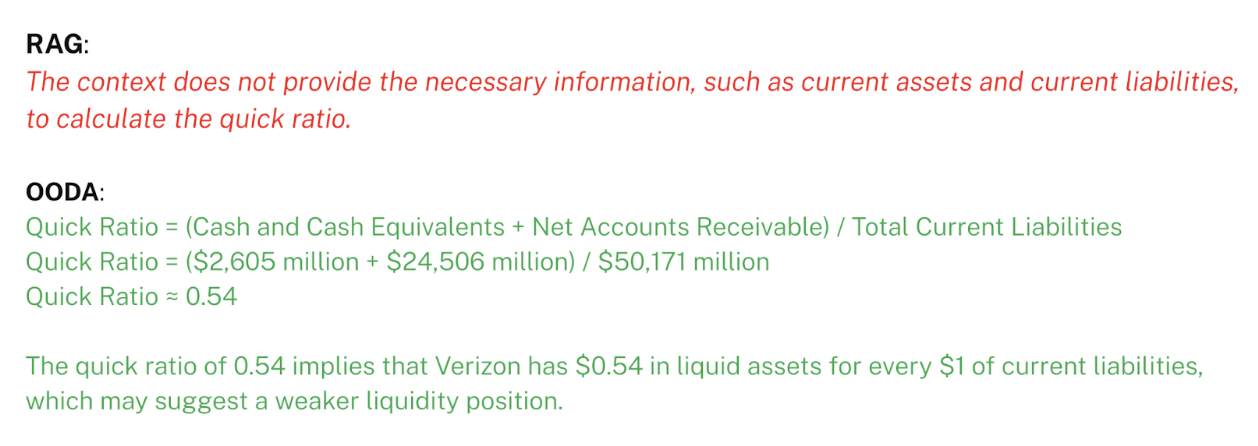

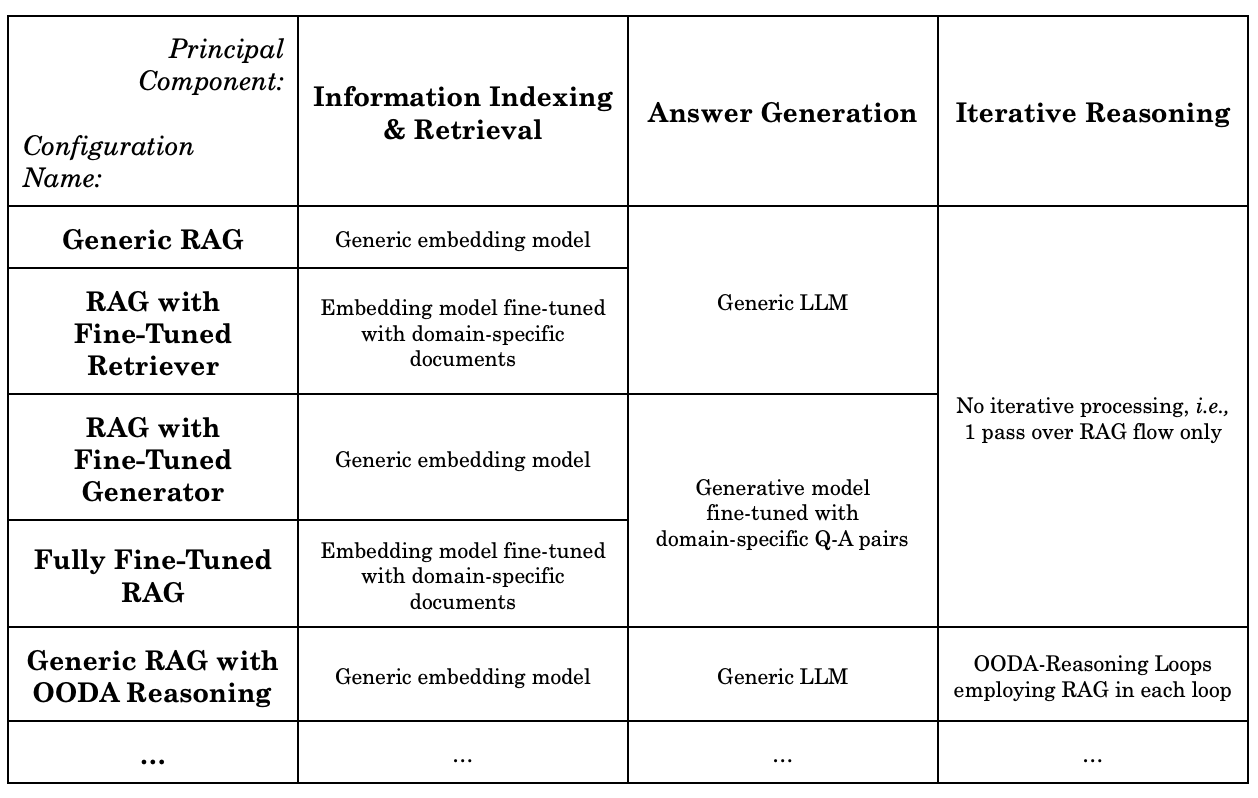

Abstract: This paper investigates the impact of domain-specific model fine-tuning and of reasoning mechanisms on the performance of question-answering (Q&A) systems powered by LLMs and Retrieval-Augmented Generation (RAG). Using the FinanceBench SEC financial filings dataset, we observe that, for RAG, combining a fine-tuned embedding model with a fine-tuned LLM achieves better accuracy than generic models, with relatively greater gains attributable to fine-tuned embedding models. Additionally, employing reasoning iterations on top of RAG delivers an even bigger jump in performance, enabling the Q&A systems to get closer to human-expert quality. We discuss the implications of such findings, propose a structured technical design space capturing major technical components of Q&A AI, and provide recommendations for making high-impact technical choices for such components. We plan to follow up on this work with actionable guides for AI teams and further investigations into the impact of domain-specific augmentation in RAG and into agentic AI capabilities such as advanced planning and reasoning.

- Iz Beltagy, Matthew E. Peters and Arman Cohan “Longformer: The Long-Document Transformer”, 2020 arXiv:2004.05150 [cs.CL]

- John Richard Boyd “Patterns of Conflict”, 1986 URL: http://d-n-i.net/second_level/boyd_military.htm

- “Language Models are Few-Shot Learners”, 2020 arXiv:2005.14165 [cs.CL]

- “HybridQA: A Dataset of Multi-Hop Question Answering over Tabular and Textual Data”, 2020 arXiv:2004.07347 [cs.CL]

- “Transformer-XL: Attentive Language Models Beyond a Fixed-Length Context”, 2019 arXiv:1901.02860 [cs.LG]

- “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding”, 2018 arXiv:1810.04805 [cs.CL]

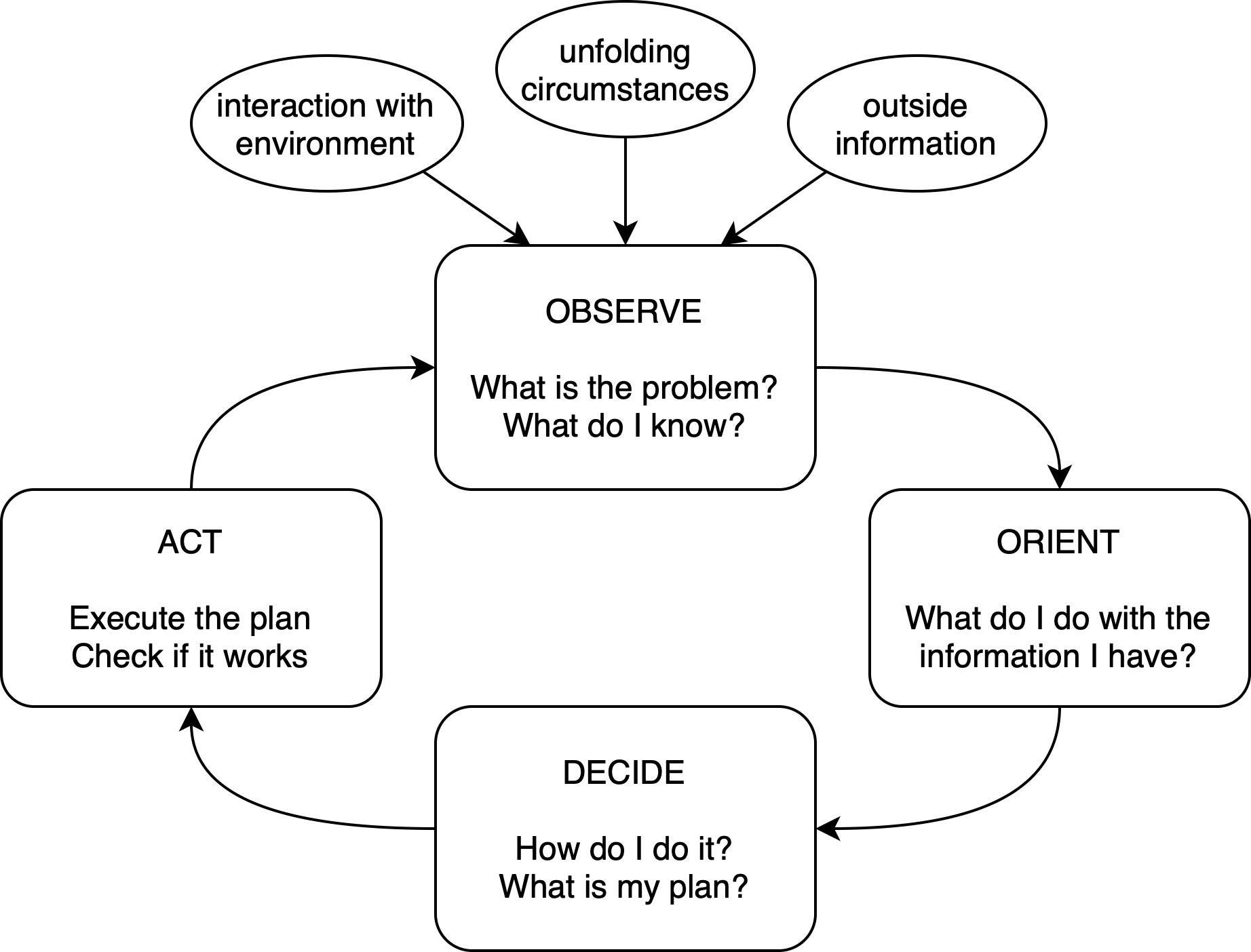

- Robert E. Enck “The OODA Loop” In Home Health Care Management & Practice 24.3, 2012, pp. 123–124 DOI: 10.1177/1084822312439314

- “Retrieval-Generation Synergy Augmented Large Language Models”, 2023 arXiv:2310.05149 [cs.CL]

- “Prompt-Guided Retrieval Augmentation for Non-Knowledge-Intensive Tasks”, 2023 arXiv:2305.17653 [cs.CL]

- “Parameter-Efficient Transfer Learning for NLP”, 2019 arXiv:1902.00751 [cs.LG]

- “RAVEN: In-Context Learning with Retrieval-Augmented Encoder-Decoder Language Models”, 2024 arXiv:2308.07922 [cs.CL]

- “FinanceBench: A New Benchmark for Financial Question Answering”, 2023 arXiv:2311.11944 [cs.CL]

- “Active Retrieval Augmented Generation”, 2023 arXiv:2305.06983 [cs.CL]

- “Knowledge-Augmented Reasoning Distillation for Small Language Models in Knowledge-Intensive Tasks”, 2023 arXiv:2305.18395 [cs.CL]

- “UnifiedQA: Crossing Format Boundaries With a Single QA System”, 2020 arXiv:2005.00700 [cs.CL]

- Yann LeCun “A Path Towards Autonomous Machine Intelligence”, 2022 URL: https://openreview.net/pdf?id=BZ5a1r-kVsf

- “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks”, 2021 arXiv:2005.11401 [cs.CL]

- “RoBERTa: A Robustly Optimized BERT Pretraining Approach”, 2019 arXiv:1907.11692 [cs.CL]

- “Self-Refine: Iterative Refinement with Self-Feedback”, 2023 arXiv:2303.17651 [cs.CL]

- “Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer”, 2023 arXiv:1910.10683 [cs.LG]

- “DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter”, 2020 arXiv:1910.01108 [cs.CL]

- “PlanBench: An Extensible Benchmark for Evaluating Large Language Models on Planning and Reasoning about Change”, 2022 arXiv:2206.10498 [cs.CL]

- “Attention Is All You Need”, 2017 arXiv:1706.03762 [cs.CL]

- “Self-Evaluation Guided Beam Search for Reasoning”, 2023 arXiv:2305.00633 [cs.CL]

- “Tree of Thoughts: Deliberate Problem Solving with Large Language Models”, 2023 arXiv:2305.10601 [cs.CL]

- “Retrieve Anything To Augment Large Language Models”, 2023 arXiv:2310.07554 [cs.IR]

- “Self-Discover: Large Language Models Self-Compose Reasoning Structures”, 2024 arXiv:2402.03620 [cs.AI]

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.