SVARM-IQ: Efficient Approximation of Any-order Shapley Interactions through Stratification

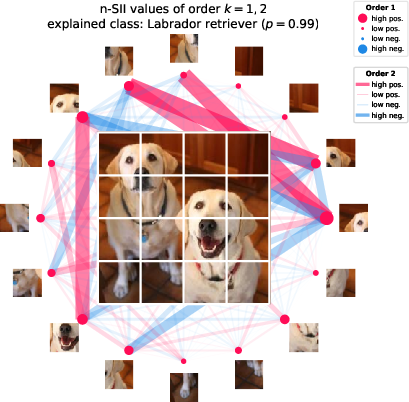

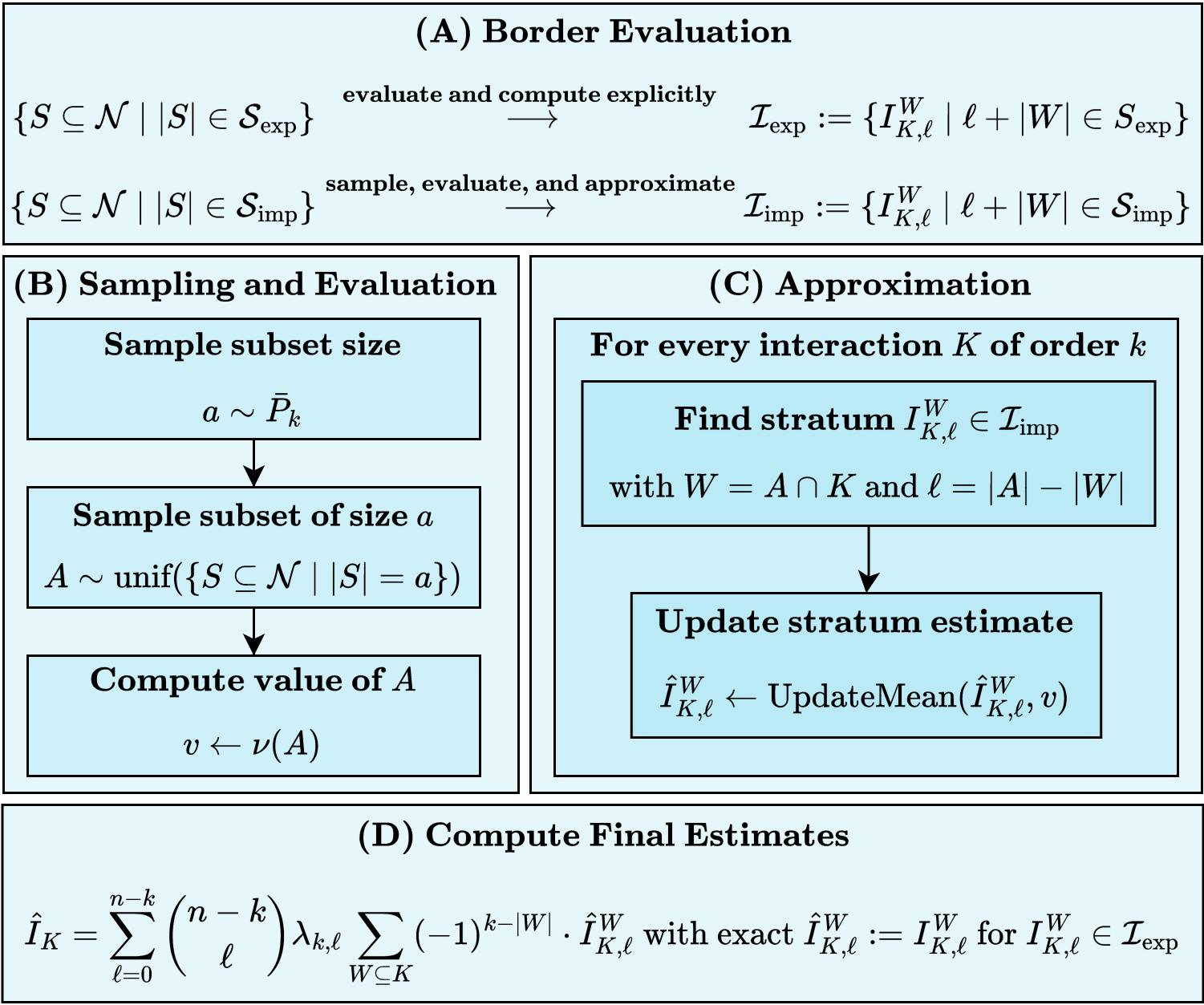

Abstract: To address the limitations of individual attribution scores based on the Shapley value (SV), the field of explainable AI (XAI) has recently begun to explore interactions among features or data points. In particular, extensions of the SV, such as the Shapley Interaction Index (SII), have been proposed as interaction measures that retain the axiomatic basis of the SV. However, as with the SV, their exact computation is prohibitively expensive. Hence, we propose SVARM-IQ, a sampling-based approach to efficiently approximate Shapley-based interaction indices of any order. SVARM-IQ can be applied to a broad class of interaction indices, including the SII, by leveraging a novel stratified representation. We provide non-asymptotic theoretical guarantees on its approximation quality and empirically demonstrate that SVARM-IQ achieves state-of-the-art estimation results in practical XAI scenarios across different model classes and application domains.
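To make the computational burden concrete, the following is a minimal brute-force sketch of the standard SII definition (Grabisch and Roubens). It is not SVARM-IQ itself; it only shows the exact quantity that a sampling-based method would approximate. The names `exact_sii`, `subsets`, and `toy_game` are hypothetical and chosen for illustration, and the toy game is an assumption, not an example from the paper.

```python
from itertools import combinations
from math import factorial


def subsets(items):
    """Yield every subset of `items` as a tuple."""
    items = tuple(items)
    for r in range(len(items) + 1):
        yield from combinations(items, r)


def exact_sii(value, n, K):
    """Exact Shapley Interaction Index of the player set K in an n-player game.

    `value` maps a frozenset of players (integers 0..n-1) to a real number.
    For |K| = 1 this reduces to the ordinary Shapley value.
    """
    K = tuple(K)
    k = len(K)
    rest = [i for i in range(n) if i not in K]
    total = 0.0
    for S in subsets(rest):
        s = len(S)
        # Weight of a coalition S that contains none of the players in K.
        weight = factorial(n - k - s) * factorial(s) / factorial(n - k + 1)
        # Discrete derivative of the game with respect to K at S.
        delta = sum(
            (-1) ** (k - len(L)) * value(frozenset(S) | frozenset(L))
            for L in subsets(K)
        )
        total += weight * delta
    return total


def toy_game(coalition):
    """Hypothetical game: worth 1 only if players 0 and 1 are both present."""
    return 1.0 if {0, 1} <= coalition else 0.0


if __name__ == "__main__":
    print(exact_sii(toy_game, 3, (0, 1)))  # pairwise interaction of 0 and 1
    print(exact_sii(toy_game, 3, (0,)))    # Shapley value of player 0
```

Each call evaluates the game on 2^(n-k) coalitions times 2^k subsets of K, i.e. 2^n evaluations per interaction, which is why exact computation becomes infeasible as the number of players grows.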

