Prompt Design and Engineering: Introduction and Advanced Methods

Published 24 Jan 2024 in cs.SE and cs.LG | (2401.14423v4)

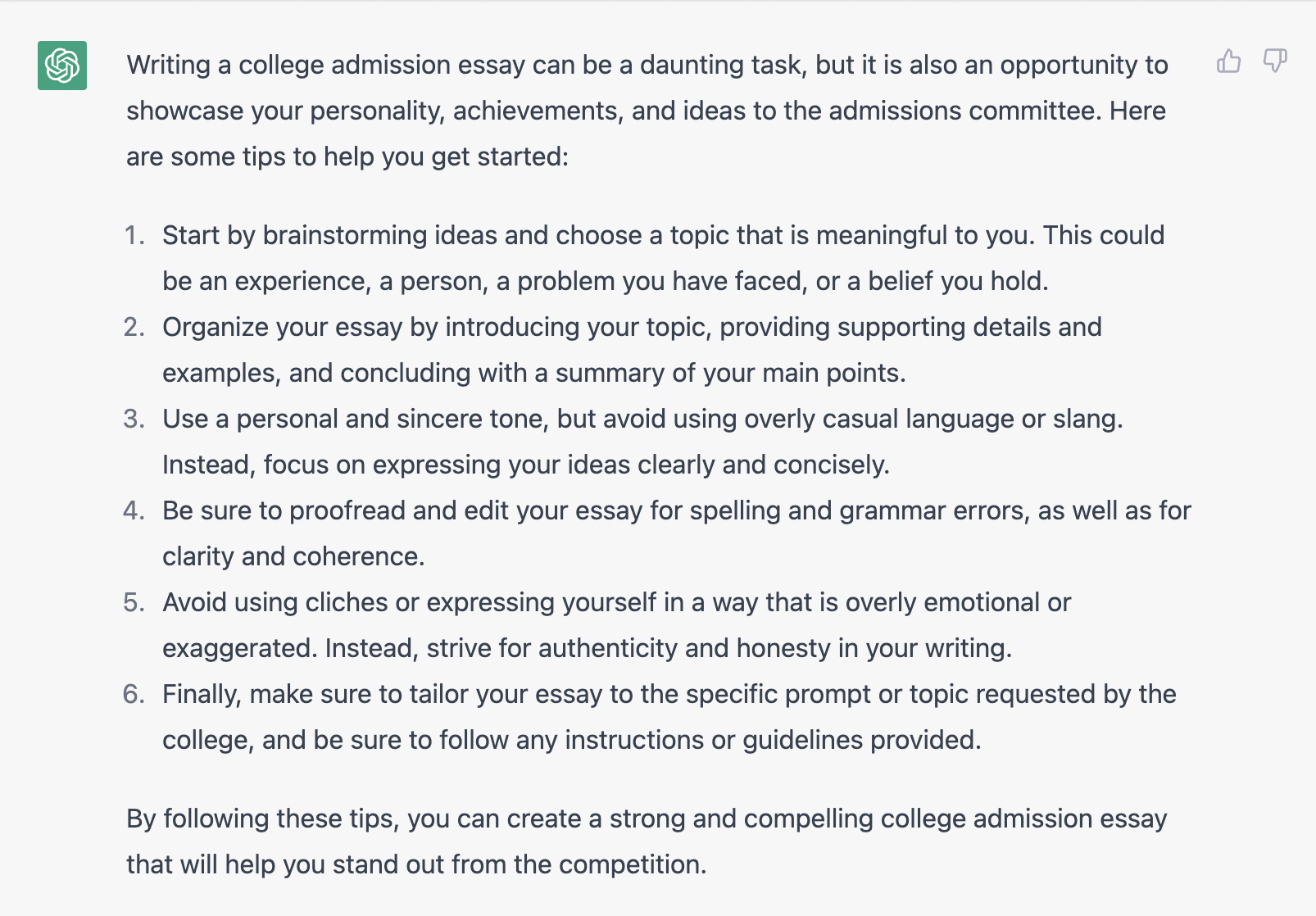

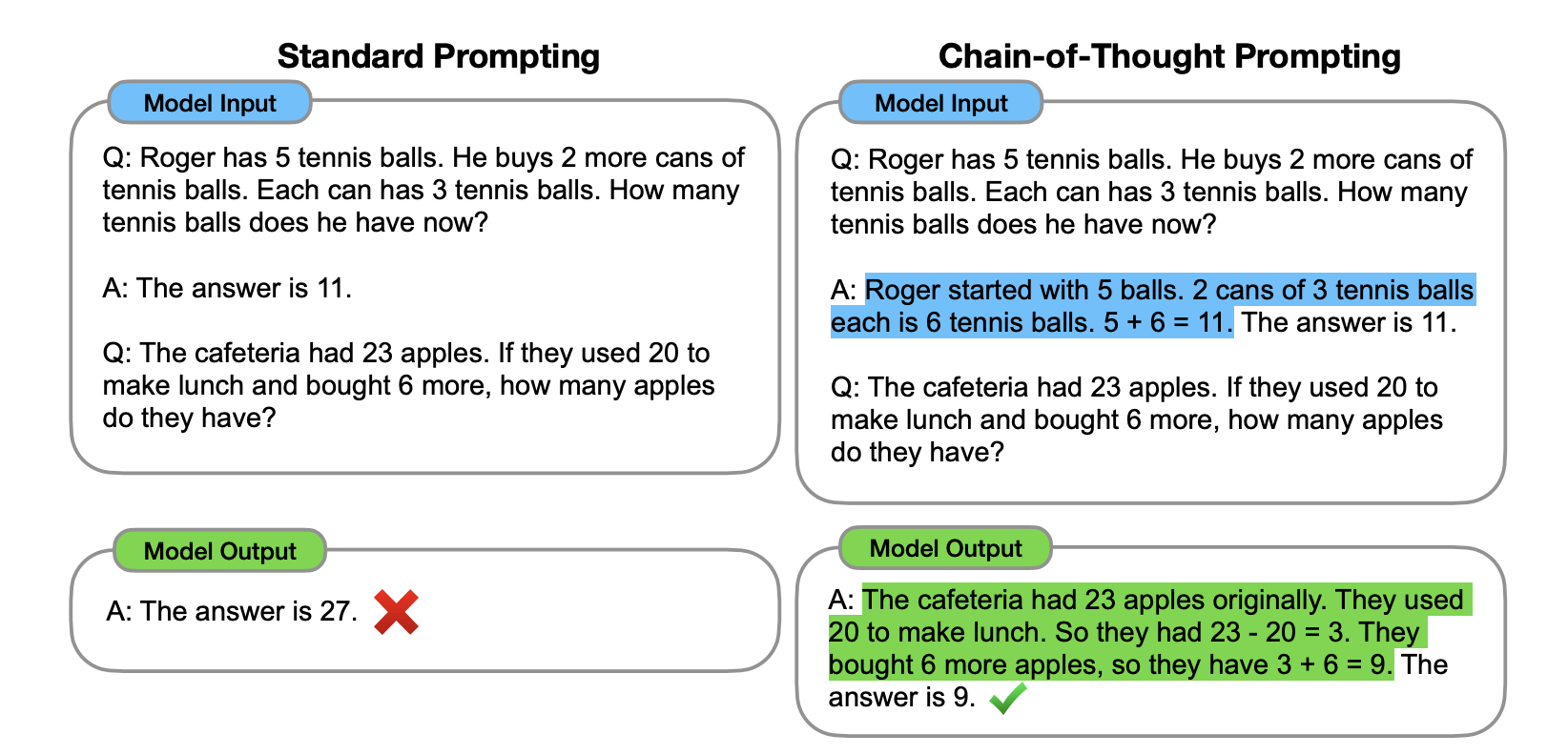

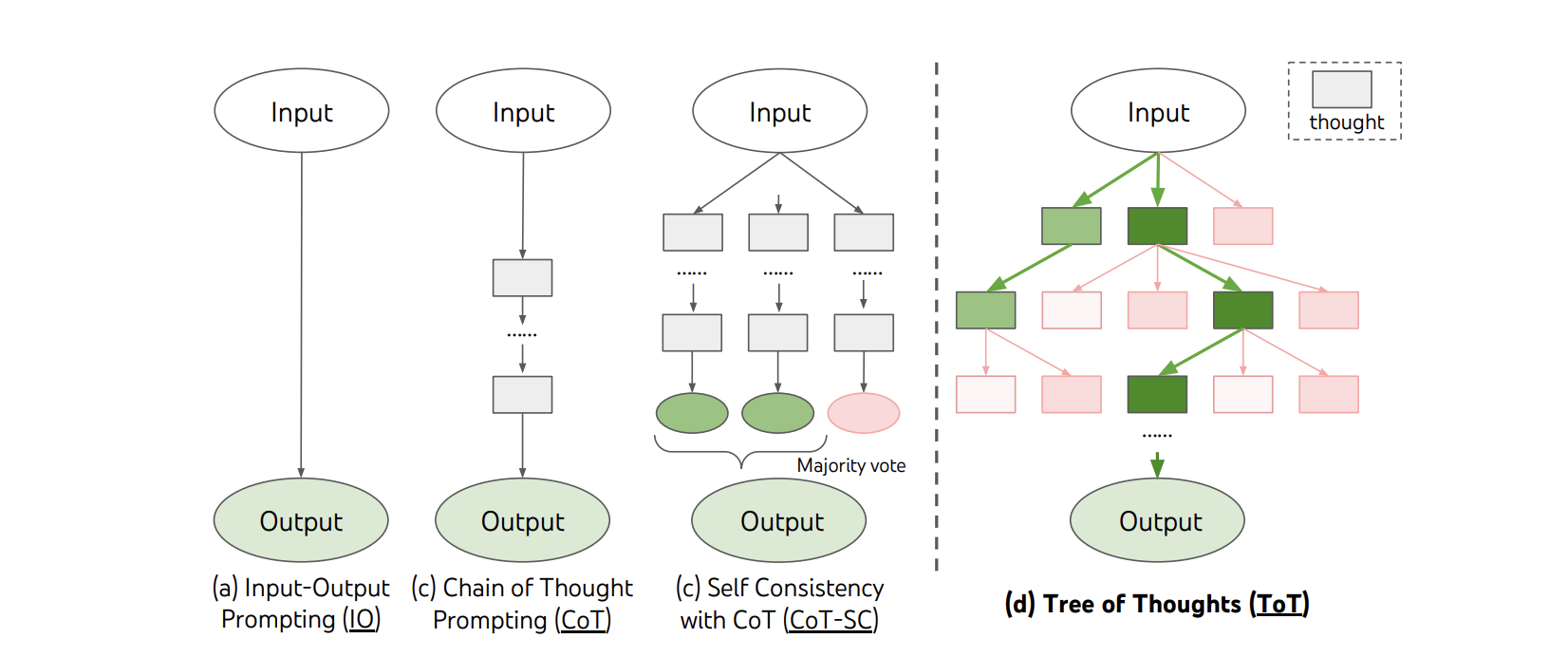

Abstract: Prompt design and engineering has rapidly become essential for maximizing the potential of LLMs. In this paper, we introduce core concepts, advanced techniques like Chain-of-Thought and Reflection, and the principles behind building LLM-based agents. Finally, we provide a survey of tools for prompt engineers.

- Machine learning: The high interest credit card of technical debt. In SE4ML: Software Engineering for Machine Learning (NIPS 2014 Workshop), 2014.

- Chain-of-thought prompting elicits reasoning in large language models. In S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh, editors, Advances in Neural Information Processing Systems, volume 35, pages 24824–24837. Curran Associates, Inc., 2022.

- Automatic chain of thought prompting in large language models, 2022.

- Tree of thoughts: Deliberate problem solving with large language models, 2023.

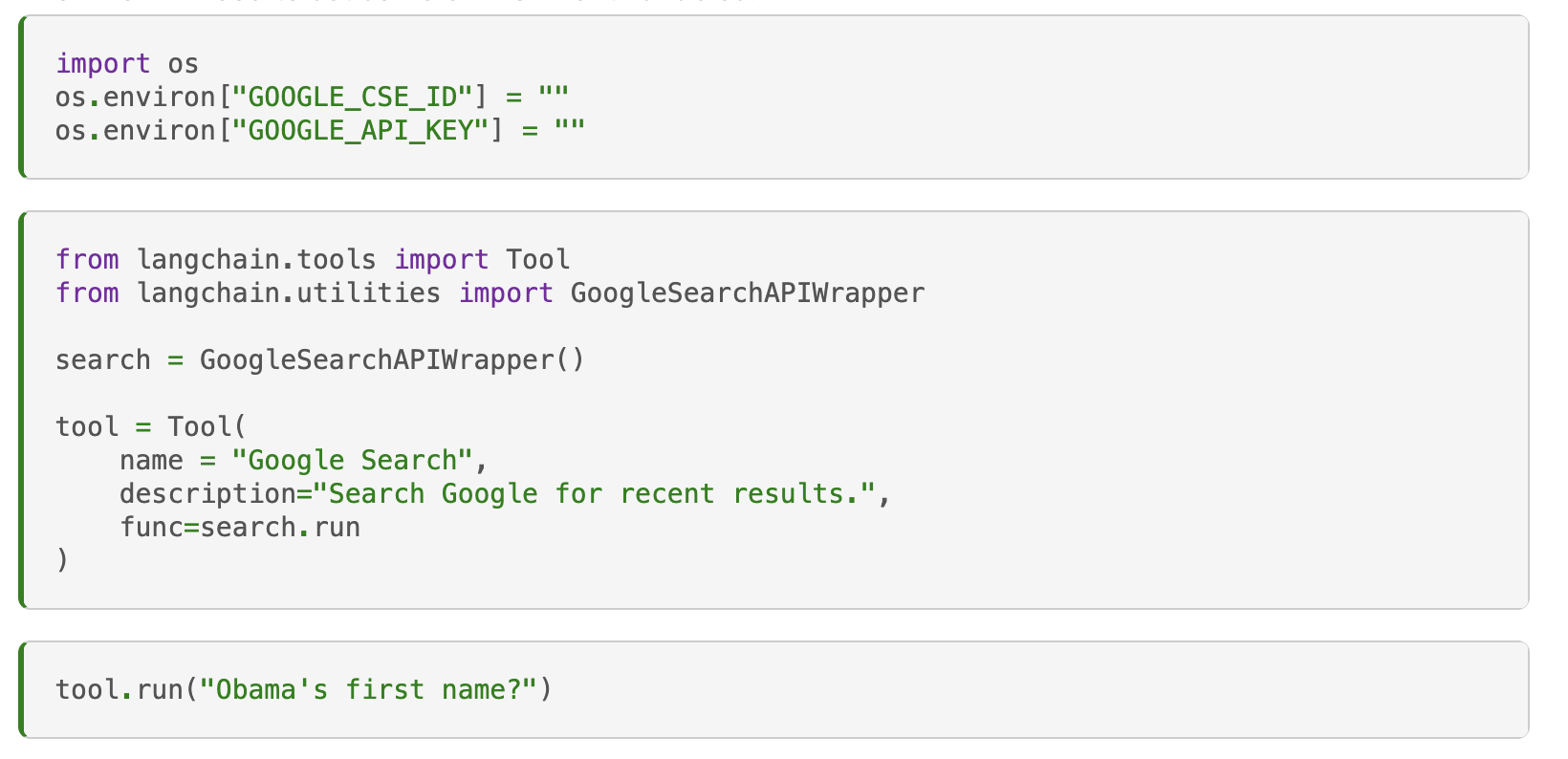

- Toolformer: Language models can teach themselves to use tools, 2023.

- Gorilla: Large language model connected with massive apis, 2023.

- Art: Automatic multi-step reasoning and tool-use for large language models, 2023.

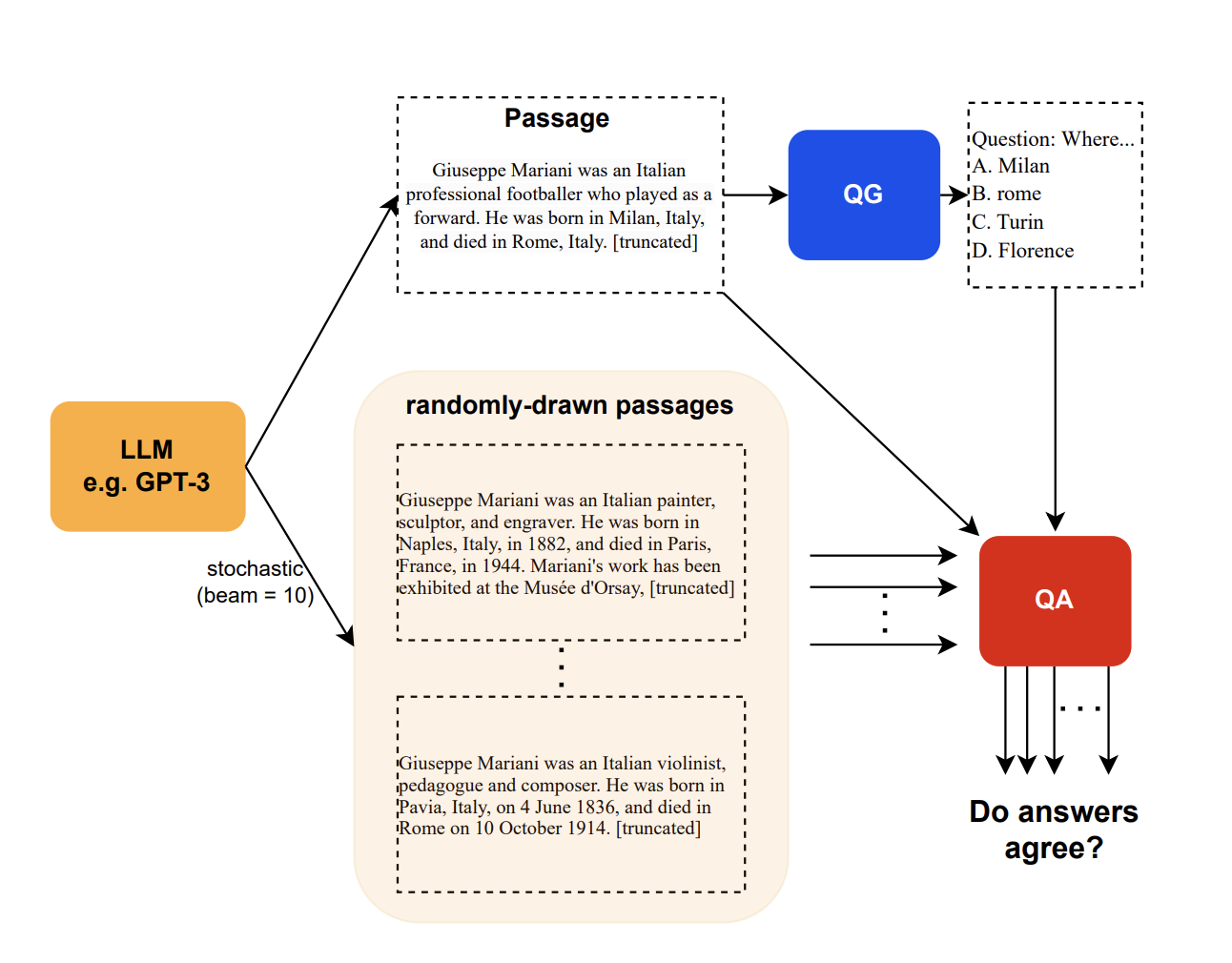

- Selfcheckgpt: Zero-resource black-box hallucination detection for generative large language models, 2023.

- Reflexion: Language agents with verbal reinforcement learning, 2023.

- Exploring the mit mathematics and eecs curriculum using large language models, 2023.

- Promptchainer: Chaining large language model prompts through visual programming, 2022.

- Large language models are human-level prompt engineers, 2023.

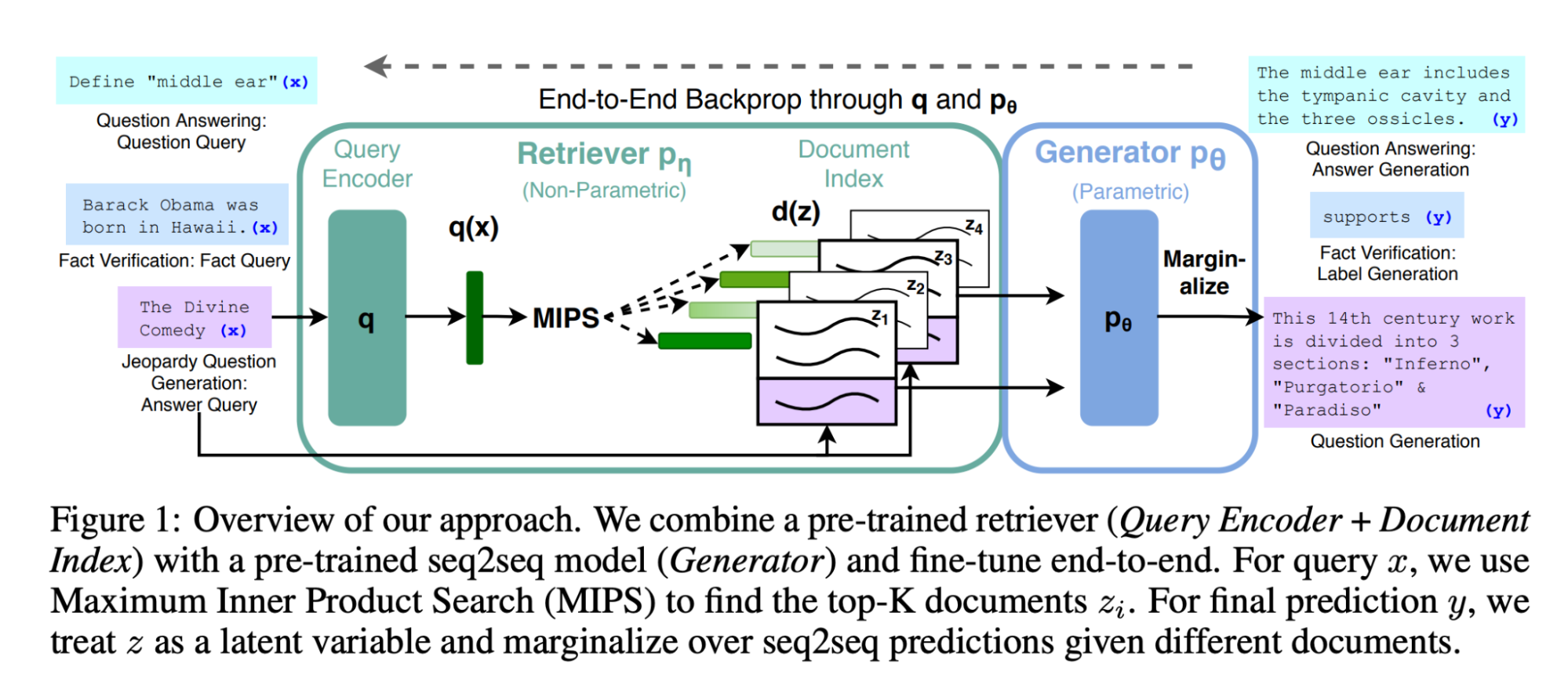

- Retrieval-augmented generation for knowledge-intensive NLP tasks. CoRR, abs/2005.11401, 2020.

- Amazon Web Services. Question answering using retrieval augmented generation with foundation models in amazon sagemaker jumpstart, Year of publication, e.g., 2023. Accessed: Date of access, e.g., December 5, 2023.

- Unifying large language models and knowledge graphs: A roadmap. arXiv preprint arXiv:2306.08302, 2023.

- Active retrieval augmented generation, 2023.

- Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997, 2023.

- Hugginggpt: Solving ai tasks with chatgpt and its friends in huggingface. arXiv preprint arXiv:2303.17580, 2023.

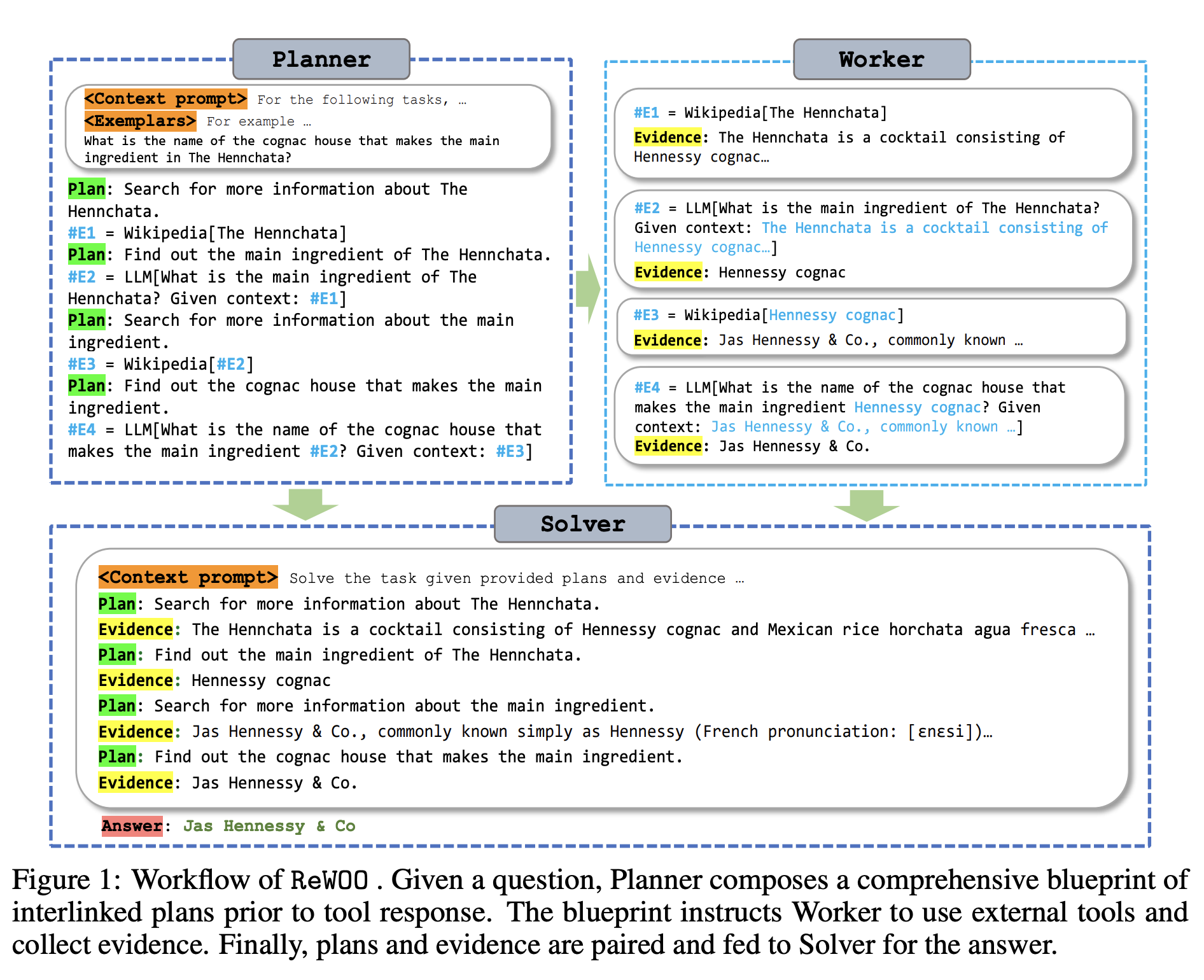

- Rewoo: Decoupling reasoning from observations for efficient augmented language models, 2023.

- React: Synergizing reasoning and acting in language models, 2023.

- Dera: Enhancing large language model completions with dialog-enabled resolving agents, 2023.
