Content-Based Collaborative Generation for Recommender Systems

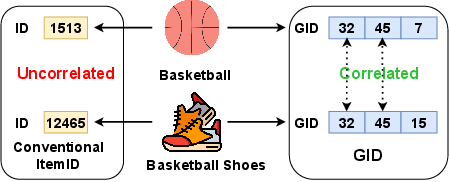

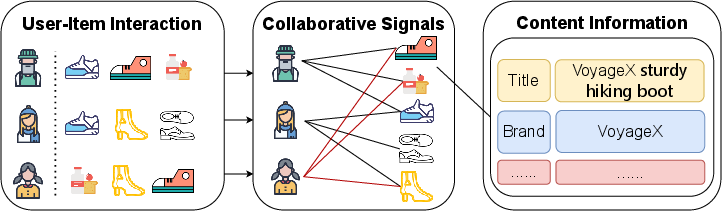

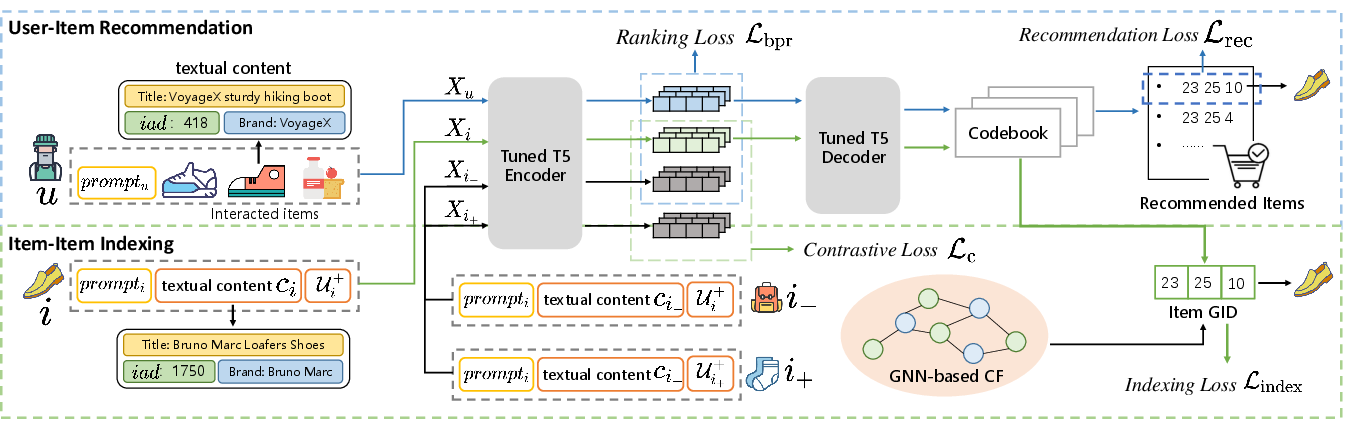

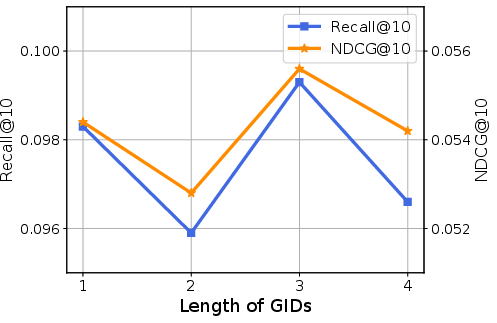

Abstract: Generative models have emerged as a promising tool for enhancing recommender systems. For better recommendation, it is essential to model both item content and user-item collaborative interactions within a unified generative framework. Although some existing LLM-based methods fuse content information with collaborative signals, they fundamentally rely on textual language generation, which is not fully aligned with the recommendation task. How to integrate content knowledge and collaborative interaction signals in a generative framework tailored for item recommendation remains an open research challenge. In this paper, we propose content-based collaborative generation for recommender systems, namely ColaRec. ColaRec is a sequence-to-sequence framework tailored for directly generating the identifier of the recommended item. Specifically, the input sequence consists of information about the user's interacted items, and the output sequence is the generative identifier (GID) of the recommended item. To model collaborative signals, the GIDs are constructed from a pretrained collaborative filtering model, and the user is represented as the content aggregation of interacted items. In this way, ColaRec captures both collaborative signals and content information in a unified framework. An item indexing task is then proposed to align the content-based semantic space with the interaction-based collaborative space. In addition, a contrastive loss is introduced to ensure that items with similar collaborative GIDs have similar content representations. To verify the effectiveness of ColaRec, we conduct experiments on four benchmark datasets. Empirical results demonstrate the superior performance of ColaRec.
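The contrastive objective mentioned in the abstract can be illustrated with a small sketch. The abstract does not give the form of the loss, so everything below is an assumption made for illustration: GID similarity is measured by the length of the shared token prefix, content similarity is cosine similarity, and the loss is a triplet margin loss. The names `shared_prefix_len`, `cosine`, `triplet_contrastive_loss`, the `margin` value, and the toy data are hypothetical and are not taken from ColaRec.

```python
import math


def shared_prefix_len(gid_a, gid_b):
    """Number of leading tokens two GIDs have in common (assumed GID similarity)."""
    n = 0
    for a, b in zip(gid_a, gid_b):
        if a != b:
            break
        n += 1
    return n


def cosine(u, v):
    """Cosine similarity between two content vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def triplet_contrastive_loss(gids, content, margin=0.2):
    """Average margin loss over (anchor, positive, negative) triplets.

    A triplet is used only when the positive shares a strictly longer GID
    prefix with the anchor than the negative does. This is an illustrative
    choice, not the loss defined in the paper.
    """
    total, count = 0.0, 0
    items = list(gids)
    for a in items:
        for p in items:
            for n in items:
                if len({a, p, n}) < 3:
                    continue  # anchor, positive, negative must be distinct
                if shared_prefix_len(gids[a], gids[p]) <= shared_prefix_len(gids[a], gids[n]):
                    continue  # positive must be closer to the anchor in GID space
                sim_pos = cosine(content[a], content[p])
                sim_neg = cosine(content[a], content[n])
                total += max(0.0, margin - sim_pos + sim_neg)
                count += 1
    return total / count if count else 0.0


# Toy data (hypothetical): i1 and i2 share the first GID token, i3 does not.
gids = {"i1": (0, 0), "i2": (0, 1), "i3": (1, 0)}
# Content vectors that agree with the GID structure ...
aligned = {"i1": [1.0, 0.0], "i2": [0.9, 0.1], "i3": [0.0, 1.0]}
# ... and content vectors that contradict it.
misaligned = {"i1": [1.0, 0.0], "i2": [0.0, 1.0], "i3": [0.9, 0.1]}

loss_aligned = triplet_contrastive_loss(gids, aligned)
loss_misaligned = triplet_contrastive_loss(gids, misaligned)
```

In this toy setting the loss vanishes when items with a shared GID prefix also have similar content vectors, and it is positive when the content space contradicts the GID structure, which is the behaviour such a regularizer is meant to encourage.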
