A Review of Modern Recommender Systems Using Generative Models (Gen-RecSys)

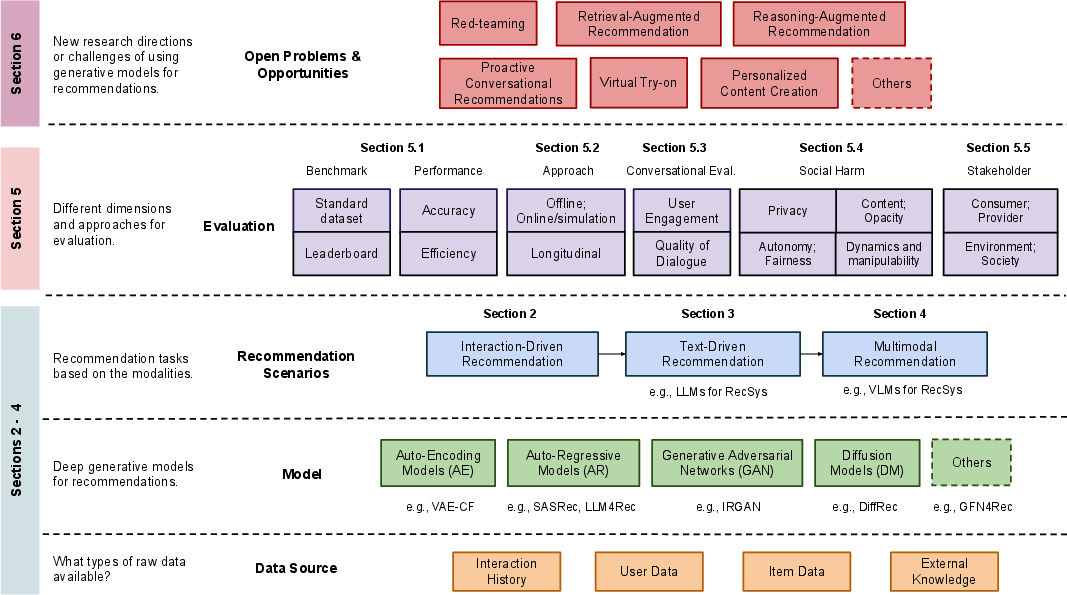

Abstract: Traditional recommender systems (RS) typically use user-item rating histories as their main data source. However, deep generative models can now model and sample from complex data distributions, including user-item interactions, text, images, and videos, enabling novel recommendation tasks. This comprehensive, multidisciplinary survey connects key advancements in RS using Generative Models (Gen-RecSys), covering: interaction-driven generative models; the use of large language models (LLMs) and textual data for natural language recommendation; and the integration of multimodal models for generating and processing images/videos in RS. Our work highlights necessary paradigms for evaluating the impact and harm of Gen-RecSys and identifies open challenges. This survey accompanies a tutorial presented at ACM KDD'24, with supporting materials provided at: https://encr.pw/vDhLq.
