A Taxonomy for Human-LLM Interaction Modes: An Initial Exploration

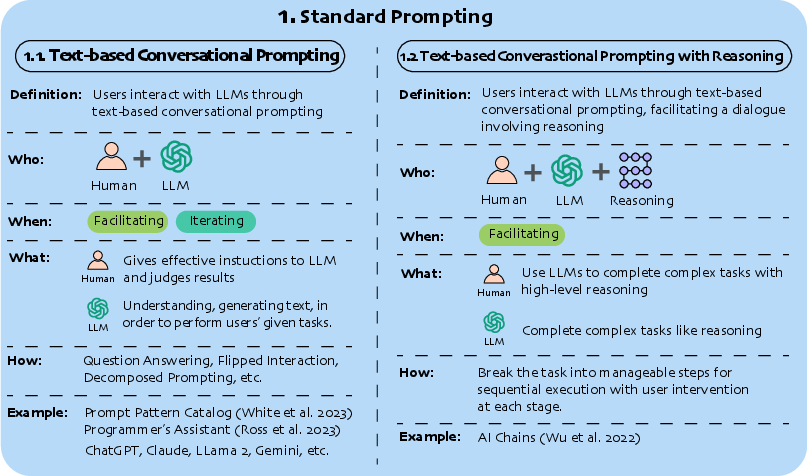

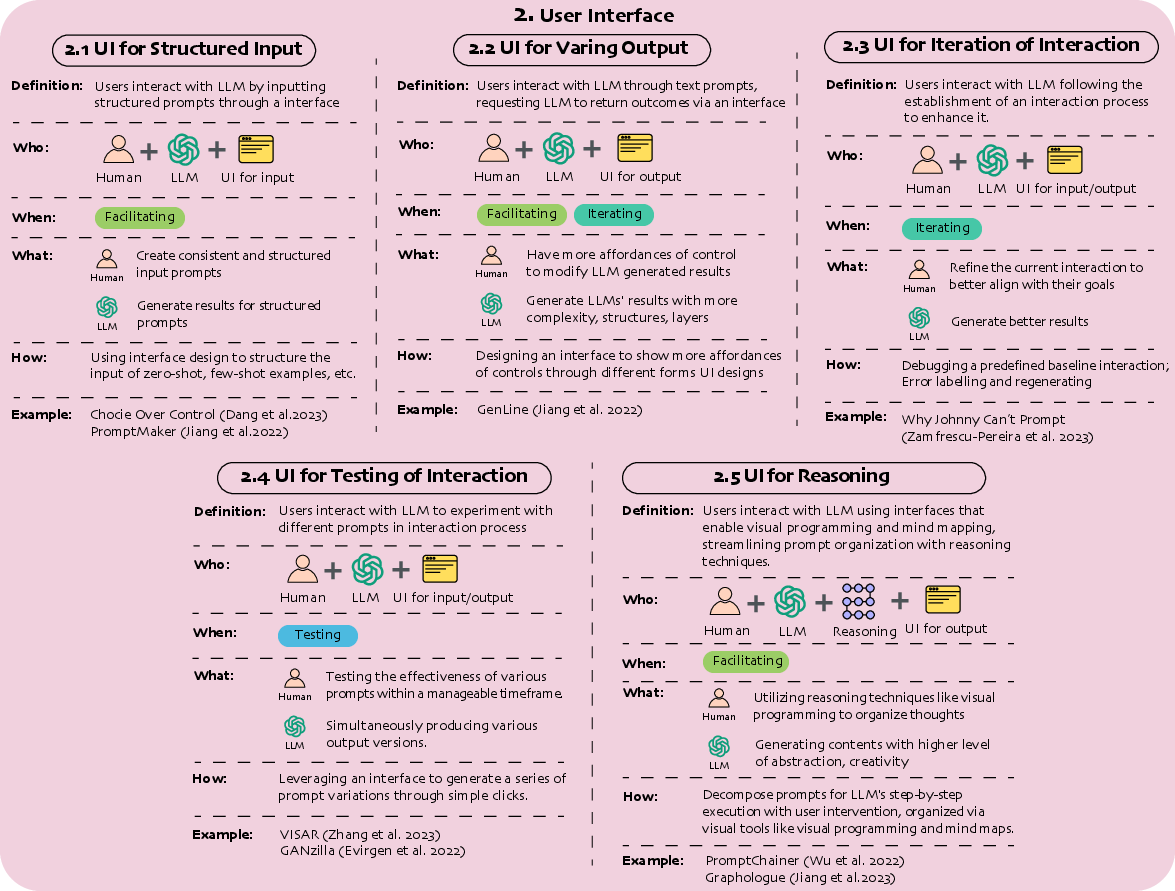

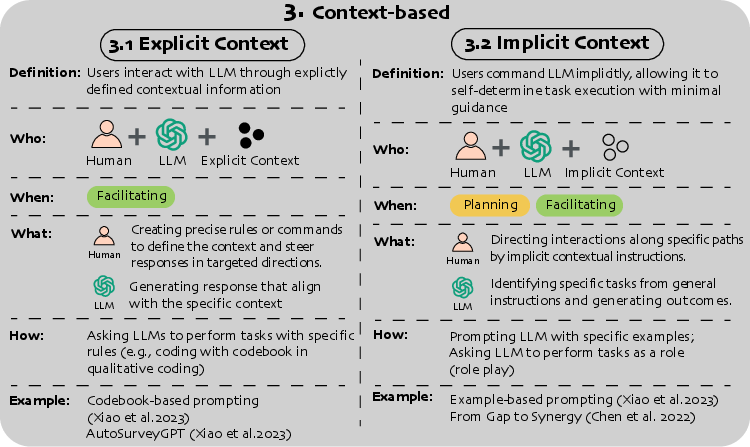

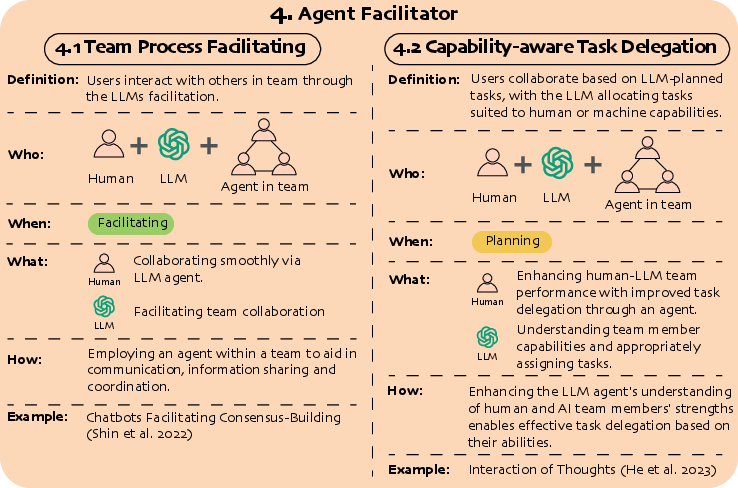

Abstract: Since ChatGPT's release, conversational prompting has become the most popular form of human-LLM interaction. However, it is less effective for complex tasks that involve reasoning, creativity, and iteration. Through a systematic analysis of HCI papers published since 2021, we identified four key phases in the human-LLM interaction flow (planning, facilitating, iterating, and testing) that characterize the dynamics of this process. We also developed a taxonomy of four primary interaction modes: Mode 1: Standard Prompting, Mode 2: User Interface, Mode 3: Context-based, and Mode 4: Agent Facilitator. We enriched this taxonomy using the "5W1H" guideline method, examining for each mode its definition, participant roles (Who), the phases in which it occurs (When), human objectives and LLM abilities (What), and the mechanics of the interaction (How). We anticipate that this taxonomy will contribute to the future design and evaluation of human-LLM interaction.
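As a rough illustration of how the taxonomy is organized, the four phases, the four modes, and the 5W1H dimensions named in the abstract could be encoded as a small data structure. This is a minimal sketch, not the authors' own representation: the names `Phase`, `Mode`, `ModeProfile`, and `blank_profile` are hypothetical, and every profile is left empty because the abstract does not state the per-mode values.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    """The four phases of the human-LLM interaction flow named in the abstract."""
    PLANNING = "planning"
    FACILITATING = "facilitating"
    ITERATING = "iterating"
    TESTING = "testing"


class Mode(Enum):
    """The four primary interaction modes named in the abstract."""
    STANDARD_PROMPTING = "Mode 1: Standard Prompting"
    USER_INTERFACE = "Mode 2: User Interface"
    CONTEXT_BASED = "Mode 3: Context-based"
    AGENT_FACILITATOR = "Mode 4: Agent Facilitator"


@dataclass
class ModeProfile:
    """Hypothetical record holding the 5W1H dimensions listed in the abstract."""
    mode: Mode
    definition: str
    who: list[str]                    # participant roles (Who)
    when: list[Phase]                 # phases in which the mode occurs (When)
    what_human_objectives: list[str]  # human objectives (What)
    what_llm_abilities: list[str]     # LLM abilities (What)
    how: str                          # mechanics of the interaction (How)


def blank_profile(mode: Mode) -> ModeProfile:
    """Return an unfilled profile; values are intentionally not guessed here."""
    return ModeProfile(
        mode=mode,
        definition="",
        who=[],
        when=[],
        what_human_objectives=[],
        what_llm_abilities=[],
        how="",
    )


# One empty profile per mode, to be filled in from the paper's own descriptions.
profiles = {mode: blank_profile(mode) for mode in Mode}
```

Keeping the phases as an enumeration means the When field of each mode can only refer to one of the four identified phases, which mirrors how the taxonomy ties modes back to the interaction flow.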

