AI2Apps: A Visual IDE for Building LLM-based AI Agent Applications

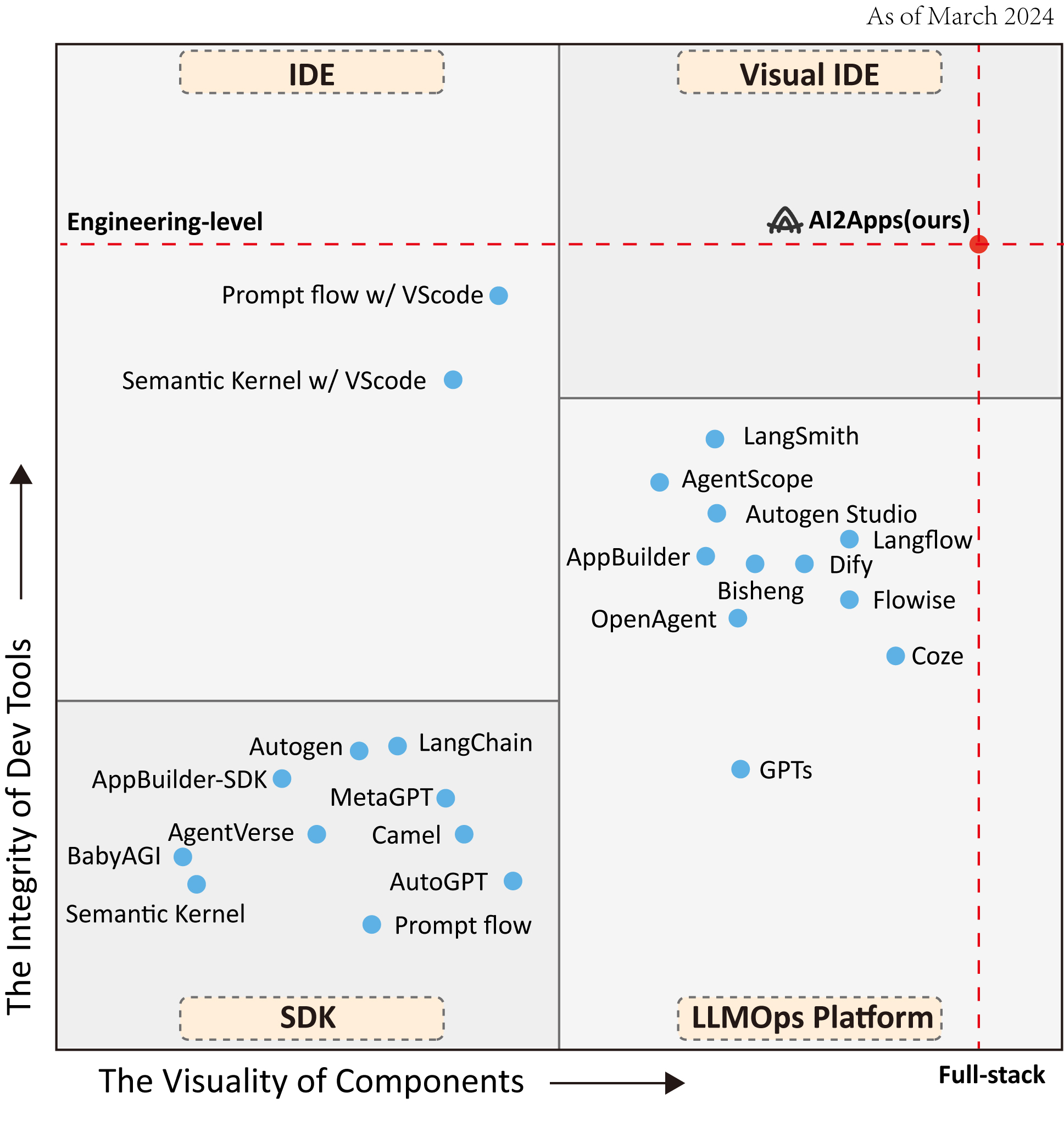

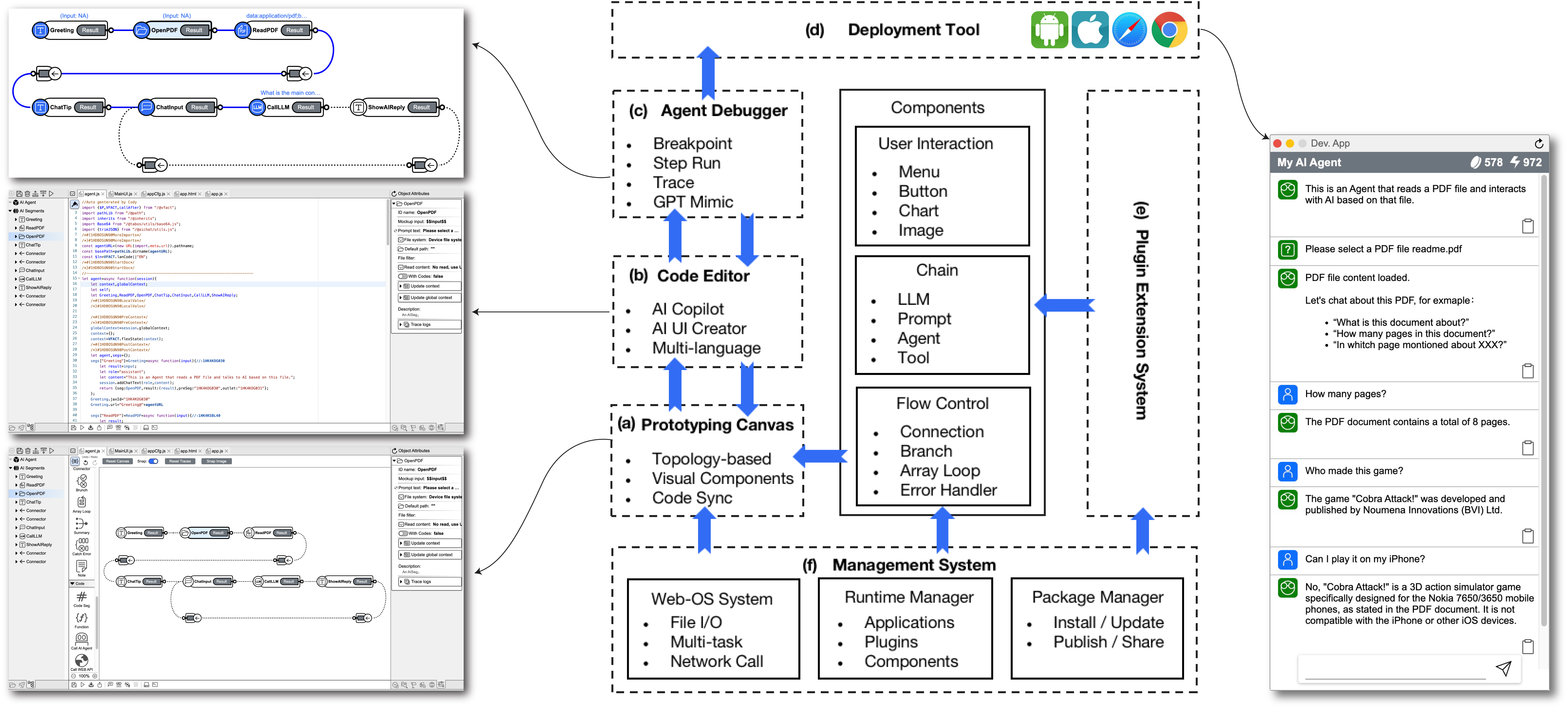

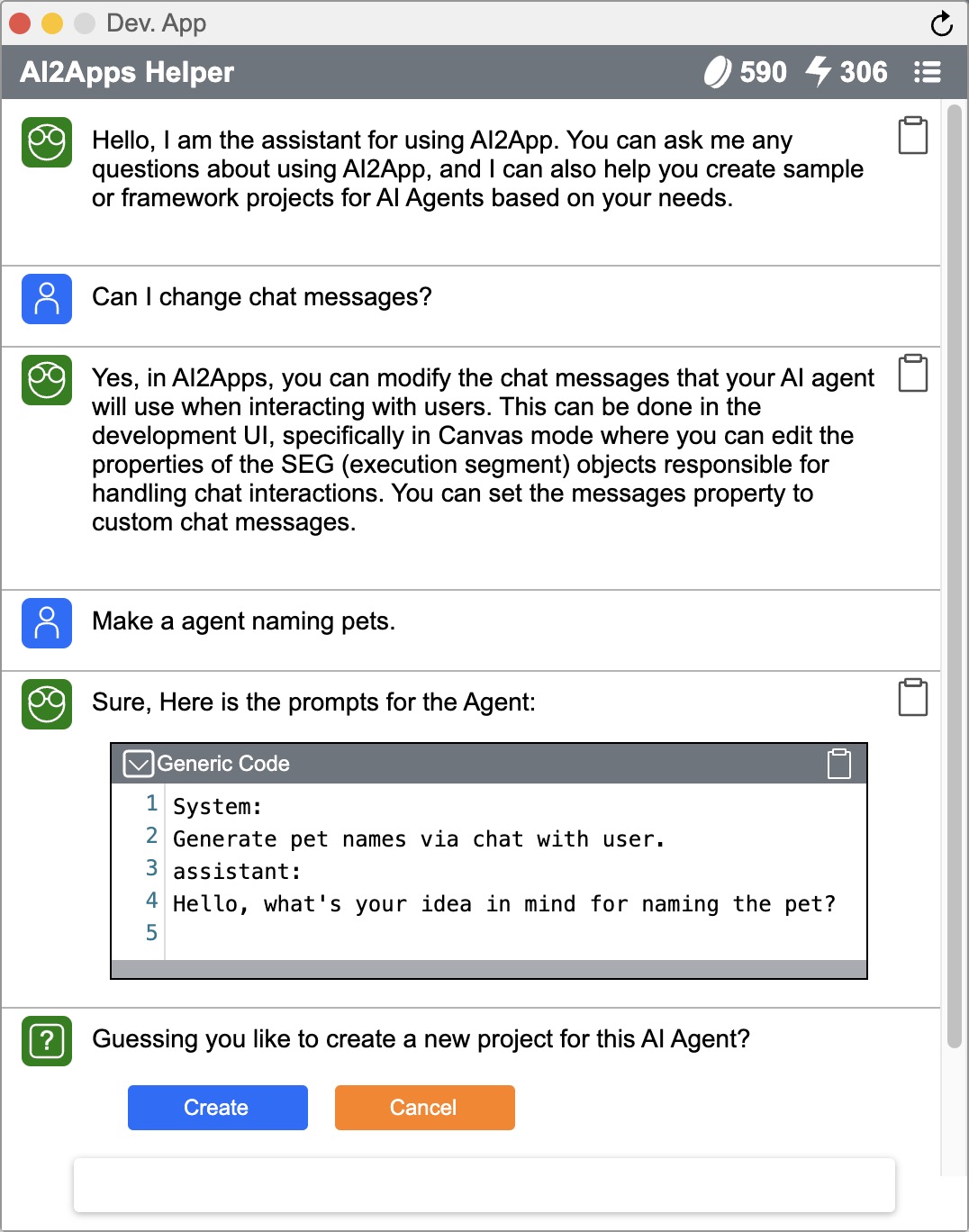

Abstract: We introduce AI2Apps, a Visual Integrated Development Environment (Visual IDE) with full-cycle capabilities that helps developers build deployable LLM-based AI agent applications more quickly. This Visual IDE prioritizes both the Integrity of its development tools and the Visuality of its components, ensuring a smooth and efficient building experience. On one hand, AI2Apps integrates a comprehensive development toolkit, ranging from a prototyping canvas and AI-assisted code editor to an agent debugger, management system, and deployment tools, all within a web-based graphical user interface. On the other hand, AI2Apps visualizes reusable front-end and back-end code as intuitive drag-and-drop components. Furthermore, a plugin system named AI2Apps Extension (AAE) is designed for Extensibility; we showcase how a new plugin with 20 components enables a web agent to mimic human-like browsing behavior. Our case study demonstrates substantial efficiency improvements: when debugging a specific sophisticated multimodal agent, AI2Apps reduces token consumption and API calls by approximately 90% and 80%, respectively. AI2Apps, including an online demo, open-source code, and a screencast video, is now publicly accessible.
