- The paper presents a novel collaborative AI architecture that utilizes multiple specialized capabilities instead of relying on a single LLM for reasoning.

- It introduces a modular 'Registration-Discovery-Invocation' framework that dynamically identifies and employs relevant tool services for task execution.

- The system demonstrates practical extensibility through scenarios that integrate contextual data and allow swift adaptation to external tool innovations.

"CACA Agent: Capability Collaboration based AI Agent" (2403.15137)

Abstract Overview

The paper introduces the CACA Agent, a Capability Collaboration-based AI agent system designed to address two critical challenges in AI agent deployment: efficient deployment and extensibility in application scenarios. Unlike conventional AI agents that rely heavily on a single LLM for reasoning, CACA Agent employs a collaborative architecture inspired by service computing. This architecture integrates multiple capabilities such as planning, methodology, tools, and more to enhance the functionality and extensibility of AI agents.

Introduction

Advances in LLMs have given AI agents substantial capabilities, allowing them to handle tasks that require complex reasoning and decision-making. However, these agents typically depend on a single large LLM for reasoning, which can limit extensibility and drive up model complexity. The CACA Agent proposes an open architecture in which different capabilities collaborate to provide specific functionalities, reducing dependence on a single model and making it easier for the agent to adapt to and expand into new application scenarios.

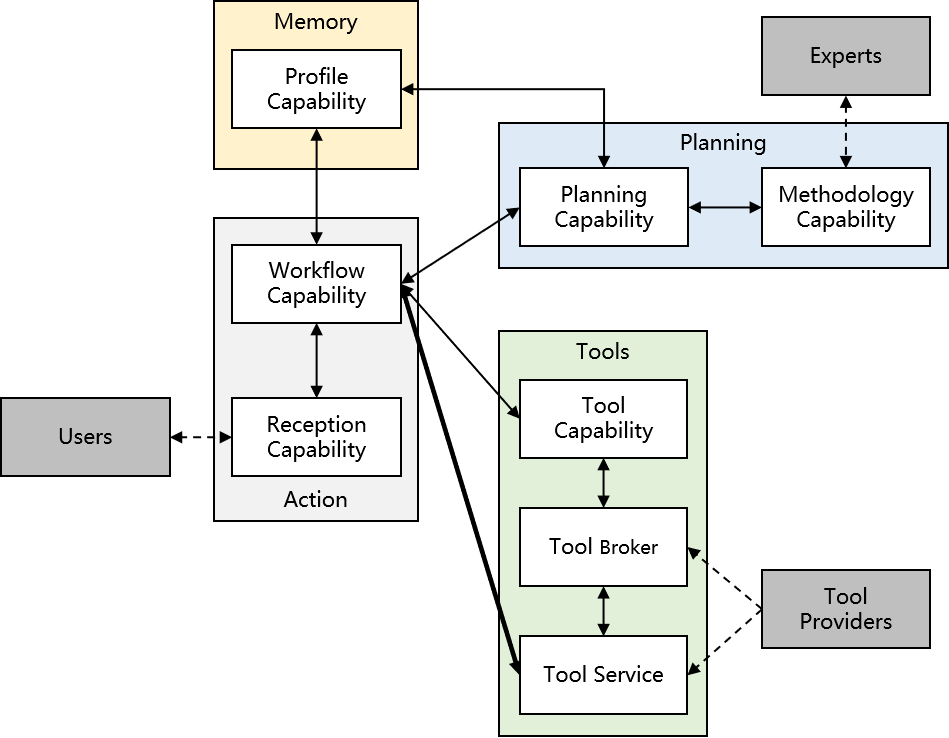

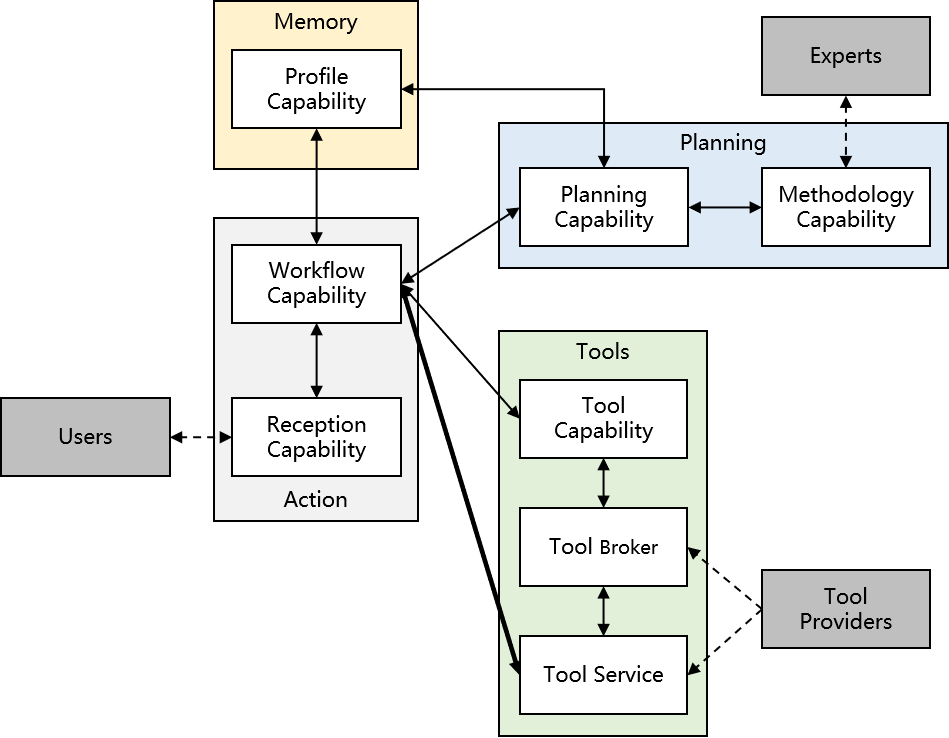

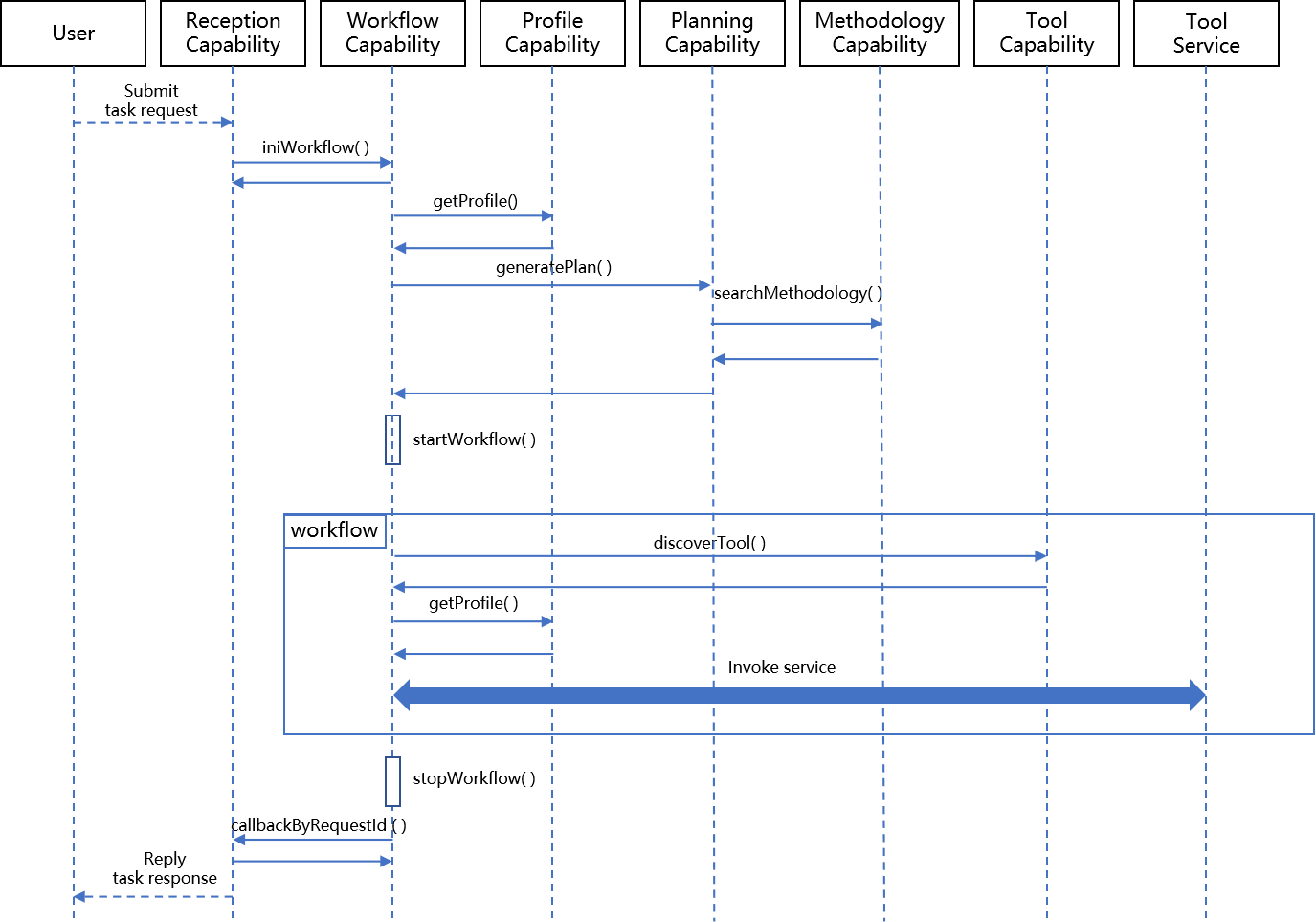

Figure 1: System Design Diagram illustrating the collaborative capabilities integrated into the CACA Agent architecture.

Prior work uses planning capabilities to decompose complex tasks with techniques such as Chain of Thought prompting. These approaches still face challenges such as hallucinations, unrealistic outputs, and difficulty integrating tool use. Systems such as RestGPT and Toolformer have attempted to address tool integration by enabling models to call external APIs (RESTful APIs in the case of RestGPT), thereby improving practical interfacing with real-world applications.

System Architecture

Overall System Design

The architecture of CACA Agent is collaborative: functionality is distributed across components, each designed to fulfill a specific role. This modularity allows components to be developed, deployed, and updated independently, which makes the agent more flexible. Integrating planning and methodology capabilities improves task execution: process knowledge can be expanded dynamically, and experts can interact with the system to refine decision pathways.

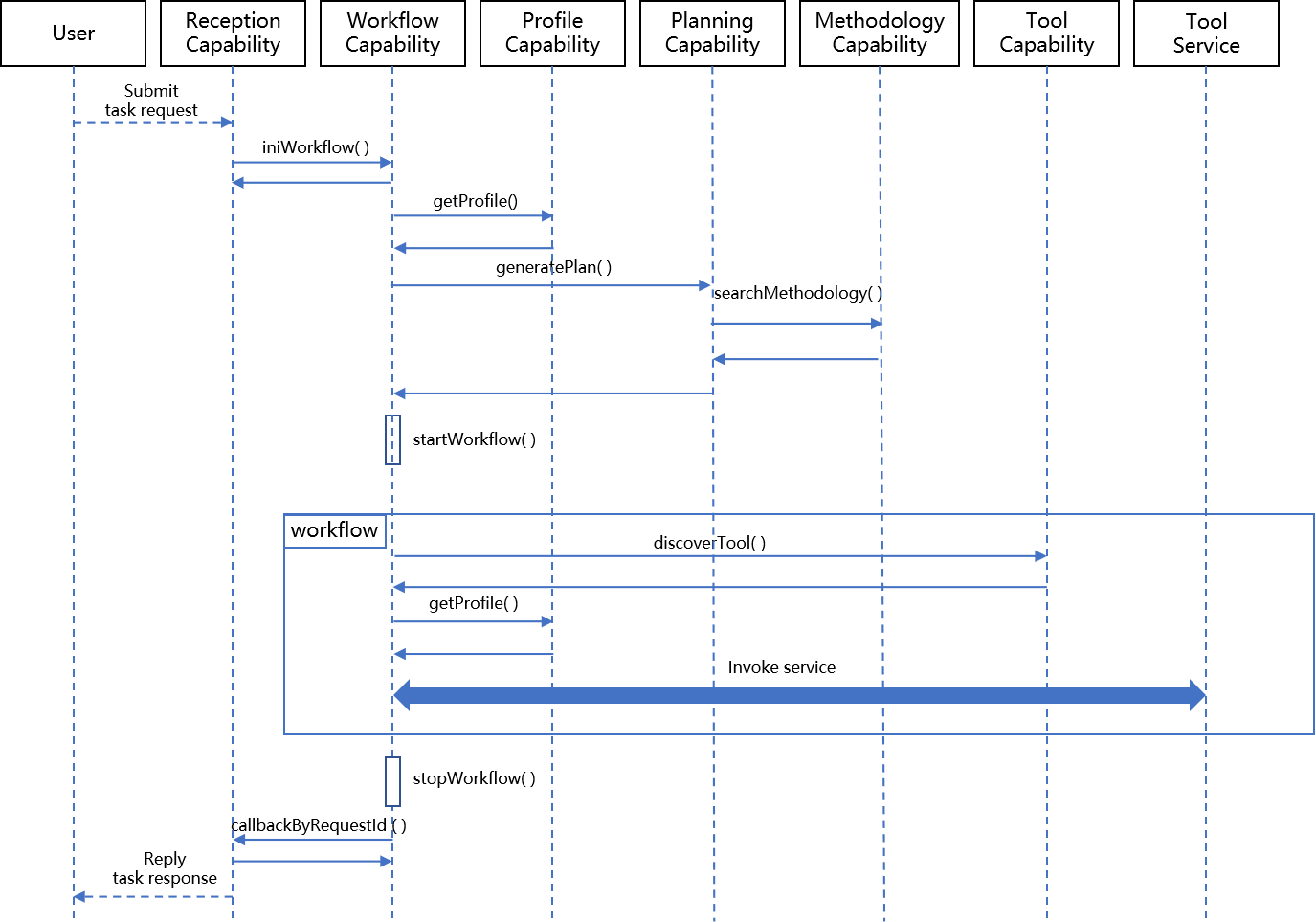

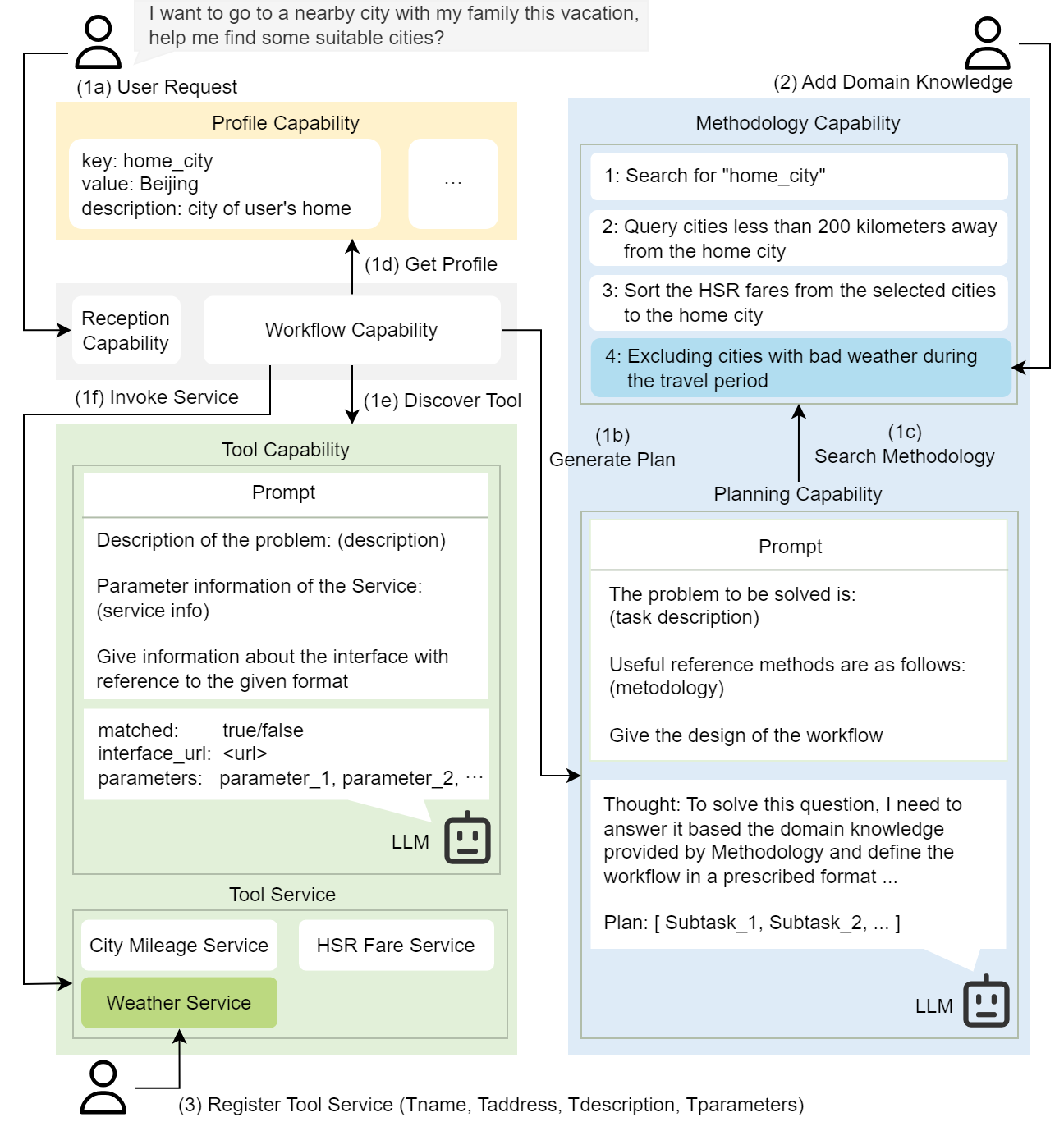

Figure 2: User Request Processing flow highlighting the interactions between different capabilities within the CACA Agent.
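The division of labor described above can be illustrated with a minimal Python sketch. It is not the paper's implementation: the class names, the `handle_request` function, and the dictionary format for process knowledge are all hypothetical, and a plain lookup stands in for the LLM-based planning the paper describes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class MethodologyCapability:
    """Holds process knowledge: task type -> ordered step names (assumed format)."""
    processes: Dict[str, List[str]] = field(default_factory=dict)

    def steps_for(self, task_type: str) -> List[str]:
        # Return a copy so callers cannot mutate the stored process.
        return list(self.processes.get(task_type, []))


@dataclass
class PlanningCapability:
    """Builds a plan by consulting the methodology capability.

    A real planner would involve an LLM; a lookup stands in for it here.
    """
    methodology: MethodologyCapability

    def plan(self, task_type: str) -> List[str]:
        return self.methodology.steps_for(task_type)


@dataclass
class ToolCapability:
    """Maps step names to callables that stand in for tool services."""
    tools: Dict[str, Callable[[dict], dict]] = field(default_factory=dict)

    def run(self, step: str, context: dict) -> dict:
        if step not in self.tools:
            raise KeyError(f"no tool available for step {step!r}")
        return self.tools[step](context)


def handle_request(task_type: str, context: dict,
                   planner: PlanningCapability, tools: ToolCapability) -> dict:
    """Run each planned step and merge its output into the shared context."""
    for step in planner.plan(task_type):
        context = {**context, **tools.run(step, context)}
    return context


methodology = MethodologyCapability(
    {"travel": ["find_attractions", "rank_attractions"]}
)
planner = PlanningCapability(methodology)
tools = ToolCapability({
    "find_attractions": lambda ctx: {"attractions": ["museum", "park"]},
    "rank_attractions": lambda ctx: {"top": sorted(ctx["attractions"])[0]},
})
result = handle_request("travel", {"city": "Example City"}, planner, tools)
```

Because each capability sits behind its own small interface, any one of them could in principle be replaced or updated without touching the others, which is the property the modular design aims for.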

Key Workflows

The CACA Agent employs a "Registration-Discovery-Invocation" framework borrowed from service computing to manage tool capabilities. Tool services are registered with the system, discovered and selected dynamically based on user needs, and then invoked. The workflow decomposes a task into structured steps and calls the appropriate tool service for each.

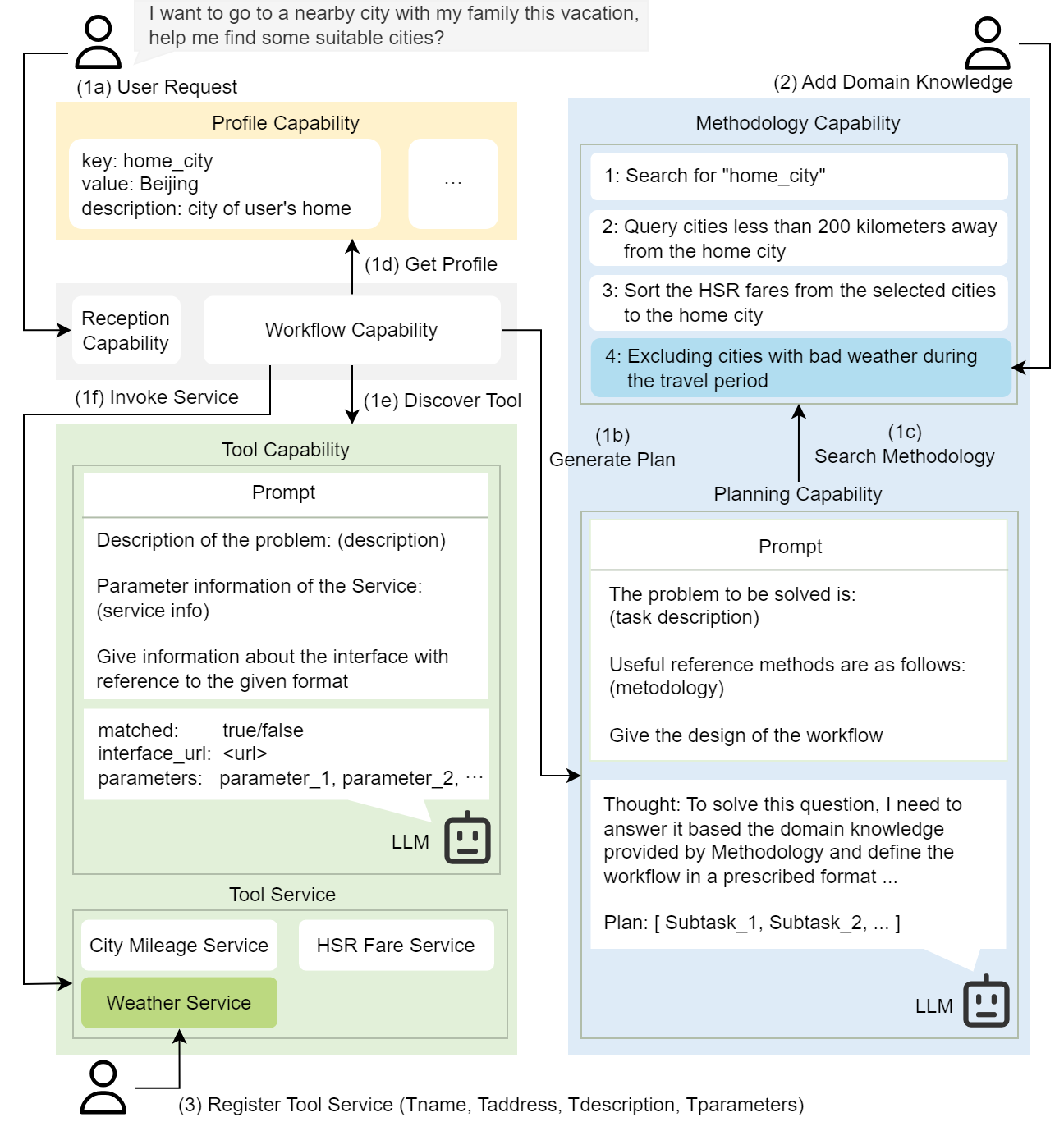

Figure 3: Workflow of CACA Agent demonstrated through a use-case scenario for travel recommendations.
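A minimal Python sketch of the Registration-Discovery-Invocation pattern follows. The `ToolService` and `ToolRegistry` names, the keyword-overlap matching, and the example tools are assumptions made for illustration; the paper's actual discovery mechanism may work differently (for example, by matching on service descriptions with a model).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ToolService:
    """A registered tool: metadata used for discovery plus a callable handler."""
    name: str
    description: str
    keywords: frozenset
    handler: Callable[..., object]


class ToolRegistry:
    """Hypothetical in-memory registry for tool services."""

    def __init__(self) -> None:
        self._services: Dict[str, ToolService] = {}

    def register(self, service: ToolService) -> None:
        # Registration: a provider publishes its tool under a unique name.
        self._services[service.name] = service

    def discover(self, need: str) -> List[ToolService]:
        # Discovery: rank tools by keyword overlap with the stated need.
        # Keyword overlap is a simple stand-in for semantic matching.
        words = set(need.lower().split())
        scored = [(len(words & s.keywords), s) for s in self._services.values()]
        matches = [(score, s) for score, s in scored if score > 0]
        matches.sort(key=lambda pair: (-pair[0], pair[1].name))
        return [s for _, s in matches]

    def invoke(self, name: str, **kwargs):
        # Invocation: call the selected tool with task-specific arguments.
        return self._services[name].handler(**kwargs)


registry = ToolRegistry()
registry.register(ToolService(
    name="attractions",
    description="List sights in a city",
    keywords=frozenset({"attractions", "sights", "travel"}),
    handler=lambda city: [f"{city} museum", f"{city} park"],
))

found = registry.discover("recommend travel attractions")
sights = registry.invoke(found[0].name, city="Rome")

# A tool added later by another provider becomes discoverable immediately,
# without any change to the code that performs discovery and invocation.
registry.register(ToolService(
    name="weather",
    description="Forecast for a city",
    keywords=frozenset({"weather", "forecast"}),
    handler=lambda city: {"city": city, "forecast": "sunny"},
))
```

The caller never hard-codes a tool name: it states a need, picks from the discovered candidates, and invokes the choice. That indirection is what lets new tools be adopted without modifying the agent itself.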

Demo

The demonstration covers three scenarios that showcase the CACA Agent's extensibility in planning and tool use. Scenario 1 illustrates the basic workflow, showing the interactions between planning capabilities and tool services. Scenario 2 shows how planning can be extended to incorporate contextual data such as weather conditions. Scenario 3 demonstrates swift adaptation to new tools introduced by external providers, so that the set of supported scenarios can expand dynamically.

Conclusion

The CACA Agent aims to simplify the deployment and extension of AI agents. Its service computing-inspired architecture separates functionality into modular capabilities, providing a platform for scalable and adaptable agent solutions. This modular design paves the way for integrating smaller, domain-specific LLMs, which could improve inference quality while keeping the system flexible. Work is underway to move to LLMs that can be deployed in CPU environments, to further improve practicality and resource utilization.