- The paper introduces VulEval, a comprehensive framework for evaluating vulnerabilities from function-level analysis to repository-level context.

- It builds a dataset of 4,196 CVE entries and 347,533 function dependencies and uses it to assess a range of detection methods.

- Experiments show that fine-tuning methods perform best at function-level detection, while adding repository context notably improves models like ChatGPT.

VulEval: Towards Repository-Level Evaluation of Software Vulnerability Detection

Introduction

The paper introduces VulEval, an evaluation framework for assessing vulnerability detection at both the intra-procedural and inter-procedural levels in software repositories. It is motivated by the rising incidence of software vulnerabilities and their real-world consequences, such as significant financial losses and security breaches.

Background and Objectives

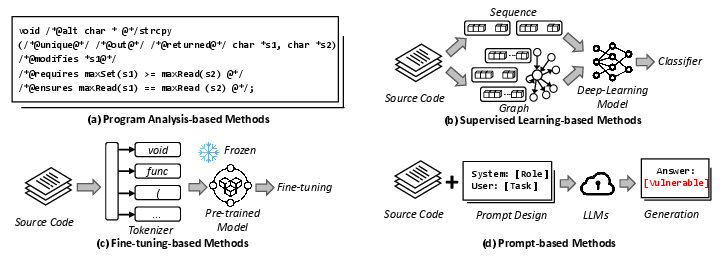

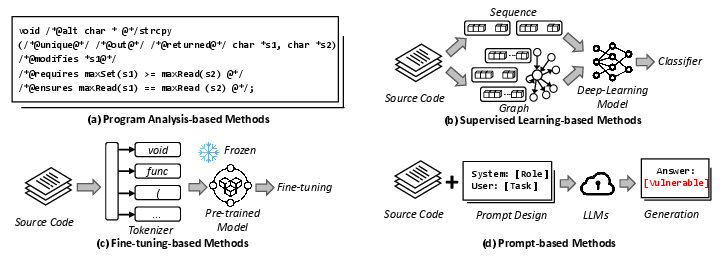

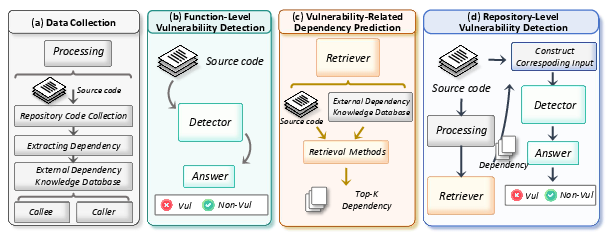

VulEval aims to bridge the gap between existing evaluation methods, which predominantly focus on function-level vulnerability detection, and real-world scenarios, where a vulnerability can span multiple files or an entire repository. The framework categorizes vulnerability detection methods into four major types: program analysis-based, supervised learning-based, fine-tuning-based, and prompt-based techniques (Figure 1).

Figure 1: The four types of vulnerability detection methods.

Framework Architecture

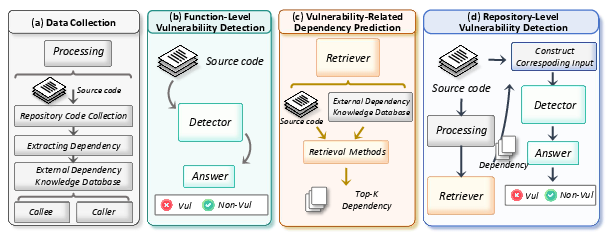

Data Collection

VulEval collects a large dataset of 4,196 CVE entries and 347,533 function dependencies, focusing on C/C++ code. It extracts repository-level source code and vulnerability-related dependencies using static analysis tools (Figure 2).

Evaluation Tasks

VulEval evaluates three interconnected tasks:

- Function-level Vulnerability Detection: This task focuses on determining if a code snippet is vulnerable based solely on its content.

- Vulnerability-Related Dependency Prediction: This task involves predicting which dependencies are relevant to the vulnerability, providing critical context for understanding the software's potential weaknesses.

- Repository-level Vulnerability Detection: This task integrates function-level predictions and dependency analysis to detect vulnerabilities that span multiple functions or files within a repository.

Figure 2: The overview of VulEval. Panels (a), (b), (c), and (d) show data collection, function-level vulnerability detection, vulnerability-related dependency prediction, and repository-level vulnerability detection, respectively.
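To make the relationship between the three tasks concrete, the following is a minimal sketch of how dependency prediction can feed repository-level detection. It is not the paper's implementation: the names `predict_dependencies`, `detect_repo_level`, `toy_scorer`, and `toy_detector` are hypothetical, and the scorer and detector are deliberately simple stand-ins for a real retriever and a real model.

```python
import re
from typing import Callable, Dict, List

# Hypothetical interfaces (assumptions, not from the paper):
Scorer = Callable[[str, str], float]  # (target code, dependency code) -> relevance
Detector = Callable[[str], bool]      # code text -> flagged as vulnerable?


def predict_dependencies(target: str, deps: Dict[str, str],
                         scorer: Scorer, k: int = 2) -> List[str]:
    """Task 2 stand-in: rank candidate dependencies and keep the top k names."""
    ranked = sorted(deps, key=lambda name: scorer(target, deps[name]), reverse=True)
    return ranked[:k]


def detect_repo_level(target: str, deps: Dict[str, str], scorer: Scorer,
                      detector: Detector, k: int = 2) -> bool:
    """Task 3 stand-in: run the detector on the target plus retrieved context."""
    top = predict_dependencies(target, deps, scorer, k)
    context = "\n\n".join(deps[name] for name in top)
    return detector(context + "\n\n" + target)


def toy_scorer(target: str, dep: str) -> float:
    """Toy relevance: number of identifiers shared by the two snippets."""
    ids = lambda code: set(re.findall(r"[A-Za-z_]\w*", code))
    return float(len(ids(target) & ids(dep)))


def toy_detector(code: str) -> bool:
    """Toy rule (not a real detector): flags code that both frees and dereferences."""
    return "free(" in code and "->" in code


TARGET = "void use(buf *b) { release(b); b->len = 0; }"
DEPS = {
    "release": "void release(buf *b) { free(b); }",
    "log_msg": "void log_msg(const char *m) { puts(m); }",
}
```

With these toy inputs, `toy_detector(TARGET)` sees only the target function and misses the problem, while `detect_repo_level(TARGET, DEPS, toy_scorer, toy_detector, k=1)` also sees the body of `release` and flags it. This mirrors the motivation for the repository-level task, under the stated simplifications.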

Experimental Setup and Results

The experiments compare the detection methods under two data-split settings: a random split and a time-split. The time-split setting is meant to simulate real-world use, where a detector built from earlier data is applied to later code.
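The difference between the two settings can be illustrated with a short sketch. The `Sample` record, the 80/20 ratio, and the use of a commit date as the ordering key are assumptions made for illustration; the summary does not state the paper's exact split procedure.

```python
import random
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple


@dataclass
class Sample:
    """Hypothetical record: one function with its commit date and label."""
    func_id: str
    commit_date: date
    label: int  # 1 = vulnerable, 0 = non-vulnerable


def random_split(samples: List[Sample], train_ratio: float = 0.8,
                 seed: int = 0) -> Tuple[List[Sample], List[Sample]]:
    """Shuffle, then cut: train and test may mix old and new samples."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]


def time_split(samples: List[Sample],
               train_ratio: float = 0.8) -> Tuple[List[Sample], List[Sample]]:
    """Sort by date, then cut: every test sample is at least as new as training."""
    ordered = sorted(samples, key=lambda s: s.commit_date)
    cut = int(len(ordered) * train_ratio)
    return ordered[:cut], ordered[cut:]
```

In the time-split variant the model never sees samples newer than its test data, which is why it is the harder and more realistic of the two settings.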

Key Findings

- Effectiveness of Fine-Tuning Methods: Fine-tuning-based methods generally achieve the best results at function-level detection. However, their performance drops in the time-split setting, which is attributed to their reliance on historical data.

- Dependency Prediction: Lexical-based methods outperform semantic methods in identifying vulnerability-relevant dependencies, suggesting the need for more sophisticated retrieval techniques.

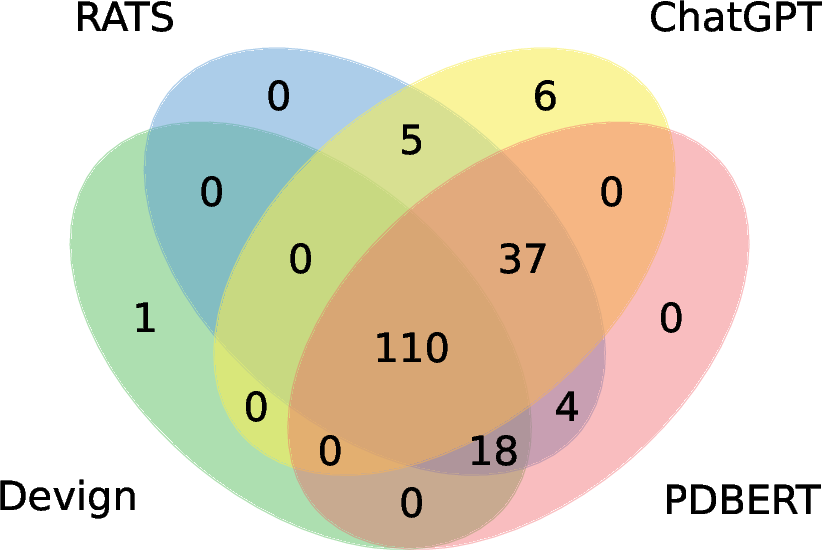

- Repository-Level Enhancement: Adding repository-level context improves detection, particularly for models like ChatGPT, which benefit significantly from broader contextual information.

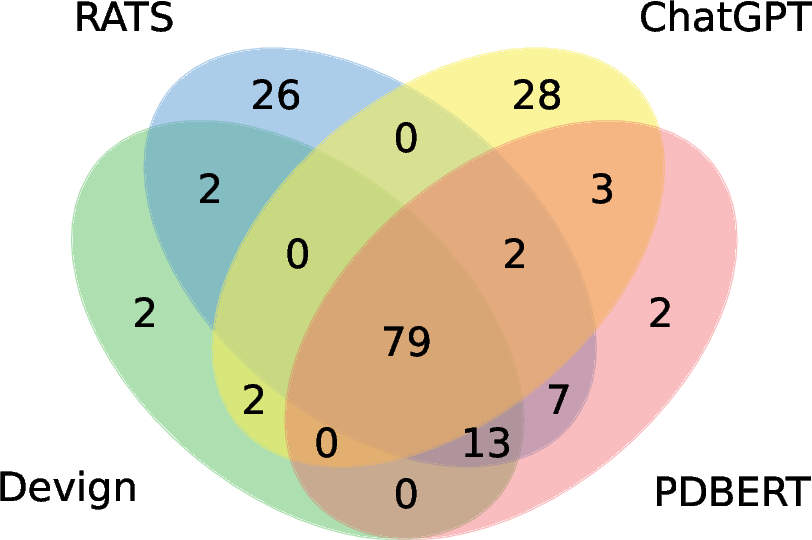

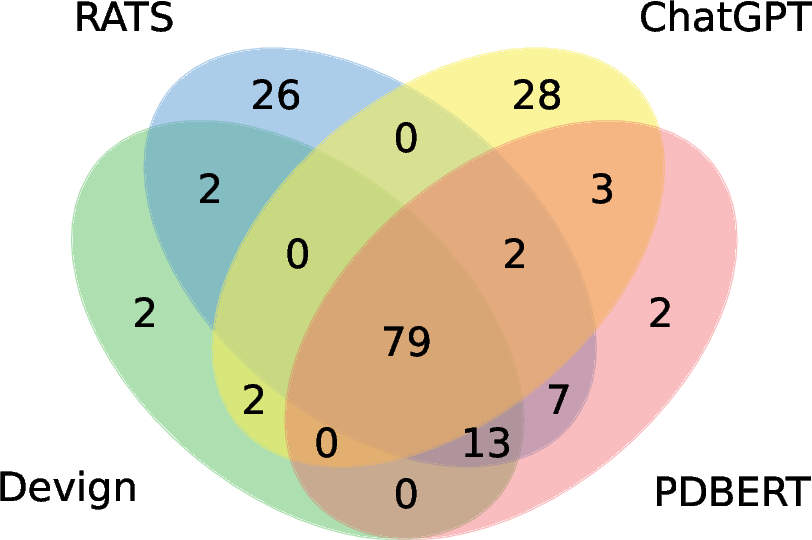

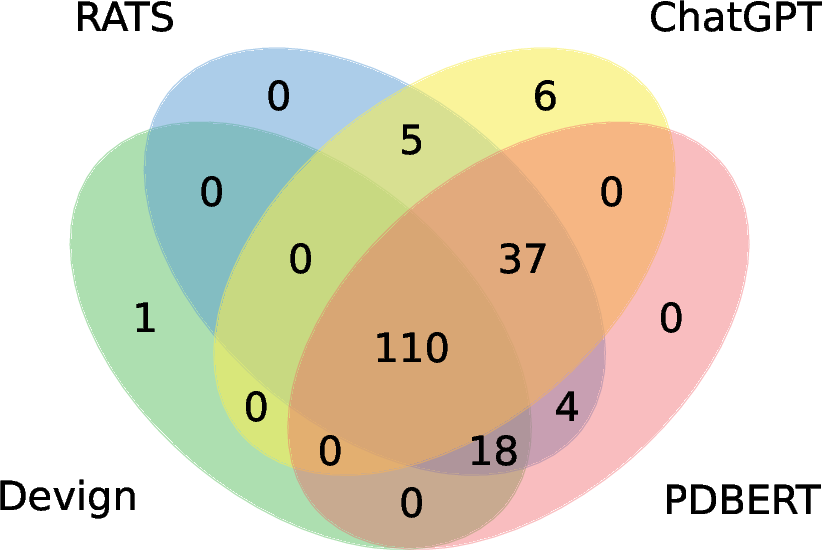

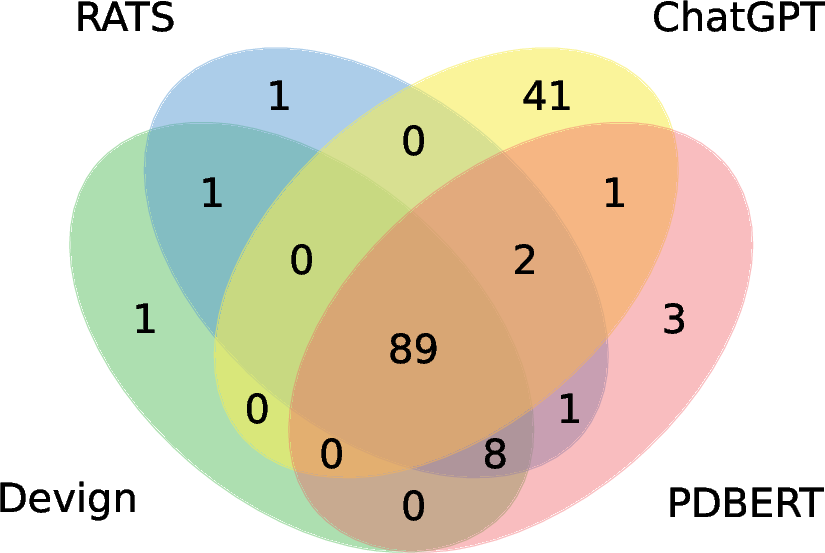

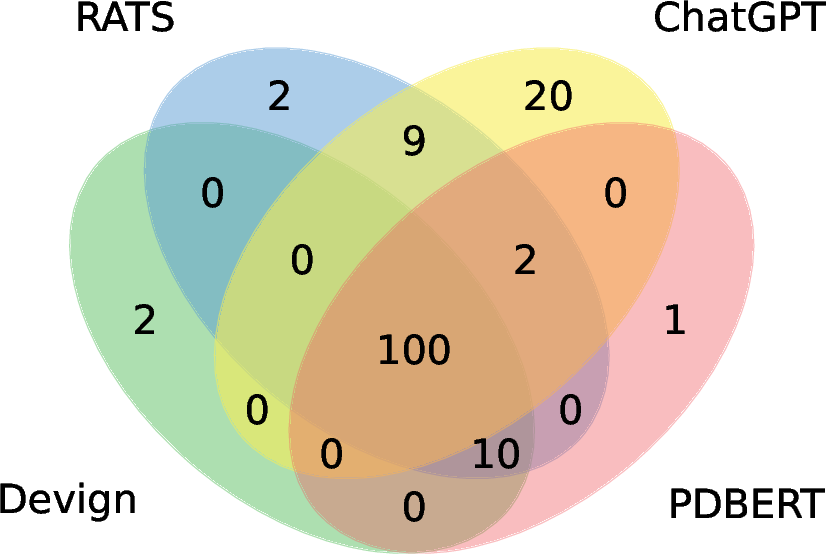

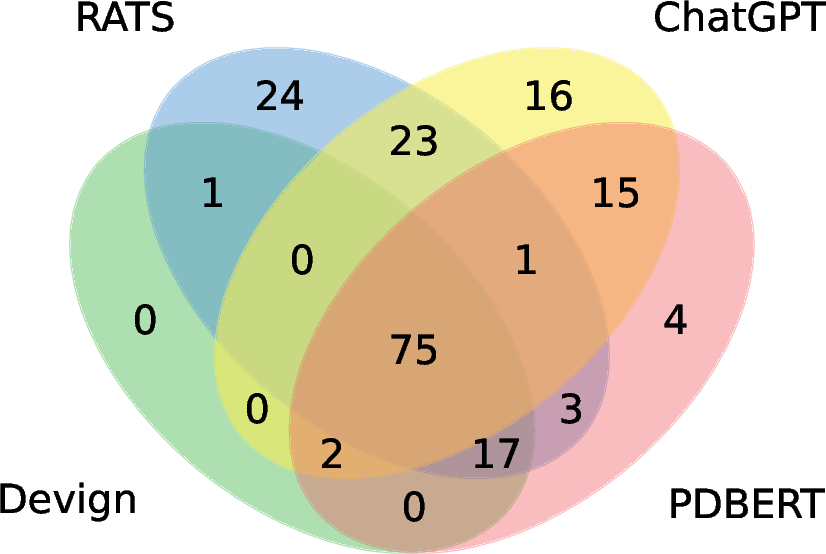

- Specific CWE Vulnerability Detection: The study highlights the strong performance of models like ChatGPT on specific CWE vulnerability types, which it links to their capacity to process extensive contextual information (Figure 3).

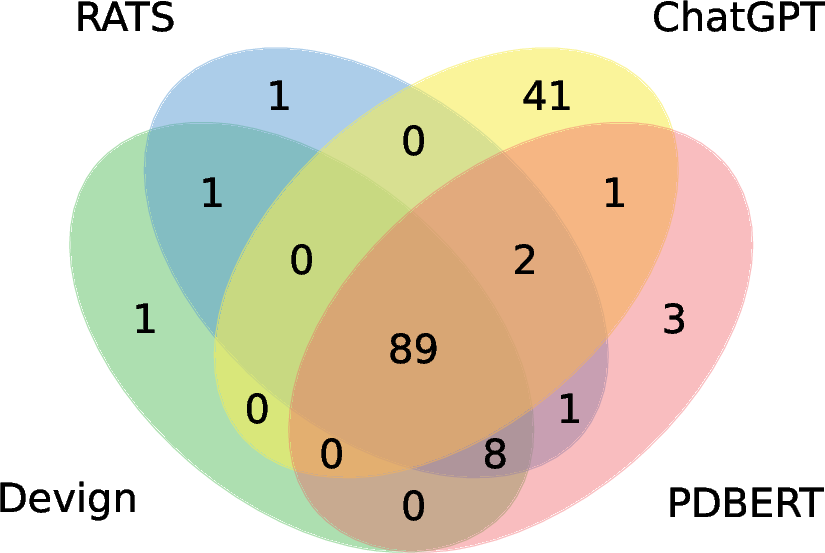

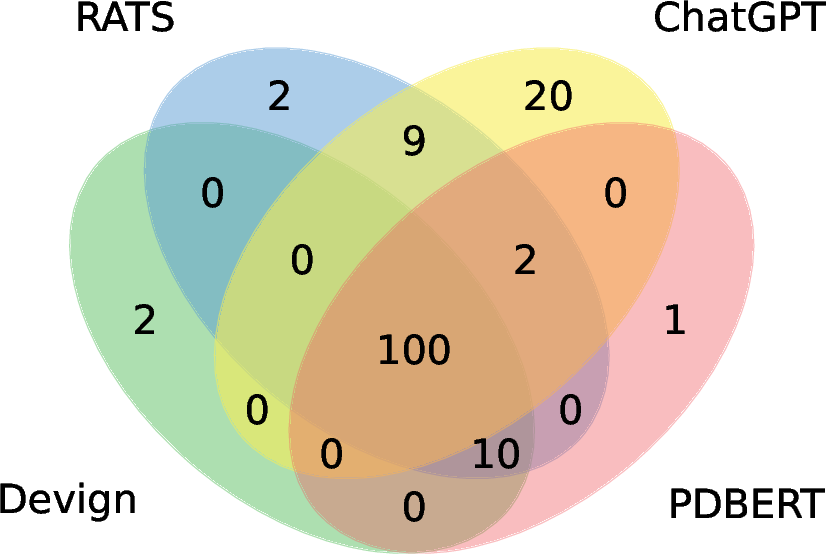

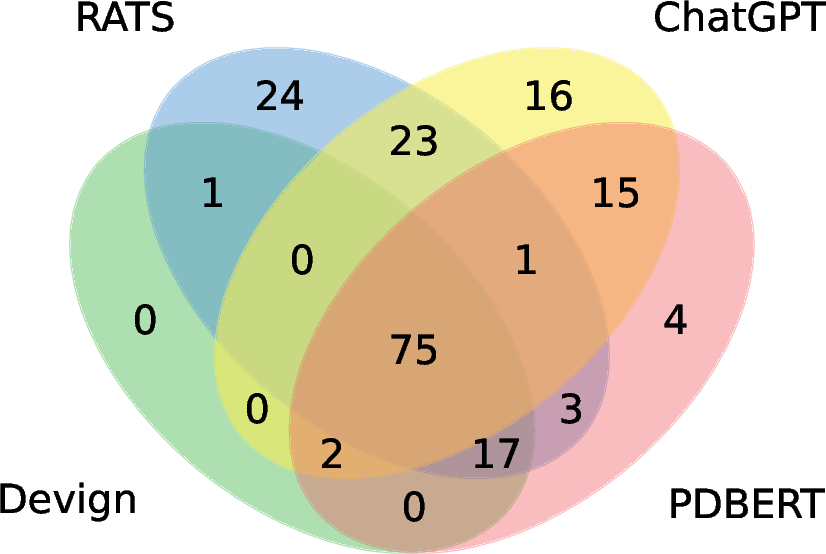

Figure 3: The experimental results of several vulnerability types, including CWE-190, CWE-400, CWE-415, CWE-416, and CWE-787. The green, blue, red, and yellow circles denote the results of Devign, RATS, PDBERT, and ChatGPT, respectively.
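The dependency-prediction finding above contrasts lexical and semantic retrieval. As an illustration of what a lexical method looks like, here is a minimal sketch that ranks candidate dependencies by Jaccard similarity over identifier tokens and scores the ranking with recall at k. The choice of Jaccard, the tokenization, and the function names are assumptions for illustration; the summary does not specify which lexical methods or metrics the paper uses.

```python
import re
from typing import Dict, List, Set


def tokens(code: str) -> Set[str]:
    """Identifier-like tokens in a code snippet (a simple, assumed tokenizer)."""
    return set(re.findall(r"[A-Za-z_]\w*", code))


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity: shared tokens divided by all distinct tokens."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def rank_by_jaccard(target: str, candidates: Dict[str, str]) -> List[str]:
    """Order candidate dependency names from most to least similar to the target."""
    return sorted(candidates, key=lambda name: jaccard(target, candidates[name]),
                  reverse=True)


def recall_at_k(ranked: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the truly relevant dependencies found in the top k."""
    if not relevant:
        return 0.0
    hits = sum(1 for name in ranked[:k] if name in relevant)
    return hits / len(relevant)
```

A lexical scorer like this needs no training and matches on shared names, which is one plausible reason it can compete with embedding-based retrieval when callers and callees reuse identifiers.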

Discussion

VulEval's results point toward vulnerability detection systems that are more context-aware, particularly at the repository level. The paper suggests future research directions, such as improving retrieval techniques for dependency prediction and leveraging LLMs for specific vulnerability types.

Conclusion

VulEval serves as a pioneering framework for a comprehensive evaluation of software vulnerability detection, emphasizing the importance of repository-level insights. Future work will continue to enhance the framework's capabilities, focusing on more effective dependency identification and integration into holistic detection strategies.