- The paper introduces FixAgent, a novel framework leveraging LLM multi-agent synergy to address challenges in automated debugging.

- It uses specialized agents for fault localization, patch generation, and post-error analysis, enhanced by intermediate variable tracking and program context construction.

- Experimental results on datasets like QuixBugs and Codeflaws show FixAgent outperforms traditional methods in accurate bug fixing and patch generation.

A Unified Debugging Approach via LLM-Based Multi-Agent Synergy

Introduction

The paper "A Unified Debugging Approach via LLM-Based Multi-Agent Synergy" examines how large language models (LLMs) can address the challenges of automated software debugging. Traditional debugging tools struggle with accurate fault localization, complex logic errors, and missing program context. The paper presents FixAgent, a framework in which multiple specialized LLM agents work together on these issues.

Challenges in Automated Debugging

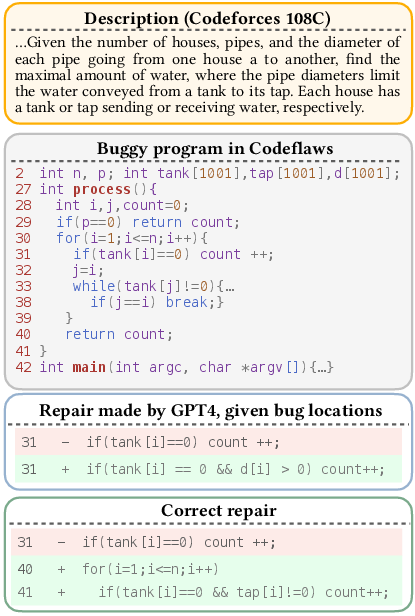

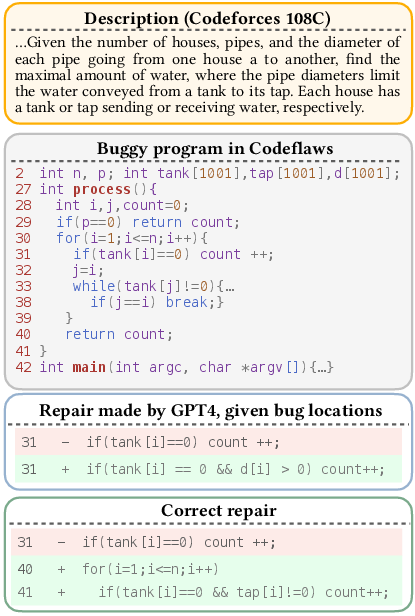

Automated debugging typically involves two stages: fault localization (FL) and automated program repair (APR). Traditional methods are held back by imperfect FL, because the repair stage inherits any error in the reported fault location (Figure 1).

Figure 1: Complex bug fixing is still challenging for LLMs.

LLM-based tools have shown promise but still face significant obstacles, such as handling intricate logic errors and overlooking important program context (Figure 2).

Figure 2: The repair made by an APR tool (also regarded as correct in the dataset) ignores the variable scope requirement.

FixAgent's Methodology

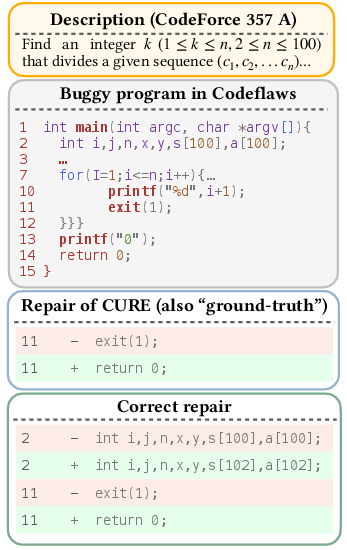

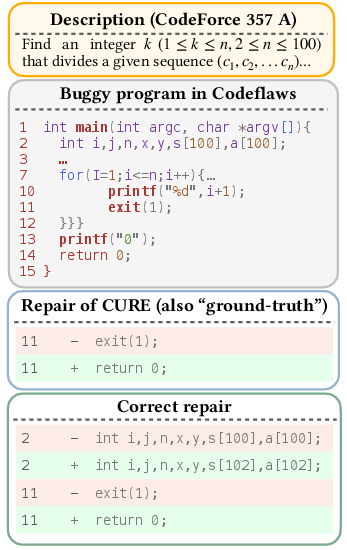

FixAgent addresses these challenges through LLM-based multi-agent synergy. Its design has three main components: specialized agent synergy, intermediate variable tracking, and program context construction.

Specialized Agent Synergy

FixAgent employs three LLM agents, each dedicated to a specific stage of debugging: fault localization, patch generation, and post-error analysis. Each agent is required to explain its reasoning in detail, in the manner of "rubber duck debugging," which improves program comprehension and repair.
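The division of labor among the three agents can be sketched as a small loop. This is only an illustration under stated assumptions, not FixAgent's implementation: the `LLM` callable, the prompt wording, the `passes_tests` check, and the retry loop driven by post-error analysis are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical type: any function that maps a prompt string to a model reply.
LLM = Callable[[str], str]


@dataclass
class DebugResult:
    patch: Optional[str]   # accepted patch, or None if every round failed
    rounds: int            # number of rounds that were run
    notes: List[str]       # post-error analyses collected along the way


def debug(code: str, llm: LLM, passes_tests: Callable[[str], bool],
          max_rounds: int = 3) -> DebugResult:
    """Run localize -> repair -> (on failure) analyze, feeding analysis back in."""
    notes: List[str] = []
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        # Agent 1: fault localization, with an explanation of the code.
        location = llm(
            "ROLE: localizer\nExplain the code step by step and name the "
            f"faulty line.\n{feedback}\nCODE:\n{code}"
        )
        # Agent 2: patch generation, given the suspected location.
        patch = llm(
            f"ROLE: repairer\nSuspected fault: {location}\nExplain the fix, "
            f"then output the patched code.\n{feedback}\nCODE:\n{code}"
        )
        if passes_tests(patch):
            return DebugResult(patch, round_no, notes)
        # Agent 3: post-error analysis of the failed patch.
        analysis = llm(
            "ROLE: analyzer\nThe patch failed its tests. Explain why.\n"
            f"PATCH:\n{patch}"
        )
        notes.append(analysis)
        feedback = f"Previous attempt failed: {analysis}"
    return DebugResult(None, max_rounds, notes)
```

Passing the model in as a plain callable keeps the sketch independent of any particular LLM client, and makes it easy to exercise with a scripted stand-in.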

Figure 3: Overview of FixAgent.

Intermediate Variable Tracking

Agents are prompted to track the values of critical variables, especially those that strongly affect program logic. Comparing the tracked values with the expected ones exposes discrepancies and supports precise error diagnosis, mirroring how human developers debug.
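In the summary above, the agents do this tracking in their own reasoning. To make concrete what a variable trace looks like, the sketch below records one mechanically with Python's `sys.settrace`. It is an illustration only, not the paper's mechanism; `trace_variables` and `buggy_sum_to` are hypothetical names, and the off-by-one bug is invented for the example.

```python
import sys


def trace_variables(func, *args):
    """Run func(*args), recording local variables before each executed line."""
    snapshots = []

    def tracer(frame, event, arg):
        # Only follow the function under inspection, not its callees.
        if frame.f_code is not func.__code__:
            return None
        if event == "line":
            offset = frame.f_lineno - func.__code__.co_firstlineno
            snapshots.append((offset, dict(frame.f_locals)))
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        result = func(*args)
    finally:
        sys.settrace(previous)  # restore whatever tracer was active before
    return result, snapshots


def buggy_sum_to(n):
    """Intended to return 1 + 2 + ... + n."""
    total = 0
    for i in range(n):  # bug: should be range(1, n + 1)
        total += i
    return total


if __name__ == "__main__":
    result, snapshots = trace_variables(buggy_sum_to, 3)
    for offset, local_vars in snapshots:
        print(offset, local_vars)
    print("result:", result)
```

For `n = 3`, the trace shows that `i` never reaches 3, which points directly at the loop bounds as the source of the wrong total.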

Program Context Construction

By constructing a detailed context, including specifications and code dependencies, FixAgent ensures a comprehensive understanding of the program's intended behavior. This enables agents to make informed, context-aware repairs.
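One simple way to picture context construction is as assembling a single prompt from the specification, the code the buggy program depends on, and the buggy code itself. The sketch below assumes that layout; `ProgramContext`, `build_prompt`, and the section headings are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ProgramContext:
    """Hypothetical container for what an agent sees besides the buggy code."""
    specification: str
    # Maps a dependency name to the source code of that helper.
    dependencies: Dict[str, str] = field(default_factory=dict)


def build_prompt(buggy_code: str, ctx: ProgramContext) -> str:
    """Place the specification and dependencies ahead of the buggy code."""
    parts = ["## Specification", ctx.specification.strip()]
    for name, source in ctx.dependencies.items():
        parts += [f"## Dependency: {name}", source.strip()]
    parts += ["## Buggy code", buggy_code.strip()]
    return "\n\n".join(parts)


if __name__ == "__main__":
    ctx = ProgramContext(
        specification="Return the sum of the integers from 1 to n.",
        dependencies={"clamp": "def clamp(x):\n    return max(0, x)"},
    )
    print(build_prompt("def sum_to(n):\n    return sum(range(n))", ctx))
```

Putting the specification first gives the agent the intended behavior before it reads the code, so a repair can be checked against what the program is supposed to do rather than only against what it currently does.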

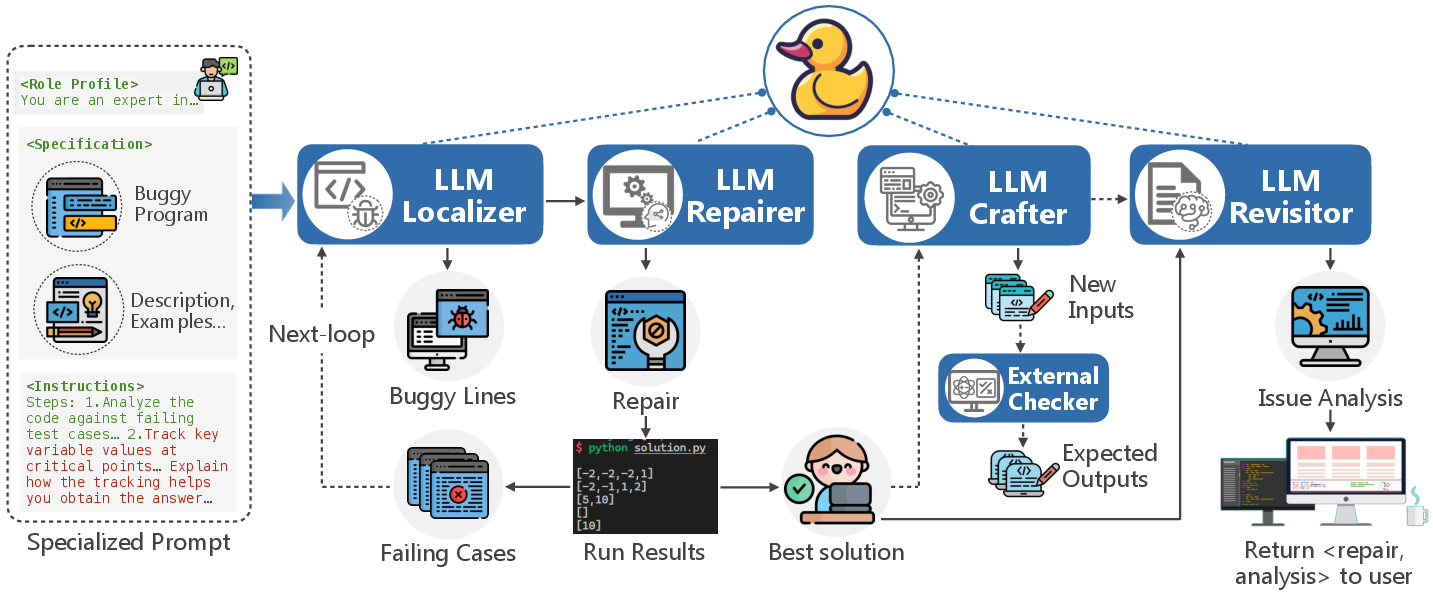

Experimental Results

Evaluations on datasets such as QuixBugs and Codeflaws showed that FixAgent generates correct patches without being given fault locations in advance. It plausibly patched 2780 out of 3982 bugs (about 70%), where a plausible patch is one that passes all available tests, vastly outperforming existing methods.

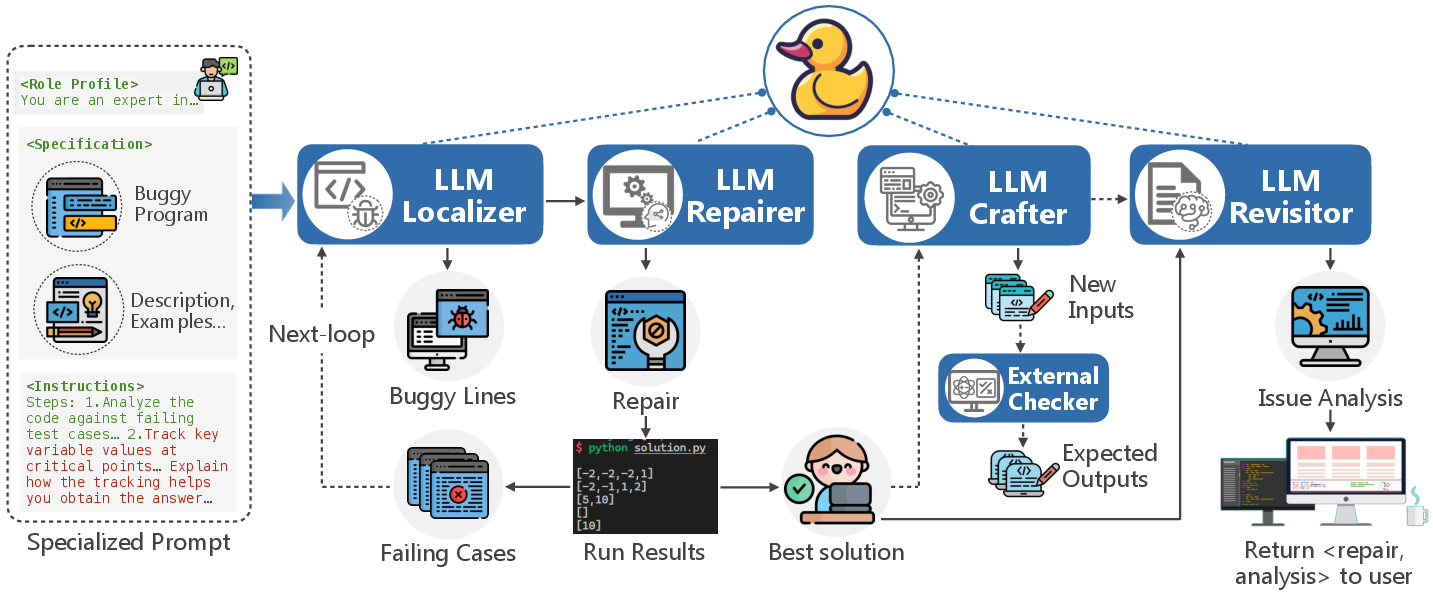

Figure 4: Example of a bug fixed by FixAgent in QuixBugs.

Implications and Future Directions

FixAgent illustrates LLMs' potential when guided by structured prompts and specialized roles, significantly improving debugging efficiency. Future research can extend this approach to other complex programming challenges, leveraging LLMs' advanced natural language capabilities for software engineering tasks.

Conclusion

This paper introduces FixAgent, a novel approach to automated debugging using LLM-based multi-agent synergy. By adopting elements of human debugging practices, FixAgent addresses critical challenges in software debugging, offering significant improvements over traditional methods. Its framework establishes a promising direction for integrating AI into software development processes.