- The paper outlines the primary causes of hallucination in multimodal LLMs, emphasizing data quality, model architecture, and inference challenges.

- The paper details evaluation metrics and benchmarks such as CHAIR, POPE, AMBER, and HallusionBench to quantify hallucination effectively.

- The paper discusses mitigation strategies including data enhancement, improved cross-modal alignment, and inference adjustments to ensure robust outputs.

Hallucination of Multimodal LLMs: A Survey

Introduction

The phenomenon of hallucination in multimodal LLMs (MLLMs), particularly in Large Vision-LLMs (LVLMs), has emerged as a significant concern, impacting their deployment and reliability in real-world applications. Despite their advanced capabilities in tasks such as image captioning and visual question answering, MLLMs exhibit a propensity to generate outputs misaligned with the provided visual content, termed hallucination. This paper provides an extensive survey of recent advancements in identifying, evaluating, and mitigating hallucinations in MLLMs to enhance their robustness and reliability.

Defining Hallucination in MLLMs

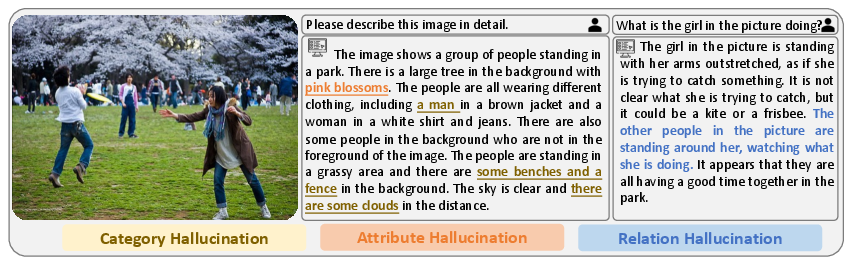

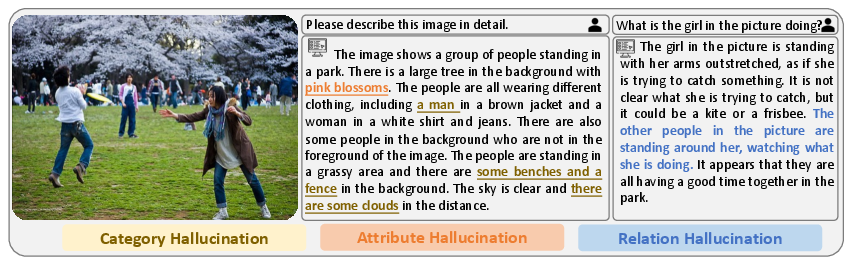

Hallucination in MLLMs refers to the discrepancy between text generated by the model and the visual content it is supposed to describe. This phenomenon can manifest as factuality hallucination, which involves discrepancies between the model output and real-world facts, or as faithfulness hallucination, where the output diverges from user instructions or context. In MLLMs, the focus is primarily on cross-modal inconsistencies, notably object hallucinations, which are further categorized into incorrect identification of object categories, attributes, and relations.

Figure 1: Three types of typical hallucination.

Causes of Hallucination

Hallucination in MLLMs stems from various factors during data acquisition, model training, and inference:

- Data-Related Causes: Hallucinations can arise due to insufficient data, low-quality data which may include noise, and a lack of diversity in training datasets, contributing to biases. The frequent co-occurrence of certain objects within datasets also exacerbates hallucination issues.

- Model Architecture and Training: The architecture of MLLMs, often built with separate pre-trained vision and LLMs linked by a cross-modal interface, can lead to hallucinations if the interface alignment is inadequate. Training strategies such as auto-regressive next token prediction, commonly used in LLMs, are insufficient for MLLMs due to their complex spatial data structures.

- Inference Issues: During inference, the dilution of visual attention over long sequence generation can result in outputs that are less grounded in visual reality, contributing to hallucination.

Evaluation Metrics and Benchmarks

Assessing hallucination requires meticulous metrics and benchmarks. These include CHAIR for measuring hallucinations in captions and POPE which evaluates object hallucination in a binary manner. The use of LLMs like GPT-4 for assessing generated responses is also common, though it involves substantial computational costs. Newer benchmarks like AMBER and HallusionBench offer more nuanced evaluations by integrating vision-based and textual assessments.

Mitigation Strategies

To address hallucinations, diverse strategies are employed:

- Data-Centric Mitigations: Enhancing data quality and diversity through counterfactual data generation and noise reduction processes are crucial approaches.

- Model Enhancements: Improving cross-modal alignment through enhanced model architectures and scaling up the visual resolution of encoders help reduce hallucinations.

- Training Adjustments: Incorporating additional learning objectives such as auxiliary supervision and applying reinforcement learning techniques offer pathways to reduce hallucination.

- Inference Adjustments: Techniques such as contrastive decoding and guided sentence generation refine output generations to improve consistency with visual inputs.

Future Directions

Future research directions include improving data acquisition strategies, enhancing model architectures for better cross-modal alignment, and developing standardized benchmarks for consistent evaluation. Moreover, leveraging hallucination capabilities tactically as a feature rather than strictly a flaw, could uncover creative applications for MLLMs beyond current use cases.

Conclusion

This survey underscores the challenges hallucination poses to the deployment and trustworthiness of MLLMs. By exploring its causes, evaluation methods, and mitigation strategies, we aim to inspire further research for more reliable and robust multimodal systems. Such advances would significantly contribute to the practical and ethical deployment of these sophisticated AI models in real-world scenarios.