Believing Anthropomorphism: Examining the Role of Anthropomorphic Cues on Trust in Large Language Models

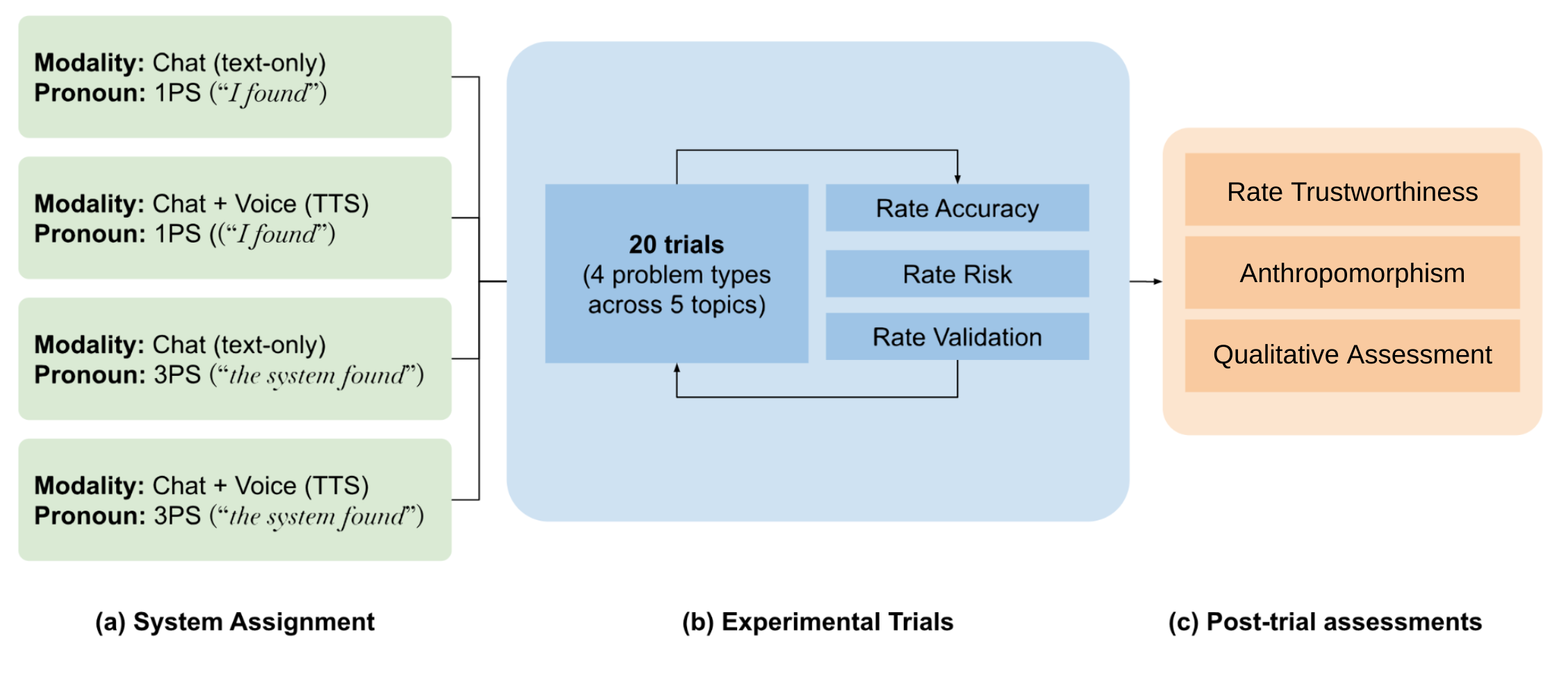

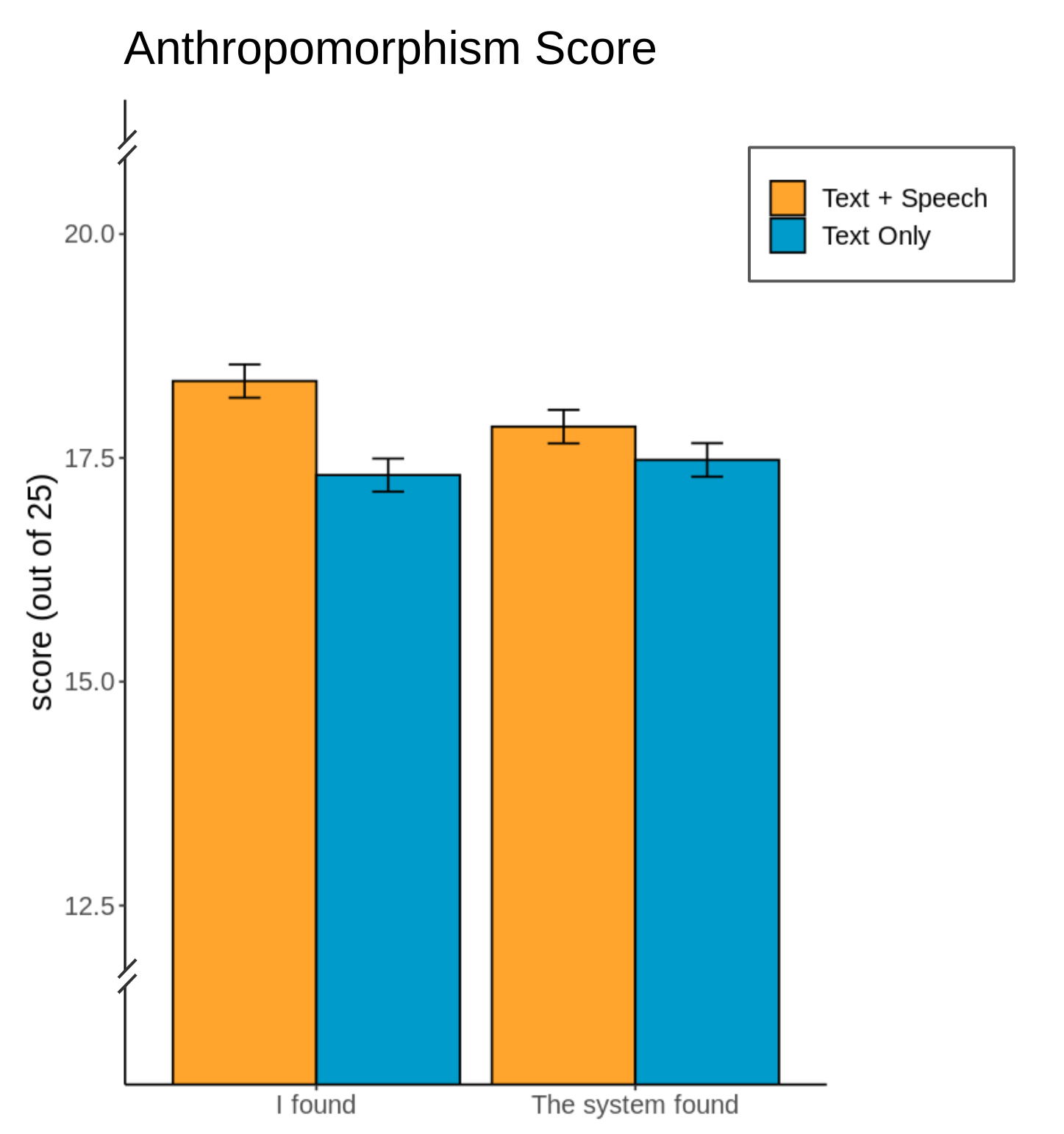

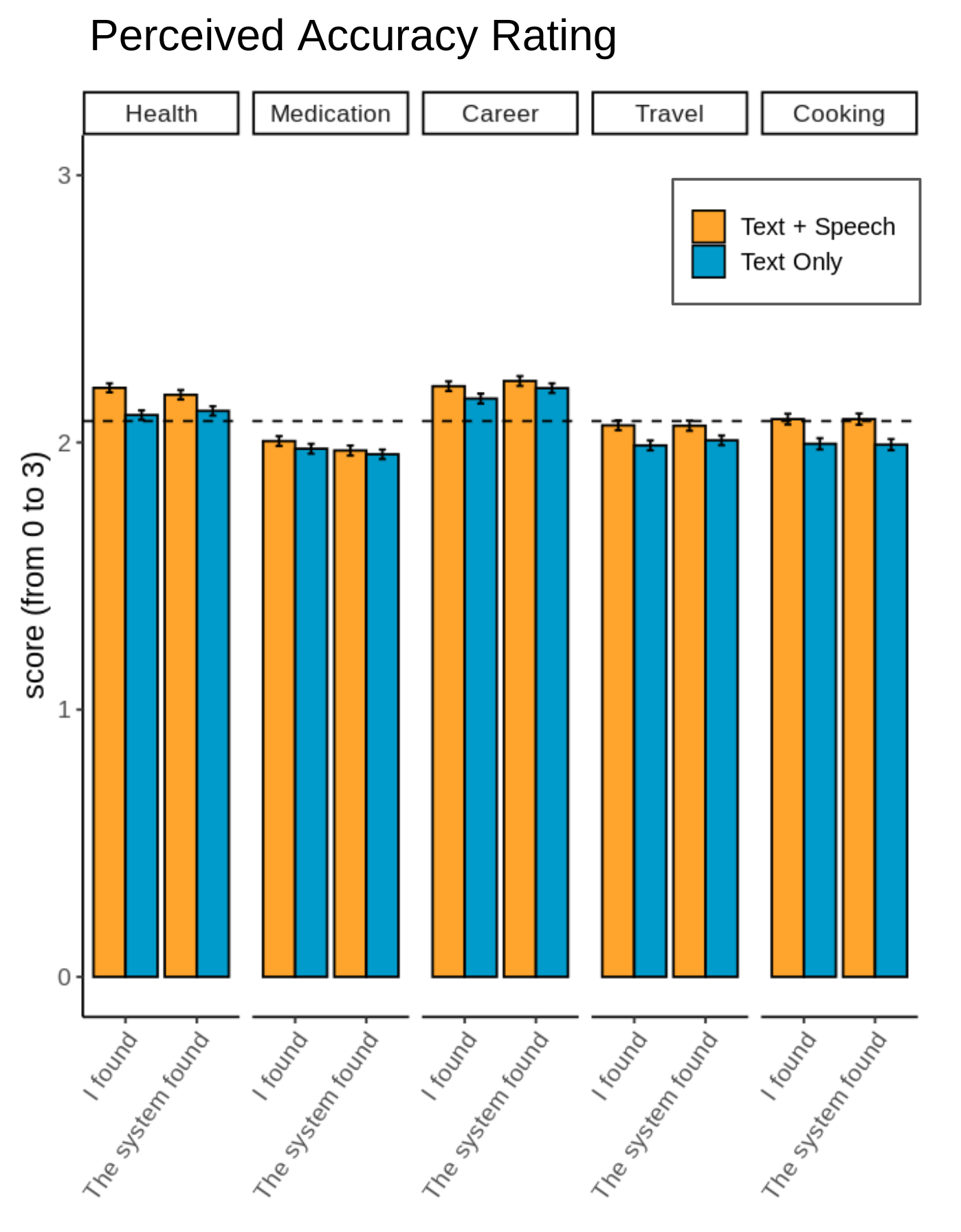

Abstract: People now regularly interact with large language models (LLMs) through speech and text interfaces (e.g., Bard). However, little is known about the relationship between how users anthropomorphize an LLM system (i.e., ascribe human-like characteristics to it) and how much they trust the information it provides. Participants (n = 2,165; aged 18–90; from the United States) completed an online experiment in which they interacted with a pseudo-LLM whose responses varied in modality (text only vs. speech + text) and grammatical person ("I" vs. "the system"). Results showed that the "speech + text" condition led to higher anthropomorphism of the system overall, as well as higher ratings of the accuracy of the information the system provided. Additionally, the first-person pronoun ("I") led to higher information-accuracy ratings and lower risk ratings, but only in one context. We discuss the implications of these findings for the design of responsible, human-generative AI experiences.
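The two manipulated factors can be illustrated with a minimal, hypothetical sketch. The response template, the example answer, the function name `render_response`, and the assumption that modality and grammatical person were fully crossed into four conditions are ours for illustration; they are not the study's actual stimuli or procedure. The point is only to show how the grammatical-person manipulation changes the subject (and verb agreement) of an otherwise identical response.

```python
from itertools import product

# The two manipulated factors named in the abstract.
MODALITIES = ("text only", "speech + text")
PERSONS = ("first", "third")  # "I" vs. "the system"

# Assumption: the factors are fully crossed (2 x 2 = 4 conditions).
CONDITIONS = list(product(MODALITIES, PERSONS))


def render_response(person: str, answer: str) -> str:
    """Render a hypothetical response in first ("I") or third ("The system") person."""
    if person == "first":
        subject, verb = "I", "believe"
    elif person == "third":
        subject, verb = "The system", "believes"  # third-person verb agreement
    else:
        raise ValueError(f"unknown grammatical person: {person!r}")
    return f"{subject} {verb} the answer is {answer}."


if __name__ == "__main__":
    # Modality would only change delivery (text, or text plus synthesized speech),
    # so the rendered wording depends on grammatical person alone.
    for modality, person in CONDITIONS:
        print(f"[{modality} | {person} person] {render_response(person, 'Paris')}")
```

In this sketch, the wording is held constant across modality conditions so that any difference between the "I" and "the system" versions comes only from the grammatical subject.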
