LLM-for-X: Application-agnostic Integration of Large Language Models to Support Personal Writing Workflows

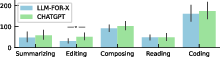

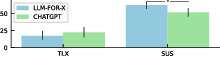

Abstract: To enhance productivity and streamline workflows, LLM functionality is increasingly being embedded into applications, from browser-based web apps to native apps that run on personal computers. Here, we introduce LLM-for-X, a system-wide shortcut layer that augments any application with LLM services through a lightweight popup dialog. Our native layer connects front-end applications to popular LLM backends, such as ChatGPT and Gemini, using either their uniform chat front-ends as the programming interface or their custom API calls. We demonstrate the benefits of LLM-for-X across a wide variety of applications, including Microsoft Office, VSCode, and Adobe Acrobat, as well as popular web apps such as Overleaf. In our evaluation, we compared LLM-for-X with ChatGPT's web interface in a series of tasks, showing that our approach can provide users with quick, efficient, and easy-to-use LLM assistance for writing and reading tasks, without context switching and independent of the specific application.
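The layering described in the abstract (text from any application, a user command from the popup, and a pluggable LLM backend) can be illustrated with a minimal sketch. This is not the authors' implementation: `ShortcutLayer`, `build_prompt`, `run`, and `echo_backend` are hypothetical names introduced only for illustration, and the placeholder backend makes no network call; a real backend would wrap a chat front-end or an API client.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# Hypothetical type: a backend maps a prompt string to the reply text.
Backend = Callable[[str], str]


@dataclass
class ShortcutLayer:
    """Illustrative stand-in for an application-agnostic LLM layer."""

    backends: Dict[str, Backend]
    default: str

    def build_prompt(self, command: str, selection: str) -> str:
        # Combine the user's instruction with the text selected in the host app.
        return f"{command.strip()}\n\n---\n{selection.strip()}"

    def run(self, command: str, selection: str, backend: Optional[str] = None) -> str:
        # Dispatch to the requested backend, or to the default one.
        name = backend or self.default
        if name not in self.backends:
            raise KeyError(f"unknown backend: {name}")
        return self.backends[name](self.build_prompt(command, selection))


def echo_backend(prompt: str) -> str:
    # Placeholder backend (assumption): upper-cases the prompt instead of calling an LLM.
    return prompt.upper()


layer = ShortcutLayer(backends={"echo": echo_backend}, default="echo")
result = layer.run("Summarize:", "LLM-for-X adds a popup dialog.")
print(result)
```

Because the host application only supplies the selected text and receives a string back, the same layer can sit in front of any editor; swapping backends means registering another callable under a new name.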
