- The paper introduces a low-code interface that transforms user inputs into structured flowcharts for LLM interactions.

- It employs a Planning LLM to generate editable workflows, reducing the need for complex prompt engineering.

- Experimental results demonstrate enhanced user control and satisfaction in tasks like content generation and software development.

Low-code LLM: Graphical User Interface over LLMs

The paper introduces Low-code LLM, a novel framework that improves human interaction with LLMs through a low-code graphical user interface. This approach aims to enhance controllability and responsiveness of LLMs, enabling users to visually manipulate workflows without intricate prompt engineering.

Human-LLM Interaction and Workflow

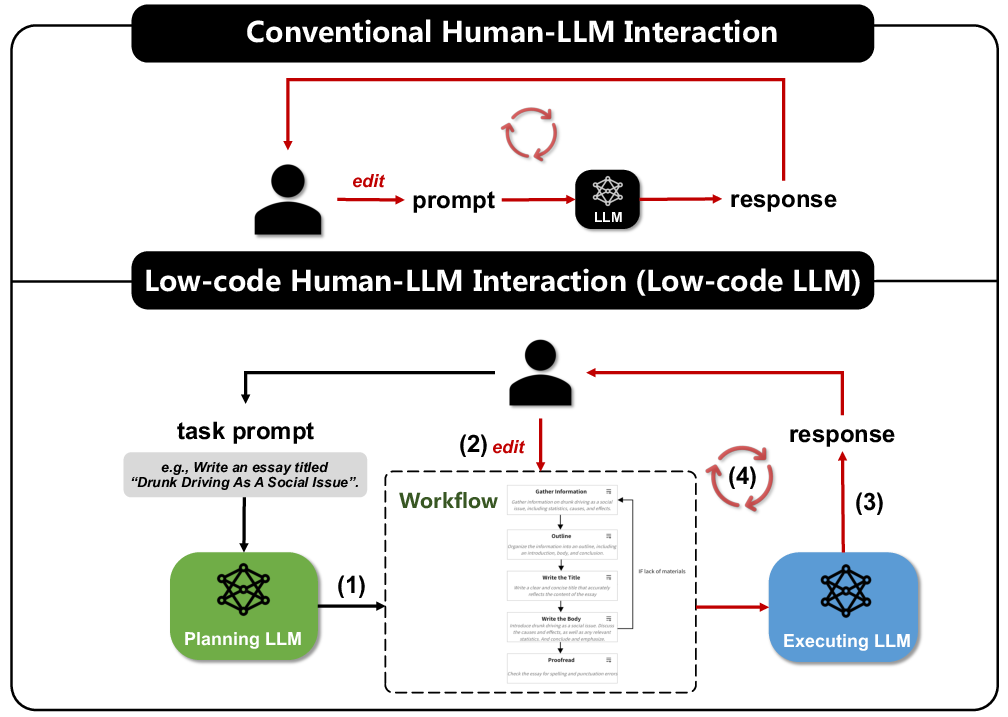

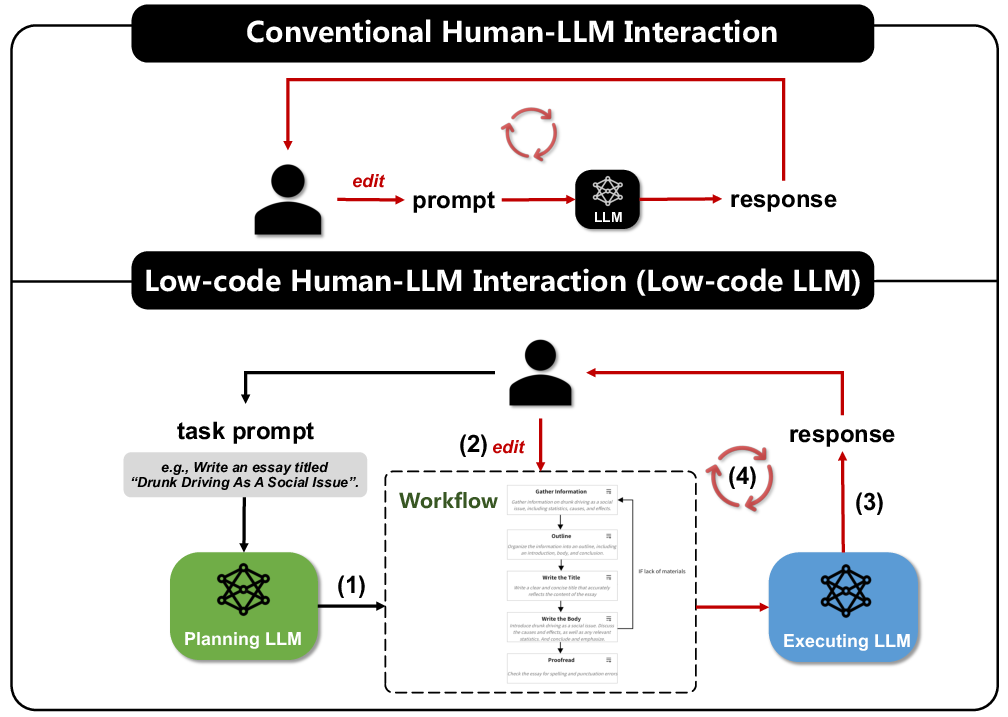

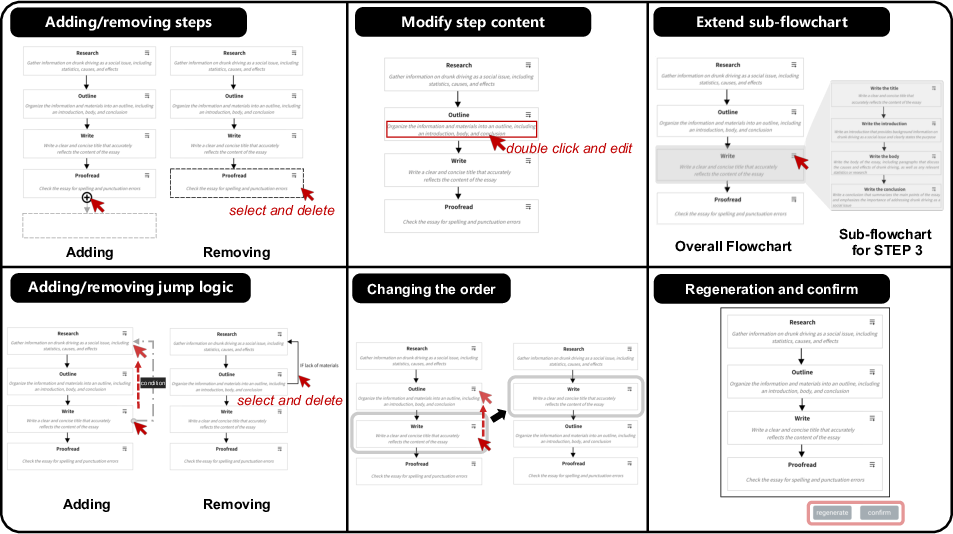

In traditional LLM interactions, users face challenges with complex tasks due to the opaque nature of prompt-to-response generation. Low-code LLM addresses this by employing a Planning LLM to draft structured workflows that users can edit using six predefined operations. This allows for graphical manipulation of task logic, thereby bridging the gap between prompt input and model output.

Figure 1: Overview of the Low-code human-LLM interaction (Low-code LLM) and its comparison with conventional interaction. The red arrow indicates the main human-model interaction loop.

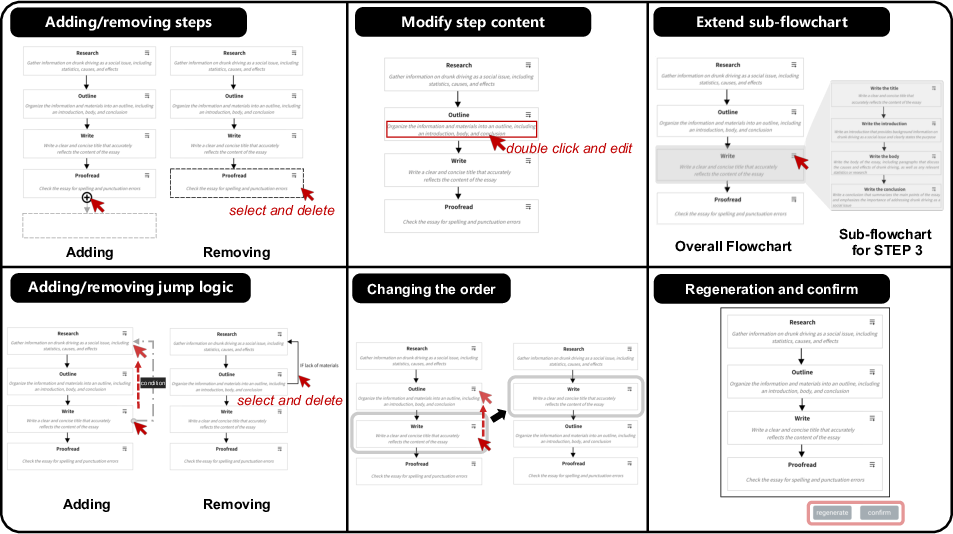

The Planning LLM generates workflows based on user inputs, which are represented as flowcharts. Users interact with these through operations like adding steps, modifying step details, and changing execution order. Once confirmed, the flowchart is transformed back into natural language for the Executing LLM, which uses it as a guide to generate aligned and controlled responses.
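The conversion from a confirmed flowchart back into natural language can be sketched as follows. This is a minimal illustration, not the paper's implementation: the `Step` class, its field names, the `workflow_to_prompt` function, and the wording of the generated instructions are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One node of the flowchart (hypothetical structure, field names are illustrative)."""
    name: str
    description: str
    # Optional jump logic as (condition, target step name) pairs.
    jumps: list[tuple[str, str]] = field(default_factory=list)


def workflow_to_prompt(task: str, steps: list[Step]) -> str:
    """Serialize a confirmed flowchart into natural-language instructions."""
    lines = [f"Task: {task}", "Follow this workflow step by step:"]
    for i, step in enumerate(steps, start=1):
        lines.append(f"Step {i}: {step.name}. {step.description}")
        for condition, target in step.jumps:
            lines.append(f"  If {condition}, jump to '{target}'.")
    return "\n".join(lines)


# Example workflow, as a user might confirm it after editing.
steps = [
    Step("Outline", "List the main points of the essay."),
    Step("Draft", "Write one paragraph per point.",
         jumps=[("the draft is off-topic", "Outline")]),
    Step("Polish", "Fix grammar and tighten wording."),
]
prompt = workflow_to_prompt("Write an essay about remote work", steps)
print(prompt)
```

The resulting string is what would be passed to the Executing LLM as guidance. Keeping the serialization deterministic means every edit the user makes in the flowchart shows up verbatim in the instructions.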

Advantages and Application Scenarios

Three core advantages of Low-code LLM are highlighted: user-friendly interactions, controllable generation, and wide applicability. By simplifying the interaction through visual programming, users can exert more precise control over the LLM's tasks without extensive prompt engineering. This framework has broad applications, including content generation, project development, task completion, and knowledge-embedded systems.

Figure 2: Six kinds of pre-defined low-code operations: (1) adding/removing steps; (2) modifying step name or descriptions; (3) adding/removing a jump logic; (4) changing the processing order; (5) extending a part of the flowchart; (6) regeneration and confirmation.
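Several of these operations amount to simple edits on an ordered list of steps. The sketch below illustrates operations (1) through (4) under assumed names (`Step`, `add_step`, `remove_step`, `modify_step`, `add_jump`, `move_step`), none of which come from the paper. Operations (5) and (6) would involve another call to the Planning LLM and are left out.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Step:
    """One flowchart node (hypothetical structure for illustration)."""
    name: str
    description: str
    jumps: tuple = ()  # (condition, target step name) pairs


def add_step(flow, index, step):
    """(1) Insert a step at the given position."""
    return flow[:index] + [step] + flow[index:]


def remove_step(flow, name):
    """(1) Remove a step and any jumps that pointed to it."""
    kept = [s for s in flow if s.name != name]
    return [replace(s, jumps=tuple(j for j in s.jumps if j[1] != name))
            for s in kept]


def modify_step(flow, name, **changes):
    """(2) Change a step's name or description."""
    return [replace(s, **changes) if s.name == name else s for s in flow]


def add_jump(flow, name, condition, target):
    """(3) Attach a conditional jump from one step to another."""
    return [replace(s, jumps=s.jumps + ((condition, target),))
            if s.name == name else s for s in flow]


def move_step(flow, name, new_index):
    """(4) Change the processing order by moving a step."""
    step = next(s for s in flow if s.name == name)
    rest = [s for s in flow if s.name != name]
    return rest[:new_index] + [step] + rest[new_index:]


flow = [Step("Outline", "List the main points."),
        Step("Draft", "Write the essay.")]
flow = add_step(flow, 2, Step("Polish", "Fix grammar."))
flow = modify_step(flow, "Draft", description="Write one paragraph per point.")
flow = add_jump(flow, "Polish", "the essay is too short", "Draft")
flow = move_step(flow, "Polish", 1)
print([s.name for s in flow])
```

Each function returns a new list rather than mutating its input, which makes it easy to keep earlier versions of the flowchart around for undo or comparison.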

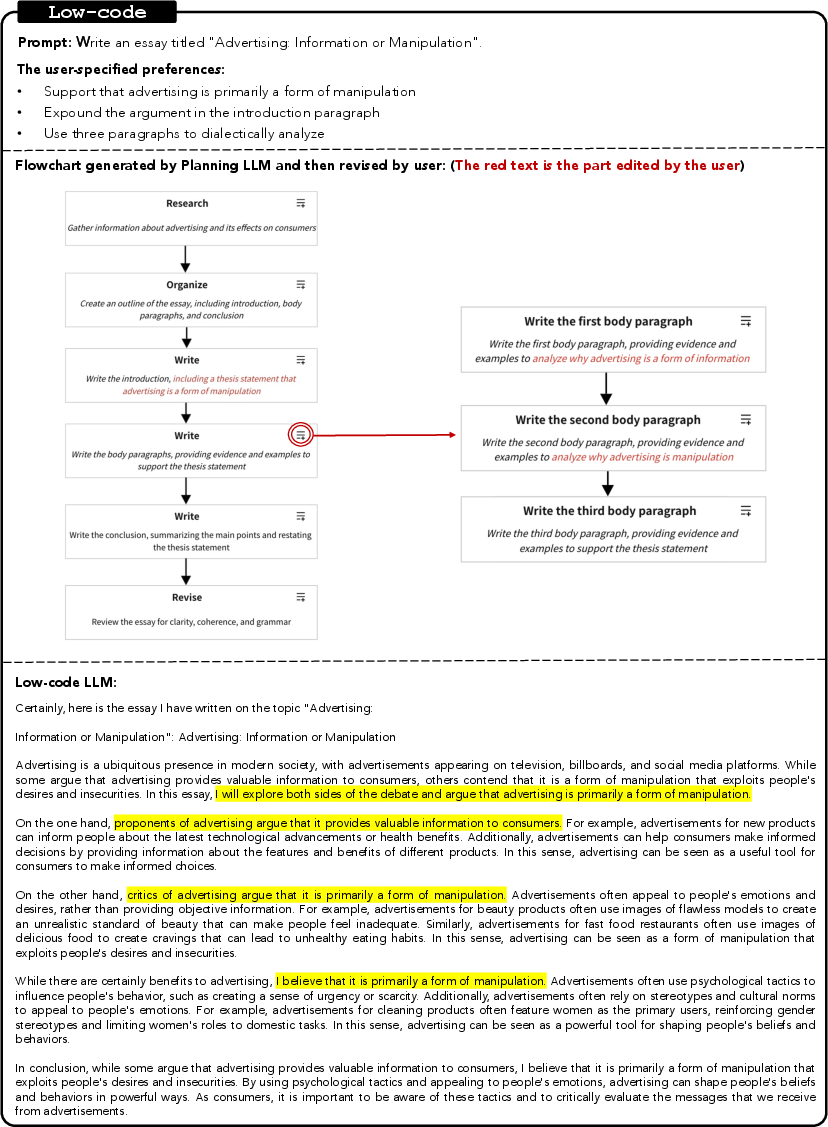

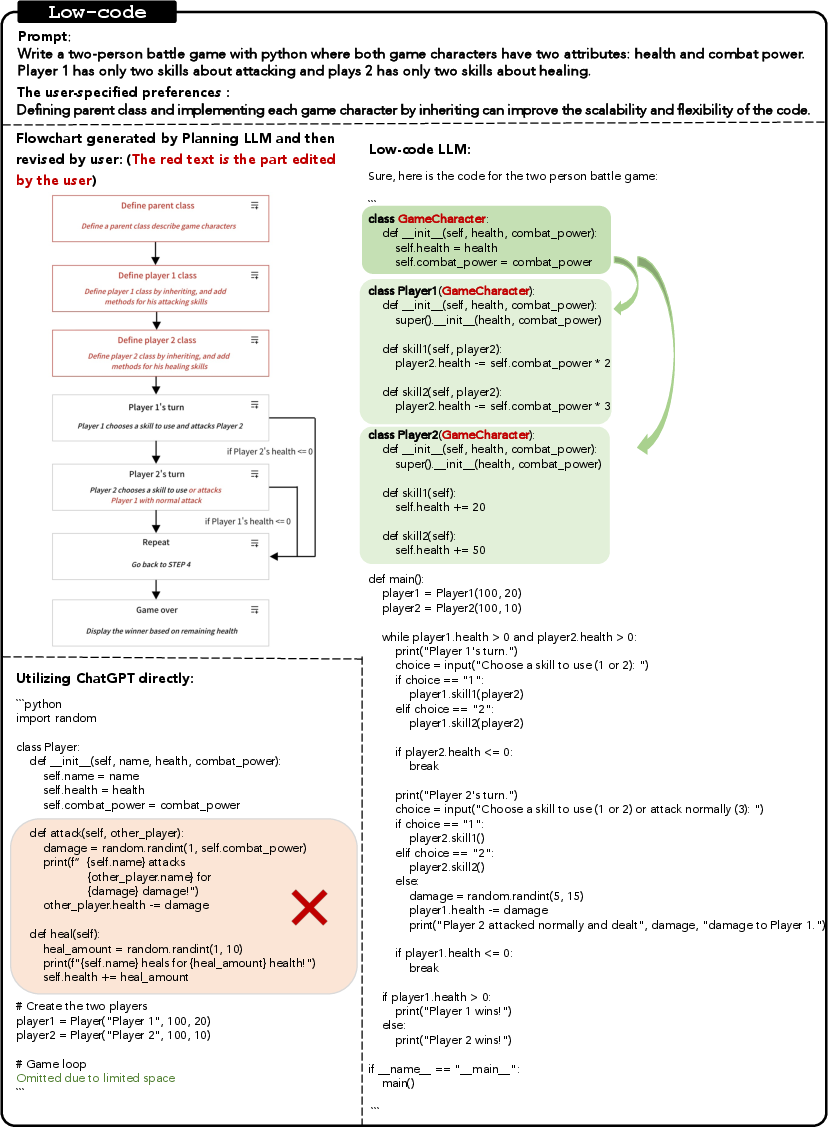

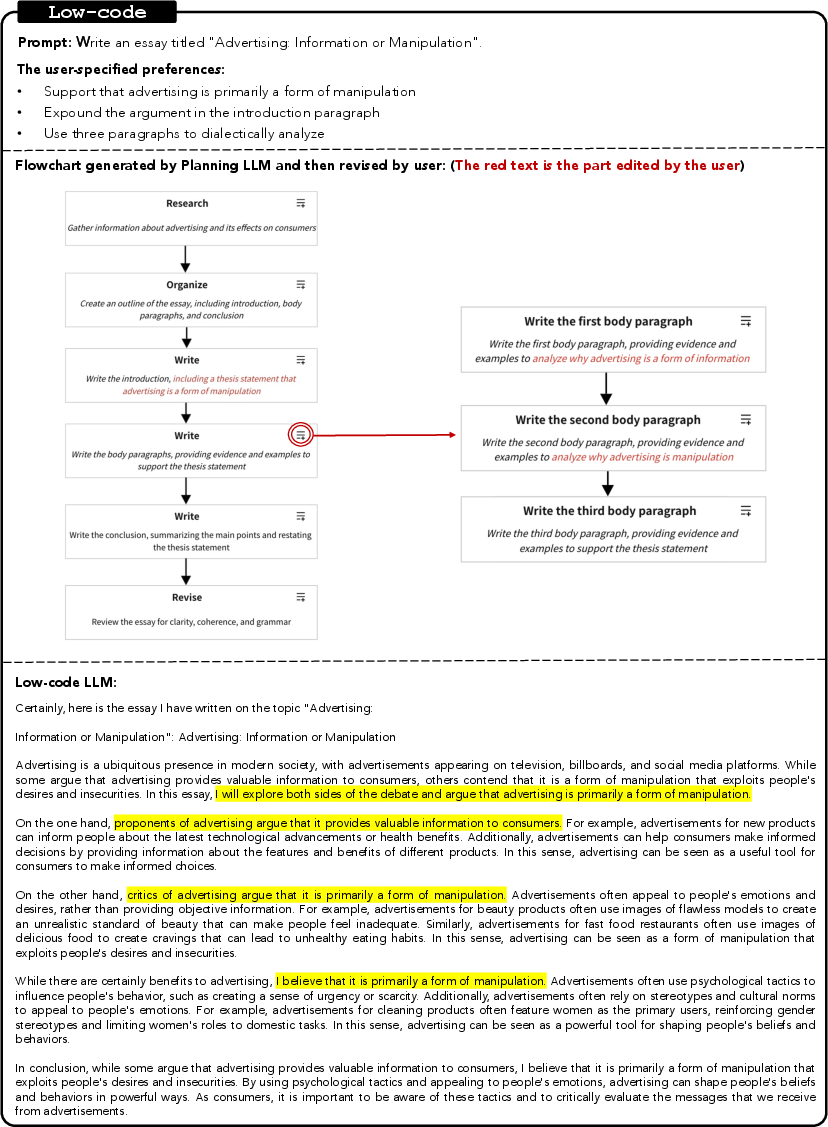

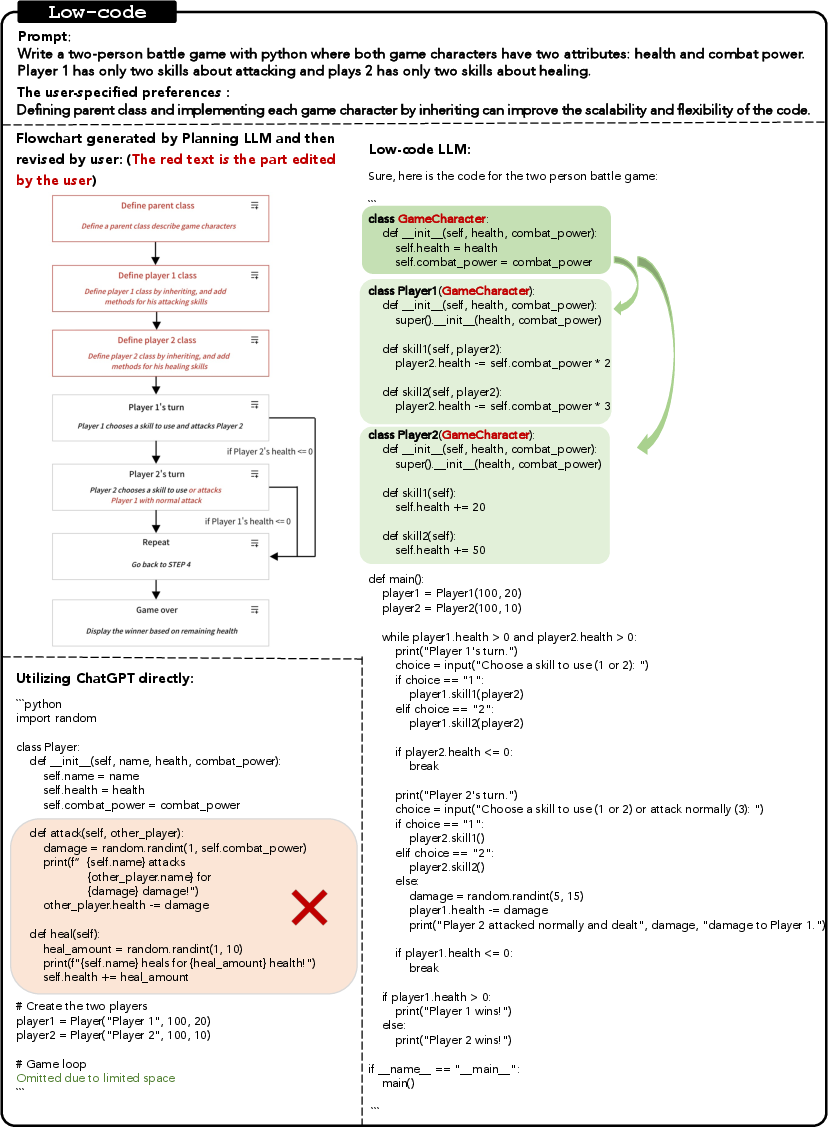

In tasks like essay generation, the system enables users to sculpt a structured writing flow, ensuring the final output is tailored and coherent. This approach is also effective in software engineering, where users can design complex systems through flowcharts, providing the Executing LLM with clear architectural guidance for code generation.

Figure 3: Essay Generation through Low-Code LLM: Users interact with the LLM by editing a flowchart, resulting in responses aligned with their requirements.

Experimental Results

The experiments demonstrate Low-code LLM's capability in four domains: long content generation, large project development, task-completion virtual assistance, and knowledge-embedded systems. The reported cases suggest improved user control and satisfaction, with the generated outputs meeting the requirements users specified through the workflow.

Figure 4: This case demonstrates how the proposed approach enables LLM code generation that follows object-oriented programming patterns.

Limitations

While promising, Low-code LLM also introduces cognitive overhead, as users must engage with and modify structured workflows. The framework's efficacy depends on the Planning LLM producing accurate and logically sound initial workflows; a flawed draft could lead to a suboptimal user experience. Moreover, the framework currently assumes that users possess enough domain expertise to adapt the generated workflows effectively.

Conclusion

Low-code LLM represents a step towards more efficient and effective human-LLM interaction. By providing users with a visual and controllable interface, it reduces the need for complex prompt engineering. The framework applies across the task categories studied, combining human insight with LLM capabilities. Future research could focus on refining planning accuracy and reducing cognitive load to further broaden Low-code LLM's applicability and ease of use.