- The paper presents BSharedRAG, a framework that shares one backbone between retrieval and generation to improve both tasks in the e-commerce domain.

- The framework combines continual pre-training of the shared backbone with task-specific LoRA modules, improving alignment between retrieval and generation while reducing the need for extensive hyperparameter tuning.

- Experiments on real-world datasets show notable gains, including a 5–13% improvement in Hit@3 and a 23% increase in BLEU-3.

BSharedRAG: Backbone Shared Retrieval-Augmented Generation for the E-commerce Domain

The paper develops and evaluates a novel framework, Backbone Shared Retrieval-Augmented Generation (BSharedRAG), tailored to the e-commerce domain. Its main objective is to improve the synergy between retrieval and generation by sharing a single continually pre-trained backbone between them, which enhances performance in information-dense, rapidly evolving fields like e-commerce.

Introduction

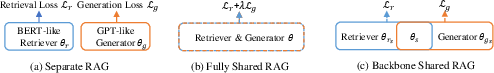

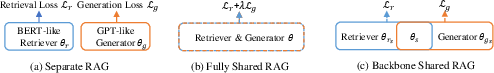

Retrieval-Augmented Generation (RAG) systems have become increasingly important in scenarios requiring domain-specific knowledge, such as e-commerce, where entities are numerous and information is frequently updated. Traditional RAG systems typically use separate models for retrieval and generation, which limits the benefit each task could bring to the other (Figure 1). Fully sharing one model between the two tasks, on the other hand, requires carefully balancing their training losses. This paper introduces BSharedRAG, which shares a backbone model between retrieval and generation to improve knowledge transfer between them without effort-intensive loss balancing. The approach promises more efficient knowledge updating and less reliance on hyperparameter tuning, which is particularly important in domains like e-commerce, where information follows a pronounced long-tail distribution.

Figure 1: Comparing three categories of possible RAG frameworks: (a) separate RAG, (b) fully shared RAG, (c) backbone shared RAG.
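To make the loss-balancing contrast between designs (b) and (c) concrete, the training objectives can be written schematically as below. The notation is ours, not the paper's: $\theta$ denotes all weights of a fully shared model, $\theta_0$ the continually pre-trained backbone, $\Delta_r$ and $\Delta_g$ the retrieval and generation LoRA parameters, and $\lambda$ a hypothetical task-weighting hyperparameter.

```latex
\begin{aligned}
&\text{(b) fully shared:} &&
\theta^{*} = \arg\min_{\theta}\; \lambda\,\mathcal{L}_{\mathrm{ret}}(\theta) + (1-\lambda)\,\mathcal{L}_{\mathrm{gen}}(\theta) \\
&\text{(c) backbone shared:} &&
\Delta_{r}^{*} = \arg\min_{\Delta_{r}}\; \mathcal{L}_{\mathrm{ret}}(\theta_{0} + \Delta_{r}),
\qquad
\Delta_{g}^{*} = \arg\min_{\Delta_{g}}\; \mathcal{L}_{\mathrm{gen}}(\theta_{0} + \Delta_{g})
\end{aligned}
```

In (c) there is no $\lambda$ to tune: each adapter is optimized against its own loss, and the shared $\theta_0$ is not updated by either.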

Methodology

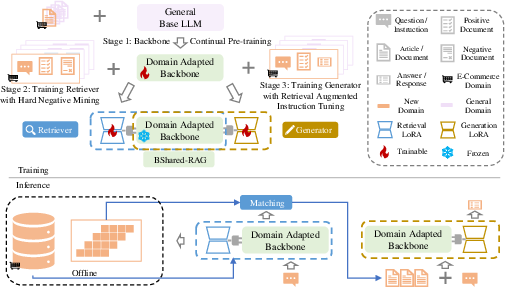

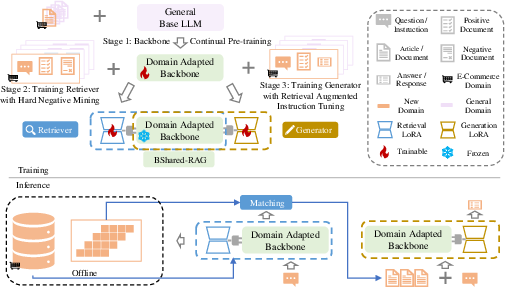

The BSharedRAG framework consists of three main components:

- Backbone Continual Pre-training: A base LLM is further pre-trained on a domain-specific corpus, and the result serves as the shared backbone for both retrieval and generation. This continual pre-training lets the model acquire the domain-specific knowledge that effective retrieval and generation depend on.

- Task-specific LoRA Modules: Two Low-Rank Adaptation (LoRA) modules, one for retrieval and one for generation, are added on top of the shared backbone. Each module has its own parameters and is optimized only for its own task's objective, which avoids negative transfer and the complexity of balancing competing task objectives.

- Training Strategy for Task Optimization: Hard negative mining improves the quality of retrieval training, while retrieval-augmented instruction tuning refines the generator by including retrieved results in its input context during training (Figure 2).
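The retrieval side of this training strategy can be illustrated with a common recipe, which may differ from the paper's exact setup: hard negatives are the highest-scoring non-positive documents under some first-stage scorer, and the retriever is trained with an InfoNCE-style contrastive loss over the positive plus those negatives. The function names, the temperature `tau`, the negative count `k`, and the source of the first-stage scores are all assumptions for illustration.

```python
import numpy as np


def mine_hard_negatives(scores: np.ndarray, positive_idx: int, k: int) -> list:
    """Return indices of the k highest-scoring documents other than the positive.

    `scores` holds one first-stage relevance score per corpus document; where those
    scores come from (BM25, an earlier retriever checkpoint, ...) is an assumption.
    """
    ranked = np.argsort(-scores)  # document indices, best score first
    return [int(i) for i in ranked if i != positive_idx][:k]


def info_nce_loss(query: np.ndarray, positive: np.ndarray,
                  negatives: np.ndarray, tau: float = 0.05) -> float:
    """Negative log-softmax score of the positive among [positive, *negatives].

    Embeddings are L2-normalised, so dot products are cosine similarities.
    """
    def normalise(x: np.ndarray) -> np.ndarray:
        return x / np.linalg.norm(x, axis=-1, keepdims=True)

    q = normalise(query)
    candidates = normalise(np.vstack([positive[None, :], negatives]))  # row 0 = positive
    logits = candidates @ q / tau
    logits = logits - logits.max()  # shift for numerical stability
    log_probs = logits - np.log(np.exp(logits).sum())
    return float(-log_probs[0])


# Toy usage with random embeddings (illustrative only).
rng = np.random.default_rng(0)
doc_emb = rng.normal(size=(6, 8))                   # 6 documents, 8-dim embeddings
query_emb = doc_emb[2] + 0.1 * rng.normal(size=8)   # query built near document 2
scores = doc_emb @ query_emb
negs = mine_hard_negatives(scores, positive_idx=2, k=3)
loss = info_nce_loss(query_emb, doc_emb[2], doc_emb[negs])
```

Hard negatives matter because random negatives are usually easy to separate from the positive; documents the scorer already ranks highly force the retriever to learn finer distinctions.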

Figure 2: Overview of training and inference of our proposed BSharedRAG Framework.
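The backbone-sharing idea can be sketched at the level of a single linear layer. In this toy NumPy version (an illustration, not the paper's implementation), one frozen weight matrix `W` stands in for the continually pre-trained backbone, and each task owns a low-rank pair `(A, B)` following the standard LoRA update `W x + (alpha / r) * B A x`. The class name, task names, rank `r`, and scaling `alpha` are assumptions.

```python
from typing import Dict, Optional, Tuple

import numpy as np


class SharedLinearWithLoRA:
    """A frozen backbone layer shared by several task-specific LoRA adapters (toy)."""

    def __init__(self, d_in: int, d_out: int, rng: np.random.Generator):
        # Frozen shared weights: a stand-in for the continually pre-trained backbone.
        self.W = rng.normal(scale=d_in ** -0.5, size=(d_out, d_in))
        self.adapters: Dict[str, Tuple[np.ndarray, np.ndarray, float]] = {}

    def add_adapter(self, task: str, r: int, alpha: float,
                    rng: np.random.Generator) -> None:
        # Usual LoRA initialisation: A random, B zero, so a new adapter is a no-op.
        A = rng.normal(scale=0.01, size=(r, self.W.shape[1]))
        B = np.zeros((self.W.shape[0], r))
        self.adapters[task] = (A, B, alpha / r)

    def forward(self, x: np.ndarray, task: Optional[str] = None) -> np.ndarray:
        # The backbone path is the same for every task; only the low-rank delta differs.
        y = x @ self.W.T
        if task is not None:
            A, B, scale = self.adapters[task]
            y = y + scale * (x @ A.T @ B.T)
        return y


rng = np.random.default_rng(0)
layer = SharedLinearWithLoRA(d_in=16, d_out=16, rng=rng)
layer.add_adapter("retrieval", r=4, alpha=8.0, rng=rng)
layer.add_adapter("generation", r=4, alpha=8.0, rng=rng)

# Pretend retrieval training updated only the retrieval adapter's B matrix.
A, B, scale = layer.adapters["retrieval"]
layer.adapters["retrieval"] = (A, B + 0.1, scale)

x = rng.normal(size=(2, 16))
base = layer.forward(x)                    # backbone only
gen = layer.forward(x, task="generation")  # untouched adapter: equals base
ret = layer.forward(x, task="retrieval")   # updated adapter: differs from base
```

Because `W` is shared and never modified by either adapter, switching tasks only swaps a small low-rank delta, and updating one task's adapter leaves the other task's outputs unchanged.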

Experimental Results

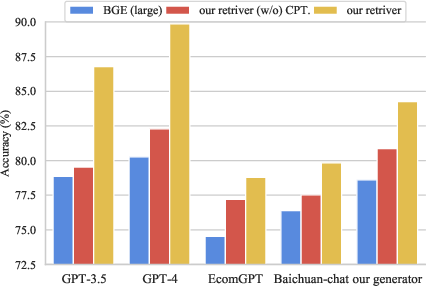

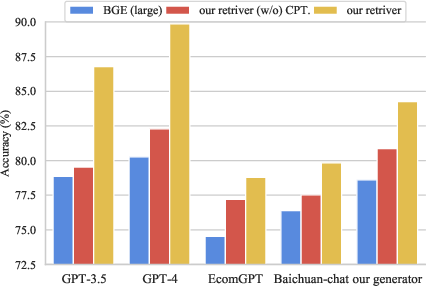

The BSharedRAG framework was evaluated against existing methods on e-commerce datasets and achieved notable improvements across multiple metrics:

- Retrieval Performance: BSharedRAG outperformed separate retriever models, improving Hit@3 by 5% to 13% on two datasets.

- Generation Performance: The generator achieved a 23% increase in BLEU-3 over traditional RAG methods, supporting the effectiveness of sharing the backbone.
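For reference, the two headline metrics can be computed roughly as follows. This is a sketch under stated assumptions: Hit@k is taken as the fraction of queries with at least one relevant document in the top k, and BLEU-3 as cumulative sentence-level BLEU with uniform weights over 1- to 3-grams, a brevity penalty, a single reference, and no smoothing. The paper's tokenization, aggregation level, and smoothing choices are not stated here, and the function names are hypothetical.

```python
import math
from collections import Counter


def hit_at_k(ranked_ids: list[list[str]], relevant_ids: list[set[str]], k: int = 3) -> float:
    """Fraction of queries whose top-k ranked documents contain a relevant one."""
    hits = sum(1 for ranked, rel in zip(ranked_ids, relevant_ids) if rel & set(ranked[:k]))
    return hits / len(ranked_ids)


def ngrams(tokens: list[str], n: int) -> Counter:
    """Multiset of the n-grams in a token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu_n(candidate: list[str], reference: list[str], max_n: int = 3) -> float:
    """Cumulative BLEU up to max_n with uniform weights (one reference, no smoothing)."""
    log_precisions = []
    for n in range(1, max_n + 1):
        cand, ref = ngrams(candidate, n), ngrams(reference, n)
        total = sum(cand.values())
        # Clip each candidate n-gram count by its count in the reference.
        clipped = sum(min(count, ref[g]) for g, count in cand.items())
        if total == 0 or clipped == 0:
            return 0.0  # any zero precision makes the geometric mean zero
        log_precisions.append(math.log(clipped / total))
    c, r = len(candidate), len(reference)
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(sum(log_precisions) / max_n)


ref = "the cat sat on the mat".split()
print(bleu_n(ref, ref))                                     # 1.0
print(hit_at_k([["d1", "d2", "d3", "d4"]], [{"d3"}], k=3))  # 1.0
```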

These improvements are attributed to better alignment between the retriever and generator: because both components build on the same backbone, both benefit from the domain knowledge added by continual pre-training (Figure 3).

Figure 3: Evaluating the influence of different retrievers on generation effectiveness.

Dataset Contribution

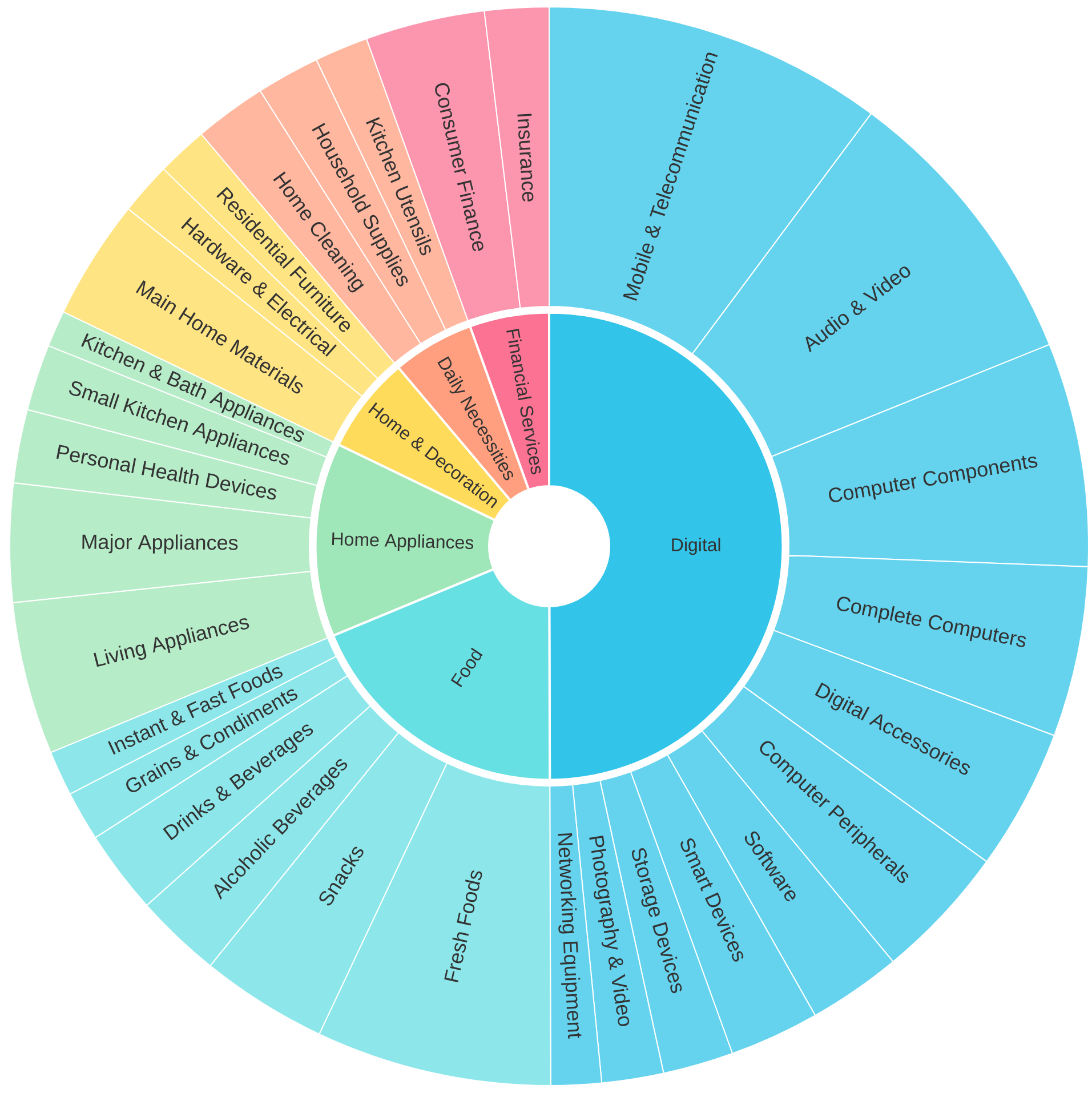

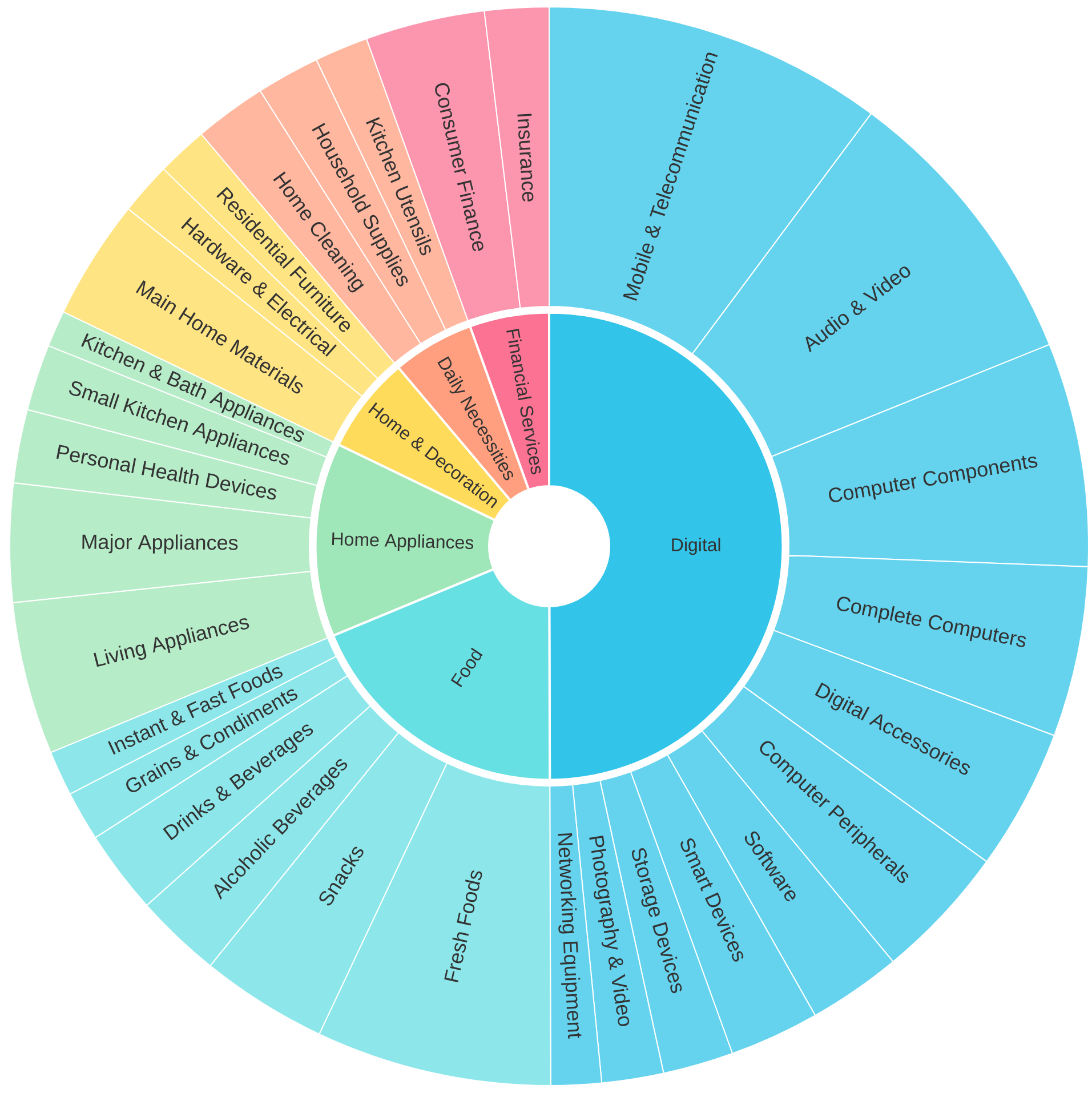

A new dataset, WorthBuying, was constructed to address the paucity of high-quality retrieval-augmented generation datasets in the e-commerce domain. It comprises 735K documents and 50K question-document-answer tuples, annotated for domain specificity and designed to support training both retriever and generator models (Figure 4).

Figure 4: A subset of the categories in the WorthBuying dataset.
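Because each WorthBuying example pairs a question with a supporting document and an answer, a single record can feed both training objectives from the Methodology section. The sketch below shows one plausible way to do that; the schema `QDATuple`, its field names, the prompt template, and both helper functions are hypothetical and are not taken from the released dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QDATuple:
    """Hypothetical schema for one question-document-answer example."""
    question: str
    document_id: str
    answer: str


def to_retrieval_pair(example: QDATuple) -> tuple[str, str]:
    # Retriever training: the question is the query, the document is the positive.
    return example.question, example.document_id


def to_generation_example(example: QDATuple, retrieved_docs: list[str]) -> dict[str, str]:
    # Retrieval-augmented instruction tuning: retrieved passages go into the input
    # context and the annotated answer is the target. The template is illustrative.
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(retrieved_docs))
    prompt = f"Reference documents:\n{context}\n\nQuestion: {example.question}\nAnswer:"
    return {"input": prompt, "target": example.answer}


# Toy record, not from the dataset.
ex = QDATuple("Is this blender dishwasher-safe?", "doc-001", "Only the jar is dishwasher-safe.")
query, positive = to_retrieval_pair(ex)
sample = to_generation_example(ex, ["The jar can go in the dishwasher; the base cannot."])
```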

Conclusion

The BSharedRAG framework is a significant step toward better retrieval-augmented generation in domain-specific contexts such as e-commerce. By sharing a continually pre-trained backbone between retrieval and generation while keeping separate task-specific parameters, BSharedRAG addresses key limitations of traditional RAG systems. Future work will focus on extending the framework to other domains and improving how the task-specific parameters are optimized.