- The paper introduces an end-to-end pipeline that combines monocular SLAM, transformer-based depth estimation, and 3D Gaussian splatting for precise 3D reconstruction in minimally invasive arthroscopy.

- It demonstrates superior photometric fidelity with PSNR up to 34.51 and SSIM up to 0.94, outperforming traditional methods like COLMAP in challenging, texture-poor environments.

- The integrated AR tools achieve accurate intraoperative measurement (1.59 ± 1.81 mm) and annotation (mean IoU of 0.721) without requiring extra hardware, enhancing surgical navigation.

Seamless Augmented Reality Integration for Articular Reconstruction and Guidance in Arthroscopy

Introduction

This paper presents a comprehensive vision-based pipeline for dense 3D reconstruction and augmented reality (AR) guidance in minimally invasive arthroscopy, using only monocular video captured intraoperatively. The authors propose a method that sequentially combines robust point-based SLAM, monocular depth estimation, and 3D Gaussian Splatting (3D-GS) for real-time, high-fidelity scene reconstruction, without requiring any additional hardware or preoperative imaging. The resulting explicit 3D representation is then integrated into a set of AR applications for annotation anchoring and articular notch measurement, supporting surgical navigation and improving spatial perception within the constrained joint environment.

Methodological Framework

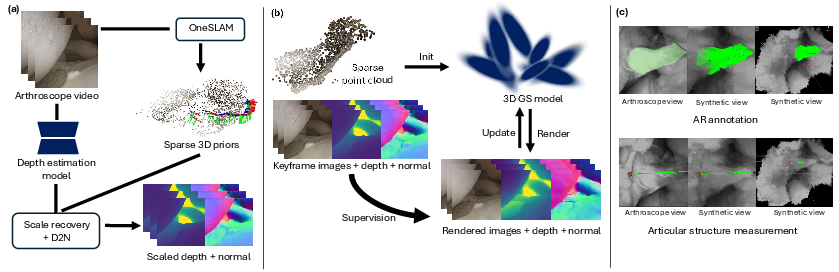

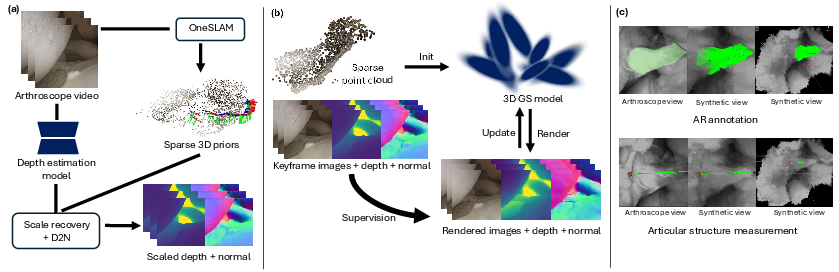

The pipeline is structured in three primary stages:

- Sparse 3D Prior Reconstruction: OneSLAM, underpinned by the CoTracker framework, tracks a sparse point cloud robustly across arthroscopic video frames despite the scarcity of distinctive features typical of endoscopic imaging. Camera poses are estimated via RANSAC-based PnP and refined with bundle adjustment restricted to keyframes, which reduces drift while keeping computation manageable.

- Depth and Surface Normal Estimation: Monocular depth maps are inferred via a large-scale pre-trained transformer, Depth Anything. Because these raw predictions (relative disparities) are defined only up to an unknown scale and shift, they are aligned to the corresponding sparse 3D priors by fitting a scale and a shift with Nelder-Mead simplex search, yielding consistent pseudo-depth. Surface normals are then estimated from the pseudo-depth using a depth-to-normal translator, which further regularizes the geometry.

- 3D Gaussian Splatting Scene Construction: The sparse point cloud initializes a set of 3D Gaussians for splatting. The model is optimized with a compound loss that combines photometric error, surface alignment constraints (from pseudo-depth and normals), and Gaussian opacity regularization to suppress floating artifacts and keep the Gaussians close to the true surface. The explicit 3D representation supports real-time, view-consistent rendering, which is essential for interactive AR.
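The scale-and-shift alignment in the second stage can be illustrated with a minimal sketch. It assumes an affine model (scale times raw prediction plus shift) and a mean squared error cost evaluated at the pixels where sparse SLAM depths exist; the source names only the Nelder-Mead search, so the cost function, the initial guess, and the names `align_prediction`, `raw_pred`, and `sparse_ref` are illustrative assumptions, not the authors' implementation.

```python
import numpy as np
from scipy.optimize import minimize


def align_prediction(raw_pred, sparse_ref):
    """Fit scale and shift so that scale * raw_pred + shift matches sparse_ref.

    raw_pred:   network outputs sampled at the tracked points (hypothetical input)
    sparse_ref: reference values at the same points from the sparse reconstruction
    """
    raw = np.asarray(raw_pred, dtype=float)
    ref = np.asarray(sparse_ref, dtype=float)

    def cost(params):
        scale, shift = params
        # Assumed cost: mean squared residual of the affine fit.
        return np.mean((scale * raw + shift - ref) ** 2)

    # Assumed initial guess: match the medians, start with zero shift.
    scale0 = np.median(ref) / max(np.median(raw), 1e-8)
    result = minimize(
        cost,
        x0=[scale0, 0.0],
        method="Nelder-Mead",
        options={"xatol": 1e-8, "fatol": 1e-12, "maxiter": 2000},
    )
    return float(result.x[0]), float(result.x[1])


# Synthetic, noise-free check: recover a known scale and shift.
rng = np.random.default_rng(0)
raw = rng.uniform(0.1, 1.0, size=200)
reference = 40.0 * raw + 5.0
scale, shift = align_prediction(raw, reference)
print(scale, shift)
```

Nelder-Mead needs no gradients, which makes it convenient for a two-parameter fit like this one. For a purely quadratic cost a closed-form least-squares solution would also work, so the simplex search mainly pays off when the cost is made robust to outliers.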

The overall workflow is summarized in the proposed pipeline schematic.

Figure 1: An overview of the proposed system, covering sparse prior extraction, depth/normal regularization, dense 3D Gaussian Splatting, and AR tool integration for annotation and intraoperative measurement.
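The depth-to-normal step in the second stage can be approximated as follows. The source does not describe the translator it uses, so this sketch swaps in a common finite-difference approach: back-project the depth map with an assumed pinhole model and take the cross product of the image-space tangents. The intrinsics `fx`, `fy`, `cx`, `cy` and the function name are hypothetical.

```python
import numpy as np


def depth_to_normals(depth, fx, fy, cx, cy):
    """Estimate per-pixel unit normals from a depth map (pinhole model assumed)."""
    depth = np.asarray(depth, dtype=float)
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))

    # Back-project every pixel to a 3D point in the camera frame.
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    points = np.stack([x, y, depth], axis=-1)  # shape (h, w, 3)

    # Surface tangents along image columns and rows via finite differences.
    tangent_u = np.gradient(points, axis=1)
    tangent_v = np.gradient(points, axis=0)

    # Cross product ordered so that normals point back toward the camera (-z).
    normals = np.cross(tangent_v, tangent_u)
    normals /= np.linalg.norm(normals, axis=-1, keepdims=True) + 1e-12
    return normals


# A fronto-parallel plane should yield normals facing the camera.
flat = np.full((4, 5), 2.0)
normals = depth_to_normals(flat, fx=100.0, fy=100.0, cx=2.0, cy=1.5)
print(normals[0, 0])
```

Normals obtained this way are sensitive to depth noise, which is one reason they are typically used as a soft regularizer rather than as a hard constraint.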

Quantitative and Qualitative Evaluation

Datasets and Metrics

Evaluation covers both public cadaveric and proprietary phantom datasets, each comprising sequences with varying anatomical content and camera trajectories. Quantitative metrics include RMSE (mm) and Hausdorff distance for point cloud alignment, and PSNR and SSIM for rendering fidelity. Annotation accuracy is measured by mean IoU (mIoU) on propagated segmentation masks and compared against the video object segmentation method Cutie.
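For reference, two of the metrics named above can be computed as in the following sketch. It uses the standard definitions of PSNR and IoU; the assumption that images are scaled to [0, 1] and the function names are illustrative, and the paper's exact evaluation protocol is not reproduced here.

```python
import numpy as np


def psnr(rendered, reference, max_val=1.0):
    """Peak signal-to-noise ratio for images with values in [0, max_val]."""
    diff = np.asarray(rendered, dtype=float) - np.asarray(reference, dtype=float)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(max_val ** 2 / mse))


def iou(pred_mask, gt_mask):
    """Intersection over union of two binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    gt = np.asarray(gt_mask, dtype=bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return float(np.logical_and(pred, gt).sum() / union)


def mean_iou(pred_masks, gt_masks):
    """Average IoU over a sequence of frame-wise mask pairs."""
    return float(np.mean([iou(p, g) for p, g in zip(pred_masks, gt_masks)]))


# Toy inputs: a uniform 0.1 error, and masks overlapping in one of three pixels.
psnr_value = psnr(np.full((4, 4), 0.1), np.zeros((4, 4)))
iou_value = iou([[1, 1], [0, 0]], [[1, 0], [1, 0]])
miou_value = mean_iou([[[1, 1]], [[1, 0]]], [[[1, 1]], [[1, 1]]])
print(psnr_value, iou_value, miou_value)
```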

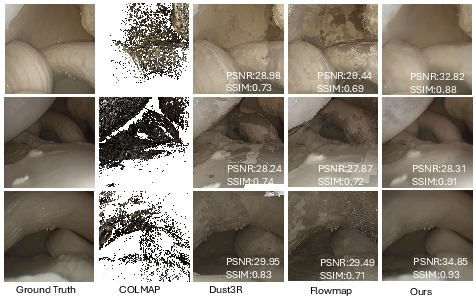

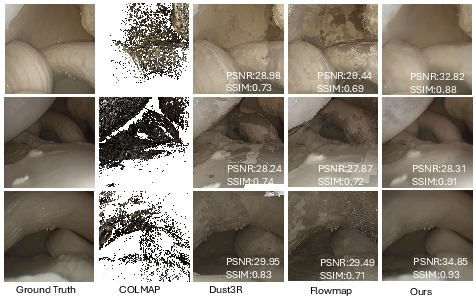

The presented pipeline matches or surpasses competitive baselines (COLMAP, FlowMap, DUSt3R) in average RMSE and demonstrates superior photometric and perceptual fidelity, with PSNR values up to 34.51 and SSIM up to 0.94. The improvements in PSNR and SSIM over all alternatives are statistically significant (p < 0.01).

Rendering comparisons further attest to the sharper recovery of articular surface detail and the suppression of floating artifacts, attributed to the incorporation of depth/normal supervision and opacity regularization, which prior methods such as COLMAP and NeRF derivatives lack.

Figure 3: Direct comparison of rendering quality, highlighting improved sharpness and geometric consistency (ours, rightmost column) versus competing 3D and neural reconstruction techniques.

AR Measurement and Annotation Utility

The AR measurement tool, enabled by the explicit 3D-GS model, attains a distance estimation error of 1.59 ± 1.81 mm, supporting intraoperative metric analysis without physical rulers. The AR annotation anchor achieves a mean IoU of 0.721, outperforming Cutie (mIoU = 0.569) and remaining more consistent across divergent frames, particularly in regions subject to illumination changes. Error distributions show stable performance with reduced variance, except in highly occluded or under-illuminated regions, where accuracy is limited primarily by the underlying monocular depth estimation and point tracking.
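A point-to-point measurement of this kind can be sketched as below. The sketch assumes that the user picks two pixels, that a metric depth in millimetres is available at each pixel (for example from the rendered model), and that the camera follows a pinhole model; the function names and intrinsics are hypothetical, and reading the reported "±" as a standard deviation is also an assumption.

```python
import numpy as np


def backproject(pixel, depth_mm, fx, fy, cx, cy):
    """Lift a pixel (u, v) with known depth to a 3D point in the camera frame."""
    u, v = pixel
    return np.array([(u - cx) * depth_mm / fx, (v - cy) * depth_mm / fy, depth_mm])


def measure_mm(pixel_a, depth_a, pixel_b, depth_b, intrinsics):
    """Euclidean distance in mm between two picked pixels seen from one view."""
    fx, fy, cx, cy = intrinsics
    point_a = backproject(pixel_a, depth_a, fx, fy, cx, cy)
    point_b = backproject(pixel_b, depth_b, fx, fy, cx, cy)
    return float(np.linalg.norm(point_a - point_b))


def error_summary(measured, ground_truth):
    """Mean and (population) standard deviation of the absolute errors."""
    errors = np.abs(np.asarray(measured, dtype=float) - np.asarray(ground_truth, dtype=float))
    return float(errors.mean()), float(errors.std())


# Two pixels 100 px apart at 50 mm depth with fx = 500 px.
intrinsics = (500.0, 500.0, 320.0, 240.0)
distance = measure_mm((320, 240), 50.0, (420, 240), 50.0, intrinsics)
mean_err, std_err = error_summary([10.0, 12.0], [9.0, 10.0])
print(distance, mean_err, std_err)
```

Because the distance is computed in 3D rather than in the image plane, it does not depend on which view the two points were picked from, provided the depths are metrically consistent.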

Discussion and Implications

The proposed pipeline eliminates reliance on external hardware, fiducials, or preoperative scans. It directly leverages intraoperative monocular video, offering an end-to-end, deployable approach suitable for typical arthroscopic clinical settings. The explicit 3D Gaussian Splatting representation, trained with photometric, depth, and surface normal supervision, addresses three fundamental challenges of confined, texture-poor environments: scale ambiguity, sparse features, and limited viewpoint coverage.

By supporting highly accurate real-time AR overlays for both annotation anchoring and geometrically consistent distance measurement, the approach has practical implications for error mitigation, procedure streamlining, and future computer-assisted surgery platforms. From a theoretical perspective, the results underscore the effectiveness of combined vision transformer depth priors and explicit point-based 3D representations in challenging medical SLAM settings, where traditional SfM and NeRF-based approaches struggle.

Limitations and Future Prospects

The method is sensitive to point tracking errors, especially under rapid arthroscope motion or severe occlusions. The monocular depth model is not adapted specifically to intra-articular content, leading to outlier errors in poorly visualized cavities. The assumption of a rigid scene and static intrinsics limits generalizability to dynamic or real-patient settings. Future work should focus on integrating robust external pose tracking, fine-tuning depth models on domain-specific data, and extending to non-rigid tissue scenarios. The extension to learning-based deformable scene representations and real-time clinical deployment is particularly promising.

Conclusion

This study provides a vision-only pipeline for simultaneous 3D reconstruction and AR-guided intraoperative assistance in arthroscopy. The integration of robust point-based SLAM, transformer-based monocular depth priors, and 3D Gaussian Splatting yields explicit, high-fidelity articular models that power accurate AR measurement and annotation capabilities. On both cadaveric and phantom datasets, the system matches or outperforms established reconstruction and segmentation baselines under realistic constraints. These results reinforce the feasibility of monocular vision-based guidance systems for future surgical navigation, with the potential to enhance precision, safety, and workflow efficiency in minimally invasive interventions.